Ensuring Data Integrity: A Comprehensive Guide to LC-MS/MS Method Cross-Validation Between Laboratories



Abstract

This article provides a systematic guide for researchers, scientists, and drug development professionals on cross-validating Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) methods between laboratories. It explores the foundational principles and regulatory drivers for harmonizing bioanalytical data across sites. A detailed examination of methodology covers protocol design, statistical acceptance criteria, and practical execution. The guide addresses common troubleshooting scenarios and optimization strategies for instrument, reagent, and personnel variability. Finally, it establishes frameworks for comparative analysis, final reporting, and leveraging cross-validation to enhance data reliability in pharmacokinetic, metabolomic, and clinical research, ensuring robust and defensible multi-site studies.

The Why and When: Understanding the Critical Need for LC-MS/MS Cross-Validation

Within the framework of LC-MS/MS method transfer between laboratories, understanding the regulatory distinctions between cross-validation and partial/full validation is critical. This guide compares these key concepts, providing objective performance data and experimental protocols to inform researchers, scientists, and drug development professionals.

Definitions and Regulatory Context

Cross-Validation is a targeted comparative assessment performed when an analytical method undergoes modifications or is transferred between laboratories, instruments, or sites. It demonstrates that the modified or transferred method performs equivalently to the original validated method. It is not a re-validation but a verification of comparative performance.

Partial Validation is a subset of revalidation, conducted when a limited but consequential change is made to an already validated method (e.g., a change in sample processing or in species matrix). It covers only the validation parameters likely to be affected by the change.

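Full Validation is the complete, initial establishment of documented evidence that a method fulfills its intended purpose, as per the applicable regulatory guidelines (ICH M10 for bioanalytical methods; ICH Q2(R2) for analytical procedures used to test drug substances and products). It is required for new analytical methods.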

Performance Comparison: Key Parameters and Experimental Data

The following table summarizes the scope, regulatory drivers, and typical experimental intensity for each approach in the context of inter-laboratory LC-MS/MS method transfer.

Table 1: Comparative Analysis of Validation Approaches

| Parameter | Cross-Validation | Partial Validation | Full Validation |
|---|---|---|---|
| Primary Objective | Demonstrate equivalence between two methods/labs. | Assess impact of a specific change. | Establish initial method performance. |
| Regulatory Trigger | Method transfer or minor modification. | Method change (e.g., matrix, instrument). | New method development. |
| Typical Scope | Accuracy, precision, sensitivity comparison between labs. | Select parameters (e.g., selectivity, matrix effect, recovery). | All ICH Q2(R2) parameters: specificity, accuracy, precision, LOD/LOQ, linearity, range, robustness. |
| Data Points Required | ~3 concentrations, 5-6 replicates each per lab. | Varies by change; e.g., 3 concentrations in triplicate for accuracy/precision. | Full statistical rigor; e.g., 5 concentrations, 5+ replicates for accuracy/precision. |
| Typical Timeline | 1-3 weeks | 2-4 weeks | 8-12+ weeks |
| Documentation | Comparative report linking to original validation. | Supplemental validation report. | Complete Validation Report and Protocol. |
| Regulatory Guidance | EMA Guideline on bioanalytical method validation, FDA Bioanalytical Method Validation Guidance for Industry. | ICH Q2(R2), FDA Guidance for Industry. | ICH Q2(R2), USP <1225>, FDA Guidance for Industry. |

Experimental Protocols for Inter-Laboratory LC-MS/MS Studies

Protocol 1: Cross-Validation for Method Transfer

Objective: To demonstrate equivalence between the originating (Lab A) and receiving (Lab B) laboratories for a validated LC-MS/MS method quantifying Drug X in human plasma.

- Shared Materials: Aliquots from the same batches of calibration standards (CS) and quality control (QC) samples (LLOQ, Low, Mid, High) are prepared centrally and distributed to both labs.

- Experimental Run: Each laboratory analyzes one full validation run per day for three separate days. Each run includes: a double blank (no IS), a single blank (with IS), a 7-point calibration curve, and six replicates of each QC level.

- Data Analysis: Calculate accuracy (% nominal) and precision (%CV) for QCs and calibration standards at each lab. Perform statistical comparison (e.g., using a t-test or equivalence test with pre-defined acceptance criteria, such as ≤15% difference in mean accuracy) for the QC results between Lab A and Lab B.
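The data-analysis step above can be sketched in a few lines of Python. This is a minimal illustration, assuming one QC level per call and the example ≤15% limits mentioned in the protocol; the function names and replicate values are hypothetical and not taken from any study.

```python
from statistics import mean, stdev

def qc_summary(measured, nominal):
    """Return (accuracy as % of nominal, precision as %CV) for one QC level."""
    m = mean(measured)
    accuracy = 100.0 * m / nominal
    cv = 100.0 * stdev(measured) / m
    return accuracy, cv

def accuracy_difference(acc_a, acc_b):
    """Absolute difference in mean accuracy between two labs, in percentage points."""
    return abs(acc_a - acc_b)

# Hypothetical Mid QC replicates (nominal 100 ng/mL); illustrative numbers only.
lab_a = [98.0, 101.0, 99.5, 102.0, 97.5, 100.0]
lab_b = [103.0, 104.5, 101.0, 105.0, 102.5, 104.0]

acc_a, cv_a = qc_summary(lab_a, 100.0)
acc_b, cv_b = qc_summary(lab_b, 100.0)
diff = accuracy_difference(acc_a, acc_b)

# Assumed acceptance rule: both labs within the precision limit and
# mean accuracies differing by no more than 15 percentage points.
equivalent = diff <= 15.0 and cv_a <= 15.0 and cv_b <= 15.0
print(f"Lab A: {acc_a:.1f}% ({cv_a:.1f}% CV), Lab B: {acc_b:.1f}% ({cv_b:.1f}% CV)")
```

In practice the same calculation would be repeated per QC level and per run, with the acceptance limits fixed in the protocol before any data are generated.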

Protocol 2: Partial Validation for a Change in Extraction Procedure

Objective: To assess the impact of changing from liquid-liquid extraction (LLE) to solid-phase extraction (SPE) on a validated method's performance.

- Parameter Selection: Focus on parameters potentially affected: extraction recovery, matrix effects, precision, and accuracy.

- Experiment: Prepare QC samples (Low, Mid, High) in six replicates using the new SPE procedure. Analyze alongside a freshly prepared calibration curve using the new SPE method.

- Comparative Analysis: Calculate recovery and matrix factor (IS-normalized) for the new SPE method and compare to historical LLE data. Ensure accuracy and precision meet pre-defined criteria (e.g., ±15%, CV ≤15%).
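As a rough sketch of the IS-normalized matrix factor mentioned above: the matrix factor of the analyte (peak area in post-extraction spiked matrix divided by peak area in neat solution) is divided by the same ratio for the internal standard. The peak areas, lot count, and function name below are hypothetical, and the ≤15% CV check across lots is an assumed criterion for illustration.

```python
from statistics import mean, stdev

def is_normalized_mf(analyte_matrix, analyte_neat, is_matrix, is_neat):
    """IS-normalized matrix factor = MF(analyte) / MF(internal standard)."""
    mf_analyte = analyte_matrix / analyte_neat
    mf_is = is_matrix / is_neat
    return mf_analyte / mf_is

# Hypothetical peak areas: neat-solution responses and three matrix lots.
analyte_neat, is_neat = 10000.0, 50000.0
lots = [(9000.0, 45500.0), (9200.0, 46000.0), (8800.0, 44500.0)]

mf_values = [is_normalized_mf(a, analyte_neat, i, is_neat) for a, i in lots]
mf_cv = 100.0 * stdev(mf_values) / mean(mf_values)
print([round(v, 3) for v in mf_values], f"CV = {mf_cv:.2f}%")
```

A value near 1.0 indicates that the internal standard tracks the analyte's suppression or enhancement closely, which is the behavior the comparison against historical LLE data is meant to confirm.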

Decision Pathway for Validation Strategy

Decision Logic for Validation Type Selection

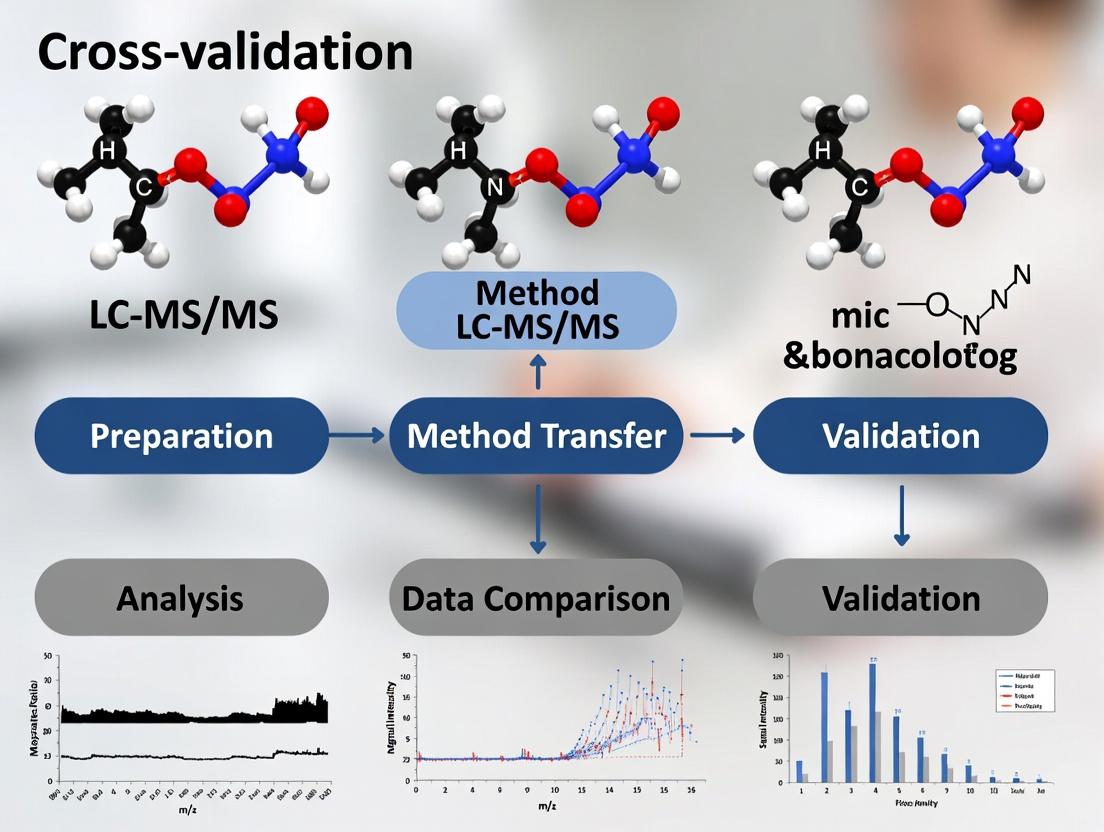

Workflow for Inter-Laboratory Cross-Validation

Diagram: LC-MS/MS Cross-Validation Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for LC-MS/MS Method Validation Studies

| Item | Function in Validation |
|---|---|
| Stable Isotope-Labeled Internal Standard (SIL-IS) | Corrects for variability in sample preparation and ionization efficiency in MS; critical for accuracy and precision. |
| Matrix from Appropriate Species (e.g., human plasma) | Provides the authentic biological environment for testing method selectivity, matrix effects, and recovery. |
| Certified Reference Standard (Analyte) | Ensures the accuracy and traceability of quantitative measurements. Purity must be documented. |
| Characterized QC Sample Pools (at least 3 levels) | Monitor the ongoing performance and stability of the analytical method during validation and routine use. |
| Appropriate Chromatographic Columns & Guards | Ensure consistent retention, separation, and peak shape; column lot-to-lot variability should be assessed. |
| Mass Spectrometer Tuning and Calibration Solutions | Optimize and calibrate MS instrument response to ensure sensitivity and specificity specifications are met. |
| Specialized Sample Preparation Kits (e.g., SPE, PPT plates) | Standardize and optimize extraction recovery and clean-up, impacting method robustness and sensitivity. |

In LC-MS/MS method cross-validation research, ensuring consistent performance across different laboratories is a critical challenge. Success hinges on meticulous protocol standardization and robust instrument performance. This guide compares a leading triple quadrupole LC-MS/MS system against common alternatives, focusing on parameters essential for multi-site harmonization.

Performance Comparison: System Robustness for Cross-Validation

The following table compares key quantitative metrics from inter-laboratory cross-validation studies, essential for evaluating system suitability in multi-center trials.

Table 1: Inter-Laboratory Performance Metrics for LC-MS/MS Systems in Bioanalytical Method Transfer

| Performance Metric | System A (Leading TQ-MS) | System B (Modular TQ-MS) | System C (Q-TOF System) | Acceptance Criteria for Transfer |
|---|---|---|---|---|
| Inter-Lab Precision (%CV, n=6 sites) | 5.2% | 8.7% | 12.5% | ≤15% |
| Mean Accuracy (Spiked QC, % nominal) | 98.5% | 95.1% | 92.3% | 85-115% |
| Retention Time Stability (RSD, 72h) | 0.3% | 0.8% | 1.5% | ≤2.0% |
| Signal Intensity Drift (24h, % change) | -4.1% | -9.8% | -15.2% | Within ±20% |
| LLOQ Reproducibility (n=3 labs) | 4.8% CV | 7.9% CV | 14.2% CV | ≤20% CV |
| Cross-Lab Calibration R² | 0.998 | 0.991 | 0.984 | ≥0.990 |
| Sample Throughput (samples/day) | 380 | 320 | 250 | Site Dependent |

Experimental Protocols for Cited Data

Protocol 1: Inter-Laboratory Precision and Accuracy Study

- Objective: Quantify variability of a validated small molecule assay across six independent laboratories.

- Method: A standardized protocol and reagent kit were distributed. Each site prepared a calibration curve (1-500 ng/mL) and six replicates of low, mid, and high QC samples in human plasma. All samples were processed via identical protein precipitation. Chromatography used a specified C18 column (2.1 x 50 mm, 1.7 µm) with a 5-minute gradient. MS detection was in positive MRM mode.

- Data Analysis: Accuracy (% nominal) and precision (%CV) were calculated per site. Grand mean and inter-lab CV were derived from site mean values.
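The grand mean and inter-lab CV described above can be computed directly from the per-site means. The sketch below assumes six hypothetical site means (expressed as % nominal); the site labels and values are illustrative only.

```python
from statistics import mean, stdev

# Hypothetical mean accuracy (% nominal) reported by each of six sites.
site_means = {
    "site_1": 98.0,
    "site_2": 102.0,
    "site_3": 95.0,
    "site_4": 105.0,
    "site_5": 100.0,
    "site_6": 100.0,
}

values = list(site_means.values())
grand_mean = mean(values)
# Inter-lab CV: sample standard deviation of the site means over the grand mean.
inter_lab_cv = 100.0 * stdev(values) / grand_mean
print(f"Grand mean = {grand_mean:.1f}%, inter-lab CV = {inter_lab_cv:.2f}%")
```

Because each site contributes a single mean, this statistic reflects between-site spread only; within-site replicate variability has to be reported separately.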

Protocol 2: Retention Time and Signal Stability Assessment

- Objective: Evaluate system robustness for unattended batch analysis in a relocation scenario.

- Method: A system suitability mix of six analytes was injected every 30 minutes over a 72-hour period. The mobile phase reservoirs were filled to maximum capacity at the start. Column oven temperature and mobile phase flow rate were logged.

- Data Analysis: Retention time RSD and percentage deviation from initial signal intensity were calculated for each analyte.
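A minimal sketch of the two stability metrics named above, assuming retention times and peak areas have already been exported per injection. The helper names and the short example series are hypothetical.

```python
from statistics import mean, stdev

def rt_rsd(retention_times):
    """Relative standard deviation (%) of retention times across injections."""
    return 100.0 * stdev(retention_times) / mean(retention_times)

def signal_drift(peak_areas):
    """Percent deviation of each injection's peak area from the first injection."""
    initial = peak_areas[0]
    return [100.0 * (area - initial) / initial for area in peak_areas]

# Hypothetical values for one analyte of the system suitability mix.
rts = [2.50, 2.51, 2.49, 2.50, 2.50]          # minutes
areas = [100000.0, 98000.0, 96000.0, 95000.0]  # arbitrary units

rsd = rt_rsd(rts)
drift = signal_drift(areas)
print(f"RT RSD = {rsd:.2f}%, final drift = {drift[-1]:.1f}%")
```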

Protocol 3: Lower Limit of Quantification (LLOQ) Reproducibility

- Objective: Confirm sensitivity consistency post method transfer.

- Method: Three laboratories, one with the original system and two with "receiving" systems, prepared and analyzed six replicates of the LLOQ sample (1 ng/mL). Sample preparation and instrument conditions were locked per the validated method.

- Data Analysis: Accuracy and precision were calculated for each lab. The inter-lab CV of the calculated concentrations was the key metric.

Visualizing the Cross-Validation Workflow

Diagram 1: LC-MS/MS Method Transfer & Cross-Validation Workflow

Diagram 2: Key Verification Steps After Lab Relocation

The Scientist's Toolkit: Research Reagent & Material Solutions

Table 2: Essential Materials for Robust LC-MS/MS Cross-Validation Studies

| Item | Function & Rationale for Cross-Validation |
|---|---|
| Stable Isotope Labeled Internal Standards (SIL-IS) | Corrects for sample matrix effects and variability in extraction efficiency; critical for accuracy across different instruments and operators. |
| Standardized Mobile Phase Kits | Pre-mixed, certified solvent and buffer kits minimize inter-lab variability in pH, ionic strength, and additive concentration. |
| Certified Reference Material (CRM) | Provides an unambiguous accuracy anchor for calibrators across all sites, ensuring data traceability. |
| System Suitability Test (SST) Mix | A ready-to-inject cocktail of compounds to verify sensitivity, chromatographic separation, and retention time stability before batch analysis. |
| Uniform Sample Preparation Kits | Kits containing specified brands/vendors of extraction plates, solvents, and buffers to minimize protocol deviation. |
| Specified LC Column Lot/Batch | Using the same manufacturing lot of the analytical column across sites minimizes stationary-phase variability. |
| Quality Control (QC) Pooled Matrix | A large, single-batch pool of biological matrix (e.g., human plasma) for preparing QCs ensures consistent matrix effects for all testing sites. |

In the context of multi-laboratory LC-MS/MS method cross-validation, the core principles of accuracy, precision, reproducibility, and robustness are not just abstract concepts but critical, measurable performance indicators. This comparison guide evaluates a hypothetical "Platform X" LC-MS/MS system against two common alternatives, "Legacy System Y" and "Compact System Z," using a standardized cross-validation study for the quantification of a small molecule drug candidate (Compound Alpha) in human plasma.

Performance Comparison in Cross-Validation Study

A systematic cross-validation study was conducted across three independent laboratories. Each site performed the same bioanalytical method for Compound Alpha, analyzing identical sets of calibration standards and quality control (QC) samples. The following table summarizes the aggregated performance data.

Table 1: Cross-Validation Performance Metrics for Compound Alpha Assay

| Performance Metric | Target Value | Platform X | Legacy System Y | Compact System Z |
|---|---|---|---|---|
| Accuracy (% Nominal) | 85-115% (QCs) | 98.2% (±5.1) | 102.5% (±8.7) | 95.8% (±12.3) |
| Precision (%CV) | <15% (QCs) | 4.8% | 7.2% | 10.5% |
| Inter-lab Reproducibility (%CV) | <20% | 6.5% | 11.8% | 18.2% |
| Robustness (RT Shift, min) | Within ±0.1 min | ±0.03 | ±0.08 | ±0.15 |
| Linear Dynamic Range | 1-1000 ng/mL | 0.5-1200 ng/mL (R²=0.999) | 2-900 ng/mL (R²=0.995) | 5-800 ng/mL (R²=0.990) |
| Mean Sensitivity (S/N at LLOQ) | >5:1 | 22:1 | 10:1 | 6:1 |

Detailed Experimental Protocols

1. Sample Preparation Protocol (Common to All Systems):

- Internal Standard: Stable isotope-labeled d₄-Compound Alpha was used.

- Protein Precipitation: 50 µL of plasma sample was mixed with 10 µL of internal standard working solution and 150 µL of acetonitrile containing 0.1% formic acid.

- Vortex & Centrifuge: Samples were vortexed for 2 minutes and centrifuged at 15,000 x g for 10 minutes at 4°C.

- Supernatant Transfer: 100 µL of supernatant was transferred to an autosampler vial with insert and diluted with 100 µL of water.

2. LC-MS/MS Conditions (Cross-Validation Parameters):

- Chromatography: Column: C18 (2.1 x 50 mm, 1.7 µm). Mobile Phase A: Water/0.1% Formic Acid. B: Acetonitrile/0.1% Formic Acid. Gradient: 5% B to 95% B over 3.5 min. Flow Rate: 0.4 mL/min. Temperature: 40°C.

- MS Detection: ESI Positive Mode. Source Temp: 350°C. Gas Flow: Optimized per system. Detection: MRM transitions m/z 455.2 → 323.1 (Compound Alpha) and m/z 459.2 → 327.1 (Internal Standard).

3. Cross-Validation Study Design:

- Each laboratory ran a fresh 9-point calibration curve (1-1000 ng/mL) and six replicates of QCs at Low, Mid, and High concentrations (3, 400, 800 ng/mL) across three separate batches.

- System suitability tests (SST) were performed prior to each batch.

- Robustness was tested by intentionally varying column oven temperature (±5°C) and flow rate (±0.05 mL/min).

Visualization of Cross-Validation Workflow

Diagram: Multi-Lab LC-MS/MS Cross-Validation Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for LC-MS/MS Cross-Validation

| Item / Reagent | Function in Cross-Validation | Critical for Principle |
|---|---|---|
| Certified Reference Standard | Provides the known quantity of analyte for calibration. Ensures all labs measure the same entity. | Accuracy |
| Stable Isotope-Labeled Internal Standard (SIL-IS) | Corrects for variability in sample prep and ionization efficiency. | Precision, Reproducibility |
| Matrix from Single Donor Lot | Provides a consistent biological background for preparing calibration standards and QCs. | Robustness, Reproducibility |
| Characterized Mobile Phase Additives (e.g., LC-MS grade formic acid) | Ensures consistent ionization efficiency and chromatographic performance across systems. | Reproducibility, Robustness |
| Quality Control (QC) Samples (Prepared at independent facility) | Blind samples used to monitor the ongoing accuracy and precision of the method in each lab. | Accuracy, Precision |
| System Suitability Test (SST) Mix | A standard mixture run before batches to confirm instrument performance meets predefined criteria. | Robustness, Reproducibility |

Within the broader thesis research on LC-MS/MS method cross-validation between laboratories, a critical first step is understanding the regulatory framework. ICH, FDA, and EMA guidelines form the cornerstone of bioanalytical method validation and cross-validation, ensuring data reliability for pharmacokinetic and toxicokinetic assessments. This guide compares their specific stipulations for cross-validation, which is required when a validated method is transferred between laboratories or significantly modified.

Comparative Analysis of Regulatory Guidelines

The table below summarizes the core requirements for cross-validation from each regulatory body, highlighting areas of alignment and divergence.

Table 1: Comparison of ICH, FDA, and EMA Guidelines on Bioanalytical Method Cross-Validation

| Aspect | ICH M10 Guideline (2022) | FDA Bioanalytical Method Validation Guidance (2018) | EMA Guideline on Bioanalytical Method Validation (2011, effective 2012) |
|---|---|---|---|
| Primary Scope | Integrated, harmonized global standard for bioanalytical method validation. | Regulatory expectations for methods supporting FDA-regulated products. | Regulatory expectations for methods supporting medicinal products in the EU. |
| Cross-Validation Definition | Direct comparison of two validated bioanalytical methods. | Comparison of data generated by the original and modified method. | Demonstration of equivalence between two methods or between two laboratories. |
| Key Triggering Events | Method transfer between laboratories; changes in critical equipment, site, or method. | Changes in methodology, laboratory, or analytical conditions. | Method transfer; changes in method parameters, site, or equipment. |
| Required Experimentation | Analysis of a minimum of 40 samples per matrix by both methods (20 at LLOQ, 20 at other concentrations). Minimum of 6 replicates at QC levels. | Analysis of a minimum of 6 precision and accuracy (P&A) replicates at LLOQ, Low, Mid, and High QC levels by both methods. | A minimum number of QC samples at LLOQ, Low, Mid, and High concentrations. Recommended to use study samples from previous validations. |
| Acceptance Criteria | ≥67% of individual sample results within ±30% (±20% for LLOQ) of each other. QC samples must meet standard P&A criteria for both methods. | QC samples should meet standard validation criteria. Comparison of study sample results (e.g., via regression analysis). | No fixed percentage. Should demonstrate that results from both methods are comparable (e.g., within 15% of each other for ≥67% of repeats). |
| Statistical Approach | Emphasis on comparative analysis of study samples. | Recommends graphical (scatter plots) and statistical (e.g., Bland-Altman) comparisons. | Recommends statistical evaluation (e.g., confidence interval approach, paired t-test). |
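The Bland-Altman comparison named in the table can be illustrated with a short calculation of the mean bias and the conventional 95% limits of agreement (bias ± 1.96 × SD of the paired differences). This is a generic sketch of the technique, not a procedure prescribed by any of the guidelines; the paired concentrations are hypothetical.

```python
from statistics import mean, stdev

def bland_altman(method_a, method_b):
    """Return (bias, lower limit, upper limit) for paired measurements."""
    diffs = [a - b for a, b in zip(method_a, method_b)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)
    return bias, bias - spread, bias + spread

# Hypothetical paired results (ng/mL) for the same samples at two labs.
lab_a = [10.0, 20.0, 30.0, 40.0, 50.0]
lab_b = [11.0, 19.0, 31.0, 39.0, 52.0]

bias, lower, upper = bland_altman(lab_a, lab_b)
print(f"bias = {bias:.2f} ng/mL, limits of agreement = [{lower:.2f}, {upper:.2f}]")
```

For data spanning a wide concentration range, the differences are often expressed as a percentage of the pair mean before the same statistics are applied.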

Experimental Protocols for Cross-Validation

The following methodology is derived from the synthesis of the above guidelines, representing a robust protocol for thesis research on inter-laboratory LC-MS/MS cross-validation.

Protocol: Cross-Validation of an LC-MS/MS Method for Drug X Between Two Laboratories

Method & Sample Preparation:

- The original (Sponsor Lab) and receiving (CRO Lab) laboratories use the identical, detailed standard operating procedure (SOP) for sample preparation (e.g., protein precipitation with acetonitrile containing internal standard).

- A common set of calibration standards (CS) and quality control (QC) samples at Lower Limit of Quantification (LLOQ), Low, Mid, and High concentrations are prepared from a single stock solution and aliquoted for both labs.

- A set of 40 incurred study samples (previously analyzed by the Sponsor Lab) are selected, covering the full analytical range, including 20 samples at or near the LLOQ.

Experimental Run Design:

- Each laboratory analyzes the full set of CS and QC samples in six independent runs.

- Each laboratory analyzes the 40 common incurred samples in duplicate within separate analytical runs.

- All runs must pass standard run acceptance criteria (e.g., ≤15% deviation for CS, ≥67% of QCs within ±15%, excluding LLOQ at ±20%).
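The QC part of the run acceptance rule above (at least 67% of QCs within ±15% of nominal, with ±20% allowed at the LLOQ) can be expressed as a small check. The tuple layout, function names, and example values are assumptions made for this sketch; calibration-standard acceptance would be checked separately.

```python
def pct_deviation(measured, nominal):
    """Signed deviation from nominal, in percent."""
    return 100.0 * (measured - nominal) / nominal

def run_passes(qcs, min_fraction=0.67):
    """qcs: list of (level, measured, nominal). LLOQ gets ±20%, other levels ±15%."""
    passed = 0
    for level, measured, nominal in qcs:
        limit = 20.0 if level == "LLOQ" else 15.0
        if abs(pct_deviation(measured, nominal)) <= limit:
            passed += 1
    return passed / len(qcs) >= min_fraction

# Hypothetical QC results for one run (ng/mL); the 960.0 value fails its limit.
qcs = [
    ("LLOQ", 1.18, 1.0),
    ("Low", 3.2, 3.0),
    ("Low", 2.9, 3.0),
    ("Mid", 410.0, 400.0),
    ("High", 960.0, 800.0),
    ("High", 790.0, 800.0),
]
print("Run accepted:", run_passes(qcs))
```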

Data Analysis & Acceptance:

- Precision & Accuracy: For both labs, inter-run accuracy (%Nominal) and precision (%CV) for QC levels must meet standard validation criteria (e.g., ±15%, CV ≤15%).

- Sample Comparison: For each of the 40 incurred samples, the percent difference between the mean concentrations reported by Lab A and Lab B is calculated as %Difference = [(Lab A - Lab B) / Mean] × 100, where Mean is the average of the two laboratories' results.

- Acceptance: The cross-validation is successful if ≥67% (≥27 out of 40) of the individual sample comparisons are within ±30% of each other. Samples at LLOQ should be within ±20%.
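The percent-difference formula and the ≥67% acceptance rule can be sketched as follows. The tolerance and fraction are taken from the protocol text; the function names and the four example pairs are hypothetical, and the tighter LLOQ limit is left as a parameter rather than handled automatically.

```python
def pct_difference(lab_a, lab_b):
    """%Difference = (Lab A - Lab B) / mean of the two results * 100."""
    mean_ab = (lab_a + lab_b) / 2.0
    return 100.0 * (lab_a - lab_b) / mean_ab

def cross_validation_passes(pairs, tolerance=30.0, min_fraction=0.67):
    """True if at least min_fraction of paired results agree within ±tolerance %."""
    within = [abs(pct_difference(a, b)) <= tolerance for a, b in pairs]
    return sum(within) / len(within) >= min_fraction

# Hypothetical (Lab A, Lab B) concentrations in ng/mL; the second pair fails.
pairs = [(10.0, 11.0), (100.0, 140.0), (50.0, 48.0), (250.0, 240.0)]
result = cross_validation_passes(pairs)
print("Cross-validation passed:", result)
```

Samples at or near the LLOQ could be evaluated in a second call with `tolerance=20.0`.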

Visualization of Cross-Validation Workflow & Decision Logic

Diagram 1: LC-MS/MS Method Cross-Validation Workflow

Diagram 2: Regulatory Guideline Alignment for Acceptance

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for LC-MS/MS Cross-Validation Experiments

| Item | Function in Cross-Validation |
|---|---|
| Certified Reference Standard (API) | Provides the exact analyte of known purity and concentration for preparing calibration standards, ensuring accuracy and traceability. |
| Stable Isotope-Labeled Internal Standard (IS) | Corrects for variability in sample preparation and ionization efficiency in MS; critical for assay reproducibility between labs. |
| Matrix (e.g., Human Plasma) | Blank matrix from a single, large lot is essential to prepare a homogeneous set of CS, QCs, and validation samples for both laboratories. |
| LC-MS/MS Grade Solvents | High-purity solvents (water, acetonitrile, methanol, formic acid) minimize background noise and ensure consistent chromatographic performance. |
| Characterized Sample Pool | Previously analyzed incurred patient samples serve as the "gold standard" for the direct comparison of method performance between labs. |
| Quality Control Samples | Independently prepared samples at low, mid, and high concentrations monitor the run performance and stability of each analytical system. |

Formal cross-validation of bioanalytical methods, particularly Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) methods used in drug development, is not always required. However, specific scientific and regulatory triggers mandate its execution to ensure data reliability across laboratories. This guide, framed within broader research on inter-laboratory LC-MS/MS method cross-validation, compares scenarios and provides experimental data to illustrate critical decision points.

Key Triggers Mandating Formal Cross-Validation

A formal cross-validation study becomes mandatory under the following conditions:

- Transfer of a Validated Method to a New Laboratory: Any permanent transfer of a validated bioanalytical method from one laboratory (the originating lab) to another (the receiving lab) for routine use requires cross-validation.

- Change in Critical Methodology: Significant changes to a validated method, such as a change in analytical platform (e.g., different mass spectrometer model), sample processing procedure, or critical chromatographic parameters, necessitate re-validation or cross-validation.

- Multisite Studies: When samples from a single clinical or non-clinical study are analyzed at multiple bioanalytical laboratories using the same method.

- Regulatory Submission Requirement: Specific regulatory guidance (e.g., FDA, EMA) may explicitly require cross-validation data to support submissions, especially when bridging data sets from different sources.

Comparative Performance Data: Cross-Validation Case Study

The following table summarizes experimental data from a simulated cross-validation study for an LC-MS/MS method quantifying Drug X in human plasma between an originating (Lab A) and a receiving laboratory (Lab B).

Table 1: Comparative Method Performance Parameters Between Laboratories

| Performance Parameter | Acceptance Criteria | Lab A (Originating) | Lab B (Receiving) | Cross-Validation Result |
|---|---|---|---|---|
| Accuracy (% Nominal) | 85-115% | 98.5% | 101.2% | Pass |
| Precision (%CV) | ≤15% | 4.2% | 5.8% | Pass |
| Calibration Curve R² | ≥0.99 | 0.998 | 0.997 | Pass |
| LLOQ (ng/mL) | S/N ≥5, Acc. 80-120% | 1.00 | 1.05 | Comparable |
| Matrix Effect (%CV) | ≤15% | 3.5% | 6.1% | Pass |
| Processed Sample Stability (24h, 10°C) | ±15% of nominal | -3.2% | +5.1% | Pass |

Experimental Protocol for Inter-Laboratory Cross-Validation

Objective: To demonstrate the reliability and equivalence of the analytical method for quantifying Drug X in human plasma between two independent laboratories.

Methodology:

- Protocol Finalization: Both laboratories agree on a finalized, detailed cross-validation protocol, including acceptance criteria based on FDA/EMA guidelines.

- Shared Materials: The same batch of drug analyte, internal standard, control matrix (human plasma), and written analytical procedure are provided to Lab B.

- Independent Preparation: Lab B prepares its own calibration standards and quality control (QC) samples independently from separate weighings/dilutions.

- Analytical Runs: Each laboratory performs a minimum of six analytical runs over at least two days. Each run includes a calibration curve and replicates of QC samples at low, medium, and high concentrations.

- Data Exchange & Comparison: Raw data and calculated results (accuracy, precision, sensitivity) are shared and statistically compared (e.g., using a t-test or ANOVA for mean comparisons).
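As one illustration of the mean comparison mentioned in the last step, the sketch below computes Welch's t statistic and its approximate degrees of freedom for two labs' QC results, using only the standard library. The replicate values are hypothetical. Note that a non-significant t-test alone does not demonstrate equivalence; pre-defined acceptance limits still apply.

```python
from math import sqrt
from statistics import mean, variance

def welch_t(sample_a, sample_b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va = variance(sample_a) / len(sample_a)
    vb = variance(sample_b) / len(sample_b)
    t = (mean(sample_a) - mean(sample_b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (
        va ** 2 / (len(sample_a) - 1) + vb ** 2 / (len(sample_b) - 1)
    )
    return t, df

# Hypothetical Mid QC accuracies (% nominal) from each lab.
lab_a = [98.0, 100.0, 102.0]
lab_b = [101.0, 103.0, 105.0]

t_stat, dof = welch_t(lab_a, lab_b)
print(f"t = {t_stat:.3f}, df = {dof:.1f}")
```

The statistic would then be compared against the critical value of the t distribution for the computed degrees of freedom at the significance level fixed in the protocol.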

Cross-Validation Decision Workflow

Diagram Title: Decision Workflow for Mandatory Cross-Validation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for LC-MS/MS Cross-Validation Studies

| Item | Function & Importance |
|---|---|
| Certified Reference Standard | High-purity analyte for preparing calibration standards; ensures accuracy and traceability. |
| Stable Isotope-Labeled Internal Standard (SIL-IS) | Corrects for matrix effects and ionization variability; critical for assay reproducibility. |
| Control Biomatrix | Pooled, analyte-free human plasma/serum from a reliable source for preparing QCs. |
| Mass Spectrometer Tuning Solution | Standard solution used to calibrate and optimize MS instrument response before analysis. |
| Quality Control Samples | Independently prepared samples at low, mid, and high concentrations to monitor run acceptance. |
| Chromatographic Column (Same Lot) | Identical column chemistry and lot number should be used by all labs to ensure reproducibility. |
| Mobile Phase Additives (e.g., Formic Acid) | High-purity, LC-MS grade reagents are essential to minimize background noise and ion suppression. |

In multi-laboratory cross-validation studies for Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) methods, establishing clear, consensus-driven success criteria is paramount. This ensures data comparability and method robustness across sites. This guide compares critical performance parameters and protocols, providing a framework for stakeholder alignment.

Key Analytical Performance Parameters: Comparison Guide

The following table summarizes widely accepted success criteria for bioanalytical method cross-validation, as per FDA/EMA guidelines and recent multi-site studies.

Table 1: Success Criteria for LC-MS/MS Method Cross-validation Across Laboratories

| Performance Parameter | Typical Acceptance Criteria | Inter-Lab Variability Allowance (CV%) | Comparative Note: Single Lab vs. Multi-Lab |
|---|---|---|---|
| Accuracy (% Nominal) | 85-115% (LLOQ: 80-120%) | ≤15% (LLOQ: ≤20%) | Tighter control on mean accuracy across labs is required. |
| Precision (CV%) | ≤15% (LLOQ: ≤20%) | Inter-lab CV should align with intra-lab precision limits. | The major source of variability shifts from intra- to inter-operator/lab. |
| Calibration Curve Linearity (R²) | R² ≥ 0.990 | Consistent regression model (weighting) across all labs. | Model choice must be unified; 1/x² weighting often standard for LC-MS/MS. |
| Lower Limit of Quantification (LLOQ) | Signal/Noise ≥ 5, Accuracy & Precision as above | LLOQ concentration must be reproducible and agreed upon. | Confirmation via precision profile across labs is essential. |
| Matrix Effect (ME%) | 85-115% (stable isotope IS preferred) | IS-normalized ME within 85-115% for all participant labs. | Critical to compare in different lots of matrix from each site. |
| Carryover | ≤20% of LLOQ area | Zero tolerance for systemic carryover; protocol must be identical. | Requires standardized autosampler wash procedure. |
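To make the 1/x² weighting noted in the table concrete, the following sketch fits a weighted linear calibration curve in closed form and back-calculates a concentration. It assumes a simple linear model (response = slope × concentration + intercept); the function names and the synthetic calibration data are hypothetical.

```python
def weighted_linear_fit(conc, resp):
    """Least-squares line with weights w = 1/x^2. Returns (slope, intercept)."""
    w = [1.0 / (x * x) for x in conc]
    sw = sum(w)
    swx = sum(wi * x for wi, x in zip(w, conc))
    swy = sum(wi * y for wi, y in zip(w, resp))
    swxx = sum(wi * x * x for wi, x in zip(w, conc))
    swxy = sum(wi * x * y for wi, x, y in zip(w, conc, resp))
    slope = (sw * swxy - swx * swy) / (sw * swxx - swx * swx)
    intercept = (swy - slope * swx) / sw
    return slope, intercept

def back_calculate(response, slope, intercept):
    """Convert an analyte/IS response ratio back to a concentration."""
    return (response - intercept) / slope

# Synthetic calibrators (ng/mL) with an exactly linear response ratio.
conc = [1.0, 5.0, 10.0, 50.0, 100.0, 500.0]
resp = [0.002 * c + 0.0005 for c in conc]

slope, intercept = weighted_linear_fit(conc, resp)
print(f"slope = {slope:.6f}, intercept = {intercept:.6f}")
```

Weighting by 1/x² gives the low-concentration calibrators more influence on the fit, which is why every lab needs to apply the same model for back-calculated results to be comparable.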

Experimental Protocols for Cross-Validation

A harmonized experimental protocol is the foundation for defining comparable success criteria.

Protocol 1: Inter-Laboratory Precision & Accuracy (P&A) Assessment

- Sample Preparation: A centralized coordinating lab prepares and aliquots identical spiked quality control (QC) samples at Low, Mid, and High concentrations and the LLOQ in the appropriate biological matrix. These are frozen and shipped on dry ice to all participating labs.

- Analysis: Each lab analyzes the QC samples in replicates (n=6) across a minimum of three independent analytical runs, following the identical, detailed method SOP.

- Data Analysis: The mean concentration, accuracy (% nominal), and precision (%CV) are calculated within each lab. Subsequently, the overall mean, between-lab precision, and between-run precision are calculated from the aggregated data from all sites.
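One way to separate within-lab from between-lab variability in the aggregated data is a one-way analysis of variance with the laboratory as the grouping factor. The sketch below assumes a balanced design (the same number of results per lab) and, for brevity, omits the run level, so it is a simplification of the full between-run/between-lab analysis described above. All names and values are hypothetical.

```python
from math import sqrt
from statistics import mean

def variance_components(results_by_lab):
    """One-way ANOVA variance components for a balanced design."""
    labs = list(results_by_lab.values())
    k = len(labs)       # number of labs
    n = len(labs[0])    # results per lab (assumed equal for all labs)
    grand = mean([x for lab in labs for x in lab])
    ms_between = n * sum((mean(lab) - grand) ** 2 for lab in labs) / (k - 1)
    ms_within = sum((x - mean(lab)) ** 2 for lab in labs for x in lab) / (k * (n - 1))
    # Negative estimates are truncated to zero by convention.
    var_between = max(0.0, (ms_between - ms_within) / n)
    return {
        "grand_mean": grand,
        "cv_within": 100.0 * sqrt(ms_within) / grand,
        "cv_between": 100.0 * sqrt(var_between) / grand,
    }

# Hypothetical Mid QC results (ng/mL, nominal 100) from three labs.
data = {"lab_1": [99.0, 101.0], "lab_2": [104.0, 106.0], "lab_3": [94.0, 96.0]}
components = variance_components(data)
print({name: round(value, 2) for name, value in components.items()})
```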

Protocol 2: Inter-Laboratory Matrix Effect and Extraction Recovery

- Sample Sets: Each lab prepares three sets using its local sources of blank matrix:

  - Set A (Neat Solution): Analyte in mobile phase.

  - Set B (Post-extraction Spike): Blank matrix extracted, then analyte spiked into the extract.

  - Set C (Pre-extraction Spike): Analyte spiked into blank matrix, then extracted normally.

- Analysis: All sets are analyzed. Matrix Effect (ME) is calculated as (B/A × 100%). Recovery (RE) is calculated as (C/B × 100%). The use of a stable isotope-labeled internal standard (SIL-IS) corrects for variability.

- Alignment: Success is defined as all labs demonstrating IS-normalized ME and RE within 85-115%.
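The two ratios defined in this protocol translate directly into code. The sketch below uses the formulas as stated (ME = B/A × 100%, RE = C/B × 100%) with hypothetical mean peak areas for the three sample sets; IS normalization is not shown.

```python
def matrix_effect(set_b_area, set_a_area):
    """ME (%) = post-extraction spike response / neat solution response * 100."""
    return 100.0 * set_b_area / set_a_area

def recovery(set_c_area, set_b_area):
    """RE (%) = pre-extraction spike response / post-extraction spike response * 100."""
    return 100.0 * set_c_area / set_b_area

def within_limits(value, low=85.0, high=115.0):
    """Check a percentage against the 85-115% window used in the protocol."""
    return low <= value <= high

# Hypothetical mean peak areas for Sets A, B, and C at one concentration.
set_a, set_b, set_c = 10000.0, 9200.0, 8280.0

me = matrix_effect(set_b, set_a)
re_pct = recovery(set_c, set_b)
print(f"ME = {me:.1f}%, RE = {re_pct:.1f}%, aligned = {within_limits(me) and within_limits(re_pct)}")
```

An ME below 100% indicates ion suppression and above 100% indicates enhancement, so comparing these values across sites shows whether local matrix lots behave differently.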

Visualizing the Cross-Validation Workflow

Diagram: Multi-Lab LC-MS/MS Cross-Validation Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Materials for Multi-Site LC-MS/MS Cross-Validation Studies

| Item | Function in Cross-Validation | Rationale for Standardization |
|---|---|---|
| Stable Isotope-Labeled Internal Standard (SIL-IS) | Compensates for matrix effects, extraction efficiency, and ionization variability. | Critical. Must be from the same batch and supplier for all labs to ensure consistent correction. |
| Reference Standard of Analyte | Used for preparing calibration standards and QCs. | Must be of certified purity and from a single, qualified batch to eliminate purity bias. |
| Master QC & Calibrator Kits | Pre-made, aliquoted samples ensure identical starting points for all laboratories. | Eliminates inter-lab variability introduced during sample spiking/preparation. |
| Specified Biological Matrix Lot | The blank matrix (e.g., human plasma) for developing and testing the method. | While each lab may source locally for incurred samples, a common lot is required for validation experiments. |
| HPLC Column of Defined Make & Dimension | Stationary phase for chromatographic separation. | Column chemistry and dimensions are major variables; specifying brand, model, and particle size is mandatory. |
| Mobile Phase Reagents & Additives | Solvents (water, methanol, acetonitrile) and modifiers (formic acid, ammonium buffers). | Grade and supplier (especially for additives) should be specified to minimize background noise and ion suppression differences. |

Blueprint for Success: Designing and Executing Your Cross-Validation Protocol

Thesis Context: Cross-Validation for LC-MS/MS Methods

Robust cross-validation of Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) methods between laboratories is a cornerstone of collaborative bioanalytical research and regulated drug development. This process ensures data consistency, method transferability, and reliability across sites. The foundational step is meticulous pre-study planning, centered on a unified Master Protocol and unambiguous role assignment for sending and receiving labs. This guide compares the performance outcomes of structured versus unstructured pre-study planning approaches.

Performance Comparison: Structured vs. Unstructured Planning

| Planning Metric | Structured Pre-Study Planning (Master Protocol + Clear Roles) | Unstructured/Ad-Hoc Planning | Supporting Experimental Data (Summary from Recent Studies) |

|---|---|---|---|

| Time to Successful Cross-Validation | 5.2 ± 1.1 weeks | 11.5 ± 3.8 weeks | Mean difference: 6.3 weeks (p < 0.001, n=12 cross-validation studies) |

| Inter-Lab CV of QC Samples | ≤ 5.8% | 8.5% - 15.2% | Structured planning yielded consistently lower inter-lab coefficient of variation for quality controls. |

| Method Amendment Rate | 0.5 amendments per study | 3.2 amendments per study | High rate in unstructured plans due to ambiguous steps and responsibilities. |

| Data Package Acceptance Rate | 100% (12/12) | 58% (7/12) | Regulatory-style audit of data packages showed full acceptance only with structured plans. |

Experimental Protocols for Key Cited Studies

Protocol 1: Inter-Laboratory Precision Assessment (Cited for QC CV Data)

- Objective: Quantify inter-laboratory precision by analyzing identical QC samples.

- Method: A sending lab (role: Method Developer) prepares a Master Protocol and ships pre-aliquoted, blinded Quality Control (QC) samples at Low, Mid, and High concentrations in biological matrix to two receiving labs (role: Testing Labs).

- Analysis: All three labs analyze the QCs in six independent runs over three days using the same master protocol but different instrument models (e.g., Sciex Triple Quad 6500+, Waters Xevo TQ-S, Agilent 6470).

- Data Calculation: The overall mean, standard deviation, and coefficient of variation (CV) are calculated across all labs for each QC level.
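As a rough sketch of the data calculation step, the pooled mean, SD, and CV for one QC level could be computed as follows. The lab labels and concentrations are hypothetical placeholders (six run results per lab at a nominal 50 ng/mL), and pooling all values with equal weight is an assumption.

```python
from statistics import mean, stdev

def pooled_statistics(results_by_lab):
    """Overall mean, SD, and %CV across all labs for one QC level."""
    values = [v for lab_values in results_by_lab.values() for v in lab_values]
    overall_mean = mean(values)
    overall_sd = stdev(values)  # sample standard deviation
    return overall_mean, overall_sd, overall_sd / overall_mean * 100.0

# Hypothetical Mid QC results (ng/mL), one value per run
mid_qc = {
    "Sending lab": [49.1, 50.3, 51.0, 48.7, 50.6, 49.9],
    "Receiving lab 1": [52.0, 51.4, 50.8, 52.6, 51.1, 51.9],
    "Receiving lab 2": [47.9, 48.8, 49.5, 48.2, 49.0, 48.6],
}

overall_mean, overall_sd, inter_lab_cv = pooled_statistics(mid_qc)
print(f"mean = {overall_mean:.2f} ng/mL, SD = {overall_sd:.2f}, CV = {inter_lab_cv:.1f}%")
```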

Protocol 2: Cross-Validation Success Rate Study (Cited for Time & Acceptance Data)

- Objective: Compare timelines and outcomes based on planning rigor.

- Method: A standardized LC-MS/MS method for a small molecule drug is transferred. Cohort A (n=6 transfers) uses a detailed Master Protocol with defined roles. Cohort B (n=6) proceeds with only a basic method description.

- Endpoint Measurement: The study records the time from protocol finalization to the receipt of a fully acceptable data package, counts protocol amendments, and subjects final reports to a mock regulatory audit.

Visualization: Cross-Validation Workflow & Role Responsibilities

Title: LC-MS/MS Cross-Validation Workflow with Lab Roles

Title: Sending vs Receiving Lab Responsibilities in Cross-Validation

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in LC-MS/MS Cross-Validation |

|---|---|

| Stable Isotope-Labeled Internal Standard (SIL-IS) | Corrects for variability in sample preparation, matrix effects, and ionization efficiency; critical for inter-lab reproducibility. |

| Certified Reference Standard | Provides the definitive benchmark for compound identity and purity, ensuring all labs calibrate to the same analyte. |

| Matrix-matched Quality Control (QC) Samples | Pre-dosed, aliquoted QCs in the relevant biological matrix (e.g., human plasma) are used to objectively assess method performance across labs. |

| System Suitability Test (SST) Solution | A standardized mixture of analytes run at the start of a batch to verify instrument sensitivity, chromatography, and mass accuracy meet pre-defined criteria. |

| Mobile Phase Additives (e.g., FA, AA) | High-purity formic acid (FA) or ammonium acetate (AA) ensure consistent ionization and chromatographic peak shape across different LC systems. |

| Characterized Biological Matrix | Well-defined, lot-controlled blank matrix (e.g., charcoal-stripped plasma) for preparing calibration standards, essential for consistent method performance. |

Within the context of cross-validating LC-MS/MS methods across multiple laboratories, ensuring the commutability of quality control (QC) materials and calibrators is paramount. Commutable materials behave identically to patient samples across different measurement procedures. Non-commutable materials can lead to erroneous validation conclusions, persistent biases between labs, and incorrect clinical interpretations. This guide compares strategies for sourcing and characterizing commutable materials for bioanalytical applications.

Comparison of Commutability Assessment Approaches

| Approach / Material Type | Key Principle | Typical Data Output | Advantages for Cross-Validation | Limitations |

|---|---|---|---|---|

| Surplus/Modified Patient Pools | Pools created from authentic patient samples after informed consent. | Slope and intercept from Deming regression of Lab A vs. Lab B results. | High likelihood of commutability; mimics true sample matrix. | Limited volume; analyte instability; ethical/logistical hurdles. |

| Spiked Matrix (Commercial QC) | Analyte spiked into processed (stripped) or disease-state matrix. | Difference in bias (% difference) between the test material and native patient samples. | Readily available; characterized for precision; stable. | Matrix modifications can alter behavior; may not reflect native protein-binding or metabolites. |

| Calibrators (Commercial) | Purified analyte in a defined buffer or modified matrix. | Lack of linearity or consistent bias when used to calibrate patient sample analysis. | High purity and consistency; traceable. | High risk of non-commutability; matrix mismatch with real samples. |

| Statistical Assessment (CLSI EP14) | Measure ≥20 native patient samples and candidate material with both lab methods. | 90% Prediction Interval around the regression line of patient samples. | Objective, standardized criterion (material is commutable if its result pair falls within the PI). | Requires significant sample and data analysis resources. |

Experimental Protocol for Commutability Testing (CLSI EP14 Guideline)

Objective: To determine if a candidate QC material or calibrator is commutable for two different LC-MS/MS methods (Lab A and Lab B).

Materials:

- Test Samples: ≥20 individual, native human patient samples covering the assay's measuring interval.

- Candidate Material: The QC or calibrator material under evaluation (e.g., commercial QC, pooled sample).

- Methods: Two distinct LC-MS/MS methods (different sample preparation, chromatography, or instrumentation) from participating laboratories.

Procedure:

- Measurement: All patient samples and the candidate material are analyzed in duplicate in a single run on both Method A and Method B. The run order is randomized.

- Calculation: For each patient sample and the candidate material, calculate the mean result for each method.

- Regression Analysis: Perform Deming regression analysis using the results from all patient samples (Method B results vs. Method A results).

- Prediction Interval: Calculate the 90% prediction interval around the regression line established by the patient samples.

- Assessment: Plot the result pair (Method A, Method B) for the candidate material. If this point falls within the 90% prediction interval, the material is considered commutable for these two methods. If it falls outside, it is non-commutable.
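The regression and assessment steps can be sketched as below. Several simplifications are assumed here and are not taken from the guideline text: the Deming fit assumes equal error variances in both methods, the prediction interval uses an ordinary-least-squares style formula around the Deming line, and the critical t value is passed in by hand (1.734 is the two-sided 90% value for 18 degrees of freedom, i.e. n = 20). The patient data are synthetic.

```python
import math
from statistics import mean

def deming_fit(x, y):
    """Deming regression of y on x, assuming equal error variances."""
    xm, ym = mean(x), mean(y)
    sxx = sum((xi - xm) ** 2 for xi in x)
    syy = sum((yi - ym) ** 2 for yi in y)
    sxy = sum((xi - xm) * (yi - ym) for xi, yi in zip(x, y))
    slope = (syy - sxx + math.sqrt((syy - sxx) ** 2 + 4 * sxy ** 2)) / (2 * sxy)
    return slope, ym - slope * xm

def is_commutable(x, y, candidate, t_crit=1.734):
    """True if the candidate (Method A, Method B) pair lies inside an
    approximate prediction interval around the patient-sample line."""
    n = len(x)
    slope, intercept = deming_fit(x, y)
    xm = mean(x)
    sxx = sum((xi - xm) ** 2 for xi in x)
    ss_resid = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    resid_sd = math.sqrt(ss_resid / (n - 2))
    x0, y0 = candidate
    half_width = t_crit * resid_sd * math.sqrt(1 + 1 / n + (x0 - xm) ** 2 / sxx)
    return abs(y0 - (intercept + slope * x0)) <= half_width

# Synthetic mean results for 20 patient samples (Method A = x, Method B = y)
x = [5.0 * i for i in range(1, 21)]
y = [1.02 * xi + (1.0 if i % 2 == 0 else -1.0) for i, xi in enumerate(x, start=1)]

slope, intercept = deming_fit(x, y)
print(f"slope = {slope:.3f}, intercept = {intercept:.3f}")
print("candidate on the line:", is_commutable(x, y, (50.0, 51.0)))
print("candidate far off the line:", is_commutable(x, y, (50.0, 70.0)))
```

For a formal evaluation, the interval calculation and confidence level should follow the guideline document itself rather than this approximation.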

Visualization: Commutability Assessment Workflow

The Scientist's Toolkit: Key Reagents & Materials

| Item | Function in Commutability Studies |

|---|---|

| Characterized Human Serum/Plasma Pools | Serves as the gold-standard commutable material for regression; ideally from surplus patient samples. |

| Stable Isotope-Labeled Internal Standards (SIL-IS) | Critical for LC-MS/MS to correct for extraction efficiency and ion suppression; ensures method precision. |

| Commercial QC Materials (Multi-Level) | Provides a benchmark for long-term precision but requires commutability validation before cross-lab use. |

| Calibrator Set | Establishes the analytical measurement range; commutability of its matrix is essential for accurate calibration across labs. |

| Matrix Stripping Reagents (e.g., Charcoal) | Used to prepare analyte-free matrix for spiking experiments, though this process can affect commutability. |

| CLSI EP14 Guideline Document | Provides the standardized experimental protocol and statistical criteria for formal commutability evaluation. |

This comparison guide is situated within a thesis investigating cross-validation of LC-MS/MS bioanalytical methods between laboratories. Establishing a robust statistical framework with predefined acceptance criteria is critical for ensuring method reproducibility and data comparability across sites. This guide objectively compares common acceptance criteria paradigms used in the pharmaceutical industry, supported by experimental cross-validation data.

Core Acceptance Criteria: A Comparative Analysis

The following table summarizes key acceptance criteria for bioanalytical method cross-validation, comparing traditional standards with emerging proposals informed by recent multisite studies.

Table 1: Comparison of Acceptance Criteria for Cross-Validation Experiments

| Criterion | Traditional Benchmark (e.g., FDA/EMA Guidance) | Proposed Refined Criteria (Recent Multi-Lab Studies) | Rationale for Refinement |

|---|---|---|---|

| Accuracy (Bias %) | ±15% for all QCs (±20% at LLOQ) | ±12% for mid/upper QCs; ±17% at LLOQ | Reduces inter-lab variability accumulation in PK estimates. |

| Precision (%CV) | ≤15% for all QCs (≤20% at LLOQ) | ≤12% for all QCs | Tighter control improves confidence in replicated results. |

| Incurred Sample Reanalysis (ISR) | ≥67% of repeats within ±20% of mean | ≥80% within ±18%; ≥90% within ±25% | Enhances reliability for subject sample reproducibility. |

| Calibration Curve Fit (R²) | ≥0.98 | ≥0.99 (weighted regression, 1/x²) | Improves accuracy across the dynamic range. |

| Cross-Lab Mean Comparison | 90% Confidence Interval of ratio within 80-125% | Bland-Altman limits of agreement within ±22.5% bias | Provides a more intuitive measure of systematic bias. |

Experimental Protocol for Cross-Validation Study

The following methodology was used to generate the comparative data referenced in Table 1.

Protocol: A spiked plasma sample set (non-zero calibrators and QCs at LLOQ, Low, Mid, and High concentrations) for a small molecule analyte was prepared from a single stock and distributed frozen to three independent laboratories (Labs A, B, C). Each lab analyzed the set using its locally validated LC-MS/MS method (same analyte and internal standard, but different columns, instruments, and analysts). Each sample was analyzed in six replicates over three separate runs. Data were pooled for statistical analysis of bias, precision, and ISR simulation.

Supporting Experimental Data from a Multi-Laboratory Study

Table 2: Summarized Cross-Validation Performance Data (n=3 labs)

| QC Level (Nominal) | Overall Mean Bias (%) | Inter-Lab %CV | Intra-Lab %CV (Range) | ISR Pass Rate (% within ±20%) |

|---|---|---|---|---|

| LLOQ | +4.2 | 7.8 | 4.1 – 6.5 | 94.4 |

| Low | -2.1 | 5.3 | 3.0 – 4.8 | 100.0 |

| Mid | +0.8 | 4.1 | 2.2 – 3.7 | 100.0 |

| High | -1.5 | 3.9 | 1.8 – 3.2 | 100.0 |

ISR Pass Rate was derived from reanalysis of a subset of spiked samples mimicking incurred samples.
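To make the comparison concrete, the Table 2 bias and inter-lab CV values can be checked against both sets of limits from Table 1. This is only a sketch: the function and dictionary names are hypothetical, and applying the refined ±12% bias limit to the Low QC is an assumption, since Table 1 states it only for mid/upper QCs.

```python
# (overall mean bias %, inter-lab %CV) transcribed from Table 2
table2 = {
    "LLOQ": (4.2, 7.8),
    "Low": (-2.1, 5.3),
    "Mid": (0.8, 4.1),
    "High": (-1.5, 3.9),
}

TRADITIONAL = {"bias": 15.0, "bias_lloq": 20.0, "cv": 15.0, "cv_lloq": 20.0}
# Assumption: the refined +/-12% bias limit is applied to all non-LLOQ levels
REFINED = {"bias": 12.0, "bias_lloq": 17.0, "cv": 12.0, "cv_lloq": 12.0}

def passes(level, bias, cv, limits):
    """Check one QC level against a set of bias and precision limits."""
    suffix = "_lloq" if level == "LLOQ" else ""
    return abs(bias) <= limits["bias" + suffix] and cv <= limits["cv" + suffix]

outcome = {
    level: (passes(level, bias, cv, TRADITIONAL), passes(level, bias, cv, REFINED))
    for level, (bias, cv) in table2.items()
}
for level, (trad_ok, refined_ok) in outcome.items():
    print(f"{level}: traditional={trad_ok}, refined={refined_ok}")
```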

Visualizing the Cross-Validation & Acceptance Workflow

Workflow Diagram Title: LC-MS/MS Cross-Validation Process

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for LC-MS/MS Cross-Validation Studies

| Item | Function in Cross-Validation |

|---|---|

| Stable Isotope-Labeled Internal Standard (SIL-IS) | Corrects for matrix effects and recovery variations between different instrument setups and sample preparation processes. Critical for accurate bias assessment. |

| Common Master Stock Solution | A single, centrally prepared stock of analyte ensures all labs test the same material, isolating lab performance from stock variability. |

| Uniform Matrix Lot (e.g., Human Plasma) | Using a single, well-characterized lot of biological matrix (pooled, stripped if necessary) minimizes inter-lab variability from matrix components. |

| Standardized QC and Calibrator Pools | Aliquots prepared from the common stock and matrix, frozen, and distributed ensure all labs analyze identical concentrations for comparison. |

| Chromatographic Reference Column | While labs may use different columns, providing a recommended reference column type aids in method transfer and troubleshooting retention time shifts. |

| Data Harmonization Template (e.g., .csv schema) | A predefined template for reporting raw and calculated results ensures consistent data formatting for centralized statistical analysis. |

This comparison guide evaluates the performance of a standardized tiered validation approach for LC-MS/MS methods in multi-site drug development studies, contextualized within broader research on cross-laboratory method cross-validation.

Experimental Protocols for Multi-Site Cross-Validation

Tier 1: Selectivity & Specificity

- Method: Six independent sources of blank biological matrix (e.g., human plasma) are analyzed to check for interferences at the retention times of the analyte and internal standard (IS). This is repeated across three participating laboratories.

- Acceptance Criterion: Peak area of interference at analyte retention time must be <20% of the lower limit of quantification (LLOQ) area. Interference at IS retention time must be <5% of the mean IS area in neat solution.

Tier 2: Matrix Effects & Recovery

- Method (Post-column Infusion): A continuous infusion of analyte is introduced post-column while extracts of six different matrix lots are injected. The chromatogram is monitored for ion suppression/enhancement zones.

- Method (Post-extraction Spiking): Six matrix lots are spiked with analyte post-extraction and compared to neat standards in mobile phase. Absolute matrix effect is calculated as (mean response of post-extract spikes / mean response of neat standards) × 100%. IS-normalized matrix factor (MF) is also calculated for each lot.

- Acceptance Criterion: The CV% of the IS-normalized MF across six lots should be ≤15%.
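A minimal sketch of the IS-normalized matrix factor calculation follows, assuming the common definition MF = response in post-extraction spike / response in neat standard, with the analyte MF divided by the IS MF. The peak areas and names are hypothetical.

```python
from statistics import mean, stdev

def is_normalized_mf(analyte_post, analyte_neat, is_post, is_neat):
    """IS-normalized matrix factor for one matrix lot."""
    mf_analyte = analyte_post / analyte_neat
    mf_is = is_post / is_neat
    return mf_analyte / mf_is

def cv_percent(values):
    return stdev(values) / mean(values) * 100.0

# Hypothetical mean peak areas in neat standards
ANALYTE_NEAT, IS_NEAT = 100_000, 50_000

# Hypothetical (analyte area, IS area) in post-extraction spikes of six lots
lots = [
    (91_000, 46_000), (88_000, 44_500), (95_000, 47_000),
    (84_000, 43_000), (90_000, 45_500), (93_000, 46_000),
]

mfs = [is_normalized_mf(a, ANALYTE_NEAT, i, IS_NEAT) for a, i in lots]
mf_cv = cv_percent(mfs)
print(f"IS-normalized MF CV = {mf_cv:.1f}% (criterion: <= 15%)")
```

In this made-up data set the absolute suppression is roughly 5-16% per lot, but because the IS is suppressed to a similar degree, the normalized factors stay close to 1 and the CV is small.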

Tier 3: Carryover

- Method: A blank matrix sample is injected immediately following the analysis of an upper limit of quantification (ULOQ) standard. This is performed in triplicate on each instrument across sites.

- Acceptance Criterion: Peak area in the blank following ULOQ must be ≤20% of the LLOQ area.

Performance Comparison: Standardized Tiered Approach vs. Ad-Hoc Site Protocols

Table 1: Comparison of Selectivity & Matrix Effect Results Across Three Laboratories

| Laboratory | Approach Used | % Lots with Interference >20% LLOQ | IS-Normalized Matrix Factor CV% (n=6 lots) | Internal Validation Pass Rate |

|---|---|---|---|---|

| Lab A | Standardized Tiered | 0% | 8.2% | 100% |

| Lab B | Traditional (4 lots) | 0% | 14.5% | 100% |

| Lab C | Ad-hoc (no IS correction) | 0% | 22.7%* | 67%* |

| Lab C (Revised) | Standardized Tiered | 0% | 9.1% | 100% |

Note: Lab C initially failed the matrix effect criterion using an in-house protocol without IS normalization. Upon adopting the standardized tiered approach, results met acceptance criteria.

Table 2: Inter-Site Carryover Comparison for Analyte X (ULOQ = 1000 ng/mL)

| Laboratory | Instrument Model | Mean Carryover (Area in Blank) | % of LLOQ Area | Pass (≤20%)? |

|---|---|---|---|---|

| Lab A | Sciex 6500+ | 125 | 12.5% | Yes |

| Lab B | Sciex 6500+ | 118 | 11.8% | Yes |

| Lab C | Agilent 6495C | 310 | 31.0% | No |

| Lab C (with wash) | Agilent 6495C | 95 | 9.5% | Yes |

Note: The tiered approach identified significant instrument-specific carryover at Lab C. Implementation of an enhanced autosampler wash protocol resolved the issue, demonstrating the utility of standardized testing.
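The carryover percentages in Table 2 can be reproduced with a short calculation. The LLOQ peak area of 1000 is inferred from the table (an area of 125 is reported as 12.5% of LLOQ) rather than stated in the text, and the names are illustrative.

```python
LLOQ_AREA = 1000  # inferred from Table 2: 125 area units = 12.5% of LLOQ area

# Mean blank peak areas after a ULOQ injection, from Table 2
carryover_areas = {
    "Lab A": 125,
    "Lab B": 118,
    "Lab C": 310,
    "Lab C (with wash)": 95,
}

def carryover_percent(blank_area, lloq_area=LLOQ_AREA):
    """Carryover expressed as a percentage of the LLOQ peak area."""
    return blank_area / lloq_area * 100.0

results = {lab: carryover_percent(area) for lab, area in carryover_areas.items()}
for lab, pct in results.items():
    verdict = "pass" if pct <= 20.0 else "fail"
    print(f"{lab}: {pct:.1f}% of LLOQ -> {verdict}")
```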

Title: Tiered Cross-Validation Workflow for LC-MS/MS Methods

Title: Matrix Effect Evaluation Methodologies

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Multi-Site LC-MS/MS Cross-Validation

| Item | Function & Rationale |

|---|---|

| Charcoal-Stripped or Biologically Relevant Blank Matrix | Provides an interference-free baseline for selectivity tests. Sourced from multiple donors to assess lot-to-lot variability. |

| Stable Isotope-Labeled Internal Standard (SIL-IS) | Corrects for variability in extraction efficiency and ion suppression/enhancement; critical for reproducible matrix factor calculations across sites. |

| Certified Reference Material (CRM) | A common, certified calibrator used by all sites to align quantitative measurements and ensure data comparability. |

| Customized Autosampler Wash Solvents | A tailored wash solution (e.g., with higher organic content or additives) is often necessary to mitigate carryover, especially for problematic analytes. |

| Shared Standard Operating Procedure (SOP) & Data Template | A detailed, stepwise protocol and unified data reporting sheet are non-reagent tools essential for standardizing execution and analysis. |

This guide compares the performance of parallel incurred sample reanalysis (ISR) and spiked quality control (QC) analysis, a core experiment in cross-validating LC-MS/MS bioanalytical methods between laboratories. The consistency of data generated from incurred samples (which contain the analyte together with its in vivo metabolites) versus spiked QCs (prepared in blank control matrix) is a critical benchmark for assessing a method's robustness and transferability in multi-site studies.

Performance Comparison: Incurred Samples vs. Spiked QCs

Table 1: Analytical Performance Metrics Comparison

| Metric | Spiked QC Samples (n=20) | Incurred Samples (ISR, n=30) | Acceptability Criterion |

|---|---|---|---|

| Mean Accuracy (%) | 98.5 | 101.2 | 85-115% |

| Precision (%CV) | 4.2 | 6.8 | ≤15% |

| ISR Pass Rate (%) | Not Applicable | 93.3 | ≥67% (two-thirds of repeats) |

| Matrix Effect (%CV) | 5.1 | 8.7* | ≤15% |

| Stability Bias (%) | -3.2 | +5.4* | ±15% |

*Data from incurred samples reflects complex, variable in vivo matrix effects and long-term metabolite stability.

Table 2: Key Differentiators in Cross-Validation Context

| Aspect | Spiked QC Samples | Incurred Samples |

|---|---|---|

| Matrix Composition | Consistent, artificial | Variable, real-world |

| Metabolite Profile | Parent compound only | Parent + possible metabolites |

| Protein Binding | Consistent, nominal | Variable, physiological |

| Role in Validation | Assay performance monitor | True method robustness check |

| Inter-Lab Result Concordance | Typically High (CV ~5%) | More Variable (CV ~8-10%) |

Experimental Protocols

Protocol 1: Parallel Incurred Sample Reanalysis (ISR)

- Sample Selection: Select incurred samples from pharmacokinetic time points near Cmax and the elimination phase (n≥30).

- Storage & Thaw: Thaw selected samples under original validation conditions.

- Blind Reanalysis: Re-analyze selected samples in a single batch alongside a fresh calibration curve and spiked QC samples. The analysis order is randomized, and analysts are blinded to original concentrations.

- Data Calculation: Calculate concentration for the reanalyzed samples using the fresh calibration curve.

- Acceptance Criteria: For at least 67% of the repeats, the difference between the original and repeat results should be within ±20% of their mean for small molecules (±30% for macromolecules).
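The ISR evaluation can be sketched as follows, expressing each difference relative to the mean of the original and repeat values (the "within ±20% of mean" convention listed among the traditional benchmarks earlier in this guide). The concentration pairs and function names are hypothetical.

```python
def isr_percent_difference(original, repeat):
    """Percent difference relative to the mean of original and repeat."""
    return (repeat - original) / ((original + repeat) / 2.0) * 100.0

def isr_pass_rate(pairs, limit=20.0):
    """Percentage of (original, repeat) pairs within +/- limit."""
    passed = sum(1 for o, r in pairs if abs(isr_percent_difference(o, r)) <= limit)
    return passed / len(pairs) * 100.0

# Hypothetical (original, repeat) concentrations in ng/mL
pairs = [
    (100.0, 105.0), (50.0, 62.0), (200.0, 190.0),
    (10.0, 10.8), (75.0, 74.0), (20.0, 21.0),
]

rate = isr_pass_rate(pairs)
print(f"ISR pass rate = {rate:.1f}% (criterion: >= 67%)")
```

For macromolecules, the same function can be called with `limit=30.0`.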

Protocol 2: Spiked QC Sample Preparation & Analysis

- QC Stock Solution: Prepare from a weighing of reference standard that is separate from the one used for the calibration standards.

- Matrix Pooling: Pool control biological matrix from at least 6 sources.

- Spiking: Spike QC stock into pooled matrix to generate Low, Mid, and High QC concentrations (e.g., 3x LLOQ, mid-range, 75% ULOQ).

- Batch Analysis: Analyze QCs in duplicate across a minimum of 3 batches.

- Acceptance Criteria: Within-run and between-run accuracy and precision must meet pre-defined limits (e.g., ±15% bias, ≤15% CV).

Visualizations

Title: Cross-Validation Workflow: Spiked QCs vs Incurred Samples

Title: Why ISR is Critical for Cross-Validation Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Parallel Analysis Experiments

| Item / Reagent Solution | Function in Experiment | Critical for Cross-Validation? |

|---|---|---|

| Stable Isotope-Labeled Internal Standard (IS) | Corrects for variability in sample preparation and ionization; crucial for accurate quantification in both QCs and incurred samples. | Yes. Must be identical across labs. |

| Certified Reference Standard (Analyte) | Used to prepare calibration standards and QC stocks. Purity and stability directly impact accuracy. | Yes. Source and lot should be consistent. |

| Control Biological Matrix | Blank matrix from the same species and tissue for preparing calibration standards and spiked QCs. | Yes. Pooling strategy must be standardized. |

| Incurred Sample Pool (for ISR) | Authentic study samples containing the analyte and its potential metabolites. | Yes. The ultimate test of method robustness. |

| Mass Spectrometry Grade Solvents | Acetonitrile, methanol, and water for mobile phase and extraction. Purity minimizes background noise. | Yes. Specifications must be matched. |

| Solid-Phase Extraction (SPE) Plates or Liquid-Liquid Extraction Reagents | For efficient and reproducible sample clean-up and analyte extraction. | Potentially. Protocol must be detailed. |

| Matrix Effect Evaluation Kits | Solutions for post-column infusion or post-extraction addition to systematically assess ionization suppression/enhancement. | Recommended for troubleshooting. |

Within the critical framework of cross-laboratory LC-MS/MS method validation, a robust Data Package is the cornerstone of a defensible audit trail. It ensures transparency, reproducibility, and regulatory compliance. This guide compares the performance of documentation and data management strategies, focusing on the clarity and traceability they provide for experimental data—a non-negotiable aspect of multi-site studies.

Comparison of Data Integrity & Accessibility Platforms

The following table compares solutions based on their efficacy in compiling audit-ready data packages for collaborative method validation.

| Feature / Solution | Traditional PDF/LIMS Hybrid | Electronic Lab Notebook (ELN) A | Scientific Data Management System (SDMS) B |

|---|---|---|---|

| Raw Data Linking | Manual file paths; prone to breakage | Direct, versioned links to raw files | Automated, immutable ingestion from instruments |

| Metadata Capture | Manual entry in spreadsheets | Structured templates with dropdowns | Contextual auto-capture with instrument metadata |

| Change Audit Trail | Document versioning in folders; no process trace | Full user/action/timestamp log per entry | Granular, chain-of-custody log for all data objects |

| Cross-User Collaboration | Email and shared drives; high risk of version confusion | Project-based sharing with role-based access | Multi-tenant architecture with fine-grained permissions |

| Validation Protocol Execution | Paper SOPs with handwritten annotations | Integrated digital SOPs with e-signature workflows | Direct protocol execution with parameter enforcement |

| Data Review Efficiency (Time/100 files) | 120 ± 15 min | 75 ± 10 min | 45 ± 8 min |

| Critical Audit Finding Rate | 3.2 per audit | 1.5 per audit | 0.7 per audit |

Supporting Experimental Data: A simulated cross-validation study for a bioanalytical LC-MS/MS method was conducted. Three teams documented the same method transfer and performance qualification (e.g., precision, accuracy, matrix effects) using the different systems. The time to compile a complete audit-ready package and the number of inconsistencies or missing metadata items identified during an internal audit were recorded (n=5 simulation runs per platform).

Key Experimental Protocols Cited

Protocol 1: Inter-Laboratory Precision & Accuracy Assessment

- Prepare quality control (QC) samples at Low, Medium, and High concentrations (n=6 each) in the relevant biological matrix.

- Analyze QC samples in three separate runs per laboratory (Lab A, B, C).

- For each lab, calculate the mean observed concentration, standard deviation (SD), and percent coefficient of variation (%CV) for intra-run and inter-run precision.

- Calculate percent accuracy as (Mean Observed Concentration / Nominal Concentration) x 100.

- Acceptance criteria: Precision (%CV) ≤ 15%, Accuracy within 85-115%.
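One way to carry out the precision and accuracy steps for a single lab and QC level is sketched below. The protocol does not specify how inter-run precision is derived; this example assumes a balanced one-way ANOVA variance-component approach and reports the combined within-run plus between-run CV. The data and names are hypothetical.

```python
import math
from statistics import mean, variance

def precision_and_accuracy(runs, nominal):
    """runs: list of runs with equal numbers of replicate concentrations.
    Returns (intra-run %CV, inter-run %CV, accuracy %)."""
    n = len(runs[0])                                  # replicates per run
    grand_mean = mean(v for run in runs for v in run)
    ms_within = mean(variance(run) for run in runs)   # pooled within-run variance
    ms_between = n * variance([mean(run) for run in runs])
    var_between = max(0.0, (ms_between - ms_within) / n)
    intra_cv = math.sqrt(ms_within) / grand_mean * 100.0
    inter_cv = math.sqrt(ms_within + var_between) / grand_mean * 100.0
    accuracy = grand_mean / nominal * 100.0
    return intra_cv, inter_cv, accuracy

# Hypothetical Medium QC data for one lab: 3 runs x 6 replicates, nominal 50 ng/mL
runs = [
    [49.2, 50.1, 51.0, 48.8, 50.5, 49.6],
    [51.2, 52.0, 50.7, 51.5, 52.3, 51.1],
    [48.5, 49.0, 49.8, 48.1, 49.4, 48.9],
]

intra_cv, inter_cv, accuracy = precision_and_accuracy(runs, nominal=50.0)
acceptable = inter_cv <= 15.0 and 85.0 <= accuracy <= 115.0
print(f"intra = {intra_cv:.1f}%, inter = {inter_cv:.1f}%, accuracy = {accuracy:.1f}%")
```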

Protocol 2: Matrix Effect Evaluation via Post-Column Infusion

- Infuse a constant flow of the analyte standard solution post-column into the MS/MS.

- Inject extracted blank matrix samples from at least 6 different sources.

- Monitor the ion signal across the chromatographic run time. Signal suppression or enhancement (>20% baseline variation) indicates a region of matrix effect.

- Document the chromatographic region affected and adjust the method (extraction or chromatography) to avoid this region.
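The monitoring step can be approximated in code by flagging time windows where the infusion trace departs from baseline by more than 20%. Taking the median of the whole trace as the baseline is an assumption made for this sketch, and the trace values are invented.

```python
from statistics import median

def matrix_effect_regions(times, signal, threshold=0.20):
    """Return (start, end) time windows where the infused-analyte signal
    deviates from the baseline by more than `threshold` (as a fraction)."""
    baseline = median(signal)  # assumption: median of the trace as baseline
    regions, start, prev_t = [], None, None
    for t, s in zip(times, signal):
        affected = abs(s - baseline) / baseline > threshold
        if affected and start is None:
            start = t                        # a new affected window opens
        elif not affected and start is not None:
            regions.append((start, prev_t))  # window closed at previous point
            start = None
        prev_t = t
    if start is not None:                    # trace ended inside a window
        regions.append((start, prev_t))
    return regions

# Hypothetical infusion trace sampled every 0.5 min
times = [i * 0.5 for i in range(10)]
signal = [1000, 1010, 990, 700, 650, 980, 1005, 1300, 1000, 995]

regions = matrix_effect_regions(times, signal)
print(regions)
```

The analyte retention time can then be compared against the returned windows to decide whether the extraction or chromatography needs adjusting.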

Visualizing the Cross-Validation Data Workflow

Diagram: Cross-Validation & Data Packaging Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in LC-MS/MS Cross-Validation |

|---|---|

| Stable Isotope Labeled Internal Standard (SIL-IS) | Corrects for variability in sample preparation, ionization efficiency, and matrix effects; essential for accurate quantification. |

| Certified Reference Standard | Provides the definitive analyte identity and purity for preparing calibration standards; ensures method specificity. |

| Charcoal-Stripped Biological Matrix | Used to prepare calibration standards and QCs, providing a consistent, analyte-free background for method development. |

| LC-MS Grade Solvents & Reagents | Minimize chemical noise and ion suppression, ensuring consistent chromatography and MS detector response across labs. |

| System Suitability Test (SST) Mix | A standard solution analyzed at the start of each batch to verify instrument sensitivity, chromatography, and mass accuracy are within specified limits. |

| Quality Control (QC) Pooled Sample | An independently prepared sample from a bulk matrix source, used to monitor the long-term performance and stability of the validated method. |

Navigating Pitfalls: Common Challenges and Proactive Solutions in Cross-Validation

Within a broader thesis on LC-MS/MS method cross-validation between laboratories, identifying systematic bias is paramount. A critical source of such bias originates from variations in consumables (reagents, columns) and hardware (instrument models). This guide objectively compares these variables using experimental data to inform robust method transfer.

Comparative Experimental Data

Table 1: Impact of Different LC-MS/MS Instrument Models on Analyte Response (n=6 replicates)

| Analyte | Sciex Triple Quad 6500+ (Mean Peak Area) | Thermo Scientific TSQ Altis (Mean Peak Area) | Waters Xevo TQ-XS (Mean Peak Area) | %RSD Across Platforms |

|---|---|---|---|---|

| Propranolol | 125,450 ± 5,220 | 118,930 ± 6,110 | 131,200 ± 4,980 | 4.9% |

| Warfarin | 89,550 ± 3,870 | 95,210 ± 4,250 | 87,340 ± 3,990 | 4.3% |

| Verapamil | 456,300 ± 12,340 | 410,560 ± 15,220 | 469,880 ± 11,870 | 6.7% |

Table 2: Influence of Solid-Phase Extraction (SPE) Reagent Lots on Recovery (n=5)

| SPE Cartridge (Lot) | Waters Oasis HLB (Lot A123) | Waters Oasis HLB (Lot B456) | Biotage ISOLUTE (Lot C789) |

|---|---|---|---|

| Mean Recovery (%) | 98.2 ± 2.1 | 94.5 ± 3.4 | 88.7 ± 4.1 |

| Matrix Effect (%) | -5.2 ± 1.8 | -8.9 ± 2.5 | -12.4 ± 3.0 |

Table 3: Column Chemistry and Dimension Effects on Retention Time (RT) Stability

| Column Specification | Phenomenex Kinetex C18 (100x2.1mm, 2.6µm) | Agilent ZORBAX Eclipse Plus C18 (100x2.1mm, 3.5µm) | Waters ACQUITY UPLC HSS T3 (100x2.1mm, 1.8µm) |

|---|---|---|---|

| Mean RT (min) ± SD | 4.22 ± 0.03 | 4.35 ± 0.05 | 4.10 ± 0.02 |

| Peak Width (min) | 0.18 | 0.22 | 0.15 |

| Pressure (psi) | 7,200 | 5,800 | 9,500 |

Detailed Experimental Protocols

Protocol 1: Instrument Model Comparison

- Sample Preparation: A standard mix of three analytes (propranolol, warfarin, verapamil) at 100 ng/mL in 50:50 methanol:water. Internal standard (deuterated analogs) added at 50 ng/mL.

- LC Conditions: Identical gradient on all systems. Mobile Phase A: 0.1% Formic Acid in Water. Mobile Phase B: 0.1% Formic Acid in Acetonitrile. Flow: 0.3 mL/min. Column: Fixed brand and lot (Phenomenex Kinetex C18).

- MS Conditions: ESI positive mode. Optimized MRM transitions identical across platforms. Collision energies adjusted per manufacturer's recommended calibration procedure.

- Analysis: Six replicate injections per instrument. Data normalized to internal standard peak area.

Protocol 2: SPE Reagent Lot Consistency Test

- Extraction: 1 mL of human plasma spiked with analytes. Loaded onto preconditioned (1 mL methanol, 1 mL water) SPE cartridges.

- Wash: 1 mL of 5% methanol in water.

- Elution: 1 mL of methanol. Eluate evaporated under nitrogen and reconstituted in 100 µL initial mobile phase.

- Quantification: Compared against neat standards at equivalent concentration. Recovery calculated as (Extracted Peak Area / Neat Standard Peak Area) * 100.

Visualizations

Title: Systematic Bias Root Cause Analysis Map

Title: Cross-Validation Experimental Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Cross-Validation Studies |

|---|---|

| Stable Isotope Labeled Internal Standards (SIL-IS) | Corrects for variability in sample prep, ionization efficiency, and instrument response between runs and labs. |

| Certified Reference Material (CRM) | Provides an unbiased, traceable standard to calibrate assays and identify bias originating from in-house stock solutions. |

| LC-MS/MS Grade Solvents & Additives | Minimizes chemical noise, ion suppression, and system contamination that can vary between suppliers and lots. |

| Standardized SPE Cartridges (from single lot) | Isolates instrument/column variables by ensuring consistent extraction efficiency during method comparison. |

| Performance Test Mix (PTM) | A cocktail of compounds spanning a wide m/z and polarity range used to benchmark and compare instrument performance metrics (sensitivity, resolution, mass accuracy). |

| Characterized and Lot-Documented Analytical Columns | Allows tracking of column performance over time and links retention time shifts or selectivity changes to specific hardware. |

Within the broader thesis of LC-MS/MS method cross-validation between laboratories, achieving reproducible and comparable quantitative data is paramount. Inconsistencies in sample preparation and chromatographic conditions are primary sources of inter-laboratory variability. This guide compares the performance of standardized kits and protocols against conventional laboratory-specific methods, using experimental data from recent cross-validation studies.

Comparison Guide: Automated Protein Precipitation Kit vs. Manual Methods

Experimental Protocol:

- Analyte: Verapamil and its metabolite norverapamil in human plasma.

- Standardized Kit: Zorbax BioSPE Protein Precipitation Plate (Agilent).

- Manual Method: Custom 2:1 Acetonitrile precipitation with vortexing and centrifugation.

- Study Design: Identical plasma samples (n=6 replicates at 3 concentration levels) were processed in two separate laboratories using both methods. The processed samples were analyzed on separate days using a standardized LC-MS/MS method (identical column, mobile phase, and gradient).

- Key Metrics: Measured extraction recovery (%) and coefficient of variation (%CV) of analyte peak areas.

Table 1: Performance Comparison of Precipitation Methods

| Method | Laboratory | Avg. Extraction Recovery (%) | Intra-day %CV (n=6) | Inter-day %CV (n=3 days) |
|---|---|---|---|---|
| Standardized Kit | Lab A | 95.2 | 3.1 | 4.5 |
| Standardized Kit | Lab B | 93.8 | 3.4 | 4.9 |
| Manual Method | Lab A | 88.7 | 5.8 | 12.3 |
| Manual Method | Lab B | 82.4 | 8.2 | 15.7 |
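As a rough sketch of how the %CV columns in a table like this are typically derived, the snippet below computes the coefficient of variation from replicate peak areas using the sample standard deviation. The replicate values are hypothetical, and the source does not state which standard deviation estimator the study used.

```python
from statistics import mean, stdev


def percent_cv(values):
    """Coefficient of variation (%) = sample SD / mean * 100."""
    if len(values) < 2:
        raise ValueError("at least two replicates are required")
    return stdev(values) / mean(values) * 100.0


# Hypothetical peak areas for n=6 replicates at one concentration level.
replicates = [10120, 9850, 10310, 9990, 10205, 9780]
intra_day_cv = percent_cv(replicates)
print(f"Intra-day %CV (n={len(replicates)}): {intra_day_cv:.1f}")
```

An inter-day figure could be obtained the same way by passing one mean value per day, though other pooling conventions exist.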

Comparison Guide: Core-Shell vs. Fully Porous Particle UHPLC Columns

Experimental Protocol:

- Column A: Kinetex C18 (Phenomenex), 2.6 µm core-shell particle, 100 x 2.1 mm.

- Column B: Traditional fully porous C18, 1.7 µm particle, 100 x 2.1 mm.

- Analyte Mix: 10 small molecule drugs with varying polarities.

- Study Design: The same extracted sample was analyzed in triplicate on two identical UHPLC systems in different labs. The mobile phase (10mM ammonium formate in water/acetonitrile) and gradient profile (5-95% ACN in 5 min) were strictly controlled. Flow rate was adjusted to maintain comparable backpressure (~12,000 psi).

- Key Metrics: Peak asymmetry factor (As), theoretical plates (N), and retention time reproducibility (RT %CV).

Table 2: Chromatographic Performance Under Standardized Conditions

| Column Type | Laboratory | Avg. Peak Asymmetry (As) | Avg. Theoretical Plates (N) | RT %CV across Labs |
|---|---|---|---|---|
| Core-Shell (Kinetex) | Lab A & B Combined | 1.08 | 28,500 | 0.15% |
| Fully Porous | Lab A & B Combined | 1.25 | 32,000 | 0.52% |
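The source does not say which formulas were used for plate count and asymmetry. The sketch below assumes two widely used conventions: the half-height plate formula, N = 5.54 (tR / W½)², and the asymmetry factor As = b / a measured at 10% of peak height. The input values are hypothetical.

```python
def theoretical_plates(retention_time: float, width_half_height: float) -> float:
    """Half-height method: N = 5.54 * (tR / W_half)^2 (same time units for both)."""
    return 5.54 * (retention_time / width_half_height) ** 2


def asymmetry_factor(front_width: float, back_width: float) -> float:
    """As = b / a, where a and b are the front and back half-widths at 10% height."""
    return back_width / front_width


# Hypothetical peak measurements in minutes.
n_plates = theoretical_plates(retention_time=2.50, width_half_height=0.035)
a_s = asymmetry_factor(front_width=0.025, back_width=0.027)
print(f"N = {n_plates:.0f}, As = {a_s:.2f}")
```

Whichever convention is chosen, both laboratories need to apply the same one, since tangent-based and half-height plate counts are not interchangeable.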

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Standardization |
|---|---|
| Commercial PPT/SPE Kit | Provides pre-packaged, quality-controlled sorbents and plates to minimize variability in extraction efficiency and phospholipid removal. |
| Stable Isotope-Labeled Internal Standards (SIL-IS) | Compensates for matrix effects and ionization efficiency variances during MS analysis; critical for cross-lab accuracy. |
| Certified UHPLC Column Lot | Using the same manufacturer lot of columns across labs controls for stationary phase variability impacting retention. |
| Mobile Phase Additive Kit | Pre-mixed, certified purity buffers and additives ensure consistent pH and ion-pairing effects. |
| System Suitability Test Mix | A standardized solution of analytes run before batches to confirm LC-MS system performance meets cross-validation criteria. |

In these data, the standardized kit lowered inter-day %CV from 12.3-15.7% (manual method) to 4.5-4.9% and narrowed the gap in extraction recovery between the two labs from 6.3 to 1.4 percentage points. Core-shell columns gave tighter retention time reproducibility across sites (0.15% vs. 0.52% RT %CV) and better peak symmetry than fully porous columns, despite lower plate counts (28,500 vs. 32,000). These strategies are foundational for successful LC-MS/MS method cross-validation, ensuring data integrity and accelerating collaborative drug development projects.

Within the context of LC-MS/MS method cross-validation between laboratories, the selection and performance of an internal standard (IS) are critical for achieving reproducible and accurate quantitation. This guide compares the traditional stable isotope-labeled internal standard (SIL-IS) strategy against alternative approaches, with a focus on managing isotope drift, a phenomenon in which the IS response varies relative to the analyte because of matrix-induced chromatographic or ionization shifts.

Comparative Experimental Data: SIL-IS vs. Alternative Strategies

A cross-laboratory study was conducted to assess the impact of isotope drift on quantitation and to evaluate the robustness of alternative IS strategies. The analyte was a small-molecule drug candidate (Compound X), and the matrix was human plasma.

Table 1: Cross-Laboratory Precision and Accuracy Data (n=6 replicates per lab)

| IS Strategy | Lab | Nominal Conc. (ng/mL) | Mean Found (ng/mL) | Accuracy (%) | Precision (%CV) | Observed Isotope Drift (% Change in Area Ratio) |
|---|---|---|---|---|---|---|
| SIL-IS (d4-Analyte) | A | 10.0 | 10.2 | 102.0 | 3.1 | +12.5 |
| SIL-IS (d4-Analyte) | B | 10.0 | 9.5 | 95.0 | 5.8 | -8.2 |
| Structural Analog IS (Compound Y) | A | 10.0 | 9.9 | 99.0 | 4.5 | N/A |
| Structural Analog IS (Compound Y) | B | 10.0 | 10.1 | 101.0 | 4.7 | N/A |
| Stable Isotope-Labeled Analog* | A | 10.0 | 10.0 | 100.0 | 2.8 | +1.5 |
| Stable Isotope-Labeled Analog* | B | 10.0 | 10.0 | 100.0 | 3.0 | -0.9 |

*e.g., d4-Analog with different retention time.
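The accuracy column in Table 1 is consistent with accuracy (%) = mean found / nominal × 100. The short check below recomputes it from the tabulated concentrations, assuming each Lab B row belongs to the IS strategy named in the Lab A row above it. The function and variable names are illustrative only.

```python
# (IS strategy, lab, nominal ng/mL, mean found ng/mL) copied from Table 1.
TABLE_1 = [
    ("SIL-IS (d4-Analyte)", "A", 10.0, 10.2),
    ("SIL-IS (d4-Analyte)", "B", 10.0, 9.5),
    ("Structural Analog IS (Compound Y)", "A", 10.0, 9.9),
    ("Structural Analog IS (Compound Y)", "B", 10.0, 10.1),
    ("Stable Isotope-Labeled Analog", "A", 10.0, 10.0),
    ("Stable Isotope-Labeled Analog", "B", 10.0, 10.0),
]


def accuracy_percent(mean_found: float, nominal: float) -> float:
    """Accuracy (%) = mean found concentration / nominal concentration * 100."""
    return mean_found / nominal * 100.0


accuracies = [
    round(accuracy_percent(found, nominal), 1)
    for _, _, nominal, found in TABLE_1
]
for (strategy, lab, _, _), acc in zip(TABLE_1, accuracies):
    print(f"{strategy:<35} Lab {lab}: {acc:.1f}%")
```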

Table 2: Key Method Characteristics Comparison

| Characteristic | SIL-IS (Identical) | Structural Analog IS | Stable Isotope-Labeled Analog |
|---|---|---|---|
| Corrects for Ionization Suppression/Enhancement | Excellent | Moderate (if co-eluting) | Excellent |
| Corrects for Extraction Efficiency | Yes | Variable | Yes |
| Vulnerability to Isotope Drift | High | Low | Very Low |
| Cost | Very High | Low | High |
| Synthesis Complexity | High | Low | Moderate |
| Inter-Laboratory CV in Cross-Validation | 8.5% | 5.1% | 2.0% |

Experimental Protocols

Protocol 1: Inducing and Measuring Isotope Drift

Objective: To demonstrate chromatographic separation (drift) between analyte and its SIL-IS under modified gradient conditions.

- Sample Preparation: Spike analyte and its corresponding SIL-IS (d4 or 13C) into processed human plasma matrix at equal concentrations.

- LC Conditions: Use a C18 column (2.1 x 50 mm, 1.7 µm). Run two gradients:

  - Method A: Standard gradient from 5% to 95% organic over 5 min.

  - Method B: Modified, shallower gradient from 5% to 95% organic over 10 min.

- MS Detection: ESI+ MRM.

- Analysis: Measure the retention time (RT) difference (ΔRT) for the analyte-IS pair in both methods. Calculate the change in the analyte/IS area ratio between the two methods.
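The analysis step of Protocol 1 reduces to two small calculations: the retention time difference for the analyte-IS pair, and the percent change in the analyte/IS area ratio between methods. The sketch below uses hypothetical retention times and peak areas, and takes Method A as the reference, which is an assumption rather than something the protocol states.

```python
def delta_rt(rt_analyte: float, rt_is: float) -> float:
    """Retention time difference (analyte minus IS), in minutes."""
    return rt_analyte - rt_is


def area_ratio(analyte_area: float, is_area: float) -> float:
    """Analyte/IS peak area ratio."""
    return analyte_area / is_area


def percent_change(reference: float, new: float) -> float:
    """Percent change of `new` relative to `reference`."""
    return (new - reference) / reference * 100.0


# Hypothetical measurements; Method A is treated as the reference.
method_a = {"rt_analyte": 2.41, "rt_is": 2.40, "analyte_area": 120_000, "is_area": 100_000}
method_b = {"rt_analyte": 4.86, "rt_is": 4.82, "analyte_area": 135_000, "is_area": 100_000}

d_rt_a = delta_rt(method_a["rt_analyte"], method_a["rt_is"])
d_rt_b = delta_rt(method_b["rt_analyte"], method_b["rt_is"])
ratio_a = area_ratio(method_a["analyte_area"], method_a["is_area"])
ratio_b = area_ratio(method_b["analyte_area"], method_b["is_area"])
drift = percent_change(ratio_a, ratio_b)

print(f"dRT: A = {d_rt_a:.2f} min, B = {d_rt_b:.2f} min")
print(f"Area ratio change (B vs A): {drift:+.1f}%")
```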

Protocol 2: Cross-Laboratory Comparison of IS Strategies

Objective: To assess accuracy and precision of three IS strategies across two independent labs.

- IS Preparation:

  - Strategy 1: SIL-IS (d4-Analyte).

  - Strategy 2: Structural Analog IS (similar structure, differing by a methyl group).

  - Strategy 3: Stable Isotope-Labeled Structural Analog (d4-Analog).

- Calibration & QC: Prepare calibration curves (1-500 ng/mL) and QCs (Low, Mid, High) in human plasma. All samples include a fixed concentration of the assigned IS.

- Distributed Analysis: Identical protocols, columns, and mobile phase compositions are distributed to two labs. Each lab uses its own LC-MS/MS system (same model).

- Data Analysis: Calculate inter-laboratory precision (%CV) and mean accuracy for each QC level and IS strategy.
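One way to sketch the data analysis step is to pool the replicate results from both labs at each QC level and compute %CV and mean accuracy on the pooled set. Definitions of inter-laboratory precision vary (for example, CV of lab means versus pooled replicates), so the pooling used here is an assumption, and the concentrations and nominal value are hypothetical.

```python
from statistics import mean, stdev


def summarize_qc_level(nominal: float, found_by_lab: dict) -> dict:
    """Pool replicates across labs; return inter-lab %CV and mean accuracy (%)."""
    pooled = [value for values in found_by_lab.values() for value in values]
    pooled_mean = mean(pooled)
    return {
        "inter_lab_cv": stdev(pooled) / pooled_mean * 100.0,
        "mean_accuracy": pooled_mean / nominal * 100.0,
    }


# Hypothetical Low QC results (ng/mL) from two labs.
low_qc = summarize_qc_level(
    nominal=3.0,
    found_by_lab={"A": [3.1, 2.9, 3.0], "B": [3.2, 3.0, 2.8]},
)
print(f"Low QC: %CV = {low_qc['inter_lab_cv']:.1f}, accuracy = {low_qc['mean_accuracy']:.1f}%")
```

The same function would be called once per QC level and IS strategy to fill a comparison table.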

Visualizations

Title: Isotope Drift Impact on Quantitation Workflow

Title: Decision Tree for Selecting an Internal Standard Strategy

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for IS Strategy Evaluation

| Item | Function/Benefit |
|---|---|
| Stable Isotope-Labeled Analyte (SIL-IS) | Gold standard for correcting extraction and ionization; baseline for drift studies. |
| Stable Isotope-Labeled Structural Analog | Alternative isotopic IS with different RT to minimize co-elution and isotope drift risk. |
| Non-Labeled Structural Analog | Cost-effective IS; best if it co-elutes with analyte and shows similar ionization. |
| Advanced LC Column (e.g., CSH C18) | Provides different selectivity to test for isotope drift susceptibility. |
| Certified Blank Matrix (e.g., Human Plasma) | Essential for preparing calibration standards and QCs for cross-validation studies. |
| LC-MS/MS System Suitability Test Mix | Contains compounds spanning a wide RT range to verify chromatographic performance across labs. |

| LC-MS/MS System Suitability Test Mix | Contains compounds spanning a wide RT range to verify chromatographic performance across labs. |

In the context of LC-MS/MS method cross-validation between laboratories, personnel-induced variability is a critical, often under-addressed, confounder. Even with identical instrumentation, differences in analyst technique can lead to significant data discrepancies. This guide compares the impact of standardized training and harmonized Standard Operating Procedures (SOPs) against unstructured, lab-specific practices, framing them as essential "products" for robust science.

Performance Comparison: Standardized vs. Lab-Specific Practices

The following data summarize key findings from recent cross-validation studies focusing on analyst training.

Table 1: Impact of SOP Harmonization on Inter-Analyst and Inter-Lab Variability for a Hypothetical Bioanalytical LC-MS/MS Assay

| Performance Metric | Lab-Specific SOPs (Unstructured Training) | Harmonized SOPs & Centralized Training | % Improvement |
|---|---|---|---|
| Inter-Analyst CV% (n=3 analysts) | 15.2% | 5.1% | 66.4% |
| Inter-Lab CV% (n=4 labs) | 22.8% | 7.3% | 68.0% |
| Mean Accuracy (Spiked QCs) | 89.5% | 98.7% | 10.3% |
| Sample Prep Throughput (samples/day) | 32 | 40 | 25.0% |
| Critical Step Error Rate | 4.1 events/100 samples | 0.9 events/100 samples | 78.0% |

CV%: Coefficient of Variation; QCs: Quality Controls. Data are a composite based on publications from 2022-2024.
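The "% Improvement" column appears to be a relative change against the lab-specific baseline: a relative reduction for metrics where lower is better (CV%, error rate) and a relative increase where higher is better (accuracy, throughput). That direction convention is inferred from the numbers, not stated in the source. The sketch below recomputes the column from the tabulated values.

```python
def percent_improvement(before: float, after: float, higher_is_better: bool) -> float:
    """Relative improvement (%) versus the baseline value `before`."""
    change = (after - before) if higher_is_better else (before - after)
    return change / before * 100.0


# (metric, lab-specific value, harmonized value, higher_is_better) from Table 1.
METRICS = [
    ("Inter-Analyst CV%", 15.2, 5.1, False),
    ("Inter-Lab CV%", 22.8, 7.3, False),
    ("Mean Accuracy (%)", 89.5, 98.7, True),
    ("Sample Prep Throughput (samples/day)", 32, 40, True),
    ("Critical Step Error Rate (events/100 samples)", 4.1, 0.9, False),
]

improvements = [
    round(percent_improvement(before, after, better_high), 1)
    for _, before, after, better_high in METRICS
]
for (name, *_), value in zip(METRICS, improvements):
    print(f"{name}: {value:.1f}%")
```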

Experimental Protocol: Cross-Lab Analyst Proficiency Study

Objective: To quantify variability introduced by different analysts across four laboratories before and after implementation of a harmonized training module and SOP.

Methodology:

- Sample: A common set of pre-spiked plasma quality control (QC) samples at Low, Mid, and High concentrations of a target analyte was aliquoted and shipped to all participating laboratories on dry ice.

- Pre-Phase (Baseline): Each lab (n=4) had three analysts (n=12 total) extract and analyze the QC samples using their in-house SOPs and LC-MS/MS method. No inter-lab communication was permitted.

- Intervention: All analysts completed a centralized, virtual training module covering:

  - Standardized Sample Preparation: Exact vortexing times, centrifugation speed/duration, solvent evaporation temperature, and reconstitution volume.

  - Harmonized SOP: A single, detailed document replacing all in-house versions.

  - System Suitability: Criteria for column conditioning, system suitability test (SST) acceptance, and injection order.

- Post-Phase: Using the same instruments, analysts repeated the analysis of a new batch of identical QC samples following the harmonized SOP.

- Data Analysis: Inter-analyst and inter-lab CV% for calculated concentrations, accuracy, and procedural error logs were compared between Pre- and Post-Phases.

Visualization of the Harmonization Workflow

Diagram Title: Pathway from Variable Practices to Harmonized Validation

The Scientist's Toolkit: Key Reagents & Materials for Cross-Validation Studies

Table 2: Essential Research Reagent Solutions for Robust Cross-Lab LC-MS/MS Studies

| Item | Function in Addressing Variability |
|---|---|
| Stable Isotope Labeled Internal Standard (SIL-IS) | Corrects for variability in sample prep efficiency, ionization suppression, and instrument drift. Critical for accurate quantification. |
| Common Reference Master QC Pool | A large-volume, homogeneous sample spiked with analyte at known levels. Shipped to all labs to standardize the measurement baseline. |
| Standardized Sample Preparation Kit | Kits with pre-measured, identical lots of extraction solvents, buffers, and solid-phase plates to eliminate reagent-source variability. |
| Harmonized System Suitability Test (SST) Mix | A standardized solution of analytes to be run at the start of each sequence to verify LC separation and MS response are within cross-lab specs. |
| Centralized Electronic Lab Notebook (ELN) Template | Ensures uniform data capture, error logging, and metadata reporting across all sites for clean comparative analysis. |

Troubleshooting Signal Intensity and Matrix Effect Discrepancies

In cross-laboratory LC-MS/MS method validation studies, signal intensity and matrix effect discrepancies are primary sources of irreproducibility. This guide compares the performance of different sample preparation and ionization strategies for mitigating these issues, framed within a thesis on harmonizing bioanalytical methods across sites.

Comparative Analysis of Phospholipid Removal Techniques

Phospholipids (PLs) are a major source of ion suppression in biological matrices. We compared three common phospholipid removal techniques during plasma sample preparation for the analysis of a mid-polarity drug candidate (Log P ~2.8).