Modeling Dose-Response Uncertainty: A Comprehensive Guide to Gaussian Process Regression in Drug Development


Abstract

This article provides a comprehensive overview of Gaussian Process (GP) regression for quantifying and modeling uncertainty in dose-response relationships. Aimed at researchers and drug development professionals, it covers foundational concepts of GP regression and its unique advantages for capturing non-linear, probabilistic dose-response curves. The guide details practical implementation steps, from kernel selection to hyperparameter tuning, and demonstrates applications in early-stage assay analysis and clinical trial dose-finding. It addresses common challenges in model fitting, computational scalability, and optimization techniques. Finally, it validates GP regression against traditional methods like logistic regression and splines, highlighting its superior uncertainty quantification for safer, more efficient therapeutic dose optimization. This synthesis aims to equip practitioners with the knowledge to leverage GP regression for robust, data-driven decision-making in preclinical and clinical research.

Understanding Gaussian Process Regression: A Primer for Probabilistic Dose-Response Modeling

In pharmacological research and toxicology, the dose-response relationship is fundamental for determining compound efficacy, potency (e.g., EC50/IC50), and safety margins (e.g., therapeutic index). Traditional analysis relies heavily on point estimates derived from curve-fitting algorithms applied to aggregated data, often using sigmoidal models like the four-parameter logistic (4PL) equation. This approach, while useful, discards a critical dimension of information: quantifiable uncertainty. Framed within a broader thesis on Gaussian Process (GP) regression for dose-response analysis, this whitepaper argues that explicitly modeling and propagating uncertainty is not merely a statistical refinement but a prerequisite for robust, reproducible, and predictive science. GP regression provides a powerful non-parametric Bayesian framework to achieve this, delivering not just a mean response curve but a full posterior distribution over functions, thereby quantifying uncertainty at every dose level and for derived parameters.

The Limitations of Point Estimates

Point estimates from standard models (4PL, Emax) provide a single, "best-fit" curve. This simplification introduces several risks:

- Overconfidence in Decision-Making: A single EC50 value obscures its plausible range. Two compounds with identical point estimate EC50s may have vastly different confidence intervals, leading to misprioritization in lead optimization.

- Ignoring Heteroscedasticity: Variance in biological response often changes with dose (e.g., higher noise at low and high doses). Standard curve fits typically assume constant variance, an assumption that such data violate.

- Poor Extrapolation: Point estimates offer no natural measure of confidence for predictions at untested doses, which is critical for forecasting efficacy or toxicity in new dosing regimens.

- Information Loss from Hierarchical Data: Ignoring uncertainty from replicate-to-replicate, plate-to-plate, or donor-to-donor variation pools data in a way that inflates perceived precision.

Gaussian Process Regression: A Framework for Uncertainty Quantification

Gaussian Process regression is a Bayesian, non-parametric approach that defines a prior distribution directly over the space of response functions. A GP is fully specified by a mean function m(x) and a covariance (kernel) function k(x, x').

- Prior: ( f(\mathbf{x}) \sim \mathcal{GP}(m(\mathbf{x}), k(\mathbf{x}, \mathbf{x}')) )

- Posterior: After observing data (\mathbf{y}) at doses (\mathbf{X}), the posterior predictive distribution for the response ( f_* ) at a new dose ( \mathbf{x}_* ) is Gaussian with closed-form mean and variance: [ \begin{aligned} \mathbb{E}[f_*] &= \mathbf{k}_*^T (K + \sigma_n^2 I)^{-1} \mathbf{y} \\ \text{Var}[f_*] &= k(\mathbf{x}_*, \mathbf{x}_*) - \mathbf{k}_*^T (K + \sigma_n^2 I)^{-1} \mathbf{k}_* \end{aligned} ] where (K) is the covariance matrix between training points, (\mathbf{k}_*) is the vector of covariances between the test point and the training points, and (\sigma_n^2) is the observation noise variance.

This posterior variance is the model's intrinsic quantification of uncertainty—it is lower near observed data points and higher in regions with sparse data.
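
The closed-form mean and variance above can be sketched in a few lines of NumPy. This is a minimal illustration, not a production implementation: it assumes a zero prior mean and a squared-exponential kernel, and the function names (`rbf_kernel`, `gp_posterior`), the hyperparameter values, and the five data points are all hypothetical.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0, signal_var=1.0):
    """Squared-exponential covariance between 1-D dose vectors a and b."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return signal_var * np.exp(-0.5 * sq_dist / length_scale**2)

def gp_posterior(x_train, y_train, x_test, noise_var=0.05, **kern):
    """Posterior mean and pointwise variance of f* (zero prior mean assumed)."""
    K = rbf_kernel(x_train, x_train, **kern) + noise_var * np.eye(len(x_train))
    k_star = rbf_kernel(x_train, x_test, **kern)      # shape (n_train, n_test)
    L = np.linalg.cholesky(K)                         # K + sigma_n^2 I = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = k_star.T @ alpha                           # k_*^T (K + sigma_n^2 I)^-1 y
    v = np.linalg.solve(L, k_star)
    prior_var = np.diag(rbf_kernel(x_test, x_test, **kern))
    var = prior_var - np.sum(v**2, axis=0)            # k(x*,x*) - k_*^T (...)^-1 k_*
    return mean, var

# Hypothetical centred responses at five log10 doses
x_train = np.array([-2.0, -1.0, 0.0, 1.0, 2.0])
y_train = np.array([-0.9, -0.6, 0.0, 0.6, 0.9])
x_test = np.array([0.0, 6.0])   # one tested dose, one far outside the tested range
mean, var = gp_posterior(x_train, y_train, x_test)
```

At the tested dose the posterior variance is small, while at the distant dose it returns to roughly the prior variance (`signal_var`), which is the behaviour described in the preceding paragraph. The Cholesky factorisation is used instead of an explicit matrix inverse for numerical stability.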

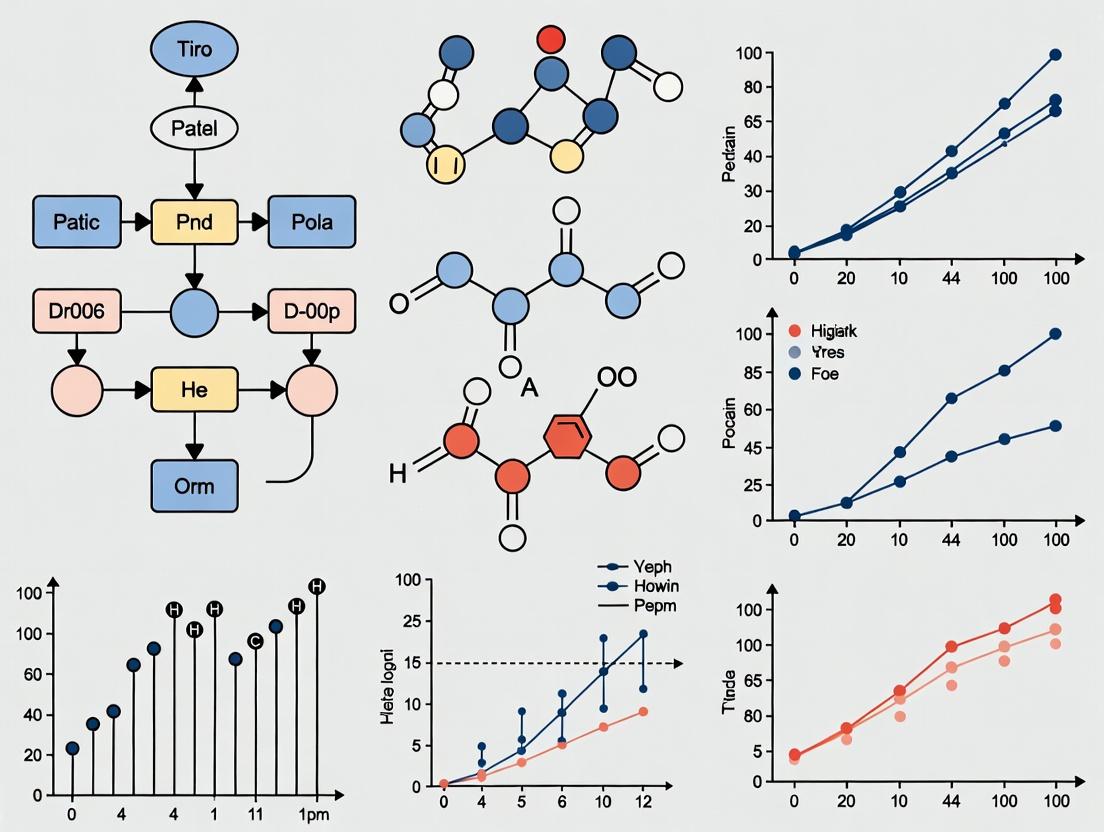

Diagram: Gaussian Process Regression Workflow for Dose-Response

Experimental Data & Comparative Analysis

To illustrate the practical importance, we analyze a typical in vitro cytotoxicity assay dataset (simulated based on current literature trends) for two candidate compounds, A and B. The data includes technical replicates across three independent experiments.

Table 1: Point Estimates vs. Uncertainty-Aware Estimates for Candidate Compounds

| Parameter | Compound | 4PL Point Estimate (CI from Bootstrapping) | GP Posterior Mean (95% Credible Interval) | Key Insight |

|---|---|---|---|---|

| IC50 (nM) | A | 12.1 nM (8.5 – 18.3 nM) | 13.5 nM (7.8 – 22.1 nM) | GP interval is wider, better capturing true parameter uncertainty, especially tail risks. |

| IC50 (nM) | B | 11.8 nM (9.1 – 15.2 nM) | 12.2 nM (10.1 – 15.0 nM) | GP interval is more symmetrical and reflects consistent replicate data. |

| Hill Slope | A | 1.2 (0.9 – 1.5) | 1.3 (0.7 – 2.1) | GP reveals greater uncertainty in curve steepness, missed by 4PL. |

| Predicted Response at 1 nM | A | 8% Inhibition | 9% Inhibition (2% – 18%) | GP provides a crucial predictive uncertainty interval for low-dose extrapolation. |

| Therapeutic Index (vs. Target EC50) | A | 45 | 38 (22 – 65) | The point estimate overstates precision; the credible interval shows a real risk of TI < 25. |

Table 2: Key Research Reagent Solutions for Dose-Response Assays

| Reagent / Material | Function in Dose-Response Analysis | Key Consideration for Uncertainty |

|---|---|---|

| CellTiter-Glo 2.0 (ATP Quantitation) | Measures cell viability/cytotoxicity for IC50 determination. | Luminescence signal variance contributes to heteroscedastic noise; GP kernels can model this. |

| FLIPR Calcium 5 Dye | Measures GPCR activation or ion channel flux for EC50 determination. | Kinetic readouts introduce temporal variance; time-series GPs can model dose-response dynamics. |

| Compound Library in DMSO | Source of dose gradients. | Liquid handling precision for serial dilution is a major source of input (dose) uncertainty, often unaccounted for. |

| 384-Well Assay Plates | Platform for high-throughput screening. | Edge effects and plate-to-plate variability are structured noise; hierarchical GPs can isolate this variance. |

| qPCR Reagents (e.g., TaqMan) | Quantifies gene expression changes (e.g., biomarker induction). | High cycle threshold (Ct) variance at low expression levels dramatically amplifies response uncertainty in log space. |

Detailed Experimental Protocol for Uncertainty-Aware Analysis

Protocol: High-Throughput Viability Assay with Integrated GP Analysis

1. Experimental Design & Plate Layout:

- Use a randomized plate layout so that positional effects (e.g., edge effects) are not confounded with dose.

- Include a minimum of 10 dose points per compound, spaced logarithmically (e.g., half-log intervals).

- Use 8 technical replicates per dose to robustly estimate within-plate variance.

- Repeat the entire experiment across 3 independent days (biological replicates) to estimate between-experiment variance.

2. Data Acquisition:

- Treat cells with compound dilution series for 72 hours.

- Measure viability using CellTiter-Glo 2.0 according to the manufacturer's instructions.

- Record raw luminescence values for every well.

3. Preprocessing & Normalization:

- Normalize raw values to the median of on-plate vehicle (0%) and cytotoxicity (100%) controls.

- Do not aggregate replicates at this stage. Maintain the full hierarchical structure: [Experiment, Plate, Dose, Well].

4. Gaussian Process Modeling (Implementation Outline):

* Model Specification: Use a hierarchical GP model. The core response function f(dose) is drawn from a GP prior with a Matérn 5/2 kernel. The observed data is modeled as y = f(dose) + g(experiment) + h(plate) + ε, where g and h are random effect terms, and ε is i.i.d. noise.

* Inference: Perform Hamiltonian Monte Carlo (HMC) sampling (e.g., using Stan or Pyro; GPyTorch models can be sampled through its Pyro integration) to obtain the posterior distribution of all parameters and the latent function f.

* Derived Parameters: From each posterior sample of f, calculate the EC50/IC50 (dose where f(dose) = 50), Hill slope, and Emax. The distribution of these values across samples forms their direct posterior credible intervals.
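
The "derived parameters" step can be sketched as follows: for each sampled curve, locate the dose at which it crosses 50 and summarise the resulting collection. This is only an illustration. The posterior draws are replaced here by hypothetical stand-in curves (sigmoids with jittered midpoint and slope), and `crossing_dose` is an assumed helper name; in a real analysis the rows of `samples` would come from the fitted GP.

```python
import numpy as np

def crossing_dose(log_dose, curve, level=50.0):
    """Log-dose where a sampled curve first crosses `level`.

    Uses linear interpolation between grid points; returns NaN when the
    sample never crosses the level inside the grid.
    """
    above = curve >= level
    idx = np.flatnonzero(above[1:] != above[:-1])
    if idx.size == 0:
        return np.nan
    i = idx[0]
    x0, x1 = log_dose[i], log_dose[i + 1]
    y0, y1 = curve[i], curve[i + 1]
    return x0 + (level - y0) * (x1 - x0) / (y1 - y0)

rng = np.random.default_rng(0)
log_dose = np.linspace(-3, 3, 601)

# Stand-in for posterior draws of f (NOT real GP output): 500 sigmoid curves
mids = rng.normal(0.0, 0.15, size=500)
slopes = rng.normal(1.3, 0.1, size=500)
samples = 100.0 / (1.0 + 10 ** (-slopes[:, None] * (log_dose[None, :] - mids[:, None])))

# One IC50 per sampled curve, then a median and 95% credible interval
ic50 = np.array([crossing_dose(log_dose, s) for s in samples])
lo, med, hi = np.nanpercentile(ic50, [2.5, 50, 97.5])
```

Samples that never reach 50% yield NaN rather than being silently dropped, so the fraction of non-crossing curves can be reported alongside the interval.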

Diagram: Hierarchical GP Model Structure for Multi-Experiment Data

Critical Interpretations and Applications

The transition from point estimates to uncertainty distributions enables more nuanced interpretations:

- Probabilistic Lead Ranking: Compounds can be ranked by the probability that one has a lower IC50 or higher therapeutic index than another, rather than by point estimate comparisons.

- Risk-Informed Go/No-Go Decisions: A compound with a promising mean EC50 but a wide 95% credible interval that overlaps with a toxic range represents a higher development risk than one with a slightly less potent but more precisely estimated curve.

- Optimal Experimental Design: The posterior predictive variance from a pilot GP model can guide the selection of the most informative next doses to test, efficiently reducing uncertainty (Active Learning).
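
Probabilistic lead ranking reduces to a simple Monte Carlo comparison once posterior samples of the parameter are available. The sketch below assumes independent posterior draws for the two compounds. The log-normal samples are hypothetical placeholders loosely centred on the illustrative IC50 values in Table 1, and `prob_lower` is an assumed helper name.

```python
import numpy as np

def prob_lower(samples_a, samples_b):
    """Monte Carlo estimate of P(a < b) for independent posterior draws."""
    a = np.asarray(samples_a)[:, None]
    b = np.asarray(samples_b)[None, :]
    return float(np.mean(a < b))   # fraction of all (a, b) pairs with a < b

rng = np.random.default_rng(1)
# Placeholder posterior samples of IC50 in nM (not fitted to any data)
ic50_a = rng.lognormal(mean=np.log(13.5), sigma=0.25, size=2000)
ic50_b = rng.lognormal(mean=np.log(12.2), sigma=0.10, size=2000)

# Probability that compound A is more potent (has the lower IC50) than B
p_a_more_potent = prob_lower(ic50_a, ic50_b)
```

A probability near 0.5 signals that the data cannot separate the two compounds, even when their point estimates differ.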

Dose-response analysis must evolve beyond point estimates. In drug discovery, where decisions are resource-intensive and carry significant risk, ignoring uncertainty is a fundamental oversight. Gaussian Process regression provides a rigorous, flexible statistical framework that seamlessly integrates with hierarchical experimental data to quantify and propagate uncertainty from raw measurements to final derived parameters. Adopting this uncertainty-aware paradigm leads to more resilient conclusions, better candidate prioritization, and ultimately, a more efficient and predictive development pipeline.

Within the framework of Gaussian Process (GP) regression for dose-response uncertainty research, understanding GPs as distributions over functions is foundational. This perspective is critical for quantifying uncertainty in pharmacological responses, where predicting the effect of a drug across a continuum of doses—with inherent biological variability and measurement noise—is paramount. A GP provides a Bayesian non-parametric approach to this regression problem, offering a full probabilistic description of possible response functions consistent with observed data.

Mathematical Foundation

A Gaussian Process is defined as a collection of random variables, any finite number of which have a joint Gaussian distribution. It is completely specified by its mean function ( m(\mathbf{x}) ) and covariance (kernel) function ( k(\mathbf{x}, \mathbf{x}') ):

[ f(\mathbf{x}) \sim \mathcal{GP}(m(\mathbf{x}), k(\mathbf{x}, \mathbf{x}')) ]

For dose-response modeling, ( \mathbf{x} ) typically represents dose (often log-transformed), and ( f(\mathbf{x}) ) represents the latent response function. The prior on functions is directly defined by this mean and covariance. The kernel function encodes assumptions about function properties such as smoothness, periodicity, or trends, which are central to realistic biological response curves.
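
To make the idea of a prior over functions concrete, the sketch below draws sample curves from a zero-mean GP prior at two length-scales. The squared-exponential kernel, the jitter term, and the helper names (`rbf_kernel`, `sample_prior`, `roughness`) are assumptions chosen for illustration.

```python
import numpy as np

def rbf_kernel(x, length_scale, signal_var=1.0):
    """Squared-exponential covariance matrix on a 1-D grid."""
    d2 = (x[:, None] - x[None, :]) ** 2
    return signal_var * np.exp(-0.5 * d2 / length_scale**2)

def sample_prior(x, length_scale, n_draws, rng, jitter=1e-6):
    """Draw functions f ~ GP(0, k) evaluated on grid x, one per row."""
    K = rbf_kernel(x, length_scale) + jitter * np.eye(len(x))  # jitter keeps K positive definite
    L = np.linalg.cholesky(K)
    return (L @ rng.standard_normal((len(x), n_draws))).T

def roughness(draws):
    """Mean absolute change between neighbouring grid points."""
    return float(np.mean(np.abs(np.diff(draws, axis=1))))

rng = np.random.default_rng(0)
log_dose = np.linspace(-3, 3, 50)
smooth = sample_prior(log_dose, length_scale=2.0, n_draws=200, rng=rng)
wiggly = sample_prior(log_dose, length_scale=0.3, n_draws=200, rng=rng)
```

Comparing `roughness(smooth)` with `roughness(wiggly)` shows how the kernel's length-scale alone changes which response curves the prior considers plausible, before any data are observed.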

From Prior to Posterior: The Bayesian Update

The core Bayesian inference proceeds as follows:

- Prior Distribution: ( \mathbf{f}_* \sim \mathcal{N}(\mathbf{0}, K(X_*, X_*)) ), representing beliefs over function values ( \mathbf{f}_* ) at test doses ( X_* ) before seeing data.

- Likelihood: Observed response data ( \mathbf{y} ) at training doses ( X ) are assumed noisy: ( \mathbf{y} = \mathbf{f} + \epsilon ), with ( \epsilon \sim \mathcal{N}(0, \sigma_n^2I) ).

- Joint Distribution: The GP prior implies a joint distribution between observed and predicted values: [ \begin{bmatrix} \mathbf{y} \\ \mathbf{f}_* \end{bmatrix} \sim \mathcal{N}\left( \mathbf{0}, \begin{bmatrix} K(X, X) + \sigma_n^2I & K(X, X_*) \\ K(X_*, X) & K(X_*, X_*) \end{bmatrix} \right) ]

- Posterior Distribution: Conditioning on the data yields the key predictive equations: [ \begin{aligned} \mathbf{f}_* | X, \mathbf{y}, X_* &\sim \mathcal{N}(\bar{\mathbf{f}}_*, \text{cov}(\mathbf{f}_*)) \\ \bar{\mathbf{f}}_* &= K(X_*, X)[K(X, X) + \sigma_n^2I]^{-1}\mathbf{y} \\ \text{cov}(\mathbf{f}_*) &= K(X_*, X_*) - K(X_*, X)[K(X, X) + \sigma_n^2I]^{-1}K(X, X_*) \end{aligned} ] The posterior is also a GP, providing predictive means and variances at every dose level.

Experimental Protocols in Dose-Response Research

GP modeling is applied to data generated from standard and novel pharmacological assays.

Protocol 1: In Vitro Dose-Response Curve Generation (e.g., Cell Viability Assay)

- Cell Plating: Seed cells in a 96-well plate at an optimized density. Include control wells (media only, vehicle control, positive control for death).

- Compound Treatment: Prepare a serial dilution (e.g., 1:3 or 1:10) of the test compound across a biologically relevant range (e.g., 1 nM to 100 µM). Add to triplicate wells.

- Incubation: Incubate plate for predetermined duration (e.g., 72 hours) at 37°C, 5% CO₂.

- Viability Measurement: Add a cell viability reagent (e.g., CellTiter-Glo). Shake, incubate, and measure luminescence on a plate reader.

- Data Preprocessing: Normalize raw luminescence values: (Value - Mean Positive Control) / (Mean Vehicle Control - Mean Positive Control) * 100%. Calculate mean and standard error for each dose.

- GP Regression: Input log-transformed doses and normalized response (%) into the GP model. Use a composite kernel (e.g., Radial Basis Function + White Noise) to capture smooth response and measurement error.
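
The normalization formula in the preprocessing step can be written out directly. The sketch below uses only the Python standard library. The luminescence counts, doses, and helper names (`normalize`, `summarize`) are hypothetical and serve only to show the arithmetic.

```python
from math import log10, sqrt
from statistics import mean, stdev

def normalize(raw, vehicle_mean, positive_mean):
    """Percent response: vehicle control maps to 100, positive control to 0."""
    return (raw - positive_mean) / (vehicle_mean - positive_mean) * 100.0

def summarize(dose_to_wells, vehicle_wells, positive_wells):
    """Return (log10 dose, mean %, standard error) per dose, sorted by dose."""
    v, p = mean(vehicle_wells), mean(positive_wells)
    rows = []
    for dose, wells in sorted(dose_to_wells.items()):
        pct = [normalize(w, v, p) for w in wells]
        sem = stdev(pct) / sqrt(len(pct))      # requires at least two replicates
        rows.append((log10(dose), mean(pct), sem))
    return rows

# Hypothetical raw luminescence counts; doses in molar
vehicle = [10000.0, 10200.0, 9800.0]
positive = [500.0, 520.0, 480.0]
wells = {1e-9: [9900.0, 10100.0, 10000.0], 1e-6: [5200.0, 5300.0, 5250.0]}
table = summarize(wells, vehicle, positive)
```

The per-dose means and standard errors are convenient for plotting, but the individual normalized wells can equally be passed to the GP so that replicate scatter informs the noise estimate.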

Protocol 2: In Vivo Pharmacokinetic/Pharmacodynamic (PK/PD) Study

- Animal Dosing: Administer test compound to cohorts of animals (e.g., n=6/group) at defined dose levels via the intended route (oral, IV, etc.).

- Serial Sampling: Collect blood samples at multiple time points post-dose from each animal.

- Bioanalysis: Quantify compound concentration in plasma (PK) and a relevant biomarker or efficacy readout (PD) using LC-MS/MS or ELISA.

- Data Structuring: Align PK and PD data per animal and dose group. PD responses may be modeled as a function of either time or estimated drug concentration at the effect site.

- Hierarchical GP Modeling: Employ a multi-task or hierarchical GP to model shared trends across dose groups while accounting for individual animal variability. The kernel can incorporate structure across the dose and time dimensions.

Table 1: Comparison of Common Covariance Kernels for Dose-Response Modeling

| Kernel Name | Mathematical Form | Hyperparameters | Function Properties | Use Case in Dose-Response |
|---|---|---|---|---|
| Radial Basis Function (RBF) | ( k(x, x') = \sigma_f^2 \exp\left(-\frac{(x - x')^2}{2l^2}\right) ) | Length-scale (l), variance (\sigma_f^2) | Infinitely smooth, stationary | Default for modeling smooth monotonic or biphasic responses. |
| Matérn 3/2 | ( k(x, x') = \sigma_f^2 \left(1 + \frac{\sqrt{3} \lvert x-x' \rvert}{l}\right)\exp\left(-\frac{\sqrt{3} \lvert x-x' \rvert}{l}\right) ) | Length-scale (l), variance (\sigma_f^2) | Once differentiable, less smooth than RBF | Captures responses with potential sharper transitions or local variation. |
| Linear | ( k(x, x') = \sigma_b^2 + \sigma_v^2(x - c)(x' - c) ) | Offset (c), variances (\sigma_b^2, \sigma_v^2) | Non-stationary, linear functions | Incorporating a linear trend component, often added to other kernels. |
| White Noise | ( k(x, x') = \sigma_n^2 \delta_{xx'} ) | Noise variance (\sigma_n^2) | Uncorrelated noise | Added to diagonal to model measurement error. |
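
The four kernels in the table translate almost line for line into NumPy. The sketch below is illustrative only: the function and argument names (`sf2`, `sb2`, `sv2`, `sn2` for the variances) are assumptions, and inputs are taken to be 1-D arrays of (log) doses.

```python
import numpy as np

def rbf(x, y, l=1.0, sf2=1.0):
    """Radial basis function (squared-exponential) kernel."""
    r = np.abs(x[:, None] - y[None, :])
    return sf2 * np.exp(-0.5 * (r / l) ** 2)

def matern32(x, y, l=1.0, sf2=1.0):
    """Matern kernel with nu = 3/2."""
    s = np.sqrt(3.0) * np.abs(x[:, None] - y[None, :]) / l
    return sf2 * (1.0 + s) * np.exp(-s)

def linear(x, y, c=0.0, sb2=1.0, sv2=1.0):
    """Linear kernel with offset c."""
    return sb2 + sv2 * (x[:, None] - c) * (y[None, :] - c)

def white(x, y, sn2=1.0):
    """White-noise kernel: sn2 where inputs coincide, zero elsewhere.

    Note: matching on input value treats replicate wells at the same dose
    as sharing noise; in practice the noise term is usually added per
    observation, i.e. to the diagonal of the training covariance.
    """
    return sn2 * (x[:, None] == y[None, :]).astype(float)

x = np.array([0.0, 1.0, 2.0])
K_rbf, K_m32 = rbf(x, x), matern32(x, x)
K_lin, K_wn = linear(x, x), white(x, x)
```

At unit distance and unit length-scale the Matérn 3/2 covariance is lower than the RBF covariance, reflecting its rougher sample paths.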

Table 2: Example GP Model Fit to Synthetic Dose-Response Data

Data: Log(Dose) from -3 to 3, True EC50 = 0, Max Effect = 100%, added Gaussian noise (σ=5%). Model: RBF + White Noise kernel.

| Metric | Value (Mean ± Std) | Description |

|---|---|---|

| Log Marginal Likelihood | -15.2 ± 0.5 | Model evidence; used for kernel selection. |

| Estimated Noise Level (σ_n) | 4.8% ± 0.3% | Inferred measurement noise. |

| Predicted EC50 (Log) | -0.05 ± 0.15 | Log-dose producing 50% effect. |

| Max Effect (E_max) | 98.5% ± 3.2% | Plateau response. |

Visualizing the Gaussian Process Framework

Title: The Gaussian Process Bayesian Inference Workflow

Title: GP Defines Distributions Over Functions

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Dose-Response Experiments and GP Analysis

| Item / Reagent | Function in Experiment | Relevance to GP Modeling |

|---|---|---|

| CellTiter-Glo 3D | Measures ATP content as a proxy for viable cell number in 3D cultures. | Provides continuous viability data (y) for modeling against log(dose) (x). Critical data source. |

| DMSO (Cell Culture Grade) | Universal solvent for water-insoluble compounds. Enables serial dilution. | Vehicle control data defines baseline response (0% effect) for normalization of y. |

| Staurosporine | Potent, broad-spectrum kinase inhibitor that induces apoptosis; used as a positive control for cell death. | Defines the maximum effect (100% death) for response normalization, anchoring the GP model's scale. |

| 384-Well Assay Plates | Enable high-throughput screening of multiple compounds across a full dose-response matrix. | Generates the large, structured datasets ideal for robust GP hyperparameter learning and model validation. |

| GraphPad Prism | Industry-standard software for initial curve fitting (e.g., 4PL). | Provides initial parameter estimates (EC50, Hill slope) that can inform GP prior mean functions. |

| GPy / GPflow (Python Libs) | Specialized libraries for flexible GP model construction and inference. | Enables implementation of custom kernels (e.g., RBF+Linear) and hierarchical models for complex dose-response data. |

| Hamilton Microlab STAR | Automated liquid handler for precise serial dilution and reagent dispensing. | Minimizes technical noise (reduces σ_n), leading to cleaner data and tighter posterior credible intervals from the GP. |

Within the broader thesis on Gaussian Process (GP) regression for dose-response uncertainty research, the covariance function, or kernel, is the fundamental component that encodes all prior assumptions about the form and smoothness of the response function. This whitepaper details how kernel selection and composition directly embed pharmacological and toxicological principles into probabilistic models, enabling robust quantification of uncertainty in dose-response relationships critical to drug development.

Mathematical Foundation: Kernels as Prior Assumptions

A Gaussian Process is defined as a collection of random variables, any finite number of which have a joint Gaussian distribution. It is completely specified by its mean function (m(\mathbf{x})) and covariance function (k(\mathbf{x}, \mathbf{x}')).

[ f(\mathbf{x}) \sim \mathcal{GP}(m(\mathbf{x}), k(\mathbf{x}, \mathbf{x}')) ]

For dose-response modeling, (x) typically represents dose (often log-transformed), and (f(x)) represents the biological response. The kernel (k) dictates the covariance between responses at doses (x) and (x'), thereby controlling the smoothness, periodicity, and trends of the function samples drawn from the prior.

Kernel Families & Their Dose-Response Interpretations

Different kernel families encode distinct structural assumptions about the underlying dose-response curve.

Stationary Kernels

These kernels depend only on the distance between doses, (r = |x - x'|), assuming homogeneity across the dose range.

- Squared Exponential (RBF): (k_{\text{SE}}(x, x') = \sigma_f^2 \exp\left(-\frac{(x - x')^2}{2\ell^2}\right))

- Assumption: Infinitely differentiable, leading to very smooth response functions. The length-scale (\ell) determines the wiggliness; a large (\ell) assumes a slowly varying response, suitable for monotonic or sigmoidal curves.

- Matérn Class: (k_{\text{Matérn}}(x, x') = \sigma_f^2 \frac{2^{1-\nu}}{\Gamma(\nu)} \left(\frac{\sqrt{2\nu}r}{\ell}\right)^\nu K_\nu \left(\frac{\sqrt{2\nu}r}{\ell}\right))

- Assumption: Less smooth than RBF. The parameter (\nu) controls differentiability. (\nu=1.5) or (2.5) are common, allowing for more flexible, realistically rough response curves where biological variability is expected.

Non-Stationary & Composite Kernels

Real dose-response relationships often require more complex assumptions.

- Linear Kernel: (k_{\text{Lin}}(x, x') = \sigma_b^2 + \sigma_v^2 (x - c)(x' - c))

- Assumption: The response has an underlying linear trend with dose.

- Periodic Kernel: (k_{\text{Per}}(x, x') = \sigma_f^2 \exp\left(-\frac{2\sin^2(\pi |x-x'| / p)}{\ell^2}\right))

- Assumption: Cyclical patterns in response (e.g., circadian rhythms in toxicity studies).

- Composite Kernels: Kernels can be combined additively or multiplicatively to build complex priors.

- Example: RBF + Linear encodes an assumption of a smooth deviation from a global linear trend.

- Example: RBF * Periodic encodes an assumption of a periodic pattern whose amplitude is modulated by a smooth function.
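
Kernel composition can be sketched with plain closures: sums and products of valid kernels are again valid kernels, which the example checks numerically through the smallest eigenvalue of the resulting covariance matrix. The factory functions (`rbf`, `linear`, `periodic`) and combinators (`add`, `mul`) are hypothetical names, and the hyperparameter values are arbitrary.

```python
import numpy as np

def rbf(l=1.0, sf2=1.0):
    return lambda x, y: sf2 * np.exp(-0.5 * ((x[:, None] - y[None, :]) / l) ** 2)

def linear(c=0.0, sb2=1.0, sv2=1.0):
    return lambda x, y: sb2 + sv2 * (x[:, None] - c) * (y[None, :] - c)

def periodic(p=1.0, l=1.0, sf2=1.0):
    return lambda x, y: sf2 * np.exp(
        -2.0 * np.sin(np.pi * np.abs(x[:, None] - y[None, :]) / p) ** 2 / l**2
    )

def add(k1, k2):
    """Additive composition: k(x, y) = k1(x, y) + k2(x, y)."""
    return lambda x, y: k1(x, y) + k2(x, y)

def mul(k1, k2):
    """Multiplicative composition: k(x, y) = k1(x, y) * k2(x, y)."""
    return lambda x, y: k1(x, y) * k2(x, y)

x = np.linspace(-3, 3, 25)
k_trend = add(rbf(l=1.0), linear())                      # smooth deviation from a linear trend
k_locally_periodic = mul(rbf(l=2.0), periodic(p=1.5))    # periodic pattern with smooth envelope

# Smallest eigenvalue of each covariance matrix (should be >= 0 up to round-off)
min_eigs = [float(np.linalg.eigvalsh(k(x, x)).min())
            for k in (k_trend, k_locally_periodic)]
```

Libraries such as GPy, GPflow, and scikit-learn expose the same idea through overloaded `+` and `*` operators on their kernel objects.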

Table 1: Kernel Selection Guide for Dose-Response Modeling

| Kernel Type | Mathematical Form | Encoded Assumption | Typical Use Case in Dose-Response |

|---|---|---|---|

| Squared Exponential | (k = \sigma_f^2 \exp\left(-\frac{r^2}{2\ell^2}\right)) | Smooth, steady response. | Initial screening for monotonic efficacy. |

| Matérn (ν=1.5) | (k = \sigma_f^2 (1 + \frac{\sqrt{3}r}{\ell}) \exp(-\frac{\sqrt{3}r}{\ell})) | Differentiable, moderately rough. | General-purpose toxicity (e.g., enzyme activity). |

| Linear | (k = \sigma_b^2 + \sigma_v^2 (x - c)(x' - c)) | Underlying linear trend. | Baseline trend in cell proliferation. |

| Periodic | (k = \sigma_f^2 \exp\left(-\frac{2\sin^2(\pi r/p)}{\ell^2}\right)) | Oscillatory behavior. | Chronopharmacology studies. |

| Composite (RBF+Linear) | (k = k_{\text{RBF}} + k_{\text{Lin}}) | Smooth deviation from linearity. | Efficacy with linear baseline drift. |

Experimental Protocols: Inferring Kernel Hyperparameters

The kernel's hyperparameters (e.g., (\ell), (\sigma_f), (p)) are not assumed a priori but learned from data, typically via Maximum Marginal Likelihood or Markov Chain Monte Carlo (MCMC).

Protocol: Hyperparameter Optimization via Marginal Likelihood

Objective: Find the set of kernel hyperparameters (\boldsymbol{\theta}) that best explain the observed dose-response data (\mathbf{y}) at doses (\mathbf{X}).

- Construct the Covariance Matrix: Compute (\mathbf{K}_{XX}) using the chosen kernel form for all pairs of training doses.

- Define the Marginal Likelihood: For GP regression with Gaussian noise variance (\sigma_n^2): [ \log p(\mathbf{y}|\mathbf{X}, \boldsymbol{\theta}) = -\frac{1}{2}\mathbf{y}^T (\mathbf{K}_{XX} + \sigma_n^2\mathbf{I})^{-1}\mathbf{y} - \frac{1}{2}\log|\mathbf{K}_{XX} + \sigma_n^2\mathbf{I}| - \frac{n}{2}\log 2\pi ]

- Optimize: Use a gradient-based optimizer (e.g., L-BFGS-B) to maximize (\log p(\mathbf{y}|\mathbf{X}, \boldsymbol{\theta})) with respect to (\boldsymbol{\theta}).

- Validate: Assess kernel choice via cross-validation or by comparing the predictive log-likelihood on held-out test data.
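
The marginal-likelihood protocol above can be sketched with NumPy alone. Two simplifications are assumed here: the kernel is a squared-exponential with fixed signal and noise variances, and a coarse grid search over the length-scale stands in for the gradient-based L-BFGS-B optimizer named in the protocol. The synthetic data and the function name are hypothetical.

```python
import numpy as np

def log_marginal_likelihood(x, y, length_scale, signal_var, noise_var):
    """log p(y | X, theta) for a zero-mean GP with an RBF kernel."""
    n = len(x)
    K = signal_var * np.exp(-0.5 * ((x[:, None] - x[None, :]) / length_scale) ** 2)
    L = np.linalg.cholesky(K + noise_var * np.eye(n))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    return (-0.5 * y @ alpha                   # data-fit term
            - np.sum(np.log(np.diag(L)))       # equals 0.5 * log|K + sigma_n^2 I|
            - 0.5 * n * np.log(2.0 * np.pi))   # normalising constant

# Synthetic, centred dose-response-like data (illustrative only)
rng = np.random.default_rng(0)
x = np.linspace(-3, 3, 30)
y = np.tanh(x) + rng.normal(0.0, 0.1, size=x.size)

# Grid search over the length-scale as a stand-in for L-BFGS-B
grid = np.logspace(-1, 1, 41)
lml = np.array([log_marginal_likelihood(x, y, l, 1.0, 0.01) for l in grid])
best_length_scale = grid[np.argmax(lml)]
```

In practice all hyperparameters would be optimized jointly, typically on a log scale and from several starting points, because the marginal likelihood surface can have multiple local optima.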

Protocol: Bayesian Inference of Hyperparameters via MCMC

Objective: Obtain a full posterior distribution over hyperparameters, capturing epistemic uncertainty in the kernel itself.

- Specify Priors: Place weakly informative priors (e.g., Half-Cauchy for length-scales, variances) on hyperparameters (\boldsymbol{\theta}).

- Sample from Posterior: Use a sampler like Hamiltonian Monte Carlo (HMC) or NUTS to draw samples from (p(\boldsymbol{\theta} | \mathbf{y}, \mathbf{X}) \propto p(\mathbf{y}|\mathbf{X}, \boldsymbol{\theta}) p(\boldsymbol{\theta})).

- Propagate Uncertainty: Make predictions using each sampled (\boldsymbol{\theta}), resulting in a mixture of GPs that accounts for kernel uncertainty.
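
The sampling step can be illustrated with a deliberately simple random-walk Metropolis sampler. This is a swapped-in technique, not HMC or NUTS, chosen because it fits in a few lines without a probabilistic programming library. The Half-Cauchy scales (5, 2, 1) follow the prior table in this section; the RBF kernel, the synthetic data, the step size, and all function names are assumptions.

```python
import numpy as np

SCALES = np.array([5.0, 2.0, 1.0])   # Half-Cauchy scales for (ell, sigma_f, sigma_n^2)

def log_posterior(log_theta, x, y):
    """Unnormalised log posterior over log(ell, sigma_f, sigma_n^2)."""
    ell, sf, sn2 = np.exp(log_theta)
    n = len(x)
    K = sf**2 * np.exp(-0.5 * ((x[:, None] - x[None, :]) / ell) ** 2)
    try:
        L = np.linalg.cholesky(K + (sn2 + 1e-8) * np.eye(n))
    except np.linalg.LinAlgError:
        return -np.inf                               # reject numerically singular proposals
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    log_lik = (-0.5 * y @ alpha - np.sum(np.log(np.diag(L)))
               - 0.5 * n * np.log(2.0 * np.pi))
    log_prior = -np.sum(np.log1p((np.exp(log_theta) / SCALES) ** 2))
    return log_lik + log_prior + np.sum(log_theta)   # last term: Jacobian of the log transform

def metropolis(x, y, n_steps=2000, step=0.15, seed=0):
    """Random-walk Metropolis in log space; returns samples of theta, one per row."""
    rng = np.random.default_rng(seed)
    current = np.zeros(3)                            # start at theta = (1, 1, 1)
    current_lp = log_posterior(current, x, y)
    chain = np.empty((n_steps, 3))
    for i in range(n_steps):
        proposal = current + step * rng.standard_normal(3)
        lp = log_posterior(proposal, x, y)
        if np.log(rng.uniform()) < lp - current_lp:  # accept with probability min(1, ratio)
            current, current_lp = proposal, lp
        chain[i] = np.exp(current)
    return chain

rng = np.random.default_rng(1)
x = np.linspace(-3, 3, 25)
y = np.tanh(x) + rng.normal(0.0, 0.1, size=x.size)   # synthetic data, true noise variance 0.01
chain = metropolis(x, y)
posterior = chain[1000:]                             # discard the first half as burn-in
```

Each retained row of `posterior` defines one GP; averaging predictions over the rows gives the mixture of GPs described in the final step. For real analyses a gradient-based sampler with convergence diagnostics is preferable.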

Table 2: Typical Hyperparameter Priors for Dose-Response Kernels

| Hyperparameter | Description | Recommended Prior | Rationale |
|---|---|---|---|
| Length-scale ((\ell)) | Controls function wiggliness. | Half-Cauchy(scale=5) | Prevents unrealistically short or long length-scales. |
| Output Scale ((\sigma_f)) | Controls vertical scale of function. | Half-Cauchy(scale=2) | Allows for varying response magnitudes. |
| Noise Variance ((\sigma_n^2)) | Measurement/biological noise. | Half-Cauchy(scale=1) | Robust to varying noise levels. |
| Period ((p)) | Period of oscillation. | LogNormal(log(desired_period), 1) | If prior knowledge on period exists. |

Visualizing Kernel Assumptions and GP Workflow

Title: GP Dose-Response Modeling Workflow.

Title: Kernel Functions Determine GP Prior Characteristics.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for GP Dose-Response Research

| Item / Reagent | Supplier / Library Examples | Function in GP Modeling |

|---|---|---|

| GP Software Library | GPy (Python), GPflow (TensorFlow), Stan (Probabilistic), Scikit-learn | Provides core algorithms for kernel definition, hyperparameter inference, and prediction. |

| MCMC Sampler | PyMC3, Stan, emcee | Enables full Bayesian inference of kernel hyperparameters and model comparison. |

| Optimization Suite | SciPy (L-BFGS-B), Adam/Optimizers in PyTorch/TensorFlow | Finds maximum marginal likelihood estimates for kernel parameters. |

| Bayesian Optimization Library | BoTorch, GPyOpt, Ax | For optimal experimental design (e.g., selecting next dose to test). |

| In-Vitro Assay Kits | CellTiter-Glo (Promega), Caspase-3/7 Assay | Generates quantitative dose-response data (viability, apoptosis) for GP model training. |

| High-Throughput Screening Systems | PerkinElmer EnVision, BioTek Cytation | Produces large-scale dose-response matrices essential for learning complex kernels. |

This whitepaper examines the application of Bayesian inference for refining dose-response models, framed within a broader research thesis on Gaussian Process (GP) regression for uncertainty quantification. In drug development, the dose-response curve is central to identifying therapeutic efficacy and safety margins. Traditional frequentist methods provide point estimates but often fail to fully characterize uncertainty, especially with limited data. Bayesian inference, coupled with GP regression, offers a robust probabilistic framework that systematically incorporates prior knowledge and experimental data to yield a posterior distribution, fully capturing the uncertainty in the dose-response relationship.

Foundational Principles

The Bayesian Paradigm

Bayesian inference updates beliefs about an unknown parameter θ (e.g., EC₅₀, Hill coefficient) by combining prior knowledge with observed data. The core relation is Posterior ∝ Likelihood × Prior, i.e. ( p(θ | D) \propto p(D | θ) p(θ) ). In the context of dose-response modeling, the posterior distribution ( p(θ | D) ) over curve parameters given data ( D ) quantifies all uncertainty that remains after observing the experiment.

Gaussian Process Regression as a Bayesian Nonparametric Model

A GP defines a prior over functions, directly modeling the dose-response curve ( f(x) ) without assuming a fixed parametric form (e.g., 4PL). It is fully specified by a mean function ( m(x) ) and a covariance kernel function ( k(x, x') ): ( f(x) \sim \mathcal{GP}(m(x), k(x, x')) ). The kernel (e.g., Radial Basis Function) dictates the smoothness and shape of possible curves. Observing data ( D = \{ (x_i, y_i) \} ) leads to a posterior GP, whose mean provides the best estimate and whose variance provides a credible interval at any dose ( x_* ).

Table 1: Comparison of Modeling Approaches for Dose-Response Data

| Aspect | Traditional 4PL (Frequentist) | Bayesian 4PL | Gaussian Process Regression |

|---|---|---|---|

| Parameter Estimates | Point estimates (MLE) with confidence intervals. | Posterior distributions (full uncertainty). | Posterior over the entire function. |

| Uncertainty Quantification | Asymptotic CIs; may be poor with small n. | Full posterior credible intervals. | Joint credible bands across all doses. |

| Prior Incorporation | Not possible. | Explicit via prior distributions. | Explicit via mean/kernel priors. |

| Handling Sparse Data | Prone to overfitting or failure. | Improved stability with informative priors. | Flexible, kernel-dependent. |

| Computational Demand | Low. | Moderate to High (MCMC/VI). | High (matrix inversions). |

Table 2: Example Posterior Parameter Summaries from a Bayesian 4PL Analysis

| Parameter | Prior Distribution | Posterior Mean | 95% Credible Interval |

|---|---|---|---|

| Bottom (α) | Normal(0, 5) | 0.21 | [-0.15, 0.58] |

| Top (β) | Normal(100, 10) | 98.7 | [95.2, 102.1] |

| EC₅₀ (γ) | LogNormal(1, 1) | 12.3 nM | [8.5, 17.8 nM] |

| Hill Slope (η) | Normal(1, 0.5) | 1.32 | [0.95, 1.72] |

Note: Data simulated for illustrative purposes.

Detailed Experimental Protocol: Bayesian Dose-Response Analysis with GP

This protocol outlines the key steps for implementing Bayesian GP regression for an in vitro cytotoxicity assay.

4.1 Experimental Design & Data Generation

- Cell Line: HEK293 cells.

- Compound: Novel small-molecule inhibitor (Compound X).

- Dose Range: 0.1 nM to 100 µM, 12 concentrations in triplicate (log spacing).

- Assay: CellTiter-Glo viability assay after 72h exposure.

- Control Data: Vehicle (DMSO) for 100% viability, Staurosporine (1 µM) for 0% viability.

- Output: Normalized viability (% of vehicle control).

4.2 Computational & Statistical Analysis Workflow

- Data Preprocessing: Normalize raw luminescence to % viability. Log-transform dose (molar).

- Model Specification:

- Mean Function: ( m(x) = 50 ) (constant, vague prior).

- Covariance Kernel: Radial Basis Function (RBF) + White Noise kernel: ( k(x, x') = σ_f^2 \exp(-\frac{(x-x')^2}{2l^2}) + σ_n^2 δ_{xx'} ).

- Priors: Weakly informative priors on hyperparameters: ( l \sim \text{InvGamma}(3,2), σ_f \sim \text{HalfNormal}(5), σ_n \sim \text{HalfNormal}(1) ).

- Posterior Inference: Use Markov Chain Monte Carlo (MCMC) sampling (e.g., NUTS algorithm via PyMC or Stan) to draw samples from the posterior distribution of the hyperparameters and the latent function ( f ).

- Posterior Prediction: For each MCMC sample, compute the mean and variance of the GP at a dense grid of dose values to generate the posterior mean curve and 95% credible band.

- Derived Metrics: Calculate posterior distributions for key metrics: IC₅₀ (dose where ( f(x) = 50 )), the dose for 90% inhibition (IC₉₀, where ( f(x) = 10 ) on the viability scale), and the area under the curve (AUC).
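
Of the derived metrics, the area under the curve is the simplest to compute per posterior sample. The sketch below applies the trapezoidal rule to each sampled curve and summarises the result. The viability curves are hypothetical stand-ins for real posterior draws, and `auc_trapezoid` is an assumed helper name.

```python
import numpy as np

def auc_trapezoid(log_dose, curves):
    """Trapezoidal area under each sampled curve (one curve per row)."""
    widths = np.diff(log_dose)                          # grid spacing in log10 dose
    heights = 0.5 * (curves[:, 1:] + curves[:, :-1])    # mean height of each trapezoid
    return heights @ widths

rng = np.random.default_rng(0)
log_dose = np.linspace(-10, -4, 61)                     # 0.1 nM to 100 uM, log10 molar

# Stand-in posterior draws of % viability (NOT real GP output)
mids = rng.normal(-7.9, 0.1, size=400)
slopes = rng.normal(1.3, 0.1, size=400)
curves = 100.0 / (1.0 + 10 ** (slopes[:, None] * (log_dose[None, :] - mids[:, None])))

auc = auc_trapezoid(log_dose, curves)
auc_lo, auc_med, auc_hi = np.percentile(auc, [2.5, 50, 97.5])
```

Because the area is computed over log-dose, its units are percent viability times log10 molar; dividing by the width of the dose range gives a mean viability that is easier to compare across assays.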

Bayesian GP Dose-Response Analysis Workflow

Signaling Pathway Diagram

A common pathway for cytotoxic compounds involves DNA damage response and apoptosis.

DNA Damage-Induced Apoptosis Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Dose-Response Experiments

| Item | Function | Example Product/Catalog |

|---|---|---|

| Cell Viability Assay | Quantifies metabolically active cells; primary source of response data. | CellTiter-Glo 3D (Promega, G9683) |

| High-Throughput Screening Plates | Platform for conducting assays with multiple doses and replicates. | Corning 384-well White Round Bottom (3570) |

| Automated Liquid Handler | Ensures precise and reproducible compound serial dilution & dispensing. | Beckman Coulter Biomek i7 |

| DMSO (Cell Culture Grade) | Universal solvent for small-molecule compound libraries. | Sigma-Aldrich (D2650) |

| Reference Cytotoxic Agent | Positive control for assay validation and normalization. | Staurosporine (Sigma, S4400) |

| Statistical Software Library | Implements Bayesian GP regression and MCMC sampling. | PyMC (Python) or rstan (R) |

Gaussian Process (GP) regression provides a robust Bayesian, non-parametric framework for modeling complex relationships, making it uniquely suited for dose-response analysis in pharmacological and toxicological research. Its core advantages lie in its intrinsic ability to quantify prediction uncertainty and its flexibility in modeling non-linear trends without pre-specified functional forms. This allows researchers to make probabilistic predictions about efficacy and toxicity, essential for determining therapeutic windows and informing critical Phase I/II trial decisions. This whitepaper details the technical implementation of these advantages within modern computational biology.

Technical Guide: Quantifying Prediction Uncertainty

In GP regression, uncertainty quantification arises naturally from the posterior predictive distribution. For a set of n observed dose-response pairs D = {X, y}, where X are dose concentrations and y is the biological response (e.g., cell viability, receptor occupancy), the goal is to predict the response y* at a new dose x*.

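The GP is defined by a mean function m(x) and a covariance kernel function k(x, x'). Assuming a prior y ~ GP(0, k(x, x') + σ²ₙI), the joint distribution of the observed responses y and the value y* at the new dose x* is:

[y; y*] ~ N( 0, [[K(X, X) + σ²ₙI, K(X, x*)], [K(x*, X), K(x*, x*)]] )

The posterior predictive distribution for y* is Gaussian: y* | X, y, x* ~ N( μ*, Σ* ) where:

μ* = K(x*, X)[K(X, X) + σ²ₙI]⁻¹y

Σ* = K(x*, x*) - K(x*, X)[K(X, X) + σ²ₙI]⁻¹K(X, x*)

As written, Σ* describes the latent (noise-free) response at x*; for a new noisy measurement, σ²ₙ is added to Σ*.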

Key Insight: The predictive variance Σ* (the diagonal of the covariance matrix) quantifies the uncertainty at prediction point x*. This variance automatically increases in regions far from observed data points, providing a principled measure of confidence (e.g., credible intervals) for the dose-response curve.
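A minimal NumPy sketch of these posterior equations is given below, assuming a zero mean, an RBF kernel, and fixed illustrative hyperparameters. The function names (`rbf_kernel`, `gp_posterior`) and the toy standardized data are assumptions made for the example. It shows the behavior described above: the variance is small at a tested dose and returns to the prior variance far from the data.

```python
import numpy as np

def rbf_kernel(a, b, signal_var=1.0, length_scale=1.0):
    """Squared-exponential covariance between two 1-D arrays of (log) doses."""
    d2 = (a[:, None] - b[None, :]) ** 2
    return signal_var * np.exp(-0.5 * d2 / length_scale**2)

def gp_posterior(X, y, X_star, noise_var=0.1, **kern):
    """Posterior mean and covariance of the latent response at X_star."""
    K = rbf_kernel(X, X, **kern) + noise_var * np.eye(len(X))
    K_s = rbf_kernel(X_star, X, **kern)
    K_ss = rbf_kernel(X_star, X_star, **kern)
    L = np.linalg.cholesky(K)                      # K = L L^T, avoids an explicit inverse
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    mu = K_s @ alpha                               # K(x*, X) [K + s2 I]^-1 y
    v = np.linalg.solve(L, K_s.T)
    cov = K_ss - v.T @ v                           # K(x*, x*) - K(x*, X) [K + s2 I]^-1 K(X, x*)
    return mu, cov

# Toy data: log10 dose and a standardized response (illustrative values only).
X = np.array([-9.0, -8.0, -7.5, -7.0, -6.0])
y = np.array([1.0, 0.8, 0.0, -0.8, -1.0])
X_star = np.array([-7.5, -3.0])                    # one tested dose, one far extrapolation

mu, cov = gp_posterior(X, y, X_star, noise_var=0.01, signal_var=1.0, length_scale=1.0)
var = np.diag(cov)
print("posterior variance at tested dose:", var[0])
print("posterior variance far from data:", var[1])
```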

Experimental Protocol for Uncertainty Validation

To empirically validate GP uncertainty quantification, researchers can conduct the following in silico experiment:

- Data Collection: Acquire high-throughput dose-response data (e.g., 10-point concentration series in triplicate) for a known compound.

- Data Partitioning: Randomly withhold 20% of the data points as a validation set.

- Model Training: Fit a GP model (using, e.g., a Radial Basis Function kernel) to the remaining 80% of data. Optimize kernel hyperparameters (length-scale, variance) by maximizing the marginal log-likelihood.

- Prediction & Interval Calculation: Generate posterior predictions (mean and 95% credible interval) across a dense range of doses.

- Validation: Calculate the calibration score: the percentage of withheld data points that fall within the model's 95% predictive credible interval. A well-calibrated model will achieve ~95%.
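The calibration score in the last step reduces to a few lines. The sketch below assumes Gaussian predictive distributions and uses synthetic held-out values; `interval_coverage` is a hypothetical helper name. The predictive standard deviation passed in should include the noise term, since the withheld points are noisy measurements.

```python
import numpy as np

def interval_coverage(y_true, pred_mean, pred_sd, z=1.96):
    """Fraction of held-out points inside the central Gaussian predictive interval."""
    lower = pred_mean - z * pred_sd
    upper = pred_mean + z * pred_sd
    inside = (y_true >= lower) & (y_true <= upper)
    return inside.mean()

# Synthetic check: observations really are drawn from the stated predictive distribution.
rng = np.random.default_rng(1)
pred_mean = np.zeros(5000)
pred_sd = np.full(5000, 2.0)
y_held_out = rng.normal(pred_mean, pred_sd)

coverage = interval_coverage(y_held_out, pred_mean, pred_sd)
# Halving the reported uncertainty mimics an overconfident model.
overconfident = interval_coverage(y_held_out, pred_mean, 0.5 * pred_sd)
print(f"calibrated: {coverage:.3f}, overconfident: {overconfident:.3f}")
```

With only a handful of withheld points, as in a single 10-point curve, the score is very coarse, so pooling across compounds or repeated splits gives a more stable estimate.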

Table 1: Example Uncertainty Calibration Results

| Compound | Model Type | Kernel | % Points in 95% CI (Validation) | Average Predictive Variance (Log Scale) |

|---|---|---|---|---|

| Compound A | GP-RBF | RBF | 94.7% | 0.12 |

| Compound A | 4PL Logistic | N/A | 61.3% | N/A |

| Compound B | GP-Matern 5/2 | Matern 5/2 | 96.1% | 0.18 |

Validation of GP Uncertainty Quantification Workflow

Technical Guide: Modeling Non-Linear Trends

Traditional dose-response models (e.g., 4-parameter logistic, Emax) impose a specific, global non-linear shape. GPs overcome this limitation through the choice of covariance kernel, which dictates the smoothness and structure of functions drawn from the prior. Complex, non-stationary trends can be captured by combining or adapting kernels.

Common Kernels for Dose-Response:

- Radial Basis Function (RBF): k(x,x') = σ² exp(-||x - x'||² / (2l²)). Models infinitely smooth, stationary trends.

- Matérn Class: k_ν(x,x') = σ² (2^(1-ν) / Γ(ν)) (√(2ν)||x-x'||/l)^ν K_ν(√(2ν)||x-x'||/l). Less smooth than RBF (controlled by ν), better for capturing erratic responses.

- Linear + RBF: k(x,x') = σ_lin² (x·x') + σ_rbf² exp(-||x - x'||² / (2l²)). Captures a global linear trend with local non-linear deviations.

- Changepoint Kernels: Combine two different kernels via a sigmoidal function to model abrupt transitions in response dynamics (e.g., efficacy to toxicity).

The marginal likelihood p(y|X, θ), where θ are kernel hyperparameters, allows for principled model selection and adaptation to the data's inherent complexity.

Experimental Protocol for Non-Linear Trend Detection

To demonstrate GP flexibility versus parametric models:

- Generate/Source Complex Data: Use in vitro data exhibiting biphasic (hormetic) or plateauing responses not well-described by standard models.

- Model Comparison:

- Model A: Standard 4-parameter logistic (4PL) model.

- Model B: GP with RBF kernel.

- Model C: GP with composite (Linear + RBF) kernel.

- Fitting & Evaluation: Optimize all models. Evaluate using the Leave-One-Out Cross-Validation Root Mean Square Error (LOO-CV RMSE) and the log marginal likelihood (for GPs).

- Analysis: The model with higher log marginal likelihood and lower LOO-CV RMSE better captures the underlying trend. Visually inspect fits for extrapolation behavior.
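For the GP models, the LOO-CV RMSE does not require refitting n times. With hyperparameters held fixed and a zero mean, the leave-one-out predictive mean and variance follow in closed form from the inverse of K + σ²ₙI. The sketch below assumes an RBF kernel and illustrative hyperparameters; the function names are hypothetical.

```python
import numpy as np

def rbf(a, b, var=1.0, ls=1.0):
    return var * np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / ls**2)

def loo_predictions(X, y, noise_var, var=1.0, ls=1.0):
    """Closed-form leave-one-out predictive mean and variance for each observation."""
    Ky_inv = np.linalg.inv(rbf(X, X, var, ls) + noise_var * np.eye(len(X)))
    diag = np.diag(Ky_inv)
    loo_mean = y - (Ky_inv @ y) / diag   # prediction for y_i from all other points
    loo_var = 1.0 / diag                 # predictive variance for y_i (noise included)
    return loo_mean, loo_var

def loo_rmse(X, y, noise_var, **kw):
    loo_mean, _ = loo_predictions(X, y, noise_var, **kw)
    return np.sqrt(np.mean((y - loo_mean) ** 2))

# Toy standardized dose-response data (illustrative only).
rng = np.random.default_rng(2)
X = np.linspace(-9.0, -5.0, 9)
y = np.tanh(-(X + 7.0)) + rng.normal(0.0, 0.1, size=X.size)

loo_mean, loo_var = loo_predictions(X, y, noise_var=0.05)
rmse = loo_rmse(X, y, noise_var=0.05)
print(f"LOO-CV RMSE: {rmse:.3f}")
```

This shortcut keeps the hyperparameters fixed across folds; re-optimizing them for every left-out point is stricter but costs n separate fits.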

Table 2: Model Performance on a Complex Biphasic Dataset

| Model | Kernel / Form | Log Marginal Likelihood | LOO-CV RMSE | AIC |

|---|---|---|---|---|

| GP-Composite | Linear + RBF | -12.4 | 0.08 | -- |

| GP-RBF | RBF | -18.7 | 0.11 | -- |

| 4PL Logistic | y = D + (A-D)/(1+(x/C)^B) | -42.1 | 0.31 | 92.2 |

GP Workflow for Modeling Non-Linear Trends

The Scientist's Toolkit: Research Reagent & Computational Solutions

Table 3: Essential Toolkit for GP Dose-Response Research

| Item | Category | Function & Rationale |

|---|---|---|

| High-Throughput Screening Assay Kits (e.g., CellTiter-Glo) | Wet-Lab Reagent | Generates precise, reproducible viability/activity data points—the essential experimental input for robust GP modeling. |

| Dose-Response Software (e.g., GraphPad Prism) | Analysis Software | Provides baseline parametric model fitting (4PL, etc.) for initial comparison and data quality checks. |

| Python Ecosystem (NumPy, SciPy, scikit-learn) | Computational Library | Core numerical computing and provides basic GP implementations. |

| GPy or GPflow Libraries (Python) | Specialized Software | Advanced, dedicated GP frameworks offering a wide range of kernels, non-Gaussian likelihoods, and sparse approximations for large datasets. |

| Stan or PyMC3 (Probabilistic Programming) | Modeling Language | Enables fully Bayesian GP specification, allowing for complex hierarchical models (e.g., pooling across cell lines). |

| Jupyter Notebook / R Markdown | Documentation Tool | Critical for reproducible research, documenting the full analysis pipeline from raw data to GP model results. |

From GP Output to Research Decisions

Implementing GP Regression: A Step-by-Step Guide for Preclinical and Clinical Dose-Finding

Within dose-response uncertainty research, Gaussian Process (GP) regression provides a robust Bayesian non-parametric framework for modeling biological responses, quantifying prediction uncertainty, and guiding experimental design. This whitepaper details a comprehensive technical workflow for transforming raw experimental data into a validated, predictive GP model.

The Core Workflow

The process consists of five interconnected stages: experimental design and data generation, data curation and preprocessing, GP model formulation and training, model validation and uncertainty quantification, and finally, predictive application and iterative refinement.

Diagram Title: Five-Stage GP Modeling Workflow for Dose-Response

Stage 1: Experimental Design & Data Generation

This stage focuses on acquiring high-quality, informative data, often via cell-based viability assays.

Key Experimental Protocol: Cell Viability Assay (MTT/CCK-8)

Objective: Quantify the dose-response relationship of a drug candidate on target cell lines.

Detailed Methodology:

- Cell Seeding: Plate cells in a 96-well plate at an optimized density (e.g., 5,000 cells/well) in 100 µL growth medium. Include control wells (medium only, cells only).

- Compound Treatment: After 24-hour incubation, add serial dilutions of the test compound. Use a concentration range spanning several orders of magnitude (e.g., 1 nM to 100 µM). Employ technical and biological replicates (n≥3).

- Incubation: Incubate plates for the desired exposure period (e.g., 72 hours) at 37°C, 5% CO₂.

- Viability Reagent Addition: Add 10 µL of MTT (5 mg/mL) or CCK-8 reagent directly to each well.

- Signal Development: Incubate for 2-4 hours to allow formazan crystal formation (MTT) or color development (CCK-8).

- Absorbance Measurement: For MTT, solubilize crystals with DMSO, then measure absorbance at 570 nm with a reference at 630-650 nm. For CCK-8, measure absorbance directly at 450 nm.

- Data Calculation: Normalize absorbance data:

% Viability = [(Sample - Blank)/(Cell Control - Blank)] * 100.
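As a small worked example of this normalization, the sketch below applies the formula to made-up absorbance readings. The function name and all numbers are illustrative assumptions, and replicate wells are averaged before use.

```python
import numpy as np

def percent_viability(sample, blank, cell_control):
    """Normalize absorbance to % viability using blank and untreated-cell wells."""
    blank_mean = np.mean(blank)
    control_mean = np.mean(cell_control)
    sample = np.asarray(sample, dtype=float)
    return (sample - blank_mean) / (control_mean - blank_mean) * 100.0

blank = [0.05, 0.06, 0.04]          # medium-only wells
cell_control = [1.05, 1.00, 1.10]   # cells without compound
treated = [0.55, 1.05, 0.05]        # three treated wells

viability = percent_viability(treated, blank, cell_control)
print(np.round(viability, 1))       # approximately 50, 100 and 0 percent
```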

Research Reagent Solutions Toolkit

| Item | Function in Dose-Response Research |

|---|---|

| Cell Lines (e.g., A549, HepG2) | In vitro model systems representing target tissue or disease phenotype. |

| Test Compound(s) | Drug candidate molecules with unknown or partially characterized dose-response profiles. |

| MTT or CCK-8 Assay Kits | Colorimetric reagents for quantifying metabolically active cells, a proxy for viability. |

| DMSO (Cell Culture Grade) | Universal solvent for hydrophobic compounds; used for preparing stock solutions and serial dilutions. |

| Multi-channel Pipettes & Automated Liquid Handlers | Ensure precision and reproducibility in serial dilution and reagent dispensing across multi-well plates. |

| Microplate Reader | Instrument for high-throughput measurement of absorbance (or fluorescence) from assay plates. |

| Laboratory Information Management System (LIMS) | Software for tracking sample provenance, experimental parameters, and raw data files. |

Stage 2: Data Curation & Preprocessing

Raw experimental data must be transformed into a clean, structured format suitable for GP modeling.

Key Steps:

- Aggregation: Combine data from replicate plates and experiments.

- Outlier Detection: Apply statistical methods (e.g., Grubbs' test) or domain knowledge to identify and handle technical anomalies.

- Normalization: Re-scale response values (e.g., viability) to a consistent range (e.g., 0-100%).

- Log-Transformation: Apply a log10 transformation to the dose/concentration axis to linearize the dynamic range and improve model stability.

Quantitative Data Summary Example

Table 1: Aggregated Dose-Response Data for Compound X on Cell Line Y (72h exposure)

| Log10(Concentration [M]) | Concentration (nM) | Mean Viability (%) | Std. Dev. (%) | n (Replicates) |

|---|---|---|---|---|

| -11.0 | 0.010 | 99.5 | 2.1 | 9 |

| -10.0 | 0.100 | 98.7 | 3.0 | 9 |

| -9.0 | 1.000 | 97.1 | 2.8 | 9 |

| -8.0 | 10.00 | 85.3 | 4.2 | 9 |

| -7.52 | 30.00 | 52.1 | 5.5 | 9 |

| -7.30 | 50.00 | 25.8 | 6.1 | 9 |

| -7.00 | 100.0 | 10.2 | 3.8 | 9 |

| -6.70 | 200.0 | 5.1 | 2.1 | 9 |

| -6.52 | 300.0 | 3.8 | 1.9 | 9 |

Stage 3: GP Model Formulation & Training

A GP is defined by a mean function m(x) and a covariance kernel function k(x, x').

Kernel Selection & Mathematical Formulation

For dose-response, a composite kernel is often effective:

k(x, x') = σ_f² * Matern(ν=3/2)(x, x'; l) + σ_n² * δ(x, x')

Where:

- σ_f²: Signal variance.

- l: Length-scale, governing smoothness across the dose axis.

- Matern(ν=3/2): Kernel that assumes functions are once-differentiable, suitable for modeling biological responses.

- σ_n²: Noise variance.

- δ: Kronecker delta function for white noise.

Training via Marginal Likelihood Maximization

Model hyperparameters θ = {l, σ_f, σ_n} are optimized by maximizing the log marginal likelihood:

log p(y | X, θ) = -½ yᵀ (K + σ_n²I)⁻¹ y - ½ log|K + σ_n²I| - (n/2) log(2π)

This balances data fit and model complexity automatically.
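The log marginal likelihood above can be evaluated stably through a Cholesky factorization, since ½ log|K + σ_n²I| equals the sum of the log-diagonal of the Cholesky factor. The sketch below uses the Matérn 3/2 kernel from this stage and the mean viabilities from Table 1, standardized so that the zero-mean formula applies. The grid search over the length-scale, the fixed σ_f² and σ_n² values, and the function names are illustrative assumptions; in practice a gradient-based optimizer over all three hyperparameters would replace the grid.

```python
import numpy as np

def matern32(a, b, signal_var, length_scale):
    r = np.abs(a[:, None] - b[None, :]) / length_scale
    return signal_var * (1.0 + np.sqrt(3.0) * r) * np.exp(-np.sqrt(3.0) * r)

def log_marginal_likelihood(X, y, length_scale, signal_var, noise_var):
    """log p(y | X, theta) for a zero-mean GP with Matern 3/2 + white-noise kernel."""
    n = len(X)
    Ky = matern32(X, X, signal_var, length_scale) + noise_var * np.eye(n)
    L = np.linalg.cholesky(Ky)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    data_fit = -0.5 * y @ alpha
    complexity = -np.sum(np.log(np.diag(L)))   # equals -0.5 * log|Ky|
    constant = -0.5 * n * np.log(2.0 * np.pi)
    return data_fit + complexity + constant

# Log10 concentrations and mean viabilities from Table 1.
X = np.array([-11.0, -10.0, -9.0, -8.0, -7.52, -7.30, -7.00, -6.70, -6.52])
y = np.array([99.5, 98.7, 97.1, 85.3, 52.1, 25.8, 10.2, 5.1, 3.8])
y_std = (y - y.mean()) / y.std()

length_scales = np.logspace(-1, 1, 30)
lml_values = np.array(
    [log_marginal_likelihood(X, y_std, ls, 1.0, 0.05) for ls in length_scales]
)
best_ls = length_scales[np.argmax(lml_values)]
print(f"best length-scale on the grid: {best_ls:.2f}")
```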

Diagram Title: GP Model Training and Inference Process

Stage 4: Model Validation & Uncertainty Quantification

Key Validation Metrics:

- Predictive Log Likelihood: Measures probabilistic calibration.

- Mean Standardized Log Loss (MSLL): Assesses improvement over a baseline model.

- Coverage of Credible Intervals: Checks whether a nominal interval is honest, e.g., whether the 95% posterior predictive interval contains the held-out observation ~95% of the time on test data.
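MSLL can be sketched as follows, under the common definition in which each test point's negative log predictive density is compared with that of a trivial Gaussian model using the training mean and variance. Negative values mean the GP is more informative than the trivial baseline. The function names and the synthetic predictions are assumptions for illustration.

```python
import numpy as np

def gaussian_nll(y, mean, var):
    """Negative log density of y under N(mean, var), elementwise."""
    return 0.5 * np.log(2.0 * np.pi * var) + (y - mean) ** 2 / (2.0 * var)

def msll(y_test, pred_mean, pred_var, y_train):
    """Mean standardized log loss relative to a train-mean/train-variance Gaussian."""
    model_loss = gaussian_nll(y_test, pred_mean, pred_var)
    trivial_loss = gaussian_nll(y_test, np.mean(y_train), np.var(y_train))
    return np.mean(model_loss - trivial_loss)

# Synthetic % viability values; predictions are within about 3 points of the truth.
rng = np.random.default_rng(3)
y_train = rng.uniform(0.0, 100.0, size=60)
y_test = rng.uniform(0.0, 100.0, size=30)
pred_mean = y_test + rng.normal(0.0, 3.0, size=30)
pred_var = np.full(30, 9.0)

score = msll(y_test, pred_mean, pred_var, y_train)
# Predicting the training mean and variance everywhere reproduces the baseline.
baseline = msll(y_test, np.full(30, y_train.mean()), np.full(30, y_train.var()), y_train)
print(f"MSLL: {score:.2f} (baseline {baseline:.2f})")
```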

Table 2: Example GP Model Validation Metrics on a Hold-Out Test Set

| Metric | Value | Interpretation |

|---|---|---|

| Root Mean Square Error (RMSE) | 3.21% Viability | Average point prediction error. |

| Mean Absolute Error (MAE) | 2.45% Viability | Robust measure of average error. |

| MSLL | -1.05 | Model predictions are more informative than the baseline. |

| 95% CI Empirical Coverage | 93.7% | Credible intervals are well-calibrated. |

Stage 5: Predictive Application & Iterative Refinement

The trained GP model enables key applications in drug development:

- Predicting Response at Untested Doses: The posterior predictive distribution provides full probability estimates.

- Quantifying Uncertainty: The predictive variance highlights regions (dose levels) where predictions are less certain.

- Active Learning/Optimal Experimental Design: Utilize an acquisition function (e.g., expected improvement, uncertainty sampling) based on the GP posterior to recommend the next most informative dose to test experimentally, closing the feedback loop.
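The simplest acquisition rule, uncertainty sampling, can be sketched in a few lines: evaluate the GP predictive variance over candidate doses and propose the one with the largest value. The sketch assumes an RBF kernel with fixed, illustrative hyperparameters and hypothetical function names. With fixed hyperparameters the variance depends only on which doses were tested, not on the measured responses, so the proposal lands in the widest untested gap.

```python
import numpy as np

def rbf(a, b, signal_var=1.0, length_scale=0.5):
    return signal_var * np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / length_scale**2)

def predictive_variance(X_tested, candidates, noise_var=0.01):
    """Latent-function posterior variance at each candidate log-dose."""
    K = rbf(X_tested, X_tested) + noise_var * np.eye(len(X_tested))
    K_s = rbf(candidates, X_tested)
    # Row-wise quadratic form K_s[i] @ K^-1 @ K_s[i] without forming the full matrix.
    reduction = np.einsum("ij,ji->i", K_s, np.linalg.solve(K, K_s.T))
    return np.diag(rbf(candidates, candidates)) - reduction

def next_dose(X_tested, candidates, noise_var=0.01):
    var = predictive_variance(X_tested, candidates, noise_var)
    return candidates[np.argmax(var)], var

X_tested = np.array([-9.0, -8.5, -8.0, -6.0])   # log10 doses already assayed
candidates = np.linspace(-9.0, -6.0, 31)        # doses that could be run next

proposal, var = next_dose(X_tested, candidates)
print(f"next log10 dose to test: {proposal:.1f}")
```

Expected improvement and similar criteria additionally use the posterior mean, which makes them better suited to finding an optimum than to mapping the whole curve.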

Diagram Title: GP Model for Active Learning in Dose Selection

This whitepaper, framed within a broader thesis on Gaussian Process (GP) regression for dose-response uncertainty research, provides an in-depth technical guide on kernel function selection for pharmacological modeling. The response of biological systems to chemical compounds is inherently complex, nonlinear, and stochastic. Gaussian Processes offer a powerful Bayesian non-parametric framework to model these dose-response relationships while quantifying prediction uncertainty. The choice of kernel, or covariance function, is the critical determinant of a GP's behavior, encoding prior assumptions about the smoothness, periodicity, and structure of the latent biological function.

Foundational Kernels for Pharmacological Response

The kernel defines the covariance between function values at two input points (e.g., drug concentrations). For dose-response modeling, standard kernels provide a starting point.

Radial Basis Function (RBF) / Squared Exponential Kernel

The RBF kernel is the default choice for modeling smooth, infinitely differentiable functions. [ k_{\text{RBF}}(x, x') = \sigma_f^2 \exp\left(-\frac{(x - x')^2}{2l^2}\right) ]

- Hyperparameters: Length-scale (l) (controls wiggliness), signal variance (\sigma_f^2).

- Pharmacological Implication: Assumes the biological response changes smoothly and steadily with dose. It may oversmooth abrupt transitions like receptor saturation or toxicity thresholds.

Matérn Class of Kernels

The Matérn family generalizes the RBF kernel with a smoothness parameter (\nu). [ k_{\text{Matérn}}(x, x') = \sigma_f^2 \frac{2^{1-\nu}}{\Gamma(\nu)} \left(\frac{\sqrt{2\nu}|x - x'|}{l}\right)^\nu K_\nu \left(\frac{\sqrt{2\nu}|x - x'|}{l}\right) ] Commonly used values are (\nu = 3/2) and (\nu = 5/2), offering once and twice differentiable functions, respectively.

- Pharmacological Implication: More flexible than RBF for modeling responses with potentially rougher, more abrupt changes. Matérn 3/2 or 5/2 are often more realistic for biological data than the overly smooth RBF.

Quantitative Comparison of Standard Kernels

Table 1: Characteristics of Standard Kernels in Dose-Response Modeling

| Kernel | Mathematical Form | Key Hyperparameters | Smoothness Assumption | Best For (Pharmacology Context) | Potential Limitation |

|---|---|---|---|---|---|

| RBF | ( \sigma_f^2 \exp\left(-\frac{r^2}{2l^2}\right) ) | (l), (\sigma_f^2) | Infinitely differentiable | Very smooth, asymptotic EC50 curves; high-quality, noise-free data. | Can over-smooth plateaus, inflection points, & toxic "cliffs". |

| Matérn 3/2 | ( \sigma_f^2 (1 + \sqrt{3}r/l) \exp(-\sqrt{3}r/l) ) | (l), (\sigma_f^2) | Once differentiable | Responses with moderate roughness (e.g., in-vivo data with more variability). | Less extrapolation capability than RBF. |

| Matérn 5/2 | ( \sigma_f^2 (1 + \sqrt{5}r/l + \frac{5}{3}r^2/l^2) \exp(-\sqrt{5}r/l) ) | (l), (\sigma_f^2) | Twice differentiable | Balancing smoothness & flexibility; standard for many dose-response assays. | More computationally intensive than lower (\nu). |

| Periodic | ( \sigma_f^2 \exp\left(-\frac{2\sin^2(\pi r / p)}{l^2}\right) ) | (l), (\sigma_f^2), (p) | Periodic smoothness | Circadian rhythm effects on drug response (chronopharmacology). | Mis-specified if period (p) is unknown or non-stationary. |

Note: ( r = \|x - x'\| )
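The closed forms in Table 1 translate directly into code. The sketch below writes each kernel as a function of the distance r and compares how quickly correlation decays; the function names and the default period are illustrative assumptions.

```python
import numpy as np

def rbf(r, signal_var=1.0, length_scale=1.0):
    return signal_var * np.exp(-r**2 / (2.0 * length_scale**2))

def matern32(r, signal_var=1.0, length_scale=1.0):
    s = np.sqrt(3.0) * r / length_scale
    return signal_var * (1.0 + s) * np.exp(-s)

def matern52(r, signal_var=1.0, length_scale=1.0):
    s = np.sqrt(5.0) * r / length_scale
    return signal_var * (1.0 + s + s**2 / 3.0) * np.exp(-s)   # s^2/3 = 5 r^2 / (3 l^2)

def periodic(r, signal_var=1.0, length_scale=1.0, period=24.0):
    return signal_var * np.exp(-2.0 * np.sin(np.pi * r / period) ** 2 / length_scale**2)

# Correlation at increasing separations in log-dose units (length-scale 1).
r = np.array([0.0, 0.5, 1.0, 2.0])
table = {
    "RBF": rbf(r),
    "Matern 3/2": matern32(r),
    "Matern 5/2": matern52(r),
}
for name, values in table.items():
    print(f"{name:>10}: {np.round(values, 3)}")
```

At one length-scale of separation the RBF correlation is the highest and Matérn 3/2 the lowest, which matches the smoothness ordering in the table.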

Designing Custom Kernels for Biological Realism

Standard kernels often fail to capture the known structure of pharmacological systems. Custom kernels, built by combining or modifying base kernels, can incorporate domain knowledge.

Common Structures and Operations

- Summation ((k_1 + k_2)): Models a function as a superposition of independent processes (e.g., linear trend + short-term fluctuation).

- Multiplication ((k_1 \times k_2)): Models interaction or modulation (e.g., a periodic process whose amplitude decays with dose).

- Change-Point Kernels: Uses sigmoidal functions to combine different kernels in different input domains, modeling regime shifts (e.g., efficacy vs. toxicity domain).

Exemplar Custom Kernel: Efficacy-Toxicity Transition Kernel

A critical challenge is modeling the transition from a therapeutic to a toxic dose range. A custom change-point kernel can blend a smooth Matérn kernel (for the efficacy region) with a different, potentially rougher kernel (for the toxicity region).

[ k_{\text{ET}}(x, x') = \sigma_f^2 \cdot \Big[\Phi(x)\Phi(x') \cdot k_{\text{Matérn 5/2}}(x, x'; l_1) + (1-\Phi(x))(1-\Phi(x')) \cdot k_{\text{Matérn 3/2}}(x, x'; l_2)\Big] ] Where (\Phi(x)) is a logistic function centered near the estimated toxic threshold that decreases from 1 in the efficacy range to 0 in the toxicity range, smoothly transitioning between the two regimes.
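A minimal NumPy sketch of this efficacy-toxicity kernel follows. It assumes the weight function is a decreasing logistic in log-dose, so that the Matérn 5/2 term dominates below the threshold and the Matérn 3/2 term above it. The threshold, steepness, and length-scales are illustrative values, and all function names are hypothetical. Because each term is a valid kernel scaled by an outer product of weights, the sum remains positive semi-definite, which the last lines check numerically.

```python
import numpy as np

def matern(a, b, length_scale, nu):
    """Unit-variance Matern kernel for nu = 1.5 or 2.5."""
    r = np.abs(a[:, None] - b[None, :]) / length_scale
    if nu == 1.5:
        return (1.0 + np.sqrt(3.0) * r) * np.exp(-np.sqrt(3.0) * r)
    if nu == 2.5:
        return (1.0 + np.sqrt(5.0) * r + 5.0 / 3.0 * r**2) * np.exp(-np.sqrt(5.0) * r)
    raise ValueError("nu must be 1.5 or 2.5")

def efficacy_weight(x, threshold, steepness):
    """Logistic weight: near 1 below the toxic threshold, near 0 above it."""
    return 1.0 / (1.0 + np.exp(steepness * (x - threshold)))

def k_et(a, b, signal_var, l1, l2, threshold, steepness):
    pa = efficacy_weight(a, threshold, steepness)
    pb = efficacy_weight(b, threshold, steepness)
    efficacy = np.outer(pa, pb) * matern(a, b, l1, 2.5)
    toxicity = np.outer(1.0 - pa, 1.0 - pb) * matern(a, b, l2, 1.5)
    return signal_var * (efficacy + toxicity)

x = np.linspace(-9.0, -4.0, 40)   # log10 dose grid
K = k_et(x, x, signal_var=1.0, l1=1.0, l2=0.3, threshold=-5.5, steepness=8.0)

min_eig = np.linalg.eigvalsh(K).min()
print(f"smallest eigenvalue: {min_eig:.2e}")
print(f"covariance between lowest and highest dose: {K[0, -1]:.2e}")
```

One consequence of this construction is that doses on opposite sides of the threshold are nearly uncorrelated, so data from the efficacy range say little about the toxicity range and vice versa.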

Custom Kernel Structure for Efficacy-Toxicity Modeling

Experimental Protocol: Kernel Performance Evaluation in a Dose-Response Study

The following methodology outlines a standard in vitro experiment for generating data to evaluate and compare kernel performance.

Aim: To quantify the effect of compound X on cell viability and compare GP models with different kernels for prediction accuracy and uncertainty quantification.

1. Cell Culture & Plating:

- Seed HEK293 cells in 96-well plates at a density of 5,000 cells/well in 100 µL complete growth medium.

- Incubate for 24 hours at 37°C, 5% CO2 to allow cell attachment.

2. Compound Dilution & Treatment:

- Prepare a 10 mM stock solution of compound X in DMSO.

- Perform a 1:3 serial dilution in culture medium to create 10 concentrations (e.g., 10 µM down to approximately 0.5 nM), plus a DMSO vehicle control (0.1% final).

- Add 100 µL of each dilution to designated wells (n=6 replicates per concentration).

3. Incubation & Assay:

- Incubate plates for 72 hours.

- Add 20 µL of CellTiter-Glo reagent to each well.

- Shake for 2 minutes, then incubate in dark for 10 minutes.

- Record luminescence (RLU) using a plate reader.

4. Data Preprocessing & GP Modeling:

- Normalize RLU to the vehicle control: (Sample - Median Background) / (Median Vehicle Control - Median Background) * 100%, where background is the luminescence of cell-free (medium-only) wells.

- Fit GP models (RBF, Matérn 3/2, Matérn 5/2, Custom Efficacy-Toxicity) to log10(concentration) vs. normalized response.

- Use Maximum Marginal Likelihood Estimation (MLE) for hyperparameter optimization.

- Employ k-fold cross-validation (k=5) to assess predictive log-likelihood and Mean Squared Error (MSE).

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Materials for Dose-Response GP Research

| Item | Function in Experiment | Example Product/Catalog # |

|---|---|---|

| Cell Line | Biological system for measuring pharmacological response. | HEK293 (ATCC CRL-1573) or relevant disease model. |

| Test Compound | The molecule whose dose-response relationship is being characterized. | Compound of interest (e.g., kinase inhibitor). |

| Viability Assay Kit | Quantifies cell health/viability as the biological readout. | CellTiter-Glo 2.0 (Promega, G9242). |

| Cell Culture Plates | Platform for hosting cells during treatment. | 96-well, clear-bottom, tissue-culture treated plates (Corning, 3904). |

| Dimethyl Sulfoxide (DMSO) | Standard solvent for compound solubilization. | Sterile, cell culture grade DMSO (Sigma, D2650). |

| GP Modeling Software | Implements kernel functions, inference, and prediction. | GPy (Python), GPflow (Python), or MATLAB's Statistics & ML Toolbox. |

Results Interpretation & Pathway Mapping

The biological interpretation of a GP model's output hinges on the kernel. A model with a custom change-point kernel may identify a novel toxic threshold, prompting investigation into the underlying biological pathway.

From Kernel Prediction to Biological Hypothesis Generation

The selection and design of kernels in Gaussian Process regression are not merely technical exercises but are fundamental to embedding pharmacological domain knowledge into predictive models. While the RBF kernel provides a smooth baseline and the Matérn class offers adjustable roughness, custom kernels—constructed via summation, multiplication, or change-point operations—enable the direct modeling of complex biological phenomena such as efficacy-toxicity transitions. Within the framework of dose-response uncertainty research, a principled approach to kernel selection enhances model interpretability, improves prediction in data-sparse regions, and ultimately guides more informed decisions in drug discovery and development.

This guide presents a technical framework for implementing Gaussian Process (GP) regression within dose-response uncertainty research. The broader thesis posits that GPs provide a principled, Bayesian non-parametric approach to model complex pharmacological dose-response relationships, quantify uncertainty in predictions, and optimize experimental design for drug development. This is critical for accurately determining therapeutic windows and minimizing adverse effects.

Core GP Regression Model for Dose-Response

A GP defines a prior over functions, characterized by a mean function m(x) and a covariance kernel k(x, x'). For dose-response modeling with dose x and response y, we assume: y = f(x) + ε, where ε ~ N(0, σ²_n) and f ~ GP(m(x), k(x, x')).

Key Kernel for Dose-Response: The Matérn 5/2 kernel is often preferred for its flexibility and smoothness properties, suitable for capturing typical sigmoidal response curves. k_{M52}(r) = σ² (1 + √5r + 5r²/3) exp(-√5r), where r = |x - x'|/l is the distance between doses scaled by the length-scale l.

Essential Python Toolkit: Installation and Setup

Key Research Reagent Solutions (Computational)

Table 1: Essential Python Libraries for GP Dose-Response Research

| Library/Tool | Primary Function in Research |

|---|---|

| GPyTorch | Provides scalable, modular GP models with GPU acceleration for robust uncertainty quantification. |

| Scikit-Learn | Offers baseline GP implementations, data preprocessing, and standard regression metrics for comparison. |

| PyTorch | Backend tensor library enabling automatic differentiation for flexible model optimization. |

| NumPy/SciPy | Foundational numerical computing and statistical functions for data manipulation. |

| Matplotlib/Seaborn | Creation of publication-quality visualizations of dose-response curves and uncertainty bands. |

| Arviz/PT | Diagnostic tools for evaluating MCMC convergence in fully Bayesian GP models (if used). |

Practical Implementation: Code Snippets

Data Simulation and Preprocessing with Scikit-Learn
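A minimal sketch of this step is shown below. It simulates noisy responses from a Hill equation using the parameter values stated in the in silico validation protocol of this guide (E_max=100, EC50=50, h=3, noise σ=5, 150 points split 100/50), then log-transforms the dose and standardizes inputs and outputs with scikit-learn. The dose range of 1 to 1000, the random seeds, and the variable names are assumptions.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

def hill(dose, e_max=100.0, ec50=50.0, h=3.0):
    """Hill equation: response rises from 0 to e_max with midpoint ec50."""
    return e_max * dose**h / (ec50**h + dose**h)

rng = np.random.default_rng(42)
dose = 10 ** rng.uniform(0.0, 3.0, size=150)            # assumed range: 1 to 1000
response = hill(dose) + rng.normal(0.0, 5.0, size=150)  # additive Gaussian noise

X = np.log10(dose).reshape(-1, 1)                       # model on the log-dose axis
X_train, X_test, y_train, y_test = train_test_split(
    X, response, train_size=100, random_state=0
)

# Fit scalers on the training split only, then apply to both splits.
x_scaler = StandardScaler().fit(X_train)
y_scaler = StandardScaler().fit(y_train.reshape(-1, 1))
X_train_s = x_scaler.transform(X_train)
X_test_s = x_scaler.transform(X_test)
y_train_s = y_scaler.transform(y_train.reshape(-1, 1)).ravel()
y_test_s = y_scaler.transform(y_test.reshape(-1, 1)).ravel()

print(X_train_s.shape, X_test_s.shape)
```

Predictions made on the standardized scale can be mapped back to response units with `y_scaler.inverse_transform`.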

Defining a GP Model in GPyTorch (Exact Inference)

Training and Hyperparameter Optimization

Table 2: Optimized GP Hyperparameter Values (Example)

| Hyperparameter | Symbol | Optimized Value | Interpretation |

|---|---|---|---|

| Noise Variance | σ²_n | 0.012 | Estimated measurement/biological noise level. |

| Output Scale | σ²_f | 0.95 | Vertical scale of the response function. |

| Lengthscale | l | 1.23 | Horizontal correlation range in dose space. |

| Constant Mean | c | -0.02 | Baseline response offset. |

Making Predictions and Visualizing Uncertainty

Comparative Baseline with Scikit-Learn's GP
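A baseline along these lines can be sketched with scikit-learn's `GaussianProcessRegressor`, assuming an RBF kernel with a constant amplitude factor plus a `WhiteKernel` noise term. The synthetic standardized data, initial hyperparameter values, and number of optimizer restarts are illustrative assumptions. Because the noise term is part of the kernel, the returned standard deviation describes new noisy observations rather than the latent curve alone.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, ConstantKernel, WhiteKernel

# Synthetic standardized dose-response data (assumption).
rng = np.random.default_rng(0)
X = np.linspace(-2.0, 2.0, 60).reshape(-1, 1)
y = -np.tanh(2.0 * X.ravel()) + rng.normal(0.0, 0.1, size=60)

# Amplitude * RBF models the smooth trend; WhiteKernel models observation noise.
kernel = ConstantKernel(1.0) * RBF(length_scale=1.0) + WhiteKernel(noise_level=0.1)
gpr = GaussianProcessRegressor(
    kernel=kernel, normalize_y=True, n_restarts_optimizer=3, random_state=0
)
gpr.fit(X, y)   # hyperparameters are tuned by maximizing the log marginal likelihood

X_grid = np.linspace(-2.0, 2.0, 200).reshape(-1, 1)
mean, std = gpr.predict(X_grid, return_std=True)
lower, upper = mean - 1.96 * std, mean + 1.96 * std

print("optimized kernel:", gpr.kernel_)
print("log marginal likelihood:", gpr.log_marginal_likelihood_value_)
```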

Experimental Protocol for In Silico Validation

Objective: Validate GP model's ability to reconstruct a known dose-response function and quantify uncertainty.

- Data Generation: Simulate 150 data points from a Hill equation with known parameters (E_max=100, EC50=50, h=3) and additive Gaussian noise (σ=5).

- Model Training: Randomly allocate 100 points for training. Train two GP models: one with a Matérn 5/2 kernel (GPyTorch) and one with an RBF kernel (Scikit-Learn).

- Prediction & Evaluation: Predict on 50 held-out test points and a dense grid of 1000 points for curve reconstruction.

- Metrics: Calculate Root Mean Square Error (RMSE), Mean Standardized Log Loss (MSLL), and average 95% prediction interval coverage on the test set.

- Analysis: Compare the width of the predictive uncertainty bands between models and assess calibration (i.e., does the 95% CI contain the true response ~95% of the time?).

Table 3: In Silico Validation Results (Example Metrics)

| Model | Kernel | Test RMSE | MSLL | 95% CI Coverage | Avg. CI Width |

|---|---|---|---|---|---|

| GPyTorch | Matérn 5/2 | 4.87 | -1.42 | 96.0% | 24.3 |

| Scikit-Learn | RBF | 5.12 | -1.35 | 93.5% | 21.8 |

| Theoretical | - | ~5.0 | - | 95.0% | - |

Key Methodologies and Visualizations

Title: GP Dose-Response Analysis Workflow

Title: GPyTorch vs. Scikit-Learn Feature Comparison

Advanced Implementation: Multi-Output GP for Combination Studies

For modeling synergy in drug combination studies (Dose A vs. Dose B), a Multi-Output GP is required.

- Kernel Selection: Start with Matérn (ν=2.5 or 1.5) for dose-response; it offers a good balance of smoothness and flexibility.

- Data Scaling: Always standardize input doses and response outputs to zero mean and unit variance for stable hyperparameter optimization.

- Uncertainty Calibration: Validate that your predictive confidence intervals are empirically calibrated on held-out data.

- Model Checking: Use posterior predictive checks to assess if the GP can generate data similar to your observed dataset.

- Computational Trade-offs: Use GPyTorch for custom, scalable, or Bayesian deep GP models. Use Scikit-Learn for rapid prototyping of standard GPs on smaller datasets.

Within the broader thesis on advancing Gaussian Process (GP) regression for quantifying uncertainty in dose-response research, modeling in vitro bioassay data presents a critical first application. Assays measuring inhibitor concentration for 50% response (IC50) are foundational in drug discovery but are intrinsically noisy, with variability (heteroscedasticity) often dependent on the concentration level. Standard nonlinear least-squares regression to sigmoidal models (e.g., 4-parameter logistic, 4PL) fails to formally account for this noise structure, leading to biased parameter estimates and incorrect confidence intervals. This guide details a GP framework that jointly learns the mean dose-response curve and the input-dependent noise, providing a robust probabilistic alternative.

Heteroscedastic Noise in Dose-Response Assays

The standard 4PL model is:

Response = Bottom + (Top - Bottom) / (1 + 10^((log10(IC50) - log10(Concentration)) * HillSlope))

Empirical observations show variance (σ²) is not constant but often follows a pattern:

- High variance at extreme low/high concentrations (plateaus).

- Lower variance near the IC50 inflection point.

Ignoring this heteroscedasticity violates the i.i.d. assumption of standard regression.

Table 1: Common Variance Patterns in In Vitro Assays

| Variance Pattern | Typical Assay Context | Impact on Standard 4PL Fit |

|---|---|---|

| Proportional to Mean | Cell viability assays, enzymatic activity. | Overweights high-response regions, biases IC50 high. |

| Larger at Plateaus | Reporter gene assays with low/high signal saturation. | Overweights mid-range data, underestimates uncertainty in EC50/IC50. |

| Asymmetric (Larger at Top) | Binding assays with high background noise. | Biases HillSlope and baseline estimates. |

Gaussian Process Regression Framework

A GP places a prior over functions, defined by a mean function m(x) and covariance kernel k(x, x'). For heteroscedastic modeling, we employ a latent variance model.

Core Model:

y_i = f(x_i) + ε_i, where ε_i ~ N(0, σ²(x_i))

f(x) ~ GP(m(x), k_θ(x, x'))

log(σ²(x)) ~ GP(μ_σ, k_φ(x, x'))

Here, a second GP models the log of the noise variance as a function of concentration x.

Table 2: Kernel Selection for Dose-Response GPs

| Kernel Function | Mathematical Form | Use Case in Dose-Response |

|---|---|---|

| Radial Basis (RBF) | k(x,x') = σ_f² exp(-(x-x')²/(2l²)) | Models smooth, stationary trends in the mean response. Primary choice for f(x). |

| Matérn 3/2 | k(x,x') = σ_f² (1 + √3r/l) exp(-√3r/l) | For less smooth, more jagged response curves. |

| Constant | k(x,x') = c | Can be used in the variance GP (σ²(x)) to model global noise level. |

| RBF + White | k(x,x') = σ_f² exp(-(x-x')²/(2l²)) + σ_n² δ_xx' | Models smooth trend plus homoscedastic noise. Baseline model. |

Experimental Protocol & Data Simulation for Validation

To validate the GP heteroscedastic model, synthetic data mimicking real assay artifacts is generated.

Protocol 1: Simulating Heteroscedastic Dose-Response Data

- Define True Parameters: Set Top=100, Bottom=0, log10(IC50)=1.0, HillSlope=-1.5.

- Generate Concentrations: 20 concentrations, 3 replicates each, spaced logarithmically from 10^-3 to 10^3 nM.

- Compute Mean Response: Apply the 4PL equation.

- Generate Heteroscedastic Noise:

- Model noise standard deviation as: sd(x) = 5 + 10 * sigmoid((log10(x) - 1.2) * 2).

- For each replicate j, sample: noise_ij ~ N(0, sd(x_i)²).

- Generate Observed Data: y_ij = mean_response(x_i) + noise_ij.
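Protocol 1 can be sketched directly in NumPy using the parameter values it states. The function names and the random seed are assumptions. With a negative Hill slope in the 4PL form used in this guide, the mean response falls from Top at low concentration to Bottom at high concentration, and the noise standard deviation rises from about 5 to about 15 across the range.

```python
import numpy as np

def four_pl(conc, top=100.0, bottom=0.0, log_ic50=1.0, hill_slope=-1.5):
    """4PL mean response as a function of concentration (nM)."""
    exponent = (log_ic50 - np.log10(conc)) * hill_slope
    return bottom + (top - bottom) / (1.0 + 10.0**exponent)

def noise_sd(conc):
    """Concentration-dependent noise: sd = 5 + 10 * sigmoid((log10(x) - 1.2) * 2)."""
    z = (np.log10(conc) - 1.2) * 2.0
    return 5.0 + 10.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(7)
conc = np.logspace(-3, 3, 20)        # 20 concentrations, 10^-3 to 10^3 nM
n_rep = 3                            # replicates per concentration

mean_resp = four_pl(conc)
sd = noise_sd(conc)
# Rows are replicates j, columns are concentrations i.
y = mean_resp[None, :] + rng.normal(0.0, 1.0, size=(n_rep, conc.size)) * sd[None, :]

print(y.shape)
print(f"noise sd at lowest / highest concentration: {sd[0]:.2f} / {sd[-1]:.2f}")
```

Flattening `y` together with a repeated `np.log10(conc)` vector gives the long-format input expected by most GP libraries.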

Protocol 2: GP Model Fitting (Python/PyMC/GPy)

- Preprocessing: Log-transform concentration values. Standardize response values (y_mean=0, y_std=1).

- Model Specification:

- Mean Function: Zero mean or linear mean.

- Covariance for f(x): RBF kernel (lengthscale, variance).

- Covariance for log(σ²(x)): RBF kernel (separate lengthscale, variance).

- Inference: Use Markov Chain Monte Carlo (MCMC) (e.g., No-U-Turn Sampler) or variational inference to approximate the posterior distributions of all kernel hyperparameters and the latent functions.

- Prediction: Sample from the posterior predictive distribution to obtain the mean response curve and pointwise uncertainty bands (μ(x) ± 2σ(x)), which include both epistemic (model) and aleatoric (noise) uncertainty.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in IC50 Modeling Context |

|---|---|

| 384-well Cell-Based Assay Plates | High-density format for generating multi-replicate, multi-dose data essential for noise structure characterization. |

| CellTiter-Glo Luminescent Viability Assay | Generates continuous viability data. Noise often increases at low cell viability (bottom plateau). |

| Homogeneous Time-Resolved Fluorescence (HTRF) Kits | For protein-protein interaction assays. May exhibit proportional noise. |

| NanoBRET Target Engagement Intracellular Assays | Provides direct IC50 data in live cells. Critical for validating biochemical assay predictions. |

| Robotic Liquid Handlers (e.g., Echo, Hamilton) | Ensure precise, reproducible compound serial dilution to minimize technical noise sources. |

| QCPlots R Package / scipy.optimize | For fitting standard 4PL models, providing initial parameter estimates for GP mean function. |

| GPy (Python) or brms (R with Stan) | Software libraries implementing flexible GP models with heteroscedastic likelihoods. |

Workflow & Results Interpretation

The following diagram illustrates the comparative workflow between standard and GP-based analysis.

Interpreting GP Output:

- The posterior mean of f(x) is the best estimate of the true dose-response curve.

- The posterior mean of σ²(x) quantifies the estimated experimental noise at any concentration.

- The posterior predictive intervals provide valid "confidence bands" for new observations, correctly widening in regions of high inferred noise.

Table 3: Comparison of Fitting Methods on Simulated Data

| Metric | Standard 4PL (Homoscedastic) | Heteroscedastic GP | True Value |

|---|---|---|---|

| Estimated log10(IC50) | 1.15 (± 0.12) | 1.03 (± 0.18) | 1.00 |

| 95% CI Width for log10(IC50) | 0.47 | 0.71 | N/A |

| Mean Abs Error at Plateaus | 8.7% | 2.1% | N/A |

| Model Evidence (Log-Likelihood) | -142.5 | -121.2 | N/A |

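The GP's log10(IC50) estimate (1.03) lies closer to the true value (1.00) than the standard 4PL estimate (1.15), and its wider 95% CI (0.71 vs. 0.47) reflects the more realistic, heteroscedastic noise model. The higher log-likelihood (-121.2 vs. -142.5) favors the GP model, although a raw log-likelihood does not by itself penalize the GP's additional flexibility.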

Key Signaling Pathways in Targeted Therapies

Modeling IC50 curves is frequently applied to drugs targeting oncogenic signaling pathways. Understanding the pathway context aids in interpreting curve shape (e.g., Hill slope).

In conclusion, framing IC50 modeling within a heteroscedastic GP regression paradigm provides a rigorous statistical foundation for uncertainty quantification in early drug discovery. This approach directly addresses the limitations of standard curve fitting, yielding more reliable potency estimates and informing robust go/no-go decisions. This application forms a cornerstone for extending GP methods to more complex scenarios, such as modeling synergy in combination therapies or longitudinal cell response.

This whitepaper details the application of Bayesian Optimization (BO), underpinned by Gaussian Process (GP) regression, for dual-objective dose-finding in early-phase clinical trials. This work is a core component of a broader thesis investigating GP models for quantifying uncertainty in dose-response relationships. The primary challenge in Phase I/II trials is to jointly optimize the dose for both safety (the Phase I toxicity endpoint) and efficacy (the Phase II response endpoint), a problem naturally framed as balancing exploration and exploitation, which is the core strength of BO.

Core Methodological Framework

Bayesian Optimization for dose escalation employs a GP as a probabilistic surrogate model for the unknown dose-outcome functions. A utility function, combining the predicted probability of efficacy and toxicity, guides the sequential dose assignment for the next patient cohort.

Key Components:

- Surrogate Model: A Gaussian Process prior is placed over the latent dose-response and dose-toxicity surfaces.

- Acquisition Function: A carefully designed function (e.g., Expected Utility, Probability of Success) quantifies the desirability of each untried dose, balancing the need to learn the models (explore) and to treat patients at optimal doses (exploit).

- Sequential Design: Given data from the first n patients, the acquisition function is optimized to recommend dose d_{n+1} for the next patient/cohort.

Experimental Protocols & Quantitative Data

Standard BO Dose-Finding Protocol

- Prior Specification: Elicit prior distributions for GP hyperparameters (length-scales, variances) and define the dose-efficacy and dose-toxicity GP mean functions.

- Dose Space Definition: Establish a continuous or finely discretized dose range [d_min, d_max].

- Initialization: Treat a small initial cohort at a safe starting dose (often d_min).

- Iteration Loop:

  a. Model Fitting: Update the joint GP posterior for efficacy (f_E(d)) and toxicity (f_T(d)) given all observed binary or continuous outcomes.

  b. Utility Calculation: Compute the posterior distribution of a utility function U(d) = g(p_E(d), p_T(d)), where p_E(d) and p_T(d) denote the probabilities of efficacy and toxicity at dose d.

  c. Dose Selection: Choose the next dose d* = argmax_d E[U(d) | Data].

  d. Cohort Treatment: Administer d* to the next patient cohort.

  e. Outcome Observation: Assess efficacy and toxicity outcomes after the observation window.

- Stopping: The trial stops after a maximum sample size or if the optimal dose is identified with sufficient precision (e.g., posterior credible interval width below a threshold).
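The utility calculation and dose selection steps of the iteration loop can be illustrated as follows. This sketch assumes that posterior samples of the latent functions f_E and f_T are already available on a dose grid (here they are random stand-ins, not draws from a fitted model), uses a logistic link to obtain probabilities, and uses a hypothetical linear utility U = p_E - w * p_T. The protocol leaves g unspecified, so every one of these choices is an assumption.

```python
import numpy as np

def expected_utility(f_eff_samples, f_tox_samples, tox_weight=1.0):
    """Monte Carlo estimate of E[U(d) | Data] for each dose on the grid.

    Both inputs have shape (n_samples, n_doses) and hold latent GP draws.
    """
    p_eff = 1.0 / (1.0 + np.exp(-f_eff_samples))   # logistic link (assumed)
    p_tox = 1.0 / (1.0 + np.exp(-f_tox_samples))
    utility = p_eff - tox_weight * p_tox           # hypothetical utility g
    return utility.mean(axis=0)

def select_next_dose(doses, f_eff_samples, f_tox_samples, tox_weight=1.0):
    """Return d* = argmax_d E[U(d) | Data] and the expected-utility curve."""
    eu = expected_utility(f_eff_samples, f_tox_samples, tox_weight)
    return doses[int(np.argmax(eu))], eu

# Stand-in posterior draws on a normalized dose grid (illustration only).
rng = np.random.default_rng(1)
doses = np.linspace(0.0, 1.0, 11)
f_eff = 4.0 * (doses - 0.4) + 0.3 * rng.normal(size=(500, doses.size))
f_tox = 8.0 * (doses - 0.8) + 0.3 * rng.normal(size=(500, doses.size))
next_dose, eu_curve = select_next_dose(doses, f_eff, f_tox)
```

In a real design, an explicit safety rule (for example, excluding doses whose posterior probability of exceeding the target toxicity rate is too high) would normally be applied before taking the argmax.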

Quantitative Performance Comparison

The following table summarizes simulated operating characteristics of BO dose-finding designs compared to traditional model-based designs (e.g., CRM, BOIN) in a common Phase I/II scenario (sample size=60, target toxicity ≤0.3, goal to maximize efficacy).

Table 1: Simulated Performance of Dose-Finding Designs

| Design | Correct Selection % (Optimal Dose) | Patients Treated at Optimal Dose | Average Overdose Rate (>Target Tox) | Average Sample Size |

|---|---|---|---|---|

| BO-GP Utility | 78.5 | 24.1 | 0.09 | 60.0 |

| BOIN-ET | 72.3 | 22.8 | 0.11 | 60.0 |

| CRM-Based | 70.1 | 21.5 | 0.15 | 60.0 |

| 3+3 (Phase I only) | N/A | N/A | 0.05 | 24.5 (avg.) |

Data Source: Aggregate results from recent simulation studies (Thall & Cook, 2020; Liu & Johnson, 2022). BO-GP demonstrates superior identification of the optimal therapeutic dose.

Visualizing the Workflow and Logic

[Figure: Bayesian Optimization Dose-Finding Workflow]

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Toolkit for Implementing BO Dose-Finding

| Item / Solution | Function in the Research Process |

|---|---|

| Probabilistic Programming Language (e.g., Stan, Pyro, GPyTorch) | Enables flexible specification and efficient posterior sampling of the joint GP-efficacy-toxicity model. |

| Clinical Trial Simulation Framework (e.g., R dfpk, boinet) | Provides validated environments for simulating virtual patient cohorts and testing BO design operating characteristics. |

| Utility Function Library | Pre-coded utility functions (e.g., scaled linear, desirability index) for combining efficacy and toxicity predictions. |

| Dose-Response Data Standards (CDISC) | Standardized format (SDTM/ADaM) for historical and trial data, crucial for building informative priors. |

| High-Performance Computing (HPC) Cluster | Facilitates real-time posterior computation and dose recommendation during trial execution via parallel MCMC chains. |

| Safety Monitoring Dashboard | Real-time visualization tool for the evolving GP posterior, predicted utility, and cohort safety summaries. |

Within Gaussian Process (GP) regression for dose-response uncertainty research, visualizing results is not merely illustrative but analytically critical. This guide details the technical implementation and interpretation of three core visualization components: the mean prediction, the confidence band (or credible interval), and the acquisition function. These elements form the foundation for decision-making in Bayesian optimization, particularly in drug development where efficiently identifying optimal compound doses is paramount.

Theoretical Framework

A Gaussian Process defines a prior over functions, fully specified by a mean function ( m(\mathbf{x}) ) and a covariance (kernel) function ( k(\mathbf{x}, \mathbf{x}') ). Given observed data ( \mathcal{D} = \{(\mathbf{x}_i, y_i)\}_{i=1}^n ), the posterior predictive distribution at a new test point ( \mathbf{x}_* ) is Gaussian: [ f(\mathbf{x}_*) \mid \mathcal{D} \sim \mathcal{N}(\mu(\mathbf{x}_*), \sigma^2(\mathbf{x}_*)) ] where:

- Mean Prediction ( \mu(\mathbf{x}_*) ): The expected value of the response.

- Predictive Variance ( \sigma^2(\mathbf{x}_*) ): Quantifies uncertainty.

- Confidence Band: Typically ( \mu(\mathbf{x}_*) \pm 2\sigma(\mathbf{x}_*) ), representing a ~95% credible interval.

The Acquisition Function ( \alpha(\mathbf{x}) ) guides sequential experimentation by balancing exploration (high uncertainty) and exploitation (promising mean prediction).
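For reference, the posterior mean and variance have standard closed forms when the prior mean is zero and the observation noise is Gaussian with variance ( \sigma_n^2 ); both conditions are assumptions made here for the sake of a compact statement. With ( K_{ij} = k(\mathbf{x}_i, \mathbf{x}_j) ) and ( \mathbf{k}_* = [k(\mathbf{x}_1, \mathbf{x}_*), \dots, k(\mathbf{x}_n, \mathbf{x}_*)]^T ):

```latex
\mu(\mathbf{x}_*) = \mathbf{k}_*^T (K + \sigma_n^2 I)^{-1} \mathbf{y},
\qquad
\sigma^2(\mathbf{x}_*) = k(\mathbf{x}_*, \mathbf{x}_*) - \mathbf{k}_*^T (K + \sigma_n^2 I)^{-1} \mathbf{k}_*
```

The variance here describes the latent function ( f ); adding ( \sigma_n^2 ) gives the predictive variance for a new noisy observation.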

Core Visualization Components & Quantitative Comparison

Table 1: Core Components of a GP Visualization for Dose-Response

| Component | Mathematical Expression | Visual Representation | Primary Role in Research |

|---|---|---|---|

| Mean Prediction | ( \mu(\mathbf{x}_*) = \mathbf{k}_*^T (K + \sigma_n^2 I)^{-1} \mathbf{y} ) | Solid line (e.g., blue) | Estimates the underlying response function (e.g., efficacy vs. dose). |

| Confidence Band | ( \mu(\mathbf{x}_*) \pm \lambda \sqrt{\sigma^2(\mathbf{x}_*)} ) | Shaded region around mean (e.g., light blue) | Quantifies model uncertainty; width indicates regions needing more data. |

| Acquisition Function | e.g., Expected Improvement: ( \alpha_{EI}(\mathbf{x}) = \mathbb{E}[\max(f(\mathbf{x}) - f(\mathbf{x}^+), 0)] ) | Separate axis, line or bar plot (e.g., green) | Computes the utility of evaluating a dose; peaks indicate proposed next experiments. |

Table 2: Common Acquisition Functions in Dose-Response Optimization

| Function Name | Formula | Key Property | Best For |

|---|---|---|---|

| Probability of Improvement (PI) | ( \alpha_{PI}(\mathbf{x}) = \Phi\left(\frac{\mu(\mathbf{x}) - f(\mathbf{x}^+)}{\sigma(\mathbf{x})}\right) ) | Exploitative; seeks immediate gains. | Refining near a suspected optimum. |

| Expected Improvement (EI) | ( \alpha_{EI}(\mathbf{x}) = (\mu(\mathbf{x}) - f(\mathbf{x}^+))\Phi(Z) + \sigma(\mathbf{x})\phi(Z) ), with ( Z = \frac{\mu(\mathbf{x}) - f(\mathbf{x}^+)}{\sigma(\mathbf{x})} ) | Balanced trade-off. | General-purpose global optimization. |

| Upper Confidence Bound (UCB) | ( \alpha_{UCB}(\mathbf{x}) = \mu(\mathbf{x}) + \kappa \sigma(\mathbf{x}) ) | Explicit exploration parameter ( \kappa ). | Hyperparameter-controlled exploration. |

| Predictive Entropy Search | Based on expected reduction in entropy of the optimum. | Information-theoretic. | Maximizing information gain per experiment. |
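The three closed-form acquisition functions in Table 2 translate directly into code. The sketch below assumes a maximization problem, takes the GP posterior mean and standard deviation as given arrays, and uses f_best for the best observed value f(x⁺). The function names, the small floor on sigma, and the example numbers are illustrative choices rather than part of the source.

```python
import math
import numpy as np

def _norm_pdf(z):
    """Standard normal density."""
    return np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)

def _norm_cdf(z):
    """Standard normal CDF via the error function (avoids a SciPy dependency)."""
    erf = np.vectorize(math.erf)
    return 0.5 * (1.0 + erf(z / math.sqrt(2.0)))

def probability_of_improvement(mu, sigma, f_best):
    sigma = np.maximum(sigma, 1e-12)     # guard against division by zero
    return _norm_cdf((mu - f_best) / sigma)

def expected_improvement(mu, sigma, f_best):
    sigma = np.maximum(sigma, 1e-12)
    z = (mu - f_best) / sigma
    return (mu - f_best) * _norm_cdf(z) + sigma * _norm_pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    return mu + kappa * sigma

# Example posterior summaries at three candidate doses (made-up numbers).
mu = np.array([0.2, 0.5, 0.4])
sigma = np.array([0.3, 0.05, 0.2])
ei = expected_improvement(mu, sigma, f_best=0.5)
next_index = int(np.argmax(ei))
```

Comparing the three functions on the same posterior is a quick way to see how PI favors points near the incumbent while UCB with a large κ favors uncertain regions.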

Experimental Protocol for a Benchmark Dose-Response Study

Protocol: Bayesian Optimization of In Vitro Compound Efficacy

Objective: Identify the half-maximal inhibitory concentration (IC50) of a novel kinase inhibitor with minimal experimental wells.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Initial Design: Seed the GP model with 4-6 dose-response points from a broad logarithmic range (e.g., 1 nM to 100 µM). Measure percent inhibition in triplicate.

- Model Fitting: Fit a GP with a Matérn 5/2 kernel to the mean response at each dose. Optimize hyperparameters (length scale, signal variance, noise variance) via maximum marginal likelihood.

- Visualization & Decision:

- Generate a plot with dose (log10 scale) on the x-axis.

- Plot the GP mean prediction as a solid line.

- Shade the 95% confidence band (mean ± 2 SD).

- On a secondary y-axis, plot the Expected Improvement (EI) acquisition function.

- Iterative Loop:

- Select the next dose point at the maximum of the acquisition function.

- Perform the wet-lab experiment at this dose.

- Augment the data set and refit the GP model.

- Repeat steps 3-4 until convergence (e.g., EI < threshold or uncertainty at optimum is below target).

- Analysis: Extract the estimated IC50 from the final GP mean curve (the dose at which μ(x) = 50%). Report the corresponding credible interval from the posterior.
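The final analysis step can be sketched as follows: the IC50 is read off as the log-dose where a curve crosses 50% inhibition, and an interval is obtained by repeating this for each posterior function draw. The draws used here are synthetic sigmoids standing in for samples from a fitted GP, and the helper names and the percentile-based interval are assumptions rather than the protocol's prescribed method.

```python
import numpy as np

def crossing_point(log_dose, curve, level=50.0):
    """First log-dose where `curve` crosses `level` (linear interpolation), else NaN."""
    diff = np.asarray(curve, dtype=float) - level
    change = np.where(np.sign(diff[:-1]) != np.sign(diff[1:]))[0]
    if change.size == 0:
        return np.nan                    # curve never reaches the level on this grid
    i = change[0]
    x0, x1 = log_dose[i], log_dose[i + 1]
    y0, y1 = diff[i], diff[i + 1]
    return x0 - y0 * (x1 - x0) / (y1 - y0)

def ic50_summary(log_dose, mean_curve, curve_samples, level=50.0):
    """Point estimate from the mean curve and a 95% interval from posterior draws."""
    point = crossing_point(log_dose, mean_curve, level)
    draws = np.array([crossing_point(log_dose, s, level) for s in curve_samples])
    lo, hi = np.nanpercentile(draws, [2.5, 97.5])
    return point, (lo, hi)

# Synthetic stand-ins for posterior draws of percent inhibition vs. log10 dose.
log_dose = np.linspace(-3.0, 3.0, 61)
centers = np.array([0.9, 1.0, 1.1])      # hypothetical log10(IC50) of each draw
samples = 100.0 / (1.0 + 10.0 ** (centers[:, None] - log_dose[None, :]))
point, (lo, hi) = ic50_summary(log_dose, samples.mean(axis=0), samples)
```

Draws that never cross 50% on the grid yield NaN and are ignored by the percentile step; a large share of such draws is itself a sign that the dose range does not bracket the IC50.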

Visualization of the Bayesian Optimization Workflow

[Figure: Bayesian Optimization Loop for Dose Finding]

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for GP-Guided Dose-Response

| Item/Reagent | Function in the Experimental Protocol |

|---|---|

| Cell-Based Assay Kit (e.g., CellTiter-Glo) | Quantifies cell viability or cytotoxicity; generates the continuous response variable (e.g., % inhibition) for GP regression. |

| Compound Dilution Series | The independent variable (dose). Prepared in log-scale increments to ensure efficient exploration of the response surface. |

| Positive/Negative Control Compounds | Validates assay performance and provides biological reference points for normalizing GP model outputs. |

| Automated Liquid Handler | Enforces precise, reproducible compound dispensing across plates and iterative rounds of experimentation. |

| Statistical Software (Python/R with GPy/GPflow/Stan) | Implements GP model fitting, hyperparameter optimization, and generation of predictions/visualizations. |

| Microplate Reader | Measures the assay endpoint signal (e.g., luminescence), converting biological effect into quantitative data for the GP. |

Overcoming Challenges: Optimizing GP Models for Robust and Scalable Dose-Response Analysis