AI in Drug Discovery: Transforming Pharmaceutical R&D with Machine Learning & Generative Models

Abstract

This article provides a comprehensive overview of artificial intelligence's transformative role in modern drug discovery. Targeted at researchers and drug development professionals, it explores the foundational concepts of AI/ML in biomedicine, details cutting-edge methodologies from virtual screening to generative chemistry, addresses critical challenges in data and model validation, and evaluates AI's performance against traditional methods. The analysis synthesizes current trends, practical implementation strategies, and the future trajectory of AI-driven pharmaceutical innovation.

AI in Pharma 101: Understanding the Core Concepts and Evolution of Machine Learning for Drug Discovery

The integration of Artificial Intelligence (AI) into drug discovery represents a paradigm shift from serendipity and high-throughput brute force to a predictive, data-driven science. This section details concrete protocols and workflows rather than theoretical promise. At the core is an iterative cycle of in silico prediction followed by in vitro/in vivo validation, a continuous learning loop in which experimental data refine the AI models.

Table 1: Benchmark Performance of AI Models in Key Discovery Tasks (2023-2024)

| Discovery Task | AI Model Type | Key Metric | Reported Performance | Baseline (Non-AI) | Data Source (Example) |

|---|---|---|---|---|---|

| Virtual Screening | Graph Neural Network (GNN) | Enrichment Factor (EF₁%) | 15-35 | 5-10 (Docking) | PDBbind, CASF benchmarks |

| De Novo Molecular Design | Generative Adversarial Network (GAN) / REINFORCE | Synthetic Accessibility Score (SAS) & QED | SAS < 4.5, QED > 0.6 | Varies (Fragment-based) | GuacaMol benchmark suite |

| ADMET Prediction | Transformer / Deep Ensemble | AUC-ROC (e.g., for hERG inhibition) | 0.85-0.92 | 0.70-0.78 (QSAR) | ADMET benchmark datasets |

| Protein Structure Prediction | AlphaFold2 Variants | RMSD (Å) on difficult targets | 2-5 Å | >10 Å (Homology) | AlphaFold Server, EBI |

| Synergistic Drug Combination | Deep Learning on Cell Painting | Bliss Synergy Score Prediction Accuracy | ~80% | N/A | LINCS L1000, DrugComb |

Table 2: Comparative Analysis of AI-Driven Discovery Platforms (Select Examples)

| Platform/Provider | Primary Technology | Therapeutic Area Focus | Development Stage (Example) | Reported Time Reduction |

|---|---|---|---|---|

| Insilico Medicine (Chemistry42) | Generative RL, GNN | Oncology, Fibrosis | Phase II (ISM001-055) | Lead ID: ~12-18 months |

| Exscientia (CentaurAI) | Active Learning, Multi-parametric Optimization | Oncology, Immunology | Phase I/II (EXS-21546) | Preclinical candidate: 50% faster |

| Atomwise (AtomNet) | 3D Convolutional Neural Networks | Undisclosed/Multiple | Multiple preclinical programs | Screening billion-scale libraries |

| Recursion (RxRx3) | Phenotypic CNN on cell images | Rare disease, Oncology | Phase II (REC-2282) | High-content screen analysis: days vs. months |

Experimental Protocols

Protocol 1: End-to-End AI-Driven Hit Identification for a Novel Kinase Target

Objective: Identify novel, synthetically accessible chemical matter inhibiting Target Kinase X with favorable predicted ADMET profiles.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Target Featurization & Model Training:

- Gather 3D structure (experimental or AlphaFold2-predicted) of Target Kinase X. Prepare a curated dataset of known actives/inactives from public sources (ChEMBL, PubChem) or proprietary assays.

- Train a hybrid Graph Neural Network (GNN) and docking scoring function model. The GNN learns from 2D molecular graphs, while a simplified molecular docking provides spatial context.

- Validate model using time-split or cluster-based split to avoid data leakage. Target EF₁% > 20 on held-out test set.

- Generative Library Design & In Silico Screening:

- Employ a conditional Generative Adversarial Network (cGAN) primed with known active scaffolds and desired property filters (MW <450, cLogP <3).

- Generate a virtual library of 1,000,000 molecules. Screen this library using the trained GNN model from Step 1.

- Apply strict PAINS (Pan Assay Interference Compounds) filters and synthetic accessibility filters (e.g., using RAscore).

- Cluster top 10,000 hits by molecular fingerprint (ECFP4) and select 500 representative, diverse compounds for procurement/synthesis.

- Tiered In Vitro Validation:

- Primary Screening: Test the 500 compounds in a biochemical assay (e.g., ADP-Glo Kinase Assay) at 10 µM single dose. Prioritize compounds with >70% inhibition.

- Dose-Response & Counterscreening: Determine IC₅₀ for primary hits. Run in a counterscreen panel against related kinases (Kinase A, B, C) to assess selectivity. Prioritize compounds with >10-fold selectivity.

- Early ADMET Profiling: Subject top 20 selective hits to microsomal stability (mouse/human liver microsomes) and Caco-2 permeability assays. Use LC-MS/MS for quantification.

- AI Model Refinement (The Learning Loop):

- Incorporate experimental results (IC₅₀, stability data) back into the training dataset.

- Retrain the AI model (Step 1) with this new, higher-quality data to improve its predictive accuracy for the next design-make-test-analyze (DMTA) cycle.
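
The EF₁% target used for model validation in this protocol can be made concrete with a short calculation. The sketch below is a minimal illustration in plain Python, assuming that higher scores mean "predicted more active" and that labels are 1 for actives and 0 for inactives; the function name `enrichment_factor` and the toy data are hypothetical and not part of any specific toolkit.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """Hit rate in the top-scored fraction divided by the hit rate overall."""
    if len(scores) != len(labels) or not scores:
        raise ValueError("scores and labels must be non-empty and of equal length")
    n_total = len(scores)
    n_actives = sum(labels)
    if n_actives == 0:
        raise ValueError("at least one active is required")
    n_top = max(1, int(round(fraction * n_total)))
    # Rank compounds by descending model score.
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    actives_in_top = sum(label for _, label in ranked[:n_top])
    return (actives_in_top / n_top) / (n_actives / n_total)


# Toy held-out set: 1,000 compounds, 20 actives, 5 of them ranked in the top 10.
labels = [1] * 5 + [0] * 5 + [1] * 15 + [0] * 975
scores = [float(len(labels) - i) for i in range(len(labels))]
ef1 = enrichment_factor(scores, labels, fraction=0.01)
print(f"EF1% = {ef1:.1f}")  # EF1% = 25.0
```

In this toy case the top 1% contains actives at 25 times the background rate, which would clear the EF₁% > 20 threshold stated above.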

Protocol 2: AI-Enhanced Analysis of High-Content Phenotypic Screening Data

Objective: Identify compounds inducing a desired phenotypic signature (e.g., tumor cell cytostasis without apoptosis) from high-content imaging.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Experimental Setup & Imaging:

- Seed target cancer cells (e.g., U2OS) in 384-well plates. Treat with a library of 5,000 compounds (plus controls) for 48 hours at 5 µM.

- Stain cells with multiplexed dyes: Hoechst 33342 (nucleus), CellEvent Caspase-3/7 (apoptosis), MitoTracker (mitochondria), and Phalloidin (actin).

- Acquire images using a high-content confocal imager (e.g., ImageXpress Pico) with a 20x objective, capturing 9 fields per well.

- AI-Powered Image Analysis:

- Use a pre-trained convolutional neural network (CNN), such as a ResNet-50, for single-cell segmentation and feature extraction. Transfer learning can be applied by fine-tuning the last layers on a manually annotated subset of your specific images.

- Extract ~1,000 morphological and intensity features (e.g., nuclear texture, cytoplasmic area, puncta count) per single cell.

- Aggregate features per well, creating a rich phenotypic profile ("fingerprint") for each compound treatment.

- Phenotypic Clustering & Hit Prioritization:

- Apply an unsupervised learning algorithm (e.g., UMAP for dimensionality reduction followed by HDBSCAN clustering) to the compound phenotypic fingerprints.

- Identify clusters of compounds that recapitulate the phenotype of known reference drugs (e.g., cytostatic vs. cytotoxic controls).

- Prioritize hits from the "desired phenotype" cluster that are chemically distinct from the reference tools and have favorable in silico property predictions.
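
The per-well aggregation and the comparison against reference phenotypes can be sketched in a few lines. This is a deliberately simplified stand-in: it uses a feature-wise median and cosine similarity to reference profiles instead of the UMAP and HDBSCAN steps named above, and it assumes features are already normalized to vehicle controls. All names and numbers are hypothetical.

```python
import math
from statistics import median


def well_profile(cells):
    """Collapse single-cell feature vectors into one per-well profile (feature-wise median)."""
    return [median(values) for values in zip(*cells)]


def cosine_similarity(a, b):
    """Cosine of the angle between two profiles; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Three cells from one compound-treated well, three normalized features each.
cells = [[2.0, -1.0, 0.1], [2.2, -0.8, 0.0], [1.8, -1.2, 0.2]]
profile = well_profile(cells)

# Hypothetical reference profiles from control compounds.
cytostatic_ref = [2.0, -1.0, 0.0]
cytotoxic_ref = [-1.0, 2.0, 3.0]
sim_static = cosine_similarity(profile, cytostatic_ref)
sim_toxic = cosine_similarity(profile, cytotoxic_ref)
```

A compound whose profile is close to the cytostatic reference and far from the cytotoxic one would be a candidate for the "desired phenotype" group; a real analysis would work with hundreds of features and many replicate wells.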

Visualizations

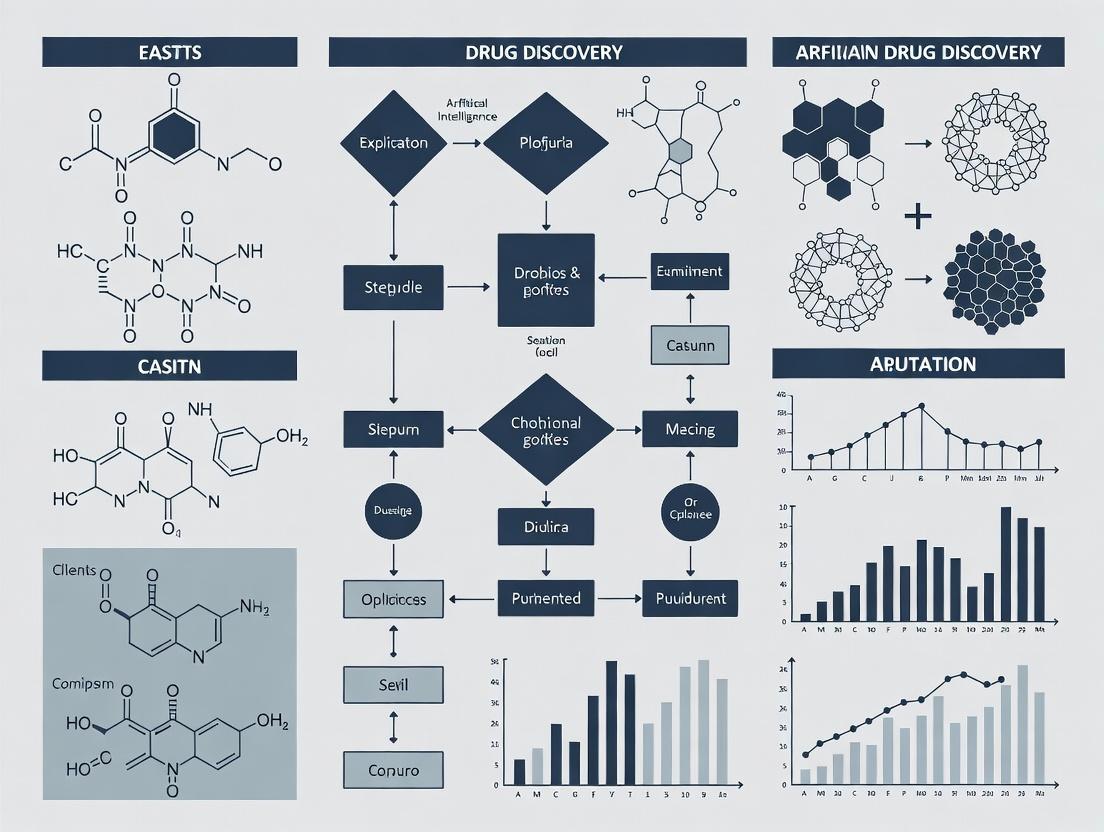

Diagram 1: AI-Driven Drug Discovery Core Workflow

Diagram 2: AI-Augmented Phenotypic Screening Analysis

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Provider (Example) | Function in AI-Driven Workflow |

|---|---|---|

| AlphaFold2 Protein Structure Database | EMBL-EBI / DeepMind | Provides high-accuracy predicted 3D protein structures for targets lacking experimental data, enabling structure-based AI design. |

| Enamine REAL Space Library | Enamine | Ultra-large (30B+ compounds) make-on-demand virtual library for in silico screening with tractable synthetic routes. |

| ADMET Predictor Software | Simulations Plus | Provides high-quality in silico ADMET property predictions (PK, toxicity) for training AI models or filtering candidates. |

| Cell Painting Kit | Thermo Fisher Scientific | Standardized multiplex fluorescent dye set for high-content imaging, generating rich, AI-analyzable phenotypic data. |

| Cerebral Organoid Culture System | STEMCELL Technologies | Complex in vitro disease models that generate multi-parametric data for AI analysis of compound effects. |

| DEL (DNA-Encoded Library) Screening Service | X-Chem, DyNAbind | Generates massive experimental binding data (billions of compounds) to train or validate AI affinity prediction models. |

| Cloud-based ML Platform (Vertex AI, AWS SageMaker) | Google Cloud, AWS | Scalable infrastructure for training and deploying large AI models without on-premise computational limits. |

| RDKit Open-Source Cheminformatics | Open Source | Fundamental Python toolkit for molecular manipulation, descriptor calculation, and integration into AI pipelines. |

The integration of computation into chemistry has fundamentally transformed the process of drug discovery. This evolution, now culminating in artificial intelligence (AI) and machine learning (ML), represents a continuum from physics-based modeling to data-driven prediction.

Table 1: Key Eras in the Evolution of Computational Chemistry to AI/ML

| Era (Approx.) | Dominant Paradigm | Key Methodologies | Typical Application in Drug Discovery |

|---|---|---|---|

| 1970s-1980s | Molecular Mechanics | Force Fields (e.g., AMBER, CHARMM), Energy Minimization | Conformational analysis, small molecule docking prep |

| 1990s-2000s | Quantum Chemistry | Semi-empirical, DFT, ab initio methods (e.g., Gaussian) | Reaction mechanism study, ligand electronic properties |

| 2000s-2010s | Molecular Simulation | Molecular Dynamics (MD), Monte Carlo, Free Energy Perturbation (FEP) | Binding affinity prediction, protein-ligand dynamics |

| 2010s-Present | AI/ML-Driven Design | Deep Learning (CNNs, GNNs, Transformers), Generative Models | De novo molecule generation, property prediction, binding affinity scoring |

Core Methodologies: From Physics-Based to Data-Driven

Foundational Computational Chemistry Protocols

Protocol 1: Molecular Dynamics (MD) Simulation for Protein-Ligand Complex Stability

- System Preparation: Obtain a protein-ligand complex PDB file. Use a tool (e.g., pdb2gmx in GROMACS) to assign force field parameters (e.g., CHARMM36), then solvate the system in a cubic water box (e.g., TIP3P model). Add ions to neutralize system charge.

- Energy Minimization: Perform steepest descent minimization (max 5000 steps) to remove steric clashes and bad contacts.

- Equilibration:

- NVT Ensemble: Run a 100 ps simulation at 300 K using a thermostat (e.g., V-rescale) to stabilize temperature.

- NPT Ensemble: Run a 100 ps simulation at 1 bar using a barostat (e.g., Parrinello-Rahman) to stabilize pressure and density.

- Production MD: Run an unrestrained simulation (e.g., 100 ns) with a 2-fs integration time step. Save coordinates every 10 ps.

- Analysis: Calculate Root Mean Square Deviation (RMSD) of the protein backbone and ligand heavy atoms relative to the starting structure to assess stability. Compute the Radius of Gyration (Rg) for the protein. Analyze specific protein-ligand interactions (H-bonds, hydrophobic contacts) over time using tools like gmx rms, gmx gyrate, and gmx hbond.
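
Two pieces of this protocol lend themselves to a quick numerical check: the size of the production run implied by the stated settings, and the RMSD metric itself. The sketch below is illustrative only. It assumes frames are already superimposed on the reference (GROMACS tools normally perform a least-squares fit first), and the function name `rmsd` and the coordinates are hypothetical.

```python
import math


def rmsd(coords_a, coords_b):
    """RMSD between two equally sized sets of (x, y, z) coordinates, without fitting."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate sets must be non-empty and of equal length")
    squared = sum(
        (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
        for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b)
    )
    return math.sqrt(squared / len(coords_a))


# Bookkeeping for the production run described above.
production_ns = 100      # simulation length
timestep_fs = 2          # integration time step
save_interval_ps = 10    # coordinate output interval

n_steps = production_ns * 1_000_000 // timestep_fs     # 1 ns = 1,000,000 fs
n_frames = production_ns * 1_000 // save_interval_ps   # 1 ns = 1,000 ps

# Two atoms, each displaced by 1 unit along z relative to the reference.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
frame = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
deviation = rmsd(reference, frame)
```

With these settings the run takes 50,000,000 integration steps and writes 10,000 frames, which is useful for estimating storage and analysis time before launching the job.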

Protocol 2: Density Functional Theory (DFT) Calculation for Ligand Reactivity

- Ligand Input: Generate a 3D molecular structure file (.mol2 or .sdf) of the ligand in its proposed bioactive conformation.

- Geometry Optimization: Use software (e.g., Gaussian 16) to perform a preliminary geometry optimization at a lower theory level (e.g., B3LYP/6-31G(d)).

- Single-Point Energy Calculation: Perform a higher-accuracy single-point energy calculation on the optimized geometry using a larger basis set (e.g., B3LYP/6-311+G(d,p)) and accounting for solvation effects (e.g., via the SMD model).

- Analysis: Extract Frontier Molecular Orbitals (Highest Occupied Molecular Orbital - HOMO and Lowest Unoccupied Molecular Orbital - LUMO) to assess chemical reactivity and potential sites for nucleophilic/electrophilic attack. Calculate molecular electrostatic potential (MEP) surfaces.
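
Once the HOMO and LUMO energies are extracted, a few conceptual-DFT descriptors follow directly from them. The sketch below assumes the frontier orbital energies approximate the ionization potential and electron affinity (a Koopmans-type approximation) and uses the convention in which hardness is half the gap; the function name and the example energies are hypothetical.

```python
def reactivity_descriptors(e_homo_ev, e_lumo_ev):
    """Global reactivity descriptors (in eV) from frontier orbital energies."""
    gap = e_lumo_ev - e_homo_ev
    if gap <= 0:
        raise ValueError("LUMO energy must lie above HOMO energy")
    chemical_potential = (e_homo_ev + e_lumo_ev) / 2.0
    hardness = gap / 2.0
    # Electrophilicity index: omega = mu^2 / (2 * eta)
    electrophilicity = chemical_potential ** 2 / (2.0 * hardness)
    return {
        "gap": gap,
        "chemical_potential": chemical_potential,
        "hardness": hardness,
        "electrophilicity": electrophilicity,
    }


# Illustrative orbital energies, not taken from a real calculation.
descriptors = reactivity_descriptors(e_homo_ev=-6.0, e_lumo_ev=-2.0)
```

A smaller gap and a larger electrophilicity index generally point to a more reactive ligand, which is one way to flag candidates for closer inspection of their MEP surfaces.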

Modern AI/ML Protocols for Drug Discovery

Protocol 3: Training a Graph Neural Network (GNN) for Property Prediction

- Dataset Curation: Assemble a dataset of molecules with associated experimental properties (e.g., IC50, solubility). Standardize molecules (e.g., using RDKit) and split into training (70%), validation (15%), and test (15%) sets. Represent each molecule as a graph with atoms as nodes (features: atom type, degree, hybridization) and bonds as edges (features: bond type, conjugation).

- Model Architecture: Implement a GNN using a framework like PyTorch Geometric. The network should consist of:

- 3-5 Message Passing Layers: To aggregate neighborhood information (e.g., GraphConv, GIN layers).

- Global Pooling Layer: To generate a single graph-level representation (e.g., global mean pooling).

- Fully Connected Regressor Head: To map the pooled representation to the predicted property (e.g., a single continuous value for pIC50).

- Training: Use Mean Squared Error (MSE) loss and the Adam optimizer. Train for a fixed number of epochs (e.g., 200), evaluating performance on the validation set after each epoch. Implement early stopping to prevent overfitting.

- Evaluation: Apply the final model to the held-out test set. Report standard metrics: Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and the coefficient of determination (R²).
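
The three architectural stages listed above (message passing, global pooling, regressor head) can be illustrated without any deep learning framework. The sketch below is a toy in plain Python with no learned weights in the message-passing step, so it is not a substitute for PyTorch Geometric layers such as GIN; the feature encoding, weights, and function names are all hypothetical.

```python
def message_passing_layer(node_feats, edges):
    """One mean-aggregation step: each atom averages itself and its bonded neighbours."""
    neighbours = {i: [i] for i in range(len(node_feats))}
    for a, b in edges:
        neighbours[a].append(b)
        neighbours[b].append(a)
    n_dim = len(node_feats[0])
    return [
        [sum(node_feats[j][d] for j in neighbours[i]) / len(neighbours[i]) for d in range(n_dim)]
        for i in range(len(node_feats))
    ]


def global_mean_pool(node_feats):
    """Average node vectors into a single graph-level vector."""
    n_dim = len(node_feats[0])
    return [sum(node[d] for node in node_feats) / len(node_feats) for d in range(n_dim)]


def linear_head(graph_vec, weights, bias):
    """Map the pooled vector to one continuous value (e.g., a pIC50 estimate)."""
    return sum(w * x for w, x in zip(weights, graph_vec)) + bias


# Heavy atoms of ethanol (C-C-O); features are [is_carbon, is_oxygen].
nodes = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = [(0, 1), (1, 2)]

hidden = message_passing_layer(nodes, bonds)
pooled = global_mean_pool(hidden)
prediction = linear_head(pooled, weights=[1.0, 2.0], bias=0.5)
```

After one step the central carbon already carries information about the oxygen; stacking 3-5 such layers, each with a learned transformation, is what lets a trained GNN encode larger substructures.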

Protocol 4: Structure-Based Virtual Screening with a Deep Learning Scoring Function

- Input Preparation: Prepare a library of 3D small molecule structures in a suitable format (e.g., SDF). Prepare the target protein structure (cleaned, protonated, with defined binding site).

- Pose Generation: For each ligand, generate multiple docking poses within the protein's binding site using a traditional or geometric method (e.g., with SMINA or RDKit).

- Feature Extraction: For each protein-ligand complex (pose), extract spatial and topological features. Common approaches include constructing a 3D voxel grid (for CNN-based models) or a heterogeneous graph (for GNN-based models) representing atomic interactions.

- Model Inference: Feed the extracted features into a pre-trained deep learning scoring function (e.g., Pafnucy, DeepDockF). The model outputs a score or predicted binding affinity (ΔG or pKd) for each pose.

- Ranking & Selection: Rank all ligands by their best-pose predicted affinity. The top-ranking compounds (e.g., top 100) are selected for in vitro experimental validation.
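
The final ranking step reduces many scored poses to one value per ligand. A minimal sketch follows, assuming the scoring function returns a pKd-like value where higher is better (for ΔG in kcal/mol the comparison would be reversed); the ligand identifiers and scores are invented for illustration.

```python
def rank_by_best_pose(pose_scores, top_n=100):
    """Keep the best-scoring pose per ligand, then rank ligands in descending order."""
    best = {}
    for ligand_id, predicted_pkd in pose_scores:
        if ligand_id not in best or predicted_pkd > best[ligand_id]:
            best[ligand_id] = predicted_pkd
    ranked = sorted(best.items(), key=lambda item: item[1], reverse=True)
    return ranked[:top_n]


# (ligand, predicted pKd) for several poses of three hypothetical ligands.
poses = [
    ("lig_A", 6.1),
    ("lig_A", 7.4),
    ("lig_B", 8.0),
    ("lig_C", 5.2),
    ("lig_B", 6.9),
]
selected = rank_by_best_pose(poses, top_n=2)
print(selected)  # [('lig_B', 8.0), ('lig_A', 7.4)]
```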

Visualization of Key Workflows

AI-Enhanced Virtual Screening Workflow

GNN for Molecular Property Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Resources for AI/ML-Driven Computational Chemistry

| Category | Item/Software | Primary Function in Drug Discovery |

|---|---|---|

| Cheminformatics | RDKit, Open Babel | Open-source toolkits for molecule manipulation, fingerprint generation, descriptor calculation, and file format conversion. Essential for dataset preparation. |

| Simulation Engines | GROMACS, AMBER, OpenMM | High-performance molecular dynamics software for simulating the physical movements of atoms and molecules, crucial for understanding dynamics and stability. |

| Quantum Chemistry | Gaussian, ORCA, PSI4 | Software for performing ab initio, DFT, and other quantum mechanical calculations to study electronic structure, reactivity, and spectroscopy. |

| Docking & Screening | AutoDock Vina, Glide, FRED | Tools for predicting how small molecules bind to a protein target, enabling structure-based virtual screening of large compound libraries. |

| ML/DL Frameworks | PyTorch, TensorFlow, PyTorch Geometric | Core libraries for building, training, and deploying custom machine learning and deep learning models, including specialized architectures for molecules (GNNs). |

| Generative Models | REINVENT, MolGPT, DiffDock | Specialized AI models for generating novel molecular structures de novo or predicting how a ligand binds (pose prediction) without traditional search algorithms. |

| Data & Benchmarks | ChEMBL, PDBbind, MoleculeNet | Publicly accessible, curated databases of bioactive molecules, protein-ligand complexes, and benchmark datasets for training and testing predictive models. |

| Cloud & HPC | AWS/GCP/Azure, SLURM | Cloud computing platforms and High-Performance Computing cluster managers essential for scaling computationally intensive simulations and model training. |

Application Notes

In the domain of drug discovery, the distinct yet interconnected subfields of Artificial Intelligence (AI) provide a powerful, multi-layered toolkit for accelerating research. Machine Learning (ML) forms the foundational layer for predictive modeling from complex datasets. Deep Learning (DL), a subset of ML, excels at extracting hierarchical features from high-dimensional data like molecular structures and medical images. Generative AI builds upon these to create novel molecular entities with desired properties. The synergy of these subfields is transforming the pipeline from target identification to preclinical candidate optimization.

Quantitative Performance Comparison of AI Subfields in Drug Discovery Tasks

Table 1: Summary of recent benchmark performance metrics for key AI applications in drug discovery (2023-2024).

| AI Subfield | Primary Application | Typical Dataset | Reported Metric | Performance Range | Key Model/Architecture |

|---|---|---|---|---|---|

| Machine Learning | Quantitative Structure-Activity Relationship (QSAR) | Curated chemical + bioactivity data (e.g., ChEMBL) | Mean Squared Error (MSE) / ROC-AUC | MSE: 0.3-0.8; AUC: 0.75-0.90 | Random Forest, Gradient Boosting, SVM |

| Deep Learning | Protein-Ligand Binding Affinity Prediction | PDBbind, DUD-E | Root Mean Square Error (RMSE) / Enrichment Factor (EF) | RMSE: 1.0-1.5 (pKd/pKi); EF@1%: 10-30 | 3D Convolutional Neural Networks, Graph Neural Networks |

| Generative AI | De Novo Molecule Generation | ZINC, PubChem | Validity, Uniqueness, Novelty, Drug-likeness (QED) | Validity >95%, Novelty >80%, QED >0.6 | Variational Autoencoders, Generative Adversarial Networks, Transformers |

| Deep Learning | High-Content Image Analysis for Phenotypic Screening | Cell painting images | Z'-factor, Hit Rate | Z'>0.5, Hit Rate increase 2-5x vs. control | Convolutional Neural Networks (ResNet, U-Net) |

| Generative AI | Scaffold Hopping & Lead Optimization | Patent-derived chemical series | Synthesizability (SA), Docking Score Improvement | SA Score 2-4, ΔDocking Score: -2.0 to -4.0 kcal/mol | Reinforcement Learning, Flow Networks |

Experimental Protocols

Protocol 1: ML-Based QSAR Model for Virtual Screening

Objective: To build a predictive classifier for identifying active compounds against a novel kinase target using historical bioassay data.

Materials: Bioactivity data (IC50) from PubChem AID, RDKit, Scikit-learn, Python environment.

- Data Curation: Extract SMILES strings and IC50 values (active: IC50 < 1 µM, inactive: IC50 > 10 µM). Apply a standardizer (RDKit) for canonicalization and desalting.

- Feature Engineering: Compute 200 molecular descriptors (e.g., MW, LogP, topological indices) and 2048-bit Morgan fingerprints (radius=2) for each compound.

- Dataset Splitting: Split data 70:15:15 into training, validation, and hold-out test sets using stratified sampling based on activity.

- Model Training: Train a Gradient Boosting Classifier (n_estimators=500, max_depth=5) on the training set using descriptors and fingerprints as concatenated features.

- Validation & Hyperparameter Tuning: Optimize hyperparameters via 5-fold cross-validation on the training set, evaluating using ROC-AUC on the validation set.

- Testing & Deployment: Evaluate final model on the hold-out test set. Report ROC-AUC, precision-recall curve, and enrichment factor at 1% of the screened database. Use model to score an external virtual library.
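
The labelling thresholds and the stratified 70:15:15 split in this protocol can be sketched with the standard library alone. The example assumes that compounds falling between the two IC50 thresholds are dropped as ambiguous, which the protocol implies but does not state; the function names and compound identifiers are hypothetical, and in practice scikit-learn's splitting utilities would normally be used.

```python
import random


def label_activity(ic50_um):
    """1 = active (< 1 uM), 0 = inactive (> 10 uM), None = ambiguous (assumed dropped)."""
    if ic50_um < 1.0:
        return 1
    if ic50_um > 10.0:
        return 0
    return None


def stratified_split(records, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split (compound_id, label) records per class so each subset keeps the class ratio."""
    rng = random.Random(seed)
    train, val, test = [], [], []
    for cls in (0, 1):
        group = [r for r in records if r[1] == cls]
        rng.shuffle(group)
        n_train = round(fractions[0] * len(group))
        n_val = round(fractions[1] * len(group))
        train.extend(group[:n_train])
        val.extend(group[n_train:n_train + n_val])
        test.extend(group[n_train + n_val:])
    return train, val, test


# 20 actives, 20 ambiguous, 60 inactives (IC50 values in uM).
raw = (
    [(f"cpd_{i}", 0.5) for i in range(20)]
    + [(f"cpd_{i}", 5.0) for i in range(20, 40)]
    + [(f"cpd_{i}", 50.0) for i in range(40, 100)]
)
labelled = [(cid, label_activity(ic50)) for cid, ic50 in raw]
labelled = [record for record in labelled if record[1] is not None]
train, val, test = stratified_split(labelled)
```

Here 80 compounds survive labelling and are split 56/12/12, with the 1:3 active-to-inactive ratio preserved in every subset.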

Protocol 2: DL-Based Protein-Ligand Binding Affinity Prediction

Objective: To predict binding affinity (pKd) using a 3D convolutional neural network (CNN) from protein-ligand complex structures.

Materials: PDBbind refined set (v2023), DeepChem or PyTorch, MDock, GPU cluster.

- Data Preparation: Download and preprocess protein-ligand complexes. Remove water molecules, add hydrogens, and assign partial charges using a force field (e.g., AMBER).

- 3D Voxelization: For each complex, define a 20 Å cubic box centered on the ligand. Discretize the box into 1 Å³ voxels. Create separate channels for atomic density features (e.g., protein atom type, ligand atom type, electrostatic potential).

- Model Architecture: Implement a 3D CNN with three consecutive convolutional layers (filters: 32, 64, 128; kernel: 3³) each followed by ReLU and max-pooling. Flatten output and connect to two fully connected layers (neurons: 256, 128) ending in a linear output node.

- Training: Train the model using Mean Squared Error (MSE) loss and the Adam optimizer (lr=0.001) for 100 epochs. Use an 80:20 train/validation split.

- Evaluation: Assess model performance on the core set of PDBbind using standard metrics: Pearson's R, RMSE, and MAE. Compare results against classical scoring functions (e.g., Vina, PLP).
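
Before implementing the architecture it is worth checking how the 20-voxel grid shrinks through three convolution and pooling blocks. The arithmetic below is a sketch assuming stride-1 convolutions and 2x max-pooling with floor rounding, neither of which the protocol specifies; the function name is hypothetical.

```python
def conv_pool_sizes(grid=20, n_blocks=3, kernel=3, padding=0, pool=2):
    """Edge length of the feature map after each conv + max-pool block."""
    sizes = [grid]
    size = grid
    for _ in range(n_blocks):
        size = size + 2 * padding - kernel + 1  # stride-1 convolution
        size = size // pool                     # max-pooling with floor rounding
        sizes.append(size)
    return sizes


unpadded = conv_pool_sizes(padding=0)  # [20, 9, 3, 0]
padded = conv_pool_sizes(padding=1)    # [20, 10, 5, 2]

# Input width of the first fully connected layer with 128 filters in the last block.
flattened = 128 * padded[-1] ** 3      # 1024
```

Under these assumptions, unpadded 3³ kernels leave no voxels after the third block, so padding of one voxel per side (or a larger box) is needed; with padding the flattened vector has 1,024 values feeding the 256-neuron layer.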

Protocol 3: Generative AI for De Novo Lead Design with Reinforcement Learning

Objective: To generate novel, synthesizable molecules with high predicted activity against a target and desirable ADMET properties.

Materials: REINVENT v3.0 framework, pre-trained RNN as Prior, target-specific predictive Activity model (Protocol 1), ADMET prediction models.

- Agent Configuration: Initialize the RNN Agent with the Prior network weights. Define a scoring function (S) as the weighted sum: S = w1 × Activity + w2 × QED - w3 × SA - w4 × PAINS, where PAINS is the Pan-Assay Interference alert term.

- Policy Update: The Agent generates a batch of molecules (e.g., 1000). The scoring function evaluates each. The loss is computed as the negative likelihood of generating molecules weighted by their score, guiding the Agent's policy (weights) update via augmented likelihood.

- Exploration vs. Exploitation: Incorporate a diversity filter to encourage exploration of new scaffolds, penalizing the generation of molecules too similar to previously high-scoring ones.

- Iterative Generation: Run for 500 epochs. Periodically sample the generated molecules and inspect the top scorers. Tune the weights (w1-w4) of the scoring function based on cheminformatics analysis of outputs.

- Output & Validation: Select top 50 unique, novel molecules for in silico validation via molecular docking and ADMET prediction. Propose top 10-20 with best profiles for synthesis and in vitro testing.
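
The scoring function and the augmented-likelihood update can be written out numerically. The sketch below follows the commonly described REINVENT formulation, in which the Prior log-likelihood is shifted by a scaled score and the Agent is trained to match it with a squared-error loss. The weights, the scaling factor `sigma`, and all input values are hypothetical, and this is not the framework's actual code.

```python
def composite_score(activity, qed, sa, pains_alert, weights=(1.0, 0.5, 0.1, 1.0)):
    """Weighted sum: reward activity and QED, penalize SA score and PAINS alerts."""
    w1, w2, w3, w4 = weights
    return w1 * activity + w2 * qed - w3 * sa - w4 * pains_alert


def augmented_likelihood_loss(prior_logp, agent_logp, score, sigma=10.0):
    """Squared gap between the score-augmented Prior log-likelihood and the Agent's."""
    augmented = prior_logp + sigma * score
    return (augmented - agent_logp) ** 2


# One generated molecule: predicted activity 0.8, QED 0.7, SA score 3.0, no PAINS alert.
score = composite_score(activity=0.8, qed=0.7, sa=3.0, pains_alert=0)

# Log-likelihoods of the molecule's SMILES under the Prior and the current Agent.
loss = augmented_likelihood_loss(prior_logp=-40.0, agent_logp=-38.0, score=score)
```

Minimizing this loss over a batch pulls the Agent toward molecules that score well while keeping it anchored to the Prior's notion of valid chemistry; a larger `sigma` gives the score more influence relative to the Prior.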

Visualizations

Title: AI Subfield Synergy in Drug Discovery

Title: DL-Based Binding Affinity Prediction Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential computational tools and resources for AI-driven drug discovery projects.

| Item Name | Type/Category | Primary Function in AI Drug Discovery | Example Vendor/Provider |

|---|---|---|---|

| RDKit | Open-Source Cheminformatics Library | Enables molecular representation (SMILES, fingerprints), descriptor calculation, and basic molecular operations. | RDKit Community |

| PyTorch / TensorFlow | Deep Learning Framework | Provides the core environment for building, training, and deploying custom neural network models (CNNs, GNNs, etc.). | Meta / Google |

| DeepChem | DL Library for Life Sciences | Offers curated molecular datasets, pre-built model architectures (GraphConv, MPNN), and specialized layers for chemical data. | DeepChem Community |

| Schrödinger Suite | Commercial Computational Platform | Integrates ML/DL tools (e.g., Canvas) with physics-based simulation (FEP+, docking) for end-to-end discovery. | Schrödinger |

| REINVENT | Open-Source Generative AI Framework | A specialized platform for applying reinforcement learning to de novo molecular design with customizable scoring. | Janssen / GitHub |

| OMOP | Commercial AI-Powered Discovery Platform | Provides cloud-based generative chemistry, virtual screening, and property prediction in a unified interface. | Optibrium |

| ZINC / ChEMBL | Public Chemical Database | Sources of millions of purchasable compounds (ZINC) and annotated bioactivity data (ChEMBL) for training and testing models. | UCSF / EMBL-EBI |

| GPU Computing Instance | Hardware/Cloud Resource | Accelerates the training of deep learning models, particularly for 3D-CNNs and large generative models. | AWS, GCP, Azure, NVIDIA |

In artificial intelligence for drug discovery, the integration of multimodal datasets is paramount. Chemical structures, genomic sequences, proteomic profiles, and clinical outcomes form the core data types that fuel predictive models. This integration enables the transition from target identification to patient stratification, creating a more efficient and personalized discovery pipeline. This application note details protocols and methodologies for the curation, integration, and analysis of these four core data types within an AI/ML framework.

Table 1: Core Data Types in AI-Driven Drug Discovery

| Data Type | Primary Sources | Key Format(s) | Typical Volume per Sample | Primary Use in AI/ML |

|---|---|---|---|---|

| Chemical | PubChem, ChEMBL, ZINC, in-house libraries | SMILES, SDF, InChI | 1 KB - 10 KB (per compound) | QSAR, virtual screening, de novo molecular design |

| Genomic | TCGA, GEO, dbGaP, UK Biobank | FASTA, FASTQ, VCF, BAM | 100 GB - 200 GB (whole genome) | Target identification, biomarker discovery, patient stratification |

| Proteomic | PRIDE, CPTAC, Human Protein Atlas | mzML, mzIdentML, PSM reports | 1 GB - 50 GB (MS-based profiling) | Target engagement, pathway analysis, pharmacodynamic biomarkers |

| Clinical | EHRs, clinical trial repositories (ClinicalTrials.gov), real-world data | CDISC, OMOP, HL7 FHIR | Variable, often structured tables | Outcome prediction, trial simulation, safety signal detection |

Application Notes & Protocols

Protocol 1: Multimodal Data Integration for Target Identification

Objective: To integrate genomic variant data with proteomic expression profiles for novel oncology target identification.

Workflow:

- Data Acquisition:

- Download somatic mutation data (VCF files) and RNA-seq expression data (count matrices) for a cohort of interest (e.g., TCGA-LUAD) using the Genomic Data Commons Data Transfer Tool.

- Download matched proteomic (RPPA or MS) data from the Clinical Proteomic Tumor Analysis Consortium (CPTAC) portal.

- Preprocessing & Harmonization:

- Genomic: Filter variants using bcftools for missense mutations with a population frequency <0.01 in gnomAD. Annotate with Ensembl VEP.

- Transcriptomic: Process RNA-seq counts using a standard DESeq2 pipeline for normalization and variance stabilization.

- Proteomic: Normalize protein abundance values using median centering and log2 transformation.

- Harmonization: Align all data types by patient/sample ID using a common identifier (e.g., TCGA barcode). Store in an integrated data structure (e.g., AnnData for Python or MultiAssayExperiment for R).

- AI/ML Analysis:

- Train a multi-input neural network. One branch takes a mutated gene set (binary vector), and another branch takes the corresponding protein expression vector.

- The model aims to predict a clinical phenotype (e.g., pathological stage or survival risk group).

- Perform SHAP analysis on the trained model to identify key driving features at the gene-protein interface.
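
The proteomic normalization and the sample-ID harmonization steps can be sketched without any bioinformatics libraries. The example assumes median centering is applied across proteins within one sample and that patients are matched on the first three fields of the TCGA barcode; the barcodes, abundance values, and function names are invented for illustration.

```python
import math
from statistics import median


def log2_median_center(abundances):
    """Log2-transform protein abundances, then subtract the sample median."""
    logged = {protein: math.log2(value) for protein, value in abundances.items()}
    centre = median(logged.values())
    return {protein: value - centre for protein, value in logged.items()}


def patient_id(barcode):
    """Project-TSS-participant prefix of a TCGA barcode, e.g. 'TCGA-05-4244'."""
    return "-".join(barcode.split("-")[:3])


def align_by_patient(*tables):
    """Sorted patient IDs present in every table (tables are dicts keyed by barcode)."""
    shared = set.intersection(*(set(map(patient_id, table)) for table in tables))
    return sorted(shared)


normalized = log2_median_center({"EGFR": 8.0, "KRAS": 2.0, "TP53": 4.0})

# Hypothetical barcodes; sample and aliquot suffixes differ between data types.
mutations = {"TCGA-05-4244-01A": ["KRAS"], "TCGA-05-4249-01A": ["EGFR"]}
proteins = {"TCGA-05-4244-01A-01R": normalized, "TCGA-05-4250-01A": normalized}
shared_patients = align_by_patient(mutations, proteins)
```

Only patients with both data types remain after alignment, which is the set the multi-input network can actually train on; container formats such as AnnData or MultiAssayExperiment perform this bookkeeping at scale.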

The Scientist's Toolkit: Key Reagents & Resources

| Item | Function | Example/Provider |

|---|---|---|

| GDC Data Transfer Tool | Secure, high-performance download of TCGA/genomic data. | NIH Genomic Data Commons |

| Ensembl VEP | Annotates genomic variants with functional consequences. | EMBL-EBI |

| DESeq2 R Package | Differential expression analysis of count-based sequencing data. | Bioconductor |

| CPTAC Data Portal | Source for harmonized, high-quality cancer proteomic datasets. | National Cancer Institute |

| PyTorch/TensorFlow | Frameworks for building and training multi-input deep learning models. | Open Source |

| SHAP Library | Explains output of machine learning models using game theory. | GitHub: shap |

AI Target Identification Workflow

Protocol 2: Building a Chemical-Proteomic Interaction Predictor

Objective: To develop a model that predicts protein target profiles for small molecules using chemical structure and primary amino acid sequence.

Methodology:

- Dataset Curation:

- Extract compound-protein interaction pairs from BindingDB or STITCH. Include both active and inactive pairs for robust learning.

- Represent compounds as Morgan fingerprints (radius 2, 2048 bits) or pre-trained molecular graph embeddings.

- Represent proteins as either k-mer frequency vectors or pre-trained sequence embeddings from models like ProtBERT.

- Model Architecture & Training:

- Implement a two-branch (dual-encoder) neural network, with one encoder per input type:

- Branch 1: Processes compound fingerprint/embedding.

- Branch 2: Processes protein sequence embedding.

- The outputs of each branch are concatenated and fed through fully connected layers to predict a binding affinity score (e.g., pKi, pIC50).

- Use mean squared error loss for regression or binary cross-entropy for classification.

- Validate rigorously using time-split or cold-start (new scaffold) splits.

- Experimental Validation Protocol (In Silico to In Vitro):

- Step 1: Use the trained model to screen a virtual library (e.g., Enamine REAL) against a target of interest.

- Step 2: Select top 50 predicted hits and cluster by chemical scaffold. Choose 2-3 representatives per major cluster.

- Step 3: In vitro assay: Perform a fluorescence polarization (FP) or AlphaScreen assay.

- Prepare a 10 mM stock solution of each compound in DMSO.

- Serially dilute compounds in assay buffer (e.g., PBS, 0.01% Tween-20, 1% DMSO).

- In a 384-well plate, mix purified target protein (at Kd concentration), fluorescent tracer, and compound dilution.

- Incubate for 1 hour at room temperature, protected from light.

- Read polarization (milliP units) on a plate reader (e.g., PerkinElmer EnVision).

- Fit dose-response curves to calculate IC50 values.
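
Of the two protein representations mentioned in the dataset-curation step, the k-mer frequency vector is simple enough to show in full. The sketch below assumes the 20 standard amino acids and k = 2, which yields a fixed 400-dimensional input for the protein branch; the sequence is a made-up fragment and the function name is hypothetical.

```python
from collections import Counter
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def kmer_frequencies(sequence, k=2):
    """Fixed-length vector of k-mer frequencies over the standard amino-acid alphabet."""
    vocabulary = ["".join(p) for p in product(AMINO_ACIDS, repeat=k)]
    counts = Counter(sequence[i:i + k] for i in range(len(sequence) - k + 1))
    # Only k-mers made of standard residues contribute to the total.
    total = sum(counts[kmer] for kmer in vocabulary)
    return [counts[kmer] / total if total else 0.0 for kmer in vocabulary]


# The fragment "MKKLA" contains four 2-mers: MK, KK, KL, LA.
vector = kmer_frequencies("MKKLA", k=2)
```

Each of the four observed 2-mers receives a frequency of 0.25 and every other entry is zero. Pre-trained embeddings such as ProtBERT replace this sparse vector with a dense, learned one, at the cost of running a large model per protein.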

The Scientist's Toolkit: Key Reagents & Resources

| Item | Function | Example/Provider |

|---|---|---|

| BindingDB | Public database of measured protein-ligand binding affinities. | University of California |

| RDKit | Open-source cheminformatics toolkit for fingerprint generation. | GitHub: rdkit |

| ProtBERT | Pre-trained transformer model for protein sequence representation. | Hugging Face Model Hub |

| Enamine REAL Database | Commercially available, synthesizable virtual compound library. | Enamine Ltd |

| AlphaScreen Kit | Bead-based homogeneous assay for detecting protein-protein/compound interactions. | Revvity (PerkinElmer) |

| 384-Well Assay Plates | Low-volume plates for high-throughput biochemical screening. | Corning, Greiner Bio-One |

Chemical-Proteomic Interaction Model

Data Integration & AI Framework Architecture

AI Drug Discovery Data Integration Hub

The systematic leveraging of chemical, genomic, proteomic, and clinical datasets through standardized protocols and integrated AI models is accelerating the drug discovery cycle. The workflows and application notes detailed herein provide a framework for researchers to build robust, translatable models that bridge the gap between in silico predictions and tangible clinical outcomes. Future advancements will depend on increased data accessibility, improved multimodal representation learning, and closer collaboration between computational and experimental scientists.

Application Notes

The contemporary AI-driven drug discovery ecosystem is a dynamic interplay between specialized entities, each contributing unique capabilities. The integration of high-throughput experimental biology with advanced AI/ML computational platforms is accelerating the identification and optimization of novel therapeutic candidates.

Stakeholder Roles & Quantitative Impact

Table 1: Representative Stakeholder Models and Key Metrics

| Stakeholder Type | Examples | Core AI/Technology Platform | Key Collaboration/Deal (Example) | Reported Impact / Metric |

|---|---|---|---|---|

| AI-First Biotechs | Recursion Pharmaceuticals, Exscientia, Insilico Medicine | Phenomics & CV; Automated Design; Generative Chemistry | Recursion + Bayer ($1.5B+); Exscientia + Sanofi ($5.2B+) | Recursion: >125 TB of biological images; Insilico: First AI-designed drug to Phase II in ~30 months. |

| Pharma Giants | Pfizer, Roche (Genentech), AstraZeneca, Merck | Internal AI units (e.g., Merck's AICC); Strategic partnerships & licensing. | Pfizer with multiple AI partners; AstraZeneca + BenevolentAI | Roche: 40+ AI projects in pipeline; AZ: AI identified new target for CKD in 6 months vs. traditional timeline. |

| Specialized Biotechs | Relay Therapeutics, Atomwise | Dynamics-based drug design; CNN for molecular screening. | Relay + Genentech; Atomwise + multiple pharmas. | Relay: RLY-2608 (PI3Kα mutant inhibitor) advanced to clinic using computationally guided design. |

| Tech & Cloud Providers | Google (Isomorphic Labs), NVIDIA, AWS | AlphaFold, BioNeMo, Cloud compute & storage. | Isomorphic Labs + Lilly, Novartis ($3B potential); NVIDIA collaborations across biopharma. | AlphaFold DB: >200 million protein structure predictions; NVIDIA BioNeMo: Accelerates training of biomolecular models. |

Table 2: Comparative Analysis of AI-Driven Discovery Pipelines

| Pipeline Stage | Traditional Timeline (Est.) | AI-Accelerated Timeline (Reported) | Key Enabling Technologies & Stakeholders |

|---|---|---|---|

| Target Identification | 1-3 years | 3-12 months | Omics data integration, causal ML (BenevolentAI), knowledge graphs (Pfizer). |

| Lead Discovery | 1-5 years | 6-18 months | Generative molecular design (Exscientia, Insilico), virtual high-throughput screening (Atomwise). |

| Preclinical Candidate | 1-2 years | 3-9 months | Predictive ADMET models (Cyclica), automated synthesis planning (IBM RXN). |

Key Experimental Protocols

Protocol 1: High-Content Phenotypic Screening with AI-Based Image Analysis (Recursion Model)

Objective: To identify compounds inducing phenotypic changes linked to disease modulation.

- Cell Culture & Plating: Seed disease-relevant cell lines (e.g., iPSC-derived neurons) in 384-well plates. Use automated liquid handlers.

- Compound Treatment: Treat with compounds from a diverse library (e.g., 10,000+ small molecules). Include positive/negative controls. Incubate for a defined period (e.g., 24-72h).

- Multiplex Staining: Fix cells and stain for multiple cellular components (nuclei, cytoskeleton, organelles) using fluorescent dyes/antibodies.

- Automated Imaging: Acquire high-resolution images (4-6 channels/well) using automated confocal microscopes (e.g., PerkinElmer Opera).

- Image Processing & Feature Extraction: Use CellProfiler or proprietary software to segment cells and extract ~5000 morphological features (size, shape, intensity, texture) per cell.

- Phenotypic Clustering & Compound Scoring: Apply unsupervised ML (e.g., UMAP, t-SNE) to cluster similar phenotypes. Use supervised models to score compounds based on similarity to desired phenotypic "fingerprint."

- Hit Triangulation: Correlate phenotypic hits with genetic perturbation data (CRISPR) and omics data to infer mechanism of action (MoA).
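
The compound-scoring step can be illustrated with a simple similarity measure. The sketch below assumes each compound has already been reduced to a vector of z-scored morphological features relative to negative controls, and ranks compounds by cosine similarity to a desired phenotypic fingerprint. The feature values and compound names are hypothetical, and a production pipeline would typically use a trained supervised model instead.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)

def score_compounds(profiles, target_fingerprint):
    """Rank compounds by similarity of their profile to the target fingerprint."""
    scores = {name: cosine_similarity(vec, target_fingerprint)
              for name, vec in profiles.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Hypothetical profiles: z-scored features relative to DMSO control wells
target_fingerprint = [1.0, -0.5, 2.0, 0.0]
profiles = {
    "cmpd_A": [0.9, -0.4, 1.8, 0.1],    # close to the desired phenotype
    "cmpd_B": [-1.0, 0.6, -2.1, 0.0],   # opposite phenotype
    "cmpd_C": [0.1, 0.0, 0.2, 1.5],     # unrelated phenotype
}
ranking = score_compounds(profiles, target_fingerprint)
for name, score in ranking:
    print(f"{name}: {score:+.3f}")
```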

Protocol 2: AI-Driven De Novo Molecule Design and In Silico Validation (Exscientia/Insilico Model)

Objective: To generate novel, synthesizable compounds with optimized properties for a defined target.

- Target Featurization: Encode the target (protein structure or sequence) using 3D convolutional neural networks (CNNs) or graph neural networks (GNNs).

- Generative Chemical Design: Employ generative models (e.g., Variational Autoencoders - VAEs, Generative Adversarial Networks - GANs, or Reinforcement Learning) trained on known chemical libraries (e.g., ChEMBL, ZINC) to propose novel molecular structures.

- In Silico Screening: a. Docking & Affinity Prediction: Screen generated molecules using molecular docking (e.g., AutoDock Vina, Glide) and/or ML-based affinity predictors. b. Property Prediction: Use QSAR models to predict ADMET properties (solubility, permeability, CYP inhibition, etc.).

- Multi-Objective Optimization: Apply Pareto optimization or RL to balance potency, selectivity, synthesizability, and predicted ADMET.

- Synthesis Planning: Feed selected virtual hits into retrosynthesis AI (e.g., IBM RXN, Synthia) to generate feasible synthesis routes.

- In Vitro Experimental Validation: Synthesize top candidates (typically 50-150) and test in primary biochemical/cellular assays (see Protocol 3).
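
The multi-objective optimization step above mentions Pareto optimization. As a minimal sketch, assuming every objective has been scaled so that higher is better, the non-dominated set can be found with a pairwise dominance check. The molecule names and scores are hypothetical.

```python
def dominates(a, b):
    """True if a is at least as good as b on every objective and strictly
    better on at least one (all objectives are maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(candidates):
    """Return the names of candidates that no other candidate dominates."""
    front = []
    for name, objs in candidates.items():
        dominated = any(
            dominates(other, objs)
            for other_name, other in candidates.items()
            if other_name != name
        )
        if not dominated:
            front.append(name)
    return front

# Hypothetical predictions: (potency pIC50, selectivity, synthesizability, ADMET score)
candidates = {
    "mol_1": (8.2, 0.7, 0.6, 0.8),
    "mol_2": (7.5, 0.9, 0.8, 0.7),
    "mol_3": (7.4, 0.6, 0.5, 0.6),  # worse than mol_1 on every objective
    "mol_4": (8.9, 0.4, 0.3, 0.5),  # most potent, weak elsewhere
}
print(pareto_front(candidates))
```

The pairwise check is quadratic in the number of candidates, which is acceptable for shortlists of hundreds to a few thousand molecules.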

Protocol 3: Validation of AI-Discovered Hits in Biochemical/Cellular Assays

Objective: To experimentally confirm the activity of AI-predicted compounds.

- Biochemical Activity Assay (e.g., Kinase Inhibition): a. Prepare assay buffer, kinase enzyme, ATP, and fluorescent/luminescent substrate. b. Dispense compounds (serial dilution) and controls into 384-well assay plates. c. Initiate reaction by adding enzyme/substrate/ATP mix. d. Incubate and measure signal (e.g., TR-FRET or luminescence). e. Calculate IC50 using nonlinear regression (4-parameter logistic fit).

- Cell-Based Potency Assay (e.g., Reporter Gene or Viability): a. Culture engineered cell lines (e.g., with luciferase reporter under pathway control). b. Seed cells, treat with compound dilutions, and incubate 24-72h. c. Measure luminescence/fluorescence or use CellTiter-Glo for viability. d. Calculate EC50/IC50 values.

- Selectivity & Early Safety Panel: Screen top candidates (IC50 < 100 nM) against related target families (e.g., kinome panel) and in cytotoxicity assays on primary cells.
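
The IC50 calculation in the biochemical assay step relies on a four-parameter logistic (4PL) model. The sketch below is a dependency-free illustration that fixes the top and bottom plateaus from plate controls (an assumption of this example) and grid-searches the IC50 and Hill slope. In practice a nonlinear least-squares routine such as `scipy.optimize.curve_fit` would usually fit all four parameters. The data are synthetic and noise-free.

```python
import math

def four_pl(conc, bottom, top, ic50, hill):
    """Four-parameter logistic: response falls from top to bottom as conc rises."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)

def fit_ic50(concs, responses, bottom=0.0, top=100.0):
    """Grid search over log10(IC50) and Hill slope, minimizing squared error.

    bottom/top are held fixed (taken from plate controls in this sketch).
    """
    lo, hi = math.log10(min(concs)), math.log10(max(concs))
    best = (float("inf"), None, None)
    for i in range(201):                 # 201 log-spaced IC50 candidates
        ic50 = 10 ** (lo + (hi - lo) * i / 200)
        for j in range(5, 31):           # Hill slopes 0.5 to 3.0 in steps of 0.1
            hill = j / 10
            sse = sum(
                (four_pl(c, bottom, top, ic50, hill) - r) ** 2
                for c, r in zip(concs, responses)
            )
            if sse < best[0]:
                best = (sse, ic50, hill)
    return best[1], best[2]

# Synthetic % activity remaining at each inhibitor concentration (nM)
concs_nm = [1, 3, 10, 30, 100, 300, 1000, 3000]
activity = [four_pl(c, 0.0, 100.0, 50.0, 1.0) for c in concs_nm]
ic50_nm, hill = fit_ic50(concs_nm, activity)
print(f"IC50 ~ {ic50_nm:.1f} nM, Hill slope ~ {hill:.1f}")
```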

Visualizations

AI Drug Discovery Pipeline with Feedback

Stakeholder Collaboration Map

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for AI-Integrated Drug Discovery Experiments

| Item / Reagent | Vendor Examples | Function in AI-Driven Workflow |

|---|---|---|

| iPSC-Derived Cell Lines | Fujifilm Cellular Dynamics, Axol Bioscience | Provide physiologically relevant, disease-modeling cells for high-content phenotypic screening (Recursion-style). |

| Cell Painting Dye Kits | Thermo Fisher, Sigma-Aldrich | Standardized fluorescent dye sets for multiplex cellular staining, enabling rich, quantitative morphological feature extraction. |

| Tag-lite Binding Assay Kits | Cisbio Bioassays | Homogeneous, time-resolved FRET assays for rapid, high-throughput binding affinity measurements of AI-designed compounds. |

| Kinase-Glo / ADP-Glo Assays | Promega | Luminescent assays for measuring kinase activity and inhibition, key for validating AI-predicted inhibitors. |

| Ready-to-Use Compound Libraries | Selleckchem, MedChemExpress | Curated, diverse small-molecule libraries for experimental screening to train or validate AI models. |

| Cloud Compute Credits (AWS, GCP) | Amazon Web Services, Google Cloud | Essential for training large AI/ML models (GNNs, Transformers) and running large-scale virtual screens. |

| Automated Liquid Handlers (e.g., Echo) | Beckman Coulter, Labcyte | Enable nanoliter-scale compound dispensing for high-throughput assay miniaturization, generating large training datasets. |

| 3D Tissue Culture Platforms | Corning, MIMETAS | Advanced in vitro models (organoids, spheroids) that generate complex data for AI model training beyond 2D cultures. |

Application Note: AI-Driven Target Identification & Prioritization

The pharmaceutical industry faces a crisis of declining returns. Quantitative analysis of recent data highlights the scale of the problem:

Table 1: Key Metrics of Declining R&D Productivity (2010-2023)

| Metric | 2010-2012 Average | 2021-2023 Average | % Change |

|---|---|---|---|

| R&D Cost per Approved Drug (USD) | $1.2B | $2.3B | +92% |

| Clinical Trial Success Rate (Phase I to Approval) | 11.4% | 6.2% | -46% |

| Average Drug Development Timeline (Years) | 10.5 | 12.1 | +15% |

| Number of Novel Drug Approvals (Annual Avg.) | 28 | 43 | +54% |

While novel drug approvals have increased, the cost and failure rate have risen disproportionately. AI applications in target identification aim to reverse this trend by improving the biological understanding and validation of novel therapeutic targets before costly experimental work begins.

AI-Enhanced Target-Disease Association Protocol

Objective: To computationally identify and prioritize novel, druggable targets for a specified complex disease (e.g., Alzheimer's Disease, NASH) using multi-modal data integration.

Materials & Workflow:

Table 2: Research Reagent Solutions for AI-Target Validation

| Item / Solution | Function in AI-Driven Workflow |

|---|---|

| Public Omics Databases (e.g., GTEx, TCGA, GEO) | Provide transcriptomic, proteomic, and genomic data for disease vs. healthy tissue comparisons. |

| Knowledge Graphs (e.g., Hetionet, SPOKE) | Structured repositories of biological relationships (gene-disease-drug) for network-based inference. |

| Pathway Analysis Suites (e.g., Metascape, Reactome) | Contextualize prioritized genes within biological pathways for mechanistic plausibility checks. |

| CRISPR Knockout Screening Data (DepMap Portal) | Offer functional genomic evidence for gene essentiality in disease-relevant cellular models. |

| In Silico Druggability Predictors (e.g., canSAR, DeepDTA) | Predict the likelihood of a protein target being amenable to small-molecule or biologic modulation. |

| Cloud Compute Platform (e.g., AWS, GCP) | Provides scalable infrastructure for running computationally intensive AI/ML models on large datasets. |

Protocol Steps:

- Data Aggregation: For the disease of interest, compile:

- Genome-Wide Association Study (GWAS) hits.

- Differential gene expression profiles from ≥5 independent studies.

- Proteomic changes from relevant tissue or fluid studies.

- Literature-derived relationships from PubMed abstracts via NLP extraction.

- Multi-Modal Integration: Use a graph neural network (GNN) or similar architecture to embed the heterogeneous data (genes, diseases, variants, compounds) into a unified knowledge graph.

- Candidate Generation: Apply algorithms (e.g., random walk with restart, matrix factorization) to the knowledge graph to generate a ranked list of genes strongly connected to the disease but with no existing approved drugs.

- Prioritization Filtering: Filter and re-rank candidates based on:

- Druggability Score (from predictive models).

- Genetic Evidence (p-value from GWAS, Mendelian randomization support).

- Essentiality (CRISPR knockout effect in disease-relevant cell lines).

- Safety Profile (Expression in vital organs, knockout phenotype in model organisms).

- Output: A final shortlist of 3-5 high-confidence, novel targets with associated biological rationale for experimental validation.
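
The candidate generation step names random walk with restart as one option. A minimal sketch on a toy undirected graph follows, assuming the disease node is the only seed and a restart probability of 0.3. The graph, gene names, and parameter values are hypothetical.

```python
def random_walk_with_restart(adj, seeds, restart=0.3, tol=1e-9, max_iter=1000):
    """Steady-state visiting probabilities for a walker that restarts at the seeds.

    adj:   dict mapping each node to a list of neighbours (undirected graph)
    seeds: set of nodes the walker restarts from (e.g., the disease node)
    """
    nodes = list(adj)
    p0 = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {n: restart * p0[n] for n in nodes}
        for n in nodes:
            # Spread the non-restart mass evenly over the node's neighbours
            share = (1.0 - restart) * p[n] / len(adj[n])
            for nb in adj[n]:
                nxt[nb] += share
        delta = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if delta < tol:
            break
    return p

# Hypothetical toy graph: a disease node linked to genes through shared evidence
adj = {
    "disease": ["GENE_A", "GENE_B"],
    "GENE_A": ["disease", "GENE_B", "GENE_C"],
    "GENE_B": ["disease", "GENE_A"],
    "GENE_C": ["GENE_A", "GENE_D"],
    "GENE_D": ["GENE_C"],
}
scores = random_walk_with_restart(adj, seeds={"disease"})
ranked_genes = sorted((g for g in scores if g != "disease"), key=scores.get, reverse=True)
print(ranked_genes)
```

Genes that already have approved drugs would be removed from `ranked_genes` before the prioritization filters are applied.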

Diagram Title: AI-Driven Target Prioritization Workflow

Application Note: AI-Accelerated Lead Compound Design

The hit-to-lead and lead optimization phases are resource-intensive bottlenecks. AI-driven de novo molecular design and property prediction can significantly compress timelines.

Table 3: Impact of AI on Early-Stage Discovery (Benchmark Studies)

| Study Parameter | Traditional HTS/CADD | AI-Enhanced Pipeline | Reported Improvement |

|---|---|---|---|

| Time to Identify Hit Series (Weeks) | 24-52 | 8-16 | ~70% reduction |

| Compounds Synthesized & Tested for Lead Opt. | 2,500-5,000 | 300-700 | ~80% reduction |

| Predictive Accuracy of ADMET Properties (R²) | 0.3-0.5 | 0.6-0.8 | +60-100% |

| Success Rate from Hit to Preclinical Candidate | 15% | 30-40% | 2-2.5x increase |

Protocol for Generative AI in De Novo Molecule Design

Objective: To generate novel, synthesizable small molecules with high predicted affinity for a defined protein target and optimal drug-like properties.

Materials & Workflow:

Table 4: Research Reagent Solutions for AI-Driven Chemistry

| Item / Solution | Function in AI-Driven Workflow |

|---|---|

| Target Structure (PDB File or AlphaFold2 Model) | Provides 3D coordinates for binding pocket definition in structure-based design. |

| Assay Data Repository (Internal HTS/published IC50 data) | Forms the ground-truth dataset for training and validating affinity prediction models. |

| Chemical Representation Toolkits (e.g., RDKit, DeepChem) | Encode molecules as SMILES strings, graphs, or fingerprints for machine learning. |

| Generative AI Platform (e.g., REINVENT, MolGPT, proprietary) | The core model architecture (VAE, GAN, Transformer, Diffusion) for molecule generation. |

| ADMET Prediction Models (e.g., QSAR, graph-based predictors) | Virtually screen generated molecules for PK/PD and toxicity endpoints. |

| Synthesis Planning Software (e.g., ASKCOS, Retro*) | Evaluates the synthetic feasibility and proposes routes for top AI-generated candidates. |

Protocol Steps:

- Problem Conditioning: Define the desired properties as a multi-parameter objective (e.g., pIC50 > 8, logP 2-4, MW <450, no PAINS alerts, high synthesizability score).

- Model Selection & Training:

- Use a pre-trained generative chemical language model (e.g., on ChEMBL).

- Fine-tune the model using transfer learning on any available proprietary data for the target or related targets.

- Implement a reinforcement learning or Bayesian optimization loop where the generator is rewarded for producing molecules that score highly on the objective function.

- Molecular Generation: Run the conditioned model to generate 50,000-100,000 virtual molecules.

- Virtual Screening & Filtering:

- Step 1: Pass all generated molecules through a fast filter (chemical rules, simple properties).

- Step 2: Screen the remaining (≈10,000) with a high-fidelity, target-specific affinity predictor (e.g., a trained graph neural network or docking simulation).

- Step 3: Subject the top 1,000 from Step 2 to a battery of in silico ADMET models.

- Final Selection & Analysis: Cluster the top 200 molecules by scaffold. Select 20-30 representative, diverse, and highly scoring candidates for in vitro synthesis and testing.
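
The filtering and final selection logic can be sketched as follows. This example assumes each generated molecule already carries predicted properties and a precomputed scaffold key. It applies the example objective from the problem conditioning step and then keeps the most potent molecule per scaffold. The `synth` score, its 0.7 cut-off, and all molecule records are hypothetical.

```python
def meets_objective(mol):
    """Check the example design criteria against predicted properties.

    'synth' is a hypothetical 0-1 synthesizability score; 0.7 is illustrative.
    """
    return (
        mol["pIC50"] > 8.0
        and 2.0 <= mol["logP"] <= 4.0
        and mol["MW"] < 450.0
        and not mol["pains_alert"]
        and mol["synth"] >= 0.7
    )

def pick_diverse(molecules, n_select):
    """Keep the most potent molecule per scaffold, then take the top n_select."""
    best = {}
    for mol in molecules:
        current = best.get(mol["scaffold"])
        if current is None or mol["pIC50"] > current["pIC50"]:
            best[mol["scaffold"]] = mol
    return sorted(best.values(), key=lambda m: m["pIC50"], reverse=True)[:n_select]

# Hypothetical generated molecules with predicted properties
molecules = [
    {"id": "m1", "pIC50": 8.6, "logP": 3.1, "MW": 412, "pains_alert": False, "synth": 0.80, "scaffold": "S1"},
    {"id": "m2", "pIC50": 8.3, "logP": 2.9, "MW": 398, "pains_alert": False, "synth": 0.90, "scaffold": "S1"},
    {"id": "m3", "pIC50": 8.1, "logP": 4.6, "MW": 430, "pains_alert": False, "synth": 0.80, "scaffold": "S2"},
    {"id": "m4", "pIC50": 8.4, "logP": 2.2, "MW": 441, "pains_alert": False, "synth": 0.75, "scaffold": "S3"},
    {"id": "m5", "pIC50": 9.0, "logP": 3.5, "MW": 470, "pains_alert": False, "synth": 0.80, "scaffold": "S4"},
]
passing = [m for m in molecules if meets_objective(m)]
selected = pick_diverse(passing, n_select=2)
print([m["id"] for m in selected])
```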

Diagram Title: AI-Driven De Novo Molecular Design Cycle

Application Note: AI in Clinical Trial Optimization

Clinical trials represent the single largest cost component (~50-60% of total R&D) and have high failure rates due to patient heterogeneity and poor design.

Table 5: AI Applications in Clinical Development: Potential Impact

| Trial Challenge | Traditional Approach | AI-Enhanced Approach | Potential Outcome |

|---|---|---|---|

| Patient Recruitment Duration | 6-18 months | 3-9 months | ~50% reduction |

| Patient Population Homogeneity | Broad inclusion criteria | Digital/biomarker-defined subgroups | Increase in treatment effect signal |

| Trial Site Selection & Activation | Historical performance | Predictive analytics of site feasibility | 20-30% faster activation |

| Adaptive Trial Design Complexity | Limited, pre-planned adaptations | Continuous, simulation-driven optimization | Reduced required sample size (10-25%) |

Protocol for AI-Augmented Patient Stratification & Endpoint Prediction

Objective: To identify digital/biomarker-based patient subgroups most likely to respond to a therapy, enabling a smaller, faster, and more precise Phase II trial.

Materials & Workflow:

Table 6: Research Reagent Solutions for Clinical Trial AI

| Item / Solution | Function in AI-Driven Workflow |

|---|---|

| Historical Clinical Trial Data (Control arm data, failed studies) | Training set for models predicting disease progression and placebo response. |

| Real-World Data (RWD) Sources (EHR, claims, registries) | Provides broader patient phenotypic data to model heterogeneity and comorbidities. |

| Multi-Omics Patient Profiles (from biopsy/liquid biopsy) | Molecular data for deep biomarker discovery beyond single-gene markers. |

| Digital Health Technologies (Wearables, mobile apps) | Generate continuous, real-world physiological and behavioral endpoints. |

| AI/ML Modeling Suite (e.g., Python scikit-learn, TensorFlow) | For building supervised (classification/regression) and unsupervised (clustering) models. |

| Clinical Trial Simulation Software | To simulate outcomes of different design and stratification strategies. |

Protocol Steps:

- Data Compilation & Feature Engineering: Aggregate data from RWD, historical trials, and multi-omics studies for the disease. Engineer features including demographics, lab values, genetic variants, transcriptomic signatures, and activity metrics from wearables.

- Unsupervised Phenotyping: Apply clustering algorithms (e.g., consensus clustering, deep autoencoders) to identify distinct patient subgroups based on the engineered features, without using outcome labels.

- Predictive Model Training: Using historical trial data where treatment outcome is known, train a model (e.g., XGBoost, neural network) to predict a patient's probability of response or magnitude of endpoint improvement.

- Subgroup Discovery & Enrichment Analysis: Apply the trained predictive model to the clusters identified in Step 2. Statistically test for clusters where the predicted response is uniformly high (enriched responders) or low (non-responders). Identify the key driving features (biomarkers) of the high-response cluster.

- Trial Simulation & Design: Propose a new trial protocol with inclusion criteria refined by the AI-identified biomarker signature. Use simulation software to power the trial, comparing the required sample size and expected effect size against a traditional design.
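
To make the sample-size comparison in the final step concrete, the sketch below uses the standard closed-form approximation for a two-arm comparison of means instead of a full trial simulation. The effect sizes and standard deviation are hypothetical and only illustrate how a larger expected effect in an enriched subgroup reduces the required enrollment.

```python
import math
from statistics import NormalDist

def n_per_arm(effect_size, sd, alpha=0.05, power=0.8):
    """Patients per arm for a two-arm comparison of means.

    Uses the two-sided z-test approximation:
    n = 2 * ((z_{1-alpha/2} + z_{power}) * sd / effect_size)^2
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_beta) * sd / effect_size) ** 2)

# Hypothetical scenario: enrichment raises the expected treatment effect
broad = n_per_arm(effect_size=2.0, sd=8.0)     # broad inclusion criteria
enriched = n_per_arm(effect_size=3.0, sd=8.0)  # biomarker-defined subgroup
print(f"Per-arm sample size: broad={broad}, enriched={enriched}")
```

A simulation-based design would additionally model dropout, screening failure in the biomarker-negative population, and uncertainty in the predicted effect size.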

Diagram Title: AI-Powered Clinical Trial Patient Stratification

From Algorithms to Pipelines: Practical AI Methods Powering Modern Drug Discovery Applications

This document provides detailed Application Notes and Protocols for the application of machine learning (ML) in predictive modeling for drug discovery. This work supports the broader thesis that artificial intelligence is a transformative technology for accelerating and de-risking pharmaceutical research. The focus here is on three interconnected pillars: Quantitative Structure-Activity Relationship (QSAR) modeling, physicochemical and ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) property prediction, and computational toxicity assessment.

Core Methodologies & Protocols

Protocol: Standardized QSAR Modeling Workflow

This protocol details the steps for building a robust QSAR model for biological activity prediction (e.g., pIC50).

Materials & Software: Python/R, RDKit or Mordred, Scikit-learn, DeepChem, Jupyter Notebook, Dataset of compounds with associated activity values.

Procedure:

- Data Curation: Collect and standardize compound structures and activity data from public sources (e.g., ChEMBL) or proprietary assays.

- Descriptor Calculation: Compute molecular descriptors (e.g., topological, constitutional, electronic) and/or fingerprints (ECFP, MACCS).

- Data Splitting: Split data into training (70%), validation (15%), and hold-out test (15%) sets using scaffold splitting to assess model generalizability.

- Feature Selection: Apply methods like Variance Threshold, Recursive Feature Elimination (RFE), or LASSO to reduce dimensionality.

- Model Training: Train algorithms (e.g., Random Forest, Gradient Boosting, Support Vector Machines, Neural Networks) on the training set.

- Hyperparameter Optimization: Use grid/random search or Bayesian optimization on the validation set.

- Model Evaluation: Assess final model on the independent test set using metrics: R², RMSE, MAE.
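
The scaffold splitting mentioned in the data splitting step can be sketched without any cheminformatics dependency, assuming a scaffold key (for example, a Bemis-Murcko scaffold SMILES computed with RDKit) has already been assigned to every compound. Whole scaffold groups are placed in a single set so that test compounds are structurally distinct from training compounds. The scaffold labels below are hypothetical.

```python
from collections import defaultdict

def scaffold_split(scaffolds, frac_train=0.7, frac_valid=0.15):
    """Assign whole scaffold groups to train/valid/test.

    scaffolds: list where scaffolds[i] is the precomputed scaffold key of compound i.
    Returns three lists of compound indices.
    """
    groups = defaultdict(list)
    for idx, scaf in enumerate(scaffolds):
        groups[scaf].append(idx)

    n = len(scaffolds)
    n_train, n_valid = round(frac_train * n), round(frac_valid * n)
    train, valid, test = [], [], []
    # Largest groups first, so rarer scaffolds tend to land in validation/test
    for members in sorted(groups.values(), key=len, reverse=True):
        if len(train) + len(members) <= n_train:
            train.extend(members)
        elif len(valid) + len(members) <= n_valid:
            valid.extend(members)
        else:
            test.extend(members)
    return train, valid, test

# Hypothetical dataset of 20 compounds across five scaffolds
scaffolds = ["A"] * 8 + ["B"] * 6 + ["C"] * 3 + ["D"] * 2 + ["E"]
train_idx, valid_idx, test_idx = scaffold_split(scaffolds)
print(len(train_idx), len(valid_idx), len(test_idx))
```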

Protocol: ADMET Property Prediction Using Graph Neural Networks (GNNs)

This protocol describes using advanced GNNs to predict complex properties directly from molecular graphs.

Materials & Software: DeepChem, PyTorch Geometric, DGL, OMOP databases, ADMET benchmark datasets.

Procedure:

- Graph Representation: Convert SMILES strings to molecular graphs with nodes (atoms) and edges (bonds). Atom and bond features are initialized.

- Model Architecture: Implement a Message Passing Neural Network (MPNN) or Attentive FP architecture.

- Training Loop: Train the GNN to learn graph representations and map them to property endpoints (e.g., solubility, permeability, hERG inhibition).

- Validation: Use time-split or structurally diverse external validation sets to estimate real-world performance.
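
To illustrate what message passing means on a molecular graph, the sketch below performs one round of sum aggregation followed by a sum readout. It deliberately omits the learned weight matrices and nonlinearities that an MPNN or Attentive FP model would apply at each step, so it shows the data flow only. The one-hot atom features are an assumption of this example.

```python
def message_passing_step(node_feats, edges):
    """One round of sum-aggregation message passing on an undirected graph.

    node_feats: dict atom_index -> feature vector (list of floats)
    edges:      list of (i, j) bonds
    Each atom's new state is its own features plus the sum of its neighbours'.
    """
    dim = len(next(iter(node_feats.values())))
    messages = {i: [0.0] * dim for i in node_feats}
    for i, j in edges:
        for k in range(dim):
            messages[i][k] += node_feats[j][k]
            messages[j][k] += node_feats[i][k]
    return {i: [h + m for h, m in zip(node_feats[i], messages[i])] for i in node_feats}

def readout(node_feats):
    """Sum-pool atom states into a single molecule-level vector."""
    dim = len(next(iter(node_feats.values())))
    return [sum(feats[k] for feats in node_feats.values()) for k in range(dim)]

# Ethanol (SMILES: CCO), heavy atoms only; features are one-hot [is_C, is_O]
atoms = {0: [1.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}
bonds = [(0, 1), (1, 2)]
h1 = message_passing_step(atoms, bonds)
mol_vec = readout(h1)
print(h1, mol_vec)
```

After one step the central carbon already encodes that it is bonded to both a carbon and an oxygen. Stacking further steps widens each atom's receptive field.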

Protocol: In Silico Toxicity Assessment with Multi-Task Learning

This protocol outlines building a model for simultaneous prediction of multiple toxicity endpoints.

Materials & Software: Tox21, ToxCast datasets, Multi-task learning frameworks.

Procedure:

- Data Assembly: Compile data from Tox21 challenge (12 assays) and other in vitro toxicity data.

- Multi-Task Model Design: Build a neural network with shared hidden layers and multiple task-specific output heads.

- Training with Imbalanced Data: Employ class weighting or focal loss to handle highly imbalanced assay data (many more negatives than positives).

- Interpretation: Apply gradient-based attribution methods (e.g., Integrated Gradients) to highlight structural features associated with toxicity predictions.
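
The imbalanced-data step can be illustrated with a binary focal loss averaged over the labeled entries of a multi-task label matrix. This sketch assumes missing assay measurements are encoded as `None` and skipped. The `gamma` and `alpha` defaults are illustrative, not recommended settings.

```python
import math

def focal_loss(p, y, gamma=2.0, alpha=0.75):
    """Binary focal loss for one prediction.

    p: predicted probability of the positive (toxic) class; y: label 0 or 1.
    alpha up-weights the rare positive class; gamma down-weights easy examples.
    """
    p = min(max(p, 1e-7), 1 - 1e-7)  # avoid log(0)
    p_t = p if y == 1 else 1 - p
    a_t = alpha if y == 1 else 1 - alpha
    return -a_t * (1 - p_t) ** gamma * math.log(p_t)

def masked_multitask_loss(preds, labels):
    """Mean focal loss over all (compound, assay) pairs that have a label."""
    total, count = 0.0, 0
    for p_row, y_row in zip(preds, labels):
        for p, y in zip(p_row, y_row):
            if y is None:  # assay not measured for this compound
                continue
            total += focal_loss(p, y)
            count += 1
    return total / count if count else 0.0

# Hypothetical predictions for 2 compounds x 2 assays, one label missing
preds = [[0.9, 0.2], [0.1, 0.7]]
labels = [[1, None], [0, 1]]
loss = masked_multitask_loss(preds, labels)
print(f"Masked multi-task focal loss: {loss:.4f}")
```

Setting `gamma=0` and `alpha=0.5` reduces the focal loss to half the ordinary binary cross-entropy, which is a convenient sanity check.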

Data Presentation

Table 1: Performance Comparison of ML Algorithms on Benchmark Datasets

| Model Type | Dataset (Endpoint) | Metric (Test Set) | Value | Advantage |

|---|---|---|---|---|

| Random Forest (RF) | Lipophilicity (LogD) | R² | 0.73 | Interpretable, robust to noise |

| Graph Convolutional Net | Tox21 (Nuclear Receptor) | AUC-ROC | 0.83 | Learns features directly from structure |

| Support Vector Machine | BBB Penetration (Binary) | Accuracy | 0.89 | Effective in high-dimensional descriptor spaces |

| Directed-Message Passing | FreeSolv (Hydration Free Energy) | RMSE (kcal/mol) | 1.12 | State-of-the-art for physicochemical properties |

| Multi-Task DNN | ADMET Core (5 properties) | Avg. Concordance | 0.76 | Efficient, leverages shared information across tasks |

Visualizations

Diagram 1: ML Model Development & Validation Workflow

Diagram 2: Multi-Task Neural Network for Toxicity Prediction

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for ML-Driven Predictive Modeling

| Item Name | Function & Application |

|---|---|

| ChEMBL Database | A manually curated database of bioactive molecules with drug-like properties. Primary source for QSAR data. |

| RDKit | Open-source cheminformatics toolkit for descriptor calculation, fingerprint generation, and molecule handling. |

| Tox21/ ToxCast Data | High-throughput screening data from US federal agencies for building and validating toxicity prediction models. |

| scikit-learn | Core Python library for classical ML algorithms (RF, SVM), feature selection, and model evaluation. |

| DeepChem / PyTorch Geometric | Libraries specifically designed for deep learning on molecular structures and graphs (GNNs). |

| Jupyter Notebook | Interactive development environment for creating reproducible analysis pipelines and sharing results. |

| Model Evaluation Suite | Custom scripts to calculate OECD-principle aligned metrics (R², RMSE, AUC, sensitivity, specificity). |

This document, framed within a broader thesis on artificial intelligence for drug discovery, details the evolution from traditional virtual screening to the current paradigm of "Virtual Screening 2.0." This new era is defined by the integration of deep learning at scale, enabling the ultra-rapid, physics-aware evaluation of billion-plus compound libraries and the generation of novel, synthetically accessible chemical matter. The convergence of high-performance computing, foundational AI models, and automated experimentation is reshaping the early discovery pipeline, significantly increasing throughput and hit quality.

AI-Enhanced Molecular Docking at Scale

Application Notes

Traditional physics-based docking (e.g., AutoDock Vina) is computationally limited to millions of compounds. AI-powered docking overcomes this limit via two approaches: 1) Surrogate Model Docking, where a machine learning model is trained to predict the docking score of new molecules, and 2) End-to-End Learning, where models directly predict the ligand binding pose from 3D protein-ligand representations (e.g., EquiBind, DiffDock). These methods are reported to accelerate screening by 100- to 10,000-fold.

Table 1: Performance Comparison of Docking Methods (Representative Data)

| Method | Type | Throughput (compounds/day)* | RMSD vs. Experimental Pose (Å) | Typical Use Case |

|---|---|---|---|---|

| AutoDock Vina | Classical Physics-Based | 10⁵ - 10⁶ | 1.0 - 2.5 | Focused Libraries, Lead Optimization |

| GNINA (CNN-Score) | AI-Scored Docking | 10⁶ - 10⁷ | 1.0 - 2.0 | Large Library Screening |

| DiffDock | Diffusion-based E2E | 10⁷ - 10⁸ | 1.5 - 3.0 | Ultra-Large & Pocket-First Screening |

| Surrogate Model (e.g., RF) | ML-Predicted Score | 10⁸ - 10⁹ | N/A (Score only) | Pre-filtering for Billion+ Libraries |

\* On a modern GPU cluster. *Highly dependent on training data quality.*

Detailed Protocol: AI-Surrogate Model Screening for a Novel Kinase Target

Objective: To screen 1.2 billion compounds from the ZINC20 library against the ATP-binding site of a novel kinase using an AI surrogate model.

Materials & Software:

The Scientist's Toolkit: Key Reagent Solutions for AI-Docking

| Item | Function | Example/Provider |

|---|---|---|

| Prepared Protein Structure | High-resolution (≤2.5 Å) crystal structure or AlphaFold2 model of the target binding site. | PDB, AlphaFold DB |

| Ultra-Large Chemical Library | Enumerated, 3D-prepped, and filtered compound library. | ZINC20, Enamine REAL, CHEMriya |

| Docking Software (Base) | Generates initial training data for the surrogate model. | AutoDock Vina, FRED, GOLD |

| Machine Learning Framework | For building and training the surrogate model. | PyTorch, TensorFlow, scikit-learn |

| High-Performance Computing | CPU/GPU cluster for parallel processing. | AWS EC2 (p4d instances), NVIDIA DGX, Google Cloud TPU |

| Ligand Preparation Pipeline | Standardizes and prepares ligands for docking/featurization. | RDKit, Open Babel, Schrödinger LigPrep |

Protocol Steps:

Initial Training Set Generation:

- Prepare the protein structure: remove water, add hydrogens, assign partial charges (e.g., using PDB2PQR).

- Randomly select a diverse subset (50,000 - 100,000 compounds) from the full library.

- Dock this subset using a standard docking program (e.g., Vina) to generate a labeled dataset of (molecular descriptor, docking_score, pose) tuples.

- Cluster results and retain top-scoring and diverse poses.

Surrogate Model Training & Validation:

- Featurize the molecules from Step 1. Use ECFP4 fingerprints or 3D spatial fingerprints (e.g., ROCS-style).

- Train a deep neural network (e.g., a 5-layer fully connected network) or gradient boosting model (XGBoost) to predict the docking score from the features.

- Validate the model on a held-out test set (20% of initial data). Target: Pearson R > 0.8 between predicted and actual docking scores.

Large-Scale Inference & Top-Hit Selection:

- Featurize all 1.2 billion compounds in the library using the same method.

- Use the trained surrogate model to predict docking scores for the entire library. This can be distributed across multiple GPUs.

- Rank all compounds by predicted score and select the top 50,000 - 100,000 for standard docking (Step 4).

Refinement & Pose Validation:

- Perform full, traditional docking on the top-ranked subset from Step 3.

- Apply more rigorous scoring functions (MM/GBSA) to the top 1,000 poses.

- Visually inspect the top 100 complexes for sensible binding interactions.

Experimental Triaging:

- Apply ADMET filters, synthetic accessibility scoring, and chemical novelty checks.

- Select 100-500 compounds for initial experimental purchase and testing (e.g., biochemical assay).
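
Two pieces of this protocol lend themselves to a short sketch: checking the surrogate against the Pearson R target on held-out docking scores, and selecting the top-ranked compounds from a library too large to sort in memory. The example assumes Vina-style scores where more negative is better. The compound identifiers and score values are hypothetical.

```python
import heapq
import math

def pearson_r(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

def top_k_by_predicted_score(scored_stream, k):
    """Keep the k most negative predicted scores from an iterable of
    (compound_id, predicted_score) pairs, using memory proportional to k."""
    return heapq.nsmallest(k, scored_stream, key=lambda item: item[1])

# Held-out validation: actual docking scores vs. surrogate predictions (kcal/mol)
docked = [-9.1, -7.4, -8.2, -6.0, -10.3]
predicted = [-8.8, -7.0, -8.5, -6.3, -9.9]
r = pearson_r(docked, predicted)
print(f"Pearson R on held-out set: {r:.3f} (target > 0.8)")

# Library-scale inference would stream predictions; a generator stands in here
library_scores = [-7.2, -9.5, -6.1, -10.1, -8.3]
stream = ((f"cmpd_{i}", s) for i, s in enumerate(library_scores))
top_hits = top_k_by_predicted_score(stream, k=2)
print(top_hits)
```

Because `heapq.nsmallest` consumes an iterator, predictions can be produced shard by shard across GPUs and merged without materializing all 1.2 billion scores at once.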

AI-Driven Ligand-Based Screening

Application Notes

Ligand-based screening uses known active compounds to find new ones, independent of a protein structure. AI has revolutionized this through Generative Chemistry and Advanced Similarity Search. Models like REINVENT, MoLeR, and GPT-based molecular generators can create novel, optimized scaffolds. Large-scale similarity searching using learned molecular representations (e.g., from ChemBERTa, Grover) outperforms traditional fingerprint-based methods.

Table 2: AI Methods for Ligand-Based Screening

| Method Category | Key Technology | Primary Output | Advantage |

|---|---|---|---|

| Generative Models | Variational Autoencoders (VAE), Recurrent Neural Networks (RNN) | Novel molecules with optimized properties (e.g., QSAR, synthesizability) | De novo design, scaffold hopping |

| Transformer Models | Chemical Language Models (e.g., ChemGPT) | Sequence of molecular tokens (SMILES/SELFIES) | Captures complex chemical grammar, multi-parameter optimization |

| Graph-Based Models | Graph Neural Networks (GNN) | Molecular property predictions & embeddings for similarity | Incorporates topological structure directly |

| One-Shot Learning | Siamese Networks, Metric Learning | Similarity metric for few-shot or single-shot lead identification | Effective with very few known actives |

Detailed Protocol: Few-Shot Lead Generation Using a Chemical Language Model

Objective: To generate novel, synthetically accessible analogs for a target with only 5 known active compounds, using a fine-tuned transformer model.

Materials & Software:

Protocol Steps:

Data Curation & Model Selection:

- Assemble the 5 known active compounds (seeds) and a large, general corpus of drug-like molecules (e.g., 10 million from PubChem) for background training.

- Select a pre-trained chemical language model (e.g., ChemBERTa, MoLeR).

Model Fine-Tuning:

- Format the seed actives and background molecules as SMILES or SELFIES strings.

- Fine-tune the pre-trained model using a masked language modeling objective, biasing the learning toward the chemical space of the actives.

- Validate by checking the model's ability to reconstruct the seed molecules and generate valid, novel structures.

Controlled Generation with Scoring:

- Use the fine-tuned model for explorative generation (via sampling) and exploitative generation (beam search starting from seed molecules).

- Generate a pool of 100,000 candidate molecules.

- Score the generated pool using a predictive QSAR model (if available) or a simple pharmacophore filter.

- Filter candidates for synthetic accessibility (SA Score < 4.5) and medicinal chemistry properties (e.g., Lipinski's Rule of Five).

Diversity Selection & In Silico Validation:

- Cluster the top 10,000 scored candidates using Morgan fingerprints (radius=2) and Butina clustering.

- Select 2-5 representatives from each of the top 50 clusters to ensure scaffold diversity.

- Perform a final in silico safety/toxicology check (e.g., using a panel of QSAR models for hERG, Ames, etc.).

- Output a final list of 150-200 proposed compounds for synthesis or purchase.
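
The diversity selection step relies on fingerprint similarity and Butina clustering. The dependency-free sketch below represents each fingerprint as a set of on-bit indices and applies a greedy, Butina-style procedure with a similarity cut-off. A real workflow would typically compute Morgan fingerprints and run the clustering with RDKit. The fingerprints and the 0.6 cut-off are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints stored as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def butina_like_clusters(fps, cutoff=0.6):
    """Greedy clustering in the spirit of Butina: choose the molecule with the
    most unassigned neighbours (similarity >= cutoff) as a centroid, remove its
    cluster, and repeat until every molecule is assigned."""
    ids = list(fps)
    neighbours = {
        i: {j for j in ids if j != i and tanimoto(fps[i], fps[j]) >= cutoff}
        for i in ids
    }
    unassigned = set(ids)
    clusters = []
    while unassigned:
        centroid = max(sorted(unassigned), key=lambda i: len(neighbours[i] & unassigned))
        members = [centroid] + sorted(neighbours[centroid] & unassigned)
        clusters.append(members)
        unassigned -= set(members)
    return clusters

# Hypothetical fingerprints for five generated molecules
fps = {
    "gen_1": {1, 2, 3, 4, 5},
    "gen_2": {1, 2, 3, 4, 6},
    "gen_3": {1, 2, 3, 5, 7},
    "gen_4": {10, 11, 12},
    "gen_5": {10, 11, 13},
}
clusters = butina_like_clusters(fps, cutoff=0.6)
representatives = [cluster[0] for cluster in clusters]
print(clusters, representatives)
```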

Virtual Screening 2.0: AI-Powered Workflow Selection

AI-Surrogate Docking Protocol Flow

Application Notes: Current Landscape & Quantitative Benchmarks

Generative AI and Reinforcement Learning (RL) are transforming de novo drug design by enabling the exploration of vast chemical spaces beyond human intuition. These methods generate novel, optimized molecular structures with desired pharmacological properties, directly addressing challenges in early-stage discovery.

Table 1: Performance Benchmarks of Key Generative Models (2023-2024)

| Model/Architecture | Primary Approach | Dataset (Size) | Key Metric & Result | Benchmark (e.g., GuacaMol) |

|---|---|---|---|---|

| REINVENT 2.0 | RNN + RL | ZINC (~1.3M compounds) | Novel Hit Rate: 32% (vs. 5% for HTS) | N/A (Direct synthesis validation) |

| MolFormer | Transformer + SSL | PubChem (100M+ SMILES) | Relative Property Prediction Improvement: 18% (vs. traditional QSAR) | Top 1% on 8/12 property tasks |

| GFlowNet | Generative Flow Network | QM9 (134k molecules) | Diversity (Avg. Tanimoto): 0.35 | 95% sample validity, high diversity |

| DiffLinker | E(3)-Equivariant Diffusion | PDBBind (20k complexes) | Binding Affinity (pIC50) Improvement: +1.2 log units (designed vs. reference) | Successful in-silico generation for 3 targets |

| Hierarchical RL | Multi-Objective RL | ChEMBL (2M compounds) | Multi-Property Optimization Success Rate: 41% (simultaneous QED, SA, Target Score >0.8) | Outperforms single-objective RL by 22% |

Table 2: Comparative Analysis of Reinforcement Learning Rewards in Molecular Generation

| Reward Function Component | Description | Weight (Typical Range) | Impact on Generation Outcome |

|---|---|---|---|

| Target Affinity (Docking Score) | Predicted binding energy from molecular docking (e.g., Vina score). | 0.5 - 0.7 | Drives generation towards high-affinity binders. Can lead to overly complex molecules. |

| Drug-Likeness (QED) | Quantitative Estimate of Drug-likeness score. | 0.2 - 0.3 | Encourages ADME-favorable properties. Improves synthetic feasibility. |

| Synthetic Accessibility (SA) | Score based on fragment complexity and rarity. | 0.1 - 0.2 | Reduces molecular complexity, increases likelihood of synthesis. |

| Novelty (Tanimoto Distance) | Distance from nearest neighbor in training set. | 0.05 - 0.15 | Ensures chemical novelty, avoids simple mimicry of known actives. |

| Pharmacophore Match | 3D alignment to critical interaction points. | 0.3 - 0.6 (if used) | Enforces key binding interactions, improving target specificity. |

Experimental Protocols

Protocol 1: Training a REINVENT 2.0-Inspired RNN-RL Agent for a Novel Kinase Inhibitor

Objective: To generate novel, synthetically accessible small molecules predicted to inhibit JAK2 kinase with high affinity.

Materials: See "Scientist's Toolkit" below.

Procedure:

Part A: Prior & Agent Preparation

- Prior Model Training:

- Load the curated dataset of known kinase inhibitors (e.g., from ChEMBL, ~500k SMILES).

- Tokenize the SMILES strings using a character-level tokenizer.

- Train a Long Short-Term Memory (LSTM) network (2 layers, 512 hidden units) in a teacher-forced manner for 50 epochs to maximize the likelihood of the training sequences. This is the "Prior" model.

- Validate using the Negative Log-Likelihood (NLL) loss on a held-out set.
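The character-level tokenization step can be illustrated with a short sketch. The class name, the special tokens, and the two example SMILES are assumptions chosen for the example; note that a strictly character-level scheme splits two-letter atoms such as Cl and Br into separate tokens.

```python
class CharTokenizer:
    """Character-level SMILES tokenizer with padding, start, and end tokens."""

    PAD, START, END = "<pad>", "<s>", "</s>"

    def __init__(self, smiles_list):
        chars = sorted({ch for s in smiles_list for ch in s})
        self.itos = [self.PAD, self.START, self.END] + chars
        self.stoi = {tok: i for i, tok in enumerate(self.itos)}

    def encode(self, smiles):
        """Map a SMILES string to token ids, wrapped in start/end markers."""
        body = [self.stoi[ch] for ch in smiles]
        return [self.stoi[self.START]] + body + [self.stoi[self.END]]

    def decode(self, ids):
        """Map token ids back to a SMILES string, dropping special tokens."""
        special = {self.PAD, self.START, self.END}
        return "".join(self.itos[i] for i in ids if self.itos[i] not in special)


tok = CharTokenizer(["CCO", "c1ccccc1"])
ids = tok.encode("CCO")

# Teacher forcing: the network sees ids[:-1] and is trained to predict ids[1:].
inputs, targets = ids[:-1], ids[1:]
print(ids, inputs, targets, tok.decode(ids))
```

Under teacher forcing, the NLL reported on the held-out set is the mean negative log-probability the model assigns to each `targets` token given the preceding `inputs`.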

Part B: Reinforcement Learning Fine-Tuning

- Initialize Agent Model:

- Create a copy of the trained Prior model. This becomes the "Agent" model.

- Define Reward Function:

- For each generated batch of molecules (N=128), compute a composite reward R:

R = 0.6 * Docking_Score_Norm + 0.2 * QED + 0.15 * SA_Score_Norm + 0.05 * Novelty

- Docking scores are obtained via an automated script calling AutoDock Vina against a prepared JAK2 structure (PDB: 6VNE).

- Scores are normalized per batch using the augmented Hill function as per REINVENT.
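A minimal sketch of the composite reward follows, using the weights stated in this protocol (0.6, 0.2, 0.15, 0.05). Several details are assumptions rather than part of the protocol: a plain Hill-type transform stands in for the normalization (the exact "augmented" form is not specified here), the half-saturation constant `k_dock` is arbitrary, the SA score is rescaled linearly from its 1 to 10 range, and novelty is taken as one minus the highest Tanimoto similarity to the training set.

```python
def hill(x, k, n=2.0):
    """Hill-type transform mapping x >= 0 into [0, 1); equals 0.5 at x == k."""
    x = max(x, 0.0)
    return x ** n / (k ** n + x ** n)


def composite_reward(vina_score, qed, sa_score, max_sim_to_train, k_dock=8.0):
    """Weighted sum R = 0.6*dock + 0.2*QED + 0.15*SA_norm + 0.05*novelty."""
    # Vina scores are negative for favourable binding, so flip the sign.
    docking_norm = hill(-vina_score, k_dock)
    # SA score runs from 1 (easy) to 10 (hard); rescale so that easy -> 1.
    sa_norm = (10.0 - sa_score) / 9.0
    # Novelty as Tanimoto distance to the nearest training-set neighbour.
    novelty = 1.0 - max_sim_to_train
    return 0.6 * docking_norm + 0.2 * qed + 0.15 * sa_norm + 0.05 * novelty


# Hypothetical inputs for a single generated molecule.
r = composite_reward(vina_score=-8.0, qed=0.7, sa_score=3.25, max_sim_to_train=0.4)
print(round(r, 4))
```

Because every component is mapped into the unit interval before weighting, the weights can be read directly as the relative importance of each objective.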

- RL Policy Update (PPO):

- For each generation step (100,000 steps total):

a. The Agent generates a batch of SMILES.

b. Compute rewards for valid molecules.

c. Calculate the loss:

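L = -σ * (R - B) * LogP(Agent) + β * KL(Agent || Prior)

where σ is a scaling factor on the reward term (in effect its learning rate), B is a running baseline (average reward), LogP is the log-likelihood of the Agent's actions, and β is a coefficient penalizing deviation from the Prior (β=0.5). The leading minus sign ensures that minimizing L raises the likelihood of molecules whose reward exceeds the baseline.

d. Update the Agent's weights via gradient descent (Adam optimizer).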
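The loss in step c can be sketched numerically as below. This is a hedged illustration on plain Python lists, not a training loop: the function names, the momentum value, and the example numbers are assumptions. The policy term is written with a negative sign so that gradient descent increases the likelihood of above-baseline molecules, and the KL term is estimated from Agent samples as the mean of log P_Agent minus log P_Prior.

```python
def agent_loss(rewards, logp_agent, logp_prior, baseline, sigma=1.0, beta=0.5):
    """Policy-gradient loss with a baseline plus a KL penalty to the Prior.

    rewards    : composite reward R for each sampled molecule
    logp_agent : sequence log-likelihood of each molecule under the Agent
    logp_prior : sequence log-likelihood of each molecule under the Prior
    """
    n = len(rewards)
    # -(R - B) * log P_Agent, averaged over the batch.
    policy_term = -sum(
        (r - baseline) * lp for r, lp in zip(rewards, logp_agent)
    ) / n
    # Monte Carlo estimate of KL(Agent || Prior) from Agent samples.
    kl_estimate = sum(la - lp for la, lp in zip(logp_agent, logp_prior)) / n
    return sigma * policy_term + beta * kl_estimate


def update_baseline(baseline, rewards, momentum=0.9):
    """Exponential running average of the batch mean reward."""
    return momentum * baseline + (1.0 - momentum) * sum(rewards) / len(rewards)


# Hypothetical two-molecule batch.
loss = agent_loss(
    rewards=[0.8, 0.2],
    logp_agent=[-10.0, -12.0],
    logp_prior=[-11.0, -12.0],
    baseline=0.5,
)
print(round(loss, 4), update_baseline(0.5, [0.8, 0.2]))
```

In an actual implementation the log-likelihoods would be framework tensors with gradients attached, so that the optimizer step in step d can backpropagate through `logp_agent`.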

- Sampling & Validation:

- After training, sample 10,000 molecules from the optimized Agent.

- Filter for molecules with QED > 0.6, SA Score < 4.5, and predicted pIC50 > 7.0.

- Select top 50 candidates for in-silico molecular dynamics (MD) simulation (100 ns) to assess binding stability.

Protocol 2: Fine-Tuning a Pre-trained Transformer (MolFormer) with Protein-Specific Adapters

Objective: To adapt a large pre-trained molecular transformer for target-aware generation using a limited set of known active compounds for a specific GPCR (e.g., Adenosine A2A receptor).

Materials: See "Scientist's Toolkit" below.

Procedure:

- Data Preparation:

- Gather a small dataset of known A2A binders (100-500 SMILES).

- For each, compute 3D conformers and align to a reference pharmacophore using RDKit.

- Encode this alignment as a binary fingerprint (pharmacophore fingerprint).

- Adapter Module Integration:

- Load the frozen weights of a pre-trained MolFormer model.

- Insert lightweight adapter modules (e.g., LoRA, Low-Rank Adaptation) into the attention and feed-forward layers of the transformer blocks.

- Add a multi-task prediction head that outputs both the next SMILES token and a scalar pharmacophore similarity score.
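For readers unfamiliar with low-rank adaptation, the update it applies to a frozen weight matrix can be written compactly. The symbols below (d, k, r, α) are introduced here for illustration and do not appear in the protocol.

```latex
h = W_0 x + \Delta W x = W_0 x + \frac{\alpha}{r} B A x,
\qquad W_0 \in \mathbb{R}^{d \times k},\;
B \in \mathbb{R}^{d \times r},\;
A \in \mathbb{R}^{r \times k},\;
r \ll \min(d, k)
```

Only A and B are trained while W_0 stays frozen, so each adapted matrix contributes r(d + k) trainable parameters instead of dk. B is typically initialized to zero, which makes the adapted model identical to the pre-trained one at the start of fine-tuning.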

- Fine-Tuning:

- Train only the adapter parameters and the prediction head for 20 epochs.

- Use a combined loss:

L = 0.8 * NLL_SMILES + 0.2 * MSE(Pharmacophore_Sim)

- Use a low learning rate (1e-4) and a cosine annealing schedule.
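The combined loss and the learning-rate schedule can be sketched as follows. The 0.8 and 0.2 weights and the 1e-4 peak learning rate come from the protocol; the function names, the minimum learning rate of zero, and the absence of warm-up are assumptions made for this example.

```python
import math


def combined_loss(nll_smiles, pharm_pred, pharm_true, w_nll=0.8, w_mse=0.2):
    """L = 0.8 * NLL_SMILES + 0.2 * MSE(pharmacophore similarity)."""
    mse = sum((p - t) ** 2 for p, t in zip(pharm_pred, pharm_true)) / len(pharm_true)
    return w_nll * nll_smiles + w_mse * mse


def cosine_lr(step, total_steps, lr_max=1e-4, lr_min=0.0):
    """Cosine annealing from lr_max at step 0 down to lr_min at total_steps."""
    progress = min(step, total_steps) / total_steps
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))


# Hypothetical batch: token-level NLL of 2.0 and two similarity predictions.
print(combined_loss(2.0, pharm_pred=[0.5, 0.9], pharm_true=[0.7, 0.9]))
for step in (0, 250, 500, 750, 1000):
    print(step, cosine_lr(step, total_steps=1000))
```

Weighting the token-level NLL more heavily keeps the model a competent SMILES generator while the auxiliary head learns the pharmacophore signal.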

- Controlled Generation:

- During inference, use the pharmacophore similarity score as a guidance signal in the beam search, biasing the generation towards molecules that fulfill the key interaction constraints of the target.
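One way to picture guided beam search is the toy sketch below, in which each partial sequence is ranked by its cumulative log-probability plus a weighted guidance score. Everything here is illustrative: `toy_model` and `toy_guidance` are hypothetical stand-ins for the fine-tuned transformer and its pharmacophore head, and the guidance weight `lam` is an assumed hyperparameter not given in the protocol.

```python
import math


def guided_beam_search(next_token_logprobs, guidance, beam_width=3,
                       max_len=10, lam=1.0, end_token="$"):
    """Beam search ranking candidates by log-prob + lam * guidance score."""
    def strip_end(seq):
        return seq[:-len(end_token)] if seq.endswith(end_token) else seq

    def guided_score(seq, logp):
        return logp + lam * guidance(strip_end(seq))

    beams = [("", 0.0)]  # (partial sequence, cumulative log-probability)
    finished = []
    for _ in range(max_len):
        expansions = [
            (prefix + tok, logp + tok_lp)
            for prefix, logp in beams
            for tok, tok_lp in next_token_logprobs(prefix).items()
        ]
        expansions.sort(key=lambda b: guided_score(*b), reverse=True)
        beams = []
        for seq, logp in expansions[:beam_width]:
            if seq.endswith(end_token):
                finished.append((strip_end(seq), guided_score(seq, logp)))
            else:
                beams.append((seq, logp))
        if not beams:
            break
    return sorted(finished, key=lambda f: f[1], reverse=True)


def toy_model(prefix):
    """Stand-in next-token distribution; forces termination after 3 tokens."""
    if len(prefix) >= 3:
        return {"$": 0.0}
    return {"C": math.log(0.6), "N": math.log(0.3), "$": math.log(0.1)}


def toy_guidance(prefix):
    """Stand-in for the pharmacophore head: rewards sequences containing N."""
    return 1.0 if "N" in prefix else 0.0


print(guided_beam_search(toy_model, toy_guidance, lam=0.0)[0])
print(guided_beam_search(toy_model, toy_guidance, lam=2.0)[0])
```

With `lam=0.0` the search reduces to ordinary beam search; raising `lam` trades likelihood under the language model for agreement with the guidance signal.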

Diagrams

Diagram Title: RL-Based Molecular Generation Workflow

Diagram Title: Multi-Objective Reward for RL in Drug Design

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Libraries for Generative Molecular Design

| Item (Software/Library) | Primary Function | Access/URL (Example) |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule manipulation, descriptor calculation (QED, SA), and SMILES parsing. | https://www.rdkit.org |

| PyTorch / TensorFlow | Deep learning frameworks for building and training Prior, Agent, and Transformer models. | https://pytorch.org, https://tensorflow.org |

| REINVENT 2.0 Framework | Reference implementation of the RNN+RL paradigm for molecular generation. | https://github.com/MolecularAI/Reinvent |

| AutoDock Vina or Gnina | Molecular docking software for rapid in-silico assessment of protein-ligand binding affinity. | https://vina.scripps.edu, https://github.com/gnina/gnina |

| OpenMM or GROMACS | Molecular dynamics simulation packages for stability validation of generated hits. | https://openmm.org, https://www.gromacs.org |

| GuacaMol / MOSES | Benchmarking suites for evaluating generative model performance (diversity, novelty, etc.). | https://github.com/BenevolentAI/guacamol, https://github.com/molecularsets/moses |

| Streamlit or Dash | Libraries for building interactive web applications to visualize and filter generated molecules. | https://streamlit.io, https://dash.plotly.com |

Table 4: Key Datasets & Knowledge Bases

| Item (Database) | Content Type | Application in Training/Reward |

|---|---|---|

| ChEMBL | Curated bioactivity data for drug-like molecules. | Primary source for Prior model training and known actives for specific targets. |

| ZINC15 | Commercially available compounds for virtual screening. | Source of "purchasable" chemical space for transfer learning and benchmarking. |

| PubChem | Massive repository of chemical structures and properties. | Pre-training large-scale models (e.g., MolFormer) on general chemical knowledge. |

| PDBBind | Experimentally determined protein-ligand complex structures and binding affinities. | Training structure-aware models (e.g., DiffLinker) and validating docking scores. |

| QM9 | Quantum mechanical properties for small molecules. | Training generative models with embedded physical property constraints. |

Within the broader thesis on artificial intelligence for drug discovery, this application note details a core methodology: the integration of multi-omics data with machine learning for the systematic identification and initial validation of novel therapeutic targets. The shift from serendipitous discovery to data-driven, in-silico-first approaches is foundational to modern drug development, reducing target failure rates by prioritizing candidates with stronger genetic and biological evidence.

Application Notes: AI-Driven Target Identification Workflow

1. Data Acquisition & Curation

Multi-omics datasets form the substrate for AI models. Key public repositories and data types are summarized below.

Table 1: Essential Public Omics Data Repositories for Target Discovery

| Data Type | Primary Sources | Key Metrics (Approx. Volume) | Primary Use in AI Models |

|---|---|---|---|

| Genomics | UK Biobank, gnomAD, GWAS Catalog | 500k+ human genomes; 200k+ GWAS associations | Identifying disease-associated genetic variants and loci. |

| Transcriptomics | GTEx, TCGA, GEO, ARCHS4 | 30k+ RNA-seq samples across tissues; 1M+ archived samples | Defining disease-specific gene expression signatures and co-expression networks. |

| Proteomics & Phosphoproteomics | CPTAC, PRIDE, Human Protein Atlas | 10k+ mass spectrometry runs; tissue/cell atlas data | Quantifying protein abundance, post-translational modifications, and cellular localization. |

| Single-Cell Omics | Human Cell Atlas, Tabula Sapiens, CellxGene | 50M+ cells characterized across tissues | Resolving cellular heterogeneity and identifying rare cell-type-specific targets. |

2. AI Model Training & Target Prioritization

A supervised learning pipeline is employed to rank genes by their predicted likelihood of being a druggable disease target.

Table 2: Representative Performance Metrics of a Multi-Layer AI Prioritization Model

| Model Stage | Input Features | Benchmark Dataset | Key Performance Metric | Reported Result |

|---|---|---|---|---|

| Initial Ranking (Graph Neural Network) | Protein-protein interactions, pathway membership, GWAS signals, differential expression. | Known drug targets from DrugBank vs. non-targets. | Area Under the Precision-Recall Curve (AUPRC) | 0.78 |

| Druggability Filter (Classifier) | Protein structure features, ligandability predictions, tissue specificity. | Targets of approved small molecules & biologics. | F1-Score | 0.85 |

| Safety Triage (Classifier) | Essential gene scores (from CRISPR screens), genetic constraint (pLI), side effect associations. | Known toxic targets vs. safe targets. | Recall (Sensitivity) for toxicity | >0.95 |
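The three metrics reported in Table 2 can be computed from labels and predictions as sketched below. Average precision is used here as a common step-wise estimate of AUPRC, which is an assumption about how the reported figure was obtained; the function names and toy labels are illustrative, and tied scores are not handled specially.

```python
def average_precision(y_true, y_score):
    """Mean of precision@k over the ranks k at which a true target appears."""
    ranked = sorted(zip(y_score, y_true), key=lambda p: p[0], reverse=True)
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0


def precision_recall_f1(y_true, y_pred):
    """Precision, recall (sensitivity), and F1 from binary predictions."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t and p)
    fp = sum(1 for t, p in zip(y_true, y_pred) if not t and p)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t and not p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Toy example: 1 = known drug target, 0 = non-target.
y_true = [1, 0, 1, 0, 1]
y_score = [0.9, 0.8, 0.7, 0.6, 0.5]
y_pred = [1, 1, 1, 0, 0]
print(average_precision(y_true, y_score))
print(precision_recall_f1(y_true, y_pred))
```

Precision-recall metrics suit the ranking stage because known targets are a small minority of genes, whereas the safety triage emphasizes recall so that few toxic targets slip through.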

Protocol: In Vitro Validation of AI-Prioritized Targets

Protocol 1: CRISPR-Cas9 Knockout Validation in a Disease-Relevant Cell Model

Objective: To functionally validate the necessity of an AI-prioritized gene target for a disease phenotype (e.g., cell proliferation, cytokine release) in a relevant human cell line.

Materials & Reagents (The Scientist's Toolkit)

Table 3: Key Research Reagent Solutions for CRISPR Validation

| Reagent/Material | Function | Example Product (Supplier) |

|---|---|---|

| CRISPR-Cas9 Ribonucleoprotein (RNP) | Enables precise, high-efficiency gene knockout without genetic integration. | TrueCut Cas9 Protein v2 (Thermo Fisher) |

| Target-specific sgRNA | Guides Cas9 to the genomic locus of the AI-prioritized gene. | Custom Synthesized sgRNA (IDT) |

| Electroporation System | Facilitates delivery of RNP complexes into hard-to-transfect cells. | Neon Transfection System (Thermo Fisher) |

| Cell Viability Assay | Quantifies phenotypic consequence of gene knockout. | CellTiter-Glo Luminescent Assay (Promega) |

| Next-Gen Sequencing Kit | Validates editing efficiency at the target locus. | Illumina DNA Prep Kit (Illumina) |

Methodology:

- sgRNA Design & Preparation: Design two independent sgRNAs targeting early exons of the candidate gene using a platform like CRISPick. Resuspend sgRNAs in nuclease-free buffer.

- RNP Complex Formation: For each sgRNA, combine 60 pmol of Cas9 protein with 120 pmol of sgRNA. Incubate at 25°C for 10 minutes.