Beyond False Positives: Building and Applying QSAR Models to Predict Chemical-Assay Interference

This article provides a comprehensive guide for researchers and drug development professionals on Quantitative Structure-Activity Relationship (QSAR) models designed to predict chemical-assay interference.


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on Quantitative Structure-Activity Relationship (QSAR) models designed to predict chemical-assay interference. It explores the foundational mechanisms of common interference phenomena, details the methodologies for constructing and curating high-quality training datasets, and outlines best practices for model building and validation. Furthermore, it addresses troubleshooting strategies for model limitations and performance optimization, and critically compares different modeling approaches. The goal is to empower scientists to proactively filter out nuisance compounds, thereby increasing the efficiency and reliability of high-throughput screening and early-stage drug discovery.

Decoding the Problem: What is Chemical-Assay Interference and Why Predictive Models Are Crucial

1. Introduction

Within the critical pursuit of developing Quantitative Structure-Activity Relationship (QSAR) models for chemical-assay interference prediction, a precise taxonomy of interference mechanisms is foundational. Misleading false positives or negatives due to compound interference are a primary source of noise and error, corrupting high-throughput screening (HTS) data and derailing lead optimization. This document provides a structured taxonomy, supported by quantitative data, detailed protocols for detection, and essential research tools for the experimental pharmacologist and computational scientist.

2. Taxonomy & Quantitative Data Summary

Assay interferences are categorized by their primary mechanism. The following table summarizes key characteristics and detection signatures.

Table 1: Taxonomy and Characteristics of Major Assay Interference Types

| Interference Type | Sub-Type | Typical Size/Conc. | Key Readout Artifact | Common Assay Formats Affected |

|---|---|---|---|---|

| Aggregation | Non-specific Colloidal Aggregates | 50-1000 nm aggregates at µM [1] | Loss of signal, steep IC50 curves, detergent sensitivity | Enzyme, protein-protein interaction, cell-based (membrane targets) |

| Fluorescence | Inner Filter Effect | Compound at high µM-mM | Quenching or excitation/emission light absorption | All fluorescence-based (FLINT, TR-FRET, FP) |

| Fluorescence | Signal Interference (Fluorophore) | Compound at low µM | Direct emission at detection wavelengths | FLINT, single-wavelength fluorescence |

| Reactivity | Redox-Active Compounds | Low µM | Reduction of reporter dyes (e.g., resazurin) | Viability, oxidoreductase assays |

| Reactivity | Nucleophilic/Electrophilic | Varies | Irreversible, time-dependent inhibition, cysteine trapping | Enzyme, target engagement |

| Surface Binding | Non-specific to well/plate | Varies | Apparent activity at edges or specific wells | Ultra-low volume, 1536-well plate assays |

| Light Scattering | Turbidity from precipitates | >500 nm particles | Increased background absorbance/fluorescence | Absorbance, fluorescence polarization |

3. Experimental Protocols for Interference Detection

Protocol 3.1: Detecting Aggregation-Based Interference

Objective: To confirm if compound activity is due to protein-sequestering colloidal aggregates.

Materials: Test compound(s), target enzyme/protein, assay buffer, non-ionic detergent (e.g., 0.01% Triton X-100 or Tween-20), DMSO control.

Workflow:

- Prepare a dose-response series of the compound in assay buffer with final DMSO concentration ≤1%.

- Perform the standard activity assay in two parallel conditions: a. Control: Assay buffer only. b. Test: Assay buffer supplemented with 0.01% (v/v) non-ionic detergent.

- Run the assay in triplicate.

- Data Interpretation: A rightward shift (higher IC50) of the dose-response curve by >10-fold in the detergent condition strongly suggests aggregate-based inhibition. True target engagement is typically detergent-insensitive.
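The interpretation rule in Protocol 3.1 can be expressed as a short check. The sketch below is illustrative only: the function names and the example IC50 values are hypothetical, and the 10-fold cutoff is taken from the interpretation step above.

```python
def ic50_fold_shift(ic50_control_um: float, ic50_detergent_um: float) -> float:
    """Return the fold change in IC50 caused by adding detergent."""
    if ic50_control_um <= 0 or ic50_detergent_um <= 0:
        raise ValueError("IC50 values must be positive")
    return ic50_detergent_um / ic50_control_um


def is_detergent_sensitive(ic50_control_um: float,
                           ic50_detergent_um: float,
                           min_fold: float = 10.0) -> bool:
    """Flag a compound whose IC50 rises by more than min_fold with detergent."""
    return ic50_fold_shift(ic50_control_um, ic50_detergent_um) > min_fold


# Hypothetical IC50 values in µM (illustrative, not measured data).
likely_aggregator = is_detergent_sensitive(2.0, 45.0)   # 22.5-fold shift
likely_true_binder = is_detergent_sensitive(2.0, 3.0)   # 1.5-fold shift
print(likely_aggregator, likely_true_binder)
```

In practice the IC50 values would come from curve fits of the triplicate dose-response data, and compounds that lose all measurable activity with detergent need separate handling because no detergent IC50 can be fitted.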

Protocol 3.2: Detecting Fluorescence Interference (Inner Filter & Signal)

Objective: To distinguish true modulation from compound-fluorescent artifacts.

Materials: Test compound(s), fluorophore used in the assay (e.g., fluorescein, coumarin), assay buffer, plate reader.

Workflow:

- Prepare the compound at the top test concentration in assay buffer.

- In a black plate, add: a. Fluorophore Only: Reference well with fluorophore at concentration used in assay. b. Compound + Fluorophore: Fluorophore + compound. c. Compound Only: Compound in buffer (no fluorophore).

- Measure fluorescence at the assay's excitation/emission wavelengths.

- Data Interpretation: Compare signals. A lower signal with compound present (b < a) suggests quenching or an inner filter effect. Direct signal (c > background) indicates compound fluorescence. Signal in (b) significantly different from (a)+(c) suggests interaction or interference.
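The three-well comparison in Protocol 3.2 can be sketched as a small classifier. Everything here is an assumption made for illustration: the function name, the flag labels, the 10% tolerance, and the choice to subtract one background reading from the additive expectation (because wells a and c each contain buffer background).

```python
def classify_fluorescence(a_fluor: float, b_both: float, c_compound: float,
                          background: float, tol: float = 0.10) -> list:
    """Return interference flags from the three control wells.

    a_fluor: fluorophore only; b_both: compound + fluorophore;
    c_compound: compound only; background: buffer-only reading.
    tol is a hypothetical relative tolerance, not a protocol value.
    """
    flags = []
    # Compound alone emits above buffer background.
    if c_compound > background * (1 + tol):
        flags.append("compound_fluorescence")
    # Fluorophore signal drops when compound is present.
    if b_both < a_fluor * (1 - tol):
        flags.append("quenching_or_inner_filter")
    # Additive expectation; background is counted once, not twice.
    expected = a_fluor + (c_compound - background)
    if abs(b_both - expected) > tol * expected:
        flags.append("non_additive_signal")
    return flags


# Hypothetical plate-reader counts (illustrative only).
quencher_flags = classify_fluorescence(1000, 600, 52, 50)
emitter_flags = classify_fluorescence(1000, 1400, 450, 50)
print(quencher_flags, emitter_flags)
```

A fixed relative tolerance is a simplification; replicate wells would allow a statistical threshold instead.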

Protocol 3.3: Detecting Redox Reactivity

Objective: To identify compounds that reduce common reporter dyes.

Materials: Test compound(s), redox dye (e.g., 10-50 µM resazurin), assay buffer, positive control (e.g., ascorbic acid).

Workflow:

- Dilute compound in buffer in a clear or black plate.

- Add resazurin to all wells.

- Incubate at the assay temperature (e.g., 25°C for 30 min).

- Measure fluorescence (Ex/Em ~560/590 nm).

- Data Interpretation: Compounds causing increased fluorescence (reduction of resazurin to resorufin) comparable to the positive control are redox-active. Activity in primary assays using such reporters is suspect.
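One way to make "comparable to the positive control" concrete is to normalize each well between the vehicle and positive-control signals. The sketch below assumes a vehicle (DMSO) well is included, and the 50% cutoff is a hypothetical placeholder rather than a value from the protocol.

```python
def percent_of_positive_control(sample: float, vehicle: float,
                                positive: float) -> float:
    """Scale a resorufin fluorescence reading to 0% (vehicle) .. 100% (positive control)."""
    span = positive - vehicle
    if span <= 0:
        raise ValueError("positive control must exceed vehicle signal")
    return 100.0 * (sample - vehicle) / span


def is_redox_active(sample: float, vehicle: float, positive: float,
                    min_percent: float = 50.0) -> bool:
    """Flag compounds reaching at least min_percent of the control response."""
    return percent_of_positive_control(sample, vehicle, positive) >= min_percent


# Hypothetical fluorescence counts at Ex/Em ~560/590 nm (illustrative only).
strong = percent_of_positive_control(1800, 200, 2200)
weak = percent_of_positive_control(300, 200, 2200)
print(strong, weak, is_redox_active(1800, 200, 2200))
```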

4. Visualizing Interference Mechanisms and Workflows

Title: Hit Triage Workflow for Interference Detection

Title: Mechanism of Aggregation-Based Interference

5. The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for Interference Studies

| Reagent/Material | Function in Interference Studies | Example Use Case |

|---|---|---|

| Non-ionic Detergents (Triton X-100, Tween-20) | Disrupts colloidal aggregates by altering solvent-particle interface. | Diagnostic tool in Protocol 3.1. |

| Redox Dyes (Resazurin, DCPIP) | Indicators of compound redox reactivity. | Core of Protocol 3.3. |

| Fluorescent Reference Dyes (Fluorescein, Coumarin derivatives) | Controls for inner filter effect and signal overlap. | Required for Protocol 3.2. |

| Thiol Reagents (DTT, β-mercaptoethanol, glutathione) | Scavengers for electrophilic/reactive compounds; can mask true activity. | Used in counter-screening reactive hits. |

| Albumin (BSA, HSA) | Reduces surface adsorption & non-specific binding; can also stabilize proteins. | Added to assay buffers to mitigate surface binding artifacts. |

| Label-free Detection Platforms (SPR, MS, CETSA) | Orthogonal, non-optical methods for detecting direct binding or stabilization. | Critical for confirming hits from optical assays post-triage. |

| Dynamic Light Scattering (DLS) Instrumentation | Directly measures particle size distribution in solution. | Gold-standard confirmation of compound aggregation. |

Application Notes

False positives in High-Throughput Screening (HTS) are compounds that show apparent activity in an assay but do not act through the intended biological mechanism. Their impact is multi-faceted, leading to misallocation of resources, delays in project timelines, and ultimately, increased drug discovery costs. Within QSAR model development for chemical-assay interference prediction, the primary goal is to computationally flag these nuisance compounds early.

Key Impacts:

- HTS Campaign Efficacy: False positives can constitute >95% of initial hits in certain assay types (e.g., fluorescence-based), overwhelming follow-up efforts.

- Resource Drain: Significant medicinal chemistry and assay development resources are expended on characterizing and triaging non-progressible chemical series.

- Timeline Inflation: Projects can be delayed by 6-18 months due to the pursuit of leads derived from assay artifacts or pan-assay interference compounds (PAINS).

Strategic Integration of QSAR Filters:

Implementing pre- or post-screening QSAR models for interference prediction can reduce false positive rates by 30-70%, depending on the assay technology. This directly translates to a more focused hit list and more efficient resource deployment.

Protocols

Protocol 1: In-silico Triaging of HTS Output Using an Aggregator Prediction QSAR Model

Purpose: To prioritize true positives by identifying and removing compounds with high predicted aggregation potential from primary HTS hit lists.

Materials:

- HTS hit list (SMILES format)

- Pre-trained aggregator prediction QSAR model (e.g., model based on the AZLogD descriptor and molecular weight)

- Cheminformatics software (e.g., RDKit, KNIME, or Pipeline Pilot)

- Research Reagent Solutions: See Table 2.

Procedure:

- Data Preparation: Standardize the SMILES notation for all compounds in the hit list (e.g., neutralize charges, remove salts).

- Descriptor Calculation: For each compound, calculate the relevant molecular descriptors used by the model. Commonly used descriptors for aggregation prediction include:

- MolLogP (Octanol-water partition coefficient)

- MolWt (Molecular Weight)

- Number of Rotatable Bonds

- Topological Polar Surface Area (TPSA)

- Model Application: Input the calculated descriptors into the pre-trained QSAR model to obtain a prediction score (e.g., probability of being an aggregator).

- Triaging: Flag all compounds with a prediction score above a defined threshold (e.g., p(aggregator) > 0.7). These are candidates for removal or low-priority follow-up.

- Output: Generate a prioritized hit list with flags and prediction scores appended.
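The scoring and flagging steps of this procedure can be sketched as follows. This is a minimal illustration that assumes descriptors have already been calculated elsewhere (for example with RDKit) and that the model is a simple logistic function. The coefficients, intercept, compound IDs, and descriptor values are all hypothetical placeholders, not a trained model or real compounds.

```python
import math

# Placeholder coefficients for illustration; a real workflow would load
# a trained model instead of hard-coding weights.
COEF = {"MolLogP": 0.9, "MolWt": 0.004, "TPSA": -0.02, "NumRotatableBonds": -0.1}
INTERCEPT = -4.0


def aggregator_probability(desc: dict) -> float:
    """Logistic score from precomputed descriptors."""
    z = INTERCEPT + sum(COEF[name] * desc[name] for name in COEF)
    return 1.0 / (1.0 + math.exp(-z))


def triage(hits: list, threshold: float = 0.7) -> list:
    """Append score and flag to each hit, lowest predicted risk first."""
    rows = []
    for compound_id, desc in hits:
        p = aggregator_probability(desc)
        rows.append({"id": compound_id, "p_aggregator": round(p, 3),
                     "flagged": p > threshold})
    return sorted(rows, key=lambda row: row["p_aggregator"])


# Hypothetical hit list with made-up descriptor values.
hits = [
    ("CPD-001", {"MolLogP": 5.5, "MolWt": 450.0, "TPSA": 40.0, "NumRotatableBonds": 3}),
    ("CPD-002", {"MolLogP": 1.5, "MolWt": 250.0, "TPSA": 90.0, "NumRotatableBonds": 4}),
]
prioritized = triage(hits)
for row in prioritized:
    print(row)
```

Sorting by ascending score puts the least suspicious hits at the top of the follow-up list while keeping flagged compounds visible for low-priority review rather than deleting them.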

Table 1: Quantitative Impact of False Positives on Project Resources

| Parameter | Without Interference Filters | With QSAR Interference Filters | % Change |

|---|---|---|---|

| Initial Hit Rate | 0.5 - 3.0% | 0.5 - 3.0% | 0% |

| False Positive Rate* | 70 - 95% | 30 - 60% | ~ -50% |

| Compounds for Confirmatory Assay | 5,000 - 15,000 | 1,500 - 6,000 | ~ -65% |

| FTEs for Hit Triage (weeks) | 12 - 20 | 4 - 8 | ~ -65% |

| Estimated Timeline Delay | 6 - 18 months | 2 - 6 months | ~ -67% |

*Percentage of initial hits that are false positives. Varies widely by assay type.

Protocol 2: Experimental Confirmation of Aggregation-Based False Positives

Purpose: To validate computationally flagged aggregators using a detergent sensitivity test in a biochemical assay.

Materials:

- Compounds predicted as aggregators and a subset of predicted negatives.

- Target enzyme and substrate.

- Assay buffer.

- 0.01% v/v Triton X-100 detergent solution (or 0.1% w/v BSA as a non-detergent alternative).

- Plate reader capable of detecting assay signal (e.g., fluorescence, absorbance).

Procedure:

- Plate Setup: Prepare two identical assay plates for each compound at their initial hit concentration (typically 10 µM).

- Detergent Addition: To the test plate, add Triton X-100 to a final concentration of 0.01%. The control plate receives an equivalent volume of buffer.

- Assay Execution: Run the standard biochemical assay protocol on both plates.

- Data Analysis: Calculate % inhibition for each compound in both conditions.

- Interpretation: A compound whose inhibition is abolished or significantly reduced (>50% decrease in inhibition) in the presence of detergent is confirmed as an aggregation-based false positive.
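The data analysis and interpretation steps can be sketched as below. The sketch assumes each plate carries uninhibited and background control wells, and it reads ">50% decrease in inhibition" as a relative decrease. Both points are assumptions, as are the function names and example counts.

```python
def percent_inhibition(signal: float, uninhibited: float, background: float) -> float:
    """Percent inhibition relative to uninhibited and background control wells."""
    window = uninhibited - background
    if window <= 0:
        raise ValueError("uninhibited control must exceed background")
    return 100.0 * (1.0 - (signal - background) / window)


def is_aggregation_false_positive(inh_control: float, inh_detergent: float,
                                  min_drop: float = 50.0) -> bool:
    """True if detergent removes more than min_drop percent of the inhibition."""
    if inh_control <= 0:
        return False  # nothing to abolish
    relative_drop = 100.0 * (inh_control - inh_detergent) / inh_control
    return relative_drop > min_drop


# Hypothetical raw signals (illustrative only).
inh_no_detergent = percent_inhibition(280, uninhibited=1000, background=100)
inh_with_detergent = percent_inhibition(910, uninhibited=1000, background=100)
print(round(inh_no_detergent, 1), round(inh_with_detergent, 1))
print(is_aggregation_false_positive(inh_no_detergent, inh_with_detergent))
```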

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Interference Studies

| Item | Function in False Positive Investigation |

|---|---|

| Triton X-100 / BSA | Detergent/protein used in counter-screens to disrupt compound aggregates, confirming aggregation-based inhibition. |

| DTT / β-Mercaptoethanol | Reducing agents used to test for redox-cycling or thiol-reactive compound interference. |

| Chelators (EDTA, EGTA) | To rule out inhibition caused by metal chelation rather than target engagement. |

| Fluorescent Probe (e.g., Thioflavin T) | To detect and quantify compound promiscuity via amyloid-like aggregation. |

| Cytotoxicity Assay Kit (e.g., MTT, CellTiter-Glo) | To confirm that observed activity in cell-based assays is not due to general cytotoxicity. |

| LC-MS/SFC-MS Systems | To verify compound integrity and purity post-assay, ruling out degradation products as a source of interference. |

Visualizations

HTS Workflow with and without QSAR Triage

Mechanisms of Interference and QSAR Prediction Logic

This application note details the implementation of Quantitative Structure-Activity Relationship (QSAR) models to transition from retrospective analysis of chemical assay interference to proactive prediction. Framed within our broader thesis on computational toxicology, this protocol provides a systematic workflow for building, validating, and deploying predictive models for common interference mechanisms, specifically targeting aggregation-based assay interference and fluorescence interference.

Table 1: Descriptors and Their Association with Assay Interference Mechanisms

| Descriptor Category | Specific Descriptor | Association with Aggregation | Association with Fluorescence | Typical Value Range (Normalized) |

|---|---|---|---|---|

| Physicochemical | logP (cLogP) | High (>4.0) increases risk | Moderate | -2 to 8 |

| Physicochemical | Molecular Weight (MW) | High (>400 Da) increases risk | Low | 150-600 Da |

| Physicochemical | Topological Polar Surface Area (TPSA) | Low (<75 Ų) increases risk | Low | 0-150 Ų |

| Electronic | pKa (Basic) | High (>8) increases risk | Significant for quenching | 0-14 |

| Electronic | HOMO-LUMO Gap | Not Significant | Low gap increases risk | 5-15 eV |

| Structural | Number of Aromatic Rings | High (>3) increases risk | High increases risk (chromophores) | 0-6 |

| Structural | Rotatable Bond Count | Low (<5) increases risk | Not Significant | 0-15 |

| Aggregation-Specific | Aggregation Propensity Score (e.g., from DLS)* | Direct correlation (Score >0.7) | Not Applicable | 0-1 |

*Derived from Dynamic Light Scattering (DLS) training data.

Table 2: Model Performance Metrics for a QSAR Classifier Predicting Aggregation

| Model Algorithm | Training Set (n=1200 cpds) | Cross-Validation (5-fold) | Hold-Out Test Set (n=300 cpds) | Primary Use Case |

|---|---|---|---|---|

| Random Forest | Accuracy: 0.95 | AUC: 0.93 (±0.02) | Accuracy: 0.88, Sensitivity: 0.85, Specificity: 0.91 | High-confidence prioritization |

| Support Vector Machine (RBF) | Accuracy: 0.93 | AUC: 0.92 (±0.03) | Accuracy: 0.86, Sensitivity: 0.82, Specificity: 0.90 | Boundary case analysis |

| Neural Network (Multilayer Perceptron) | Accuracy: 0.96 | AUC: 0.94 (±0.02) | Accuracy: 0.87, Sensitivity: 0.90, Specificity: 0.84 | Large, complex descriptor sets |
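The hold-out metrics in Table 2 all derive from a confusion matrix. As a worked illustration, the sketch below computes them from hypothetical counts for a 300-compound test set. The counts are chosen only so that they round to the Random Forest row and are not taken from the source data. The Matthews correlation coefficient (MCC) is added as a commonly used supplement when classes are imbalanced.

```python
import math


def classification_metrics(tp: int, fn: int, tn: int, fp: int) -> dict:
    """Accuracy, sensitivity, specificity, and MCC from confusion-matrix counts."""
    total = tp + fn + tn + fp
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": (tp + tn) / total,
        "sensitivity": tp / (tp + fn),   # fraction of true aggregators caught
        "specificity": tn / (tn + fp),   # fraction of non-aggregators cleared
        "mcc": (tp * tn - fp * fn) / denom if denom else 0.0,
    }


# Hypothetical counts: 150 aggregators and 150 non-aggregators.
metrics = classification_metrics(tp=128, fn=22, tn=136, fp=14)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```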

Detailed Protocols

Protocol 1: Data Curation for Retrospective Analysis

Objective: To compile a high-quality dataset from historical HTS data for model training.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Source Identification: Extract data from institutional compound management and HTS databases. Flag all compounds with anomalous dose-response curves (e.g., steep slopes, high Hill coefficients) or IC50 values inconsistent across related assays.

- Experimental Confirmation: Subject flagged compounds to confirmatory orthogonal assays.

- For Aggregation: Perform Dynamic Light Scattering (DLS). Prepare a 100 µM solution of the compound in DMSO and dilute to 10 µM in assay buffer. Measure particle size distribution. Compounds with significant counts of particles >100 nm are labeled as "Aggregators."

- For Fluorescence: Perform fluorescence spectral scanning. Prepare a 10 µM solution in assay buffer. Excite at common HTS filter wavelengths (e.g., 485 nm, 540 nm). A compound emitting signal >10% of a standard control fluorophore is labeled as "Fluorescent."

- Descriptor Calculation: For all confirmed compounds, calculate 2D and 3D molecular descriptors (see Table 1) using a tool like RDKit or MOE. Standardize all descriptors (e.g., Z-score normalization).
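The Z-score normalization mentioned in the descriptor step can be sketched with the standard library alone. The helper names and descriptor values are hypothetical. The population standard deviation is used here, which matches the default behaviour of scikit-learn's StandardScaler.

```python
from statistics import mean, pstdev


def fit_zscore(rows: list) -> dict:
    """Compute (mean, population std) per descriptor from training rows."""
    keys = list(rows[0].keys())
    return {k: (mean([r[k] for r in rows]), pstdev([r[k] for r in rows]))
            for k in keys}


def apply_zscore(rows: list, params: dict) -> list:
    """Scale rows with previously fitted parameters; constant columns map to 0."""
    return [{k: ((r[k] - mu) / sd if sd > 0 else 0.0)
             for k, (mu, sd) in params.items()}
            for r in rows]


# Hypothetical descriptor table (illustrative values only).
train = [
    {"logP": 2.0, "TPSA": 40.0},
    {"logP": 4.0, "TPSA": 80.0},
    {"logP": 6.0, "TPSA": 120.0},
]
params = fit_zscore(train)
scaled = apply_zscore(train, params)
print(scaled[0])
```

Fitting the parameters on the training set and reusing them for test or prospective compounds avoids leaking information from held-out data into the model.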

Protocol 2: Construction and Validation of a Proactive Prediction QSAR Model

Objective: To build a validated classification model for interference prediction.

Procedure:

- Model Training: Using the curated dataset (e.g., 1200 compounds), split into a training set (70%) and a test set (30%). Train a Random Forest classifier using the scikit-learn library. Optimize hyperparameters (n_estimators, max_depth) via grid search with 5-fold cross-validation on the training set.

- Model Validation:

- Internal: Apply the optimized model to the hold-out test set. Generate performance metrics (Table 2).

- External: Test the model on a completely new, proprietary library of 500 compounds. Correlate predictions with new experimental DLS/fluorescence data.

- Applicability Domain (AD) Assessment: Calculate the distance of new compounds to the training set (e.g., using leverage or k-NN distance). Flag predictions for compounds outside the AD as "low confidence."
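The k-NN distance variant of the applicability domain check can be sketched as follows. This is one possible formulation, not the only one. The choice of k, the 95th-percentile cutoff, and the toy descriptor vectors are assumptions, and descriptors are assumed to be standardized before distances are computed.

```python
import math


def knn_mean_distance(x, train, k=2, skip_self=False):
    """Mean Euclidean distance from x to its k nearest training points."""
    distances = sorted(math.dist(x, t) for t in train)
    if skip_self:
        distances = distances[1:]  # drop the zero distance to x itself
    return sum(distances[:k]) / k


def ad_threshold(train, k=2, quantile=0.95):
    """Cutoff taken from the distribution of within-training k-NN distances."""
    scores = sorted(knn_mean_distance(t, train, k, skip_self=True) for t in train)
    index = min(len(scores) - 1, int(quantile * len(scores)))
    return scores[index]


def in_domain(x, train, threshold, k=2):
    """True if x lies within the applicability domain of the training set."""
    return knn_mean_distance(x, train, k) <= threshold


# Toy standardized descriptor vectors (illustrative only).
train = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (0.5, 0.5)]
threshold = ad_threshold(train)
print(in_domain((0.5, 0.4), train, threshold))  # near the training data
print(in_domain((5.0, 5.0), train, threshold))  # far from the training data
```

Predictions for compounds failing this check would be reported as "low confidence" rather than discarded.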

Protocol 3: Prospective Deployment in a Screening Pipeline

Objective: To integrate the QSAR model for real-time prediction in early screening.

Procedure:

- Integration: Deploy the validated model as a REST API or a KNIME node.

- Virtual Screening: Before purchasing or synthesizing new compounds for a screening campaign, submit their SMILES strings to the model.

- Triage & Design: Compounds predicted as "High-Risk" for interference are either:

- Deprioritized for purchase.

- Flagged for inclusion of control experiments (e.g., with detergent for aggregators) if assayed.

- Chemically modified to reduce risk descriptors (e.g., reduce logP, modify aromatic systems) in the next design cycle.

Visualizations

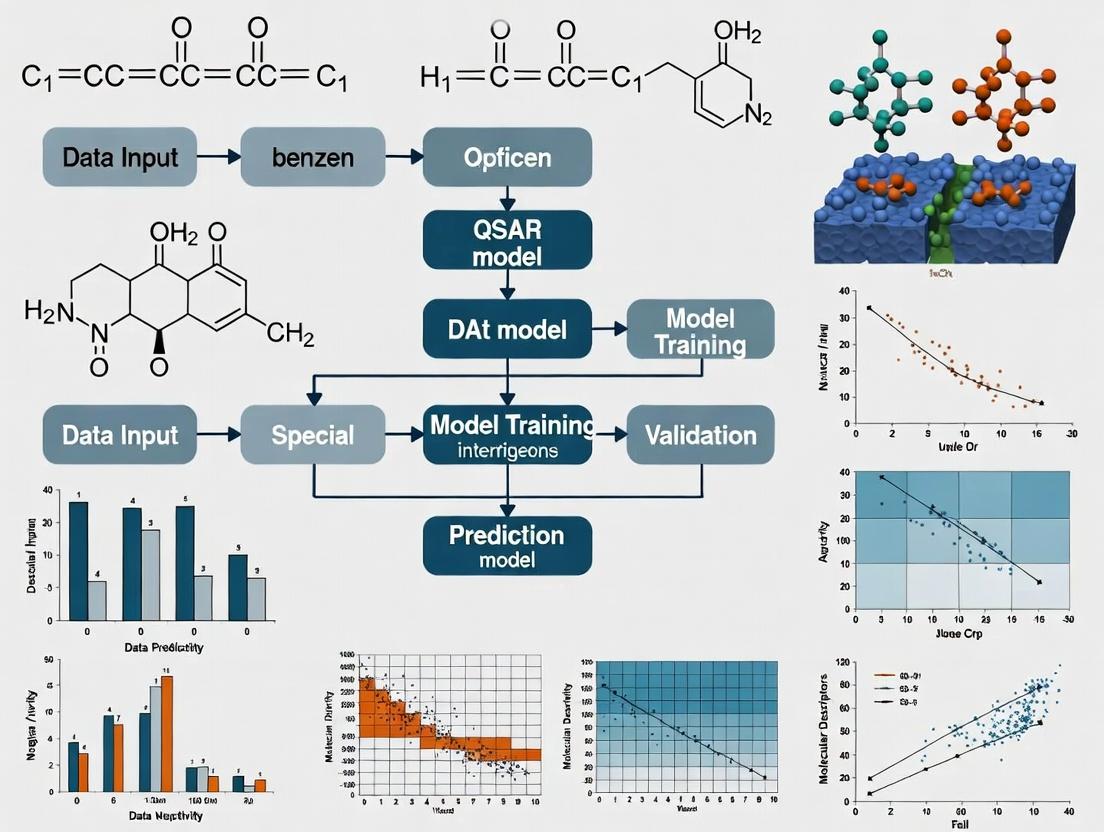

Diagram 1: The QSAR-Driven Paradigm Shift in Screening

Diagram 2: Experimental Workflow for Model Building & Deployment

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Protocol | Example Product/Source |

|---|---|---|

| Dynamic Light Scattering (DLS) Instrument | Measures particle size distribution to confirm nano-aggregate formation. | Malvern Panalytical Zetasizer series, Wyatt DynaPro. |

| Fluorescence Spectrophotometer | Measures excitation/emission spectra to confirm compound fluorescence. | Agilent Cary Eclipse, Tecan Spark. |

| Chemical Descriptor Software | Calculates molecular descriptors (logP, TPSA, etc.) from chemical structures. | RDKit (Open Source), Molecular Operating Environment (MOE). |

| Machine Learning Library | Provides algorithms for building and validating QSAR models. | scikit-learn (Python), R caret package. |

| Assay Buffer (e.g., PBS with 0.01% BSA) | Standardized buffer for DLS and fluorescence confirmatory assays to mimic HTS conditions. | Thermo Fisher Scientific, Sigma-Aldrich. |

| Detergent Control (Triton X-100 or CHAPS) | Added to assay to disrupt aggregators; used to validate aggregation interference. | Sigma-Aldrich. |

| High-Quality DMSO | Compound solubilization solvent. Must be low fluorescence and hygroscopically controlled. | Sigma-Aldrich DMSO Hybri-Max. |

Key Historical and Recent Advances in Interference Prediction Literature

Application Notes on Historical Progression

Foundational Era (Pre-2000s)

The initial recognition of chemical assay interference emerged from observations of false-positive results in high-throughput screening (HTS). Key advances were qualitative, focusing on identifying problematic compound classes through retrospective analysis; these classes were later formalized as pan-assay interference compounds (PAINS). The primary mechanism studied was nonspecific protein reactivity or aggregation.

QSAR Integration Era (2000-2015)

The application of Quantitative Structure-Activity Relationship (QSAR) models marked a shift toward predictive interference assessment. Models evolved from simple rule-based filters (e.g., identifying Michael acceptors, redox-active moieties) to machine learning classifiers trained on large HTS datasets. This era established the core thesis that interference is a predictable property based on chemical structure.

The "Aggregator-Advisor" and Data Consolidation (2015-2020)

The publication of the "Aggregator Advisor" and similar tools represented a major advance by providing publicly accessible, model-driven predictions. Research expanded beyond reactivity to include spectroscopic interference (fluorescence, quenching), membrane potential disruptors, and assay-specific artifacts. Large-scale public datasets, such as those from the PubChem Bioassay resource, became critical for model training.

Contemporary AI and Mechanistic Integration (2020-Present)

Recent advances leverage deep learning (graph neural networks, transformer-based models) and multi-task learning to predict interference across diverse assay technologies. There is a concerted push toward "mechanistically informed" models that predict not just interference likelihood, but also the probable mechanism (e.g., aggregation, fluorescence, chemical reactivity with a specific assay component). Integration with high-content imaging and spectral data is a frontier.

Table 1: Evolution of Key Predictive Model Performance Metrics

| Era (Example Model/Tool) | Primary Algorithm | Typical Dataset Size (Compounds) | Reported Accuracy/Precision | Key Limitation |

|---|---|---|---|---|

| Foundational (Rule-based filters) | Structural Alerts | 1,000 - 10,000 | High specificity, low recall (~30% recall) | Misses novel interference scaffolds |

| QSAR Integration (Baell & Holloway, 2010 PAINS) | SMARTS patterns | ~4,000 (annotated) | Not quantitatively reported | High false-positive rate in certain chemotypes |

| Data Consolidation (Aggregator Advisor, 2015) | Naïve Bayes, Random Forest | ~850,000 (from PubChem) | AUC-ROC: 0.70-0.85 (assay-dependent) | Limited to aggregation-based interference |

| Contemporary AI (ChemInterp, 2023) | Graph Neural Network | >2,000,000 (multi-source) | AUC-PR: 0.82, MCC: 0.65 | Computationally intensive; requires significant tuning |

Detailed Experimental Protocols

Protocol for Validating Aggregation-Based Interference (Dynamic Light Scattering Assay)

Purpose: To confirm if a predicted aggregator forms colloidal aggregates in assay buffer.

Materials:

- Candidate compound stock solution (10 mM in DMSO)

- Assay buffer (e.g., PBS, pH 7.4)

- Dynamic Light Scattering (DLS) instrument (e.g., Malvern Zetasizer)

- 0.02 µm filtered assay buffer

- Low-volume quartz cuvette

Procedure:

- Sample Preparation: Dilute the candidate compound in filtered assay buffer to a final concentration of 10-50 µM (final DMSO ≤0.5%). Prepare a vehicle control (buffer with same % DMSO).

- Instrument Equilibration: Power on DLS instrument and allow laser to stabilize for 15 minutes. Set temperature to 25°C.

- Measurement: Load sample into clean cuvette, place in instrument. Set measurement parameters: 3 runs of 10 seconds each. Record the intensity-weighted size distribution.

- Data Analysis: Analyze the correlation function using instrument software. A positive result is indicated by a population of particles with hydrodynamic radius > 50 nm that is not present in the vehicle control.

- Confirmatory Test (Triton X-100): Repeat measurement with the addition of 0.01% v/v Triton X-100 nonionic detergent. Disruption of the particle population and loss of signal confirms detergent-sensitive aggregation.

Protocol for Fluorescence Interference Profiling (Dual-Wavelength Scan)

Purpose: To characterize a compound's fluorescent properties across excitation/emission wavelengths relevant to common assays.

Materials:

- Candidate compound stock (10 mM in DMSO)

- Black, flat-bottom 384-well plates

- Multi-mode plate reader with spectral scanning capability

- Relevant assay buffers (PBS, TRIS, etc.)

Procedure:

- Plate Setup: Dispense 50 µL of buffer + 0.5% DMSO (control) or buffer containing candidate compound at 10 µM final concentration into designated wells. Use triplicates for each condition.

- Excitation Scan: Set the emission monochromator to a common assay emission wavelength (e.g., 520 nm for GFP/FITC assays). Program the reader to perform an excitation scan from 200 nm to 600 nm in 5-10 nm increments. Record fluorescence intensity.

- Emission Scan: Set the excitation monochromator to a common assay excitation wavelength (e.g., 485 nm). Program an emission scan from 300 nm to 650 nm.

- Interference Calculation: For each assay-relevant filter pair (e.g., Ex485/Em520), calculate the interference potential (IP): IP = (Signal_compound - Signal_control) / Signal_control. An IP > 10% or < -10% (quenching) is considered significant.

- Corrective Action: If interference is detected, note the spectral profile. For a fluorescent compound, shifting assay filters away from the compound's peak may mitigate interference.
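The interference potential calculation can be written out as a short worked example. The formula and the ±10% cutoff come from the procedure above. The function names, the class labels, and the triplicate readings are hypothetical.

```python
from statistics import mean


def interference_potential(signal_compound: float, signal_control: float) -> float:
    """IP = (Signal_compound - Signal_control) / Signal_control."""
    if signal_control == 0:
        raise ValueError("control signal must be non-zero")
    return (signal_compound - signal_control) / signal_control


def classify_ip(ip: float, cutoff: float = 0.10) -> str:
    """Label the result using the +/-10% significance cutoff."""
    if ip > cutoff:
        return "fluorescent"
    if ip < -cutoff:
        return "quenching"
    return "not significant"


# Hypothetical triplicate readings at Ex485/Em520 (illustrative only).
compound_wells = [1320, 1290, 1350]
control_wells = [1000, 990, 1010]
ip = interference_potential(mean(compound_wells), mean(control_wells))
print(f"IP = {ip:.0%} -> {classify_ip(ip)}")
```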

Visualizations

Title: Evolution of Interference Prediction Research Eras

Title: General QSAR Model Workflow for Interference Prediction

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Reagents for Interference Investigation

| Item | Function/Brief Explanation | Example/Catalog Consideration |

|---|---|---|

| Triton X-100 | Non-ionic detergent used to disrupt detergent-sensitive colloidal aggregates, confirming aggregation-based interference. | Sigma-Aldrich, T8787 |

| β-Lactoglobulin | Model protein used in positive control experiments for aggregator compounds. | Sigma-Aldrich, L3908 |

| Hill Dye Cocktail (Fluorescent) | A mixture of fluorescent dyes used to profile and identify spectral interference across common wavelengths. | Thermo Fisher, H10299 |

| Redox-Sensitive Dye (e.g., DCFH-DA) | Used to test if a compound causes oxidative interference or generates reactive oxygen species in assay buffer. | Cayman Chemical, 85155 |

| Chelator (e.g., EDTA) | Used to test for metal-dependent interference or compound chelation. | Thermo Fisher, AM9260G |

| BSA (Fatty-Acid Free) | Used to test for interference mediated by non-specific protein binding or sequestration. | Sigma-Aldrich, A7030 |

| Specialized Assay Buffer Kits | Pre-formulated, low-fluorescence, low-autofluorescence buffers for sensitive biochemical assays. | Corning, CLS3303500 |

| Reference Aggregators (e.g., Congo Red) | Positive control compounds for aggregation interference studies. | Sigma-Aldrich, C6277 |

| Reference Fluorescent Compounds (e.g., Quinine sulfate) | Controls for calibrating and validating fluorescence interference assays. | Sigma-Aldrich, 207837 |

Core Chemical Descriptors and Structural Alerts Linked to Interfering Behaviors

Within the broader thesis on Quantitative Structure-Activity Relationship (QSAR) models for chemical-assay interference prediction, identifying core molecular descriptors and structural fragments is paramount. Interfering compounds, often termed pan-assay interference compounds (PAINS), generate false-positive signals across various assay formats, confounding early drug discovery. This application note details the key chemical descriptors, structural alerts, and experimental protocols for their identification and validation, aiming to build robust in-silico filters and predictive models.

Core Chemical Descriptors and Structural Alerts

The table below summarizes the primary chemical descriptors and structural alert classes linked to established interfering behaviors, based on recent literature and cheminformatics analyses.

Table 1: Key Descriptor Classes and Structural Alerts for Assay Interference

| Descriptor Category | Specific Descriptor / Alert Name | Typical Range/Value in Interferors | Associated Interference Mechanism |

|---|---|---|---|

| Physicochemical | LogP (Octanol-water partition coefficient) | > 5.0 (Highly lipophilic) | Non-specific membrane disruption, compound aggregation. |

| Physicochemical | Topological Polar Surface Area (TPSA) | < 75 Ų | Promotes membrane permeability & non-specific binding. |

| Reactivity | Michael Acceptor motif (e.g., α,β-unsaturated carbonyl) | Presence = Alert | Electrophilic reactivity with cysteines in assay proteins. |

| Reactivity | Redox-active moiety (e.g., quinone, hydroquinone) | Presence = Alert | Generates reactive oxygen species or undergoes redox cycling. |

| Spectroscopic | Predicted absorbance at assay wavelength (e.g., 300-500 nm) | High molar absorptivity | Fluorescence or absorbance overlap, causing signal interference. |

| Aggregation Propensity | Calculated Aggregation Index (e.g., from DLS simulations) | > Threshold (e.g., 0.5) | Forms colloidal aggregates inhibiting enzymes non-specifically. |

| Structural Alert (PAINS) | Rhodanine | Presence = Alert | Promiscuous, redox-active, often yields invalid leads. |

| Structural Alert (PAINS) | Curcuminoid | Presence = Alert | Photo-reactive, unstable, chelator, frequent hitter. |

| Structural Alert (PAINS) | Enone (isolated) | Presence = Alert | Electrophilic, prone to Michael addition. |

Experimental Protocols for Interference Validation

Protocol 3.1: Aggregate Formation Detection via Dynamic Light Scattering (DLS)

Objective: To confirm if a compound forms colloidal aggregates in assay buffer, a primary mechanism of biochemical assay interference.

Materials: See Scientist's Toolkit.

Procedure:

- Prepare a 10 mM stock solution of the test compound in 100% DMSO.

- Dilute the compound to a final concentration of 50 µM in assay buffer (e.g., PBS, pH 7.4, with 0.01% Triton X-100 as optional control). Maintain DMSO concentration ≤ 1%.

- Incubate the solution at 25°C for 30 minutes.

- Load 60 µL of the solution into a low-volume quartz cuvette.

- Perform DLS measurement using a Zetasizer or equivalent:

- Set temperature to 25°C.

- Perform 12 sub-runs of 10 seconds each.

- Record the mean hydrodynamic diameter (Z-average, d.nm) and polydispersity index (PDI).

- Interpretation: A Z-average > 50 nm with a PDI < 0.2 suggests monodisperse aggregate formation. Compare with buffer-only and non-aggregating control (e.g., known drug molecule).

- Include a detergent sensitivity test: Repeat with 0.01% Triton X-100. Disappearance of aggregate signal confirms detergent-reversible aggregation.

Protocol 3.2: Fluorescence Interference Assay (Inner Filter Effect & Fluorescence Quenching)

Objective: To quantify compound interference in fluorescence-based assays.

Materials: Black 384-well plate, fluorescent probe (e.g., Fluorescein, 1 µM in PBS), plate reader.

Procedure:

- In a black 384-well plate, serially dilute the test compound in assay buffer across a concentration range (e.g., 0.1 µM to 100 µM). Include a buffer-only control column.

- Add an equal volume of 2 µM fluorescent probe solution (1 µM final) to all wells. Final DMSO ≤ 1%.

- Incubate protected from light for 15 minutes at 25°C.

- Read fluorescence at the probe's excitation/emission maxima (e.g., 485/535 nm for Fluorescein).

- Data Analysis: Calculate % signal change relative to buffer control. A concentration-dependent decrease suggests quenching; an increase may indicate auto-fluorescence. Correct for inner filter effect using the formula:

F_corr = F_obs × 10^((A_ex + A_em)/2), where A_ex and A_em are the sample absorbances at the excitation and emission wavelengths.
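As a worked example of the inner filter correction, the sketch below applies the formula to a single well. The function name and the numeric readings are hypothetical. The correction is an approximation and is generally expected to degrade as the absorbance values grow large.

```python
def correct_inner_filter(f_obs: float, a_ex: float, a_em: float) -> float:
    """F_corr = F_obs * 10 ** ((A_ex + A_em) / 2)."""
    if a_ex < 0 or a_em < 0:
        raise ValueError("absorbance values must be non-negative")
    return f_obs * 10 ** ((a_ex + a_em) / 2)


# Hypothetical reading: observed fluorescence of 800 counts with
# absorbances of 0.10 (excitation) and 0.06 (emission).
f_corr = correct_inner_filter(800.0, a_ex=0.10, a_em=0.06)
print(round(f_corr, 1))
```

If the corrected signal returns to the buffer-control level, the apparent decrease is explained by light absorption rather than by true quenching of the probe.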

Protocol 3.3: Reactivity Probe Assay (for Electrophilic Compounds)

Objective: To detect thiol reactivity, indicative of potential Michael acceptor or other electrophile interference.

Materials: Glutathione (GSH, 1 mM in PBS), Ellman's reagent (DTNB, 100 µM in PBS), UV-Vis plate reader.

Procedure:

- Prepare a 10 mM solution of test compound in DMSO.

- In a clear 96-well plate, mix GSH solution (final 500 µM) with test compound (final 100 µM) or DMSO vehicle in PBS. Final volume 100 µL.

- Incubate at 37°C for 1 hour.

- Add 20 µL of DTNB solution (final ~17 µM).

- Incubate 5 minutes at 25°C and measure absorbance at 412 nm.

- Interpretation: Reduced absorbance compared to DMSO control indicates GSH adduct formation (thiol reactivity). Report as % GSH depletion.
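The % GSH depletion readout can be computed as follows. This is a small sketch under stated assumptions: the function name is hypothetical, and the optional blank subtraction (a well without GSH) is an added convenience that the protocol itself does not specify.

```python
def percent_gsh_depletion(a412_sample: float,
                          a412_vehicle: float,
                          a412_blank: float = 0.0) -> float:
    """Percent loss of free thiol signal relative to the DMSO vehicle control.

    a412_blank is an assumed no-GSH background reading; leave it at 0.0
    if no blank well was measured.
    """
    vehicle_signal = a412_vehicle - a412_blank
    if vehicle_signal <= 0:
        raise ValueError("vehicle control must exceed the blank")
    sample_signal = a412_sample - a412_blank
    return 100.0 * (1.0 - sample_signal / vehicle_signal)


# Hypothetical absorbance readings at 412 nm.
depletion = percent_gsh_depletion(a412_sample=0.45, a412_vehicle=0.85, a412_blank=0.05)
print(f"GSH depletion: {depletion:.1f}%")
```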

Visualizations

Diagram Title: Key Molecular Pathways Leading to Assay Interference

Diagram Title: QSAR Model Development for Interference Prediction

The Scientist's Toolkit: Key Research Reagents and Materials

Table 2: Essential Reagents for Interference Studies

| Item Name | Supplier Examples | Function in Protocols |

|---|---|---|

| Triton X-100 Detergent | Sigma-Aldrich, Thermo Fisher | Used in DLS to test reversibility of compound aggregation. |

| Reduced Glutathione (GSH) | Cayman Chemical, MilliporeSigma | Reactive thiol probe for identifying electrophilic compounds. |

| Ellman's Reagent (DTNB) | Thermo Fisher, Abcam | Colorimetric reagent to quantify free thiol concentration. |

| Fluorescein Sodium Salt | Sigma-Aldrich, Bio-Rad | Standard fluorescent probe for interference (quenching/inner filter) assays. |

| Dynamic Light Scattering (DLS) Zeta Potential Standard | Malvern Panalytical | Used for calibration and validation of DLS instrument performance. |

| Low-Binding 384-Well Microplates (Black/Clear) | Corning, Greiner Bio-One | Minimizes non-specific compound binding for fluorescence/UV-Vis assays. |

| Assay Buffer Salts (PBS, TRIS, HEPES) | Various | Provides consistent physiological pH and ionic strength. |

| High-Quality Anhydrous DMSO | Sigma-Aldrich (D8418), Alfa Aesar | Primary solvent for compound stocks; low absorbance in UV range is critical. |

Building the Predictor: A Step-by-Step Guide to QSAR Model Development for Interference

Within Quantitative Structure-Activity Relationship (QSAR) modeling for chemical-assay interference (CAI) prediction, the quality of the predictive model is intrinsically tied to the quality of its training data. A gold-standard dataset of positive (native) data, meaning verified, non-interfering compounds that yield true biological activity in a specific assay, is foundational. This document outlines protocols for curating such datasets, framed within CAI-QSAR research, so that true bioactivity can be distinguished from assay artifacts.

Reliable positive data is sourced from experimental results where the mechanism of action is confirmed and interference mechanisms are rigorously ruled out.

| Source | Description | Key Considerations for CAI Research |

|---|---|---|

| PubChem BioAssay (AID 743255) | Dose-response confirmation data from the NCATS assay interference library. | Provides confirmatory data from orthogonal assays. |

| ChEMBL (Version 33) | Manually curated bioactive molecules with drug-like properties. | Use only records with "Direct" target assignment and high confidence score (≥8). |

| BRENDA | Enzyme-specific functional assay data under optimized conditions. | Filter for native substrates and recommended pH/temperature. |

| Internal HTS Campaigns | Corporate data with full pharmacological validation profiles. | Requires secondary confirmation via SPR or cellular phenotypic assays. |

| Literature (PubMed) | Peer-reviewed journals detailing mechanistic studies. | Prioritize studies employing counter-screens (e.g., redox, fluorescence quenching). |

Core Challenges in Data Curation

- Misannotation Propagation: Historical mislabeling of interferent compounds as active in public databases.

- Assay Condition Variability: Buffer composition, detergent concentration, and enzyme source can alter compound behavior.

- Confounder Compounds: Compounds acting via non-target-specific mechanisms (e.g., aggregation, reactivity, fluorescence).

- Data Standardization: Inconsistent representation of chemical structures (tautomers, stereochemistry, salt forms).

Application Notes & Protocols

Protocol 4.1: Data Extraction and Triage from Public Repositories

Objective: Extract high-confidence positive data from ChEMBL for a specific protein target (e.g., Tyrosine-protein kinase JAK2). Materials: See "Scientist's Toolkit" below. Procedure:

- Query: Execute an SQL query on the ChEMBL database: select compounds with target_confidence = 9, pchembl_value >= 6.0, assay_type = 'B' (binding), and relationship_type = 'D' (direct interaction).

- Filter: Remove compounds flagged in any PubChem interference assay (AID 743255, 624039). Cross-reference with the "PAINS" (Pan Assay Interference Compounds) filter using the RDKit toolkit.

- Standardize: Apply the "Standardizer" tool (RDKit) with rules: neutralize charges, remove solvents, retain major tautomer, canonicalize stereochemistry.

- Curate: Manually inspect remaining entries against primary literature for confirmatory evidence (e.g., X-ray co-crystallization).
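The query and filter steps of this protocol can be sketched on already-exported records. The example below is an assumption-laden illustration: the record keys simply mirror the field names used in the query step, `compound_id` and the example rows are hypothetical, and the set of flagged identifiers stands in for whatever list is assembled from the interference assays and the PAINS filter.

```python
from typing import Dict, Iterable, List, Set


def triage(records: Iterable[Dict], flagged_ids: Set[str]) -> List[Dict]:
    """Keep high-confidence direct binders that are not flagged as interferents."""
    kept = []
    for rec in records:
        passes_query = (
            rec["target_confidence"] == 9
            and rec["pchembl_value"] is not None
            and rec["pchembl_value"] >= 6.0
            and rec["assay_type"] == "B"          # binding assay
            and rec["relationship_type"] == "D"   # direct interaction
        )
        if passes_query and rec["compound_id"] not in flagged_ids:
            kept.append(rec)
    return kept


# Hypothetical exported rows.
records = [
    {"compound_id": "CPD-1", "target_confidence": 9, "pchembl_value": 7.2,
     "assay_type": "B", "relationship_type": "D"},
    {"compound_id": "CPD-2", "target_confidence": 9, "pchembl_value": 5.1,
     "assay_type": "B", "relationship_type": "D"},   # too weak
    {"compound_id": "CPD-3", "target_confidence": 9, "pchembl_value": 8.0,
     "assay_type": "F", "relationship_type": "D"},   # not a binding assay
    {"compound_id": "CPD-4", "target_confidence": 9, "pchembl_value": 6.5,
     "assay_type": "B", "relationship_type": "D"},   # flagged as interferent
]
flagged = {"CPD-4"}

kept_ids = [rec["compound_id"] for rec in triage(records, flagged)]
print(kept_ids)
```

Structure standardization and manual literature inspection still follow this automated triage; the sketch covers only the rule-based part.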

Protocol 4.2: Orthogonal Confirmatory Assay for Positive Data Validation

Objective: Experimentally validate a candidate positive compound using a secondary, biophysical assay. Workflow: See Diagram 1. Procedure:

- Primary HTS Hit: Identify compound from a JAK2 enzymatic assay at 10 µM.

- Dose-Response: Confirm potency in the primary assay (11-point, 1:3 dilution).

- Counter-Screen: Test compound in interference assay panels (e.g., fluorescence interference, chemical reactivity, aggregation via dynamic light scattering).

- Orthogonal Assay: Validate binding using Surface Plasmon Resonance (SPR) with immobilized JAK2 kinase domain. A positive result requires a concentration-dependent sensorgram with measurable association (kon) and dissociation (koff) kinetics.

- Cellular Assay: Confirm functional activity in a cell line with JAK2-dependent STAT5 phosphorylation (pSTAT5) measured via ELISA.

Protocol 4.3: Data Curation Workflow for CAI-QSAR Modeling

Objective: Integrate and format validated data for QSAR model training. Workflow: See Diagram 2. Procedure:

- Aggregation: Merge validated compound lists from internal and external sources.

- Descriptor Calculation: Compute molecular descriptors (e.g., MOE, Dragon) and fingerprints (ECFP6).

- Chemical Space Analysis: Perform PCA on descriptors to ensure diversity of positive set.

- Label Assignment: Assign class label "1" (positive/native) to all curated compounds.

- Final Dataset Assembly: Create a table of

[Canonical_SMILES, Standardized_Name, pChEMBL_Value/IC50, Assay_ID, Descriptor_Vector].

Mandatory Visualizations

Diagram 1 Title: Orthogonal Validation Workflow for Positive Data

Diagram 2 Title: Data Curation Pipeline for QSAR Modeling

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Materials

| Item/Reagent | Function in Positive Data Curation |

|---|---|

| ChEMBL Database (v33+) | Primary source of annotated bioactive molecules with confidence scores. |

| RDKit Cheminformatics Toolkit | Open-source platform for chemical standardization, PAINS filtering, and descriptor calculation. |

| NCATS Assay Interference Library (PubChem AID 743255) | Critical resource for identifying and filtering known interferent compounds. |

| Surface Plasmon Resonance (SPR) Instrument (e.g., Biacore) | Label-free, orthogonal method to confirm direct, stoichiometric binding of compound to target. |

| Dynamic Light Scattering (DLS) Plate Reader | Detects compound aggregation, a common interference mechanism, at assay-relevant concentrations. |

| Cellular Assay Kit (e.g., pSTAT5 ELISA) | Confirms target engagement and functional activity in a physiologically relevant cellular context. |

| MOE or Dragon Software | Computes comprehensive sets of 2D/3D molecular descriptors for chemical space analysis. |

| Standardized Assay Buffer (with DTT & Chelators) | Reduces false positives from redox-cycling or metal-mediated compound reactivity. |

Within the broader thesis on developing robust QSAR models for predicting chemical-assay interference, the selection of molecular descriptors that directly map to known interference mechanisms is a critical step. This document outlines application notes and detailed protocols for identifying and validating descriptors that correlate with mechanisms such as compound aggregation, redox cycling, singlet oxygen generation, and direct protein reactivity. The goal is to build predictive models with high mechanistic interpretability and reduced false-positive rates in early drug discovery.

Descriptor Selection Framework & Quantitative Analysis

Descriptors are selected based on their hypothesized link to physicochemical underpinnings of interference. The following table summarizes key descriptor categories and their mechanistic relevance, supported by recent literature analyses.

Table 1: Molecular Descriptor Categories for Interference Mechanisms

| Interference Mechanism | Relevant Descriptor Categories | Example Specific Descriptors | Typical Problematic Range/Value | Primary Literature Support |

|---|---|---|---|---|

| Aggregation | Hydrophobicity, Molecular Size, 3D Shape | LogP, Topological Polar Surface Area (TPSA), Number of Rotatable Bonds, Molecular Weight | High LogP (>3), Low TPSA (<75 Ų) | Irwin et al., 2015; Shoichet et al., 2020 |

| Redox Cycling | Electrochemical, Substructural | Calculated Reduction Potential, Presence of Quinone-like substructures (PubChem FP 881) | Reduction Potential > -0.5 V | Aldrich et al., 2020; Johnston, 2021 |

| Singlet Oxygen Generation | Photophysical, Electronic | Calculated Singlet-Triplet Energy Gap (ΔEST), Absorption Wavelength (λabs) | Low ΔEST (<1 eV), λabs > 400 nm | Schmitz et al., 2022 |

| Reactive Electrophiles | Chemical Reactivity, Atomic Partial Charges | Suspector Alert Scores, Hard Soft Acid Base (HSAB) η value, LUMO Energy | High Suspector Score, Low LUMO Energy | Baell & Holloway, 2010; Sushko et al., 2012 (PAINS) |

| Metal Chelation | Donor Atom Count, Topological | Number of O/N donor atoms (e.g., catechol, hydroxamate), Molecular Fingerprint Bits | ≥3 donor atoms in proximity | Capuzzi et al., 2017 |

Experimental Protocols for Descriptor Validation

Protocol 3.1: Experimental Confirmation of Aggregation-Prone Compounds

- Objective: To validate computational predictions of aggregation using dynamic light scattering (DLS).

- Materials: Test compounds, DMSO, assay buffer (e.g., PBS, pH 7.4), Dynamic Light Scattering instrument.

- Procedure:

- Prepare a 10 mM stock solution of the compound in DMSO.

- Dilute the stock in assay buffer to a final concentration of 50-200 µM (final DMSO ≤1%).

- Incubate the solution at assay temperature (e.g., 25°C) for 30 minutes.

- Load sample into a low-volume quartz cuvette.

- Perform DLS measurement with 3 runs of 30 seconds each.

- Analyze the intensity-weighted size distribution. A population with a hydrodynamic diameter > 50 nm indicates aggregation.

- Data Integration: Compounds with high LogP/low TPSA and positive DLS readout are tagged as "confirmed aggregators."
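The data integration rule can be expressed as a short Python sketch. The cutoffs are taken from Table 1 and the DLS interpretation step above (LogP > 3, TPSA < 75 Ų, hydrodynamic diameter > 50 nm); the class, the function names, and the example compounds are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

LOGP_CUTOFF = 3.0               # "High LogP (>3)"
TPSA_CUTOFF = 75.0              # "Low TPSA (<75 A^2)"
DLS_DIAMETER_CUTOFF_NM = 50.0   # DLS population above 50 nm


@dataclass
class Compound:
    name: str
    logp: float
    tpsa: float
    dls_diameter_nm: Optional[float] = None  # None means DLS was not measured


def predicted_aggregator(c: Compound) -> bool:
    """Descriptor-based prediction only."""
    return c.logp > LOGP_CUTOFF and c.tpsa < TPSA_CUTOFF


def dls_positive(c: Compound) -> bool:
    """Experimental readout only."""
    return c.dls_diameter_nm is not None and c.dls_diameter_nm > DLS_DIAMETER_CUTOFF_NM


def is_confirmed_aggregator(c: Compound) -> bool:
    """Tag requires both the descriptor profile and a positive DLS result."""
    return predicted_aggregator(c) and dls_positive(c)


# Hypothetical compounds.
compounds = [
    Compound("cpd_A", logp=4.6, tpsa=48.0, dls_diameter_nm=310.0),
    Compound("cpd_B", logp=4.1, tpsa=60.0, dls_diameter_nm=4.0),
    Compound("cpd_C", logp=1.2, tpsa=110.0),
]
confirmed = [c.name for c in compounds if is_confirmed_aggregator(c)]
print(confirmed)
```

Keeping the prediction and the measurement as separate functions makes it easy to also list compounds where the two disagree, which are useful for refining the descriptor cutoffs.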

Protocol 3.2: High-Throughput Redox Cycling Assay (Nitroblue Tetrazolium - NBT Reduction)

- Objective: Experimentally identify redox-active compounds.

- Materials: Test compounds in DMSO, Nitroblue Tetrazolium (NBT), NADH, phosphate buffer (0.1 M, pH 7.4), 384-well plate, plate reader.

- Procedure:

- In a 384-well plate, add 50 µL of phosphate buffer containing 200 µM NBT and 200 µM NADH.

- Add 0.5 µL of 10 mM compound stock (final concentration 100 µM) or DMSO control.

- Incubate at 25°C for 60 minutes.

- Measure absorbance at 560 nm (formation of insoluble formazan).

- Calculate the percentage increase in absorbance relative to the DMSO control. A compound is considered positive if its absorbance exceeds the mean of the DMSO control wells by more than 3 standard deviations.

- Validation: Correlate positive hits with calculated reduction potential descriptors.
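Hit calling for this assay can be sketched as below. The example assumes that the standard deviation is estimated from replicate DMSO control wells on the same plate; the function name and all absorbance values are hypothetical.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple


def call_redox_hits(dmso_a560: List[float],
                    compound_a560: Dict[str, float],
                    n_sd: float = 3.0) -> Tuple[float, Dict[str, Tuple[float, bool]]]:
    """Return the hit threshold and, per compound, (% increase, is_hit)."""
    control_mean = mean(dmso_a560)
    control_sd = stdev(dmso_a560)          # sample SD of the control wells
    threshold = control_mean + n_sd * control_sd
    results = {}
    for name, a560 in compound_a560.items():
        pct_increase = 100.0 * (a560 - control_mean) / control_mean
        results[name] = (pct_increase, a560 > threshold)
    return threshold, results


# Hypothetical absorbance readings at 560 nm.
dmso_wells = [0.100, 0.102, 0.098, 0.101, 0.099]
compound_wells = {"cpd_A": 0.250, "cpd_B": 0.103}

threshold, results = call_redox_hits(dmso_wells, compound_wells)
for name, (pct, is_hit) in results.items():
    print(f"{name}: {pct:+.1f}% vs DMSO, hit={is_hit}")
```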

Protocol 3.3: Singlet Oxygen Generation Detection via Chemical Trapping (DPBF Assay)

- Objective: Confirm compounds capable of photosensitized singlet oxygen generation.

- Materials: Test compound, 1,3-Diphenylisobenzofuran (DPBF), LED light source (450 nm), spectrometer, quartz cuvette, methanol.

- Procedure:

- Prepare a solution of 50 µM DPBF in methanol.

- Add test compound to a final concentration of 10 µM.

- Irradiate the solution with the LED light source (e.g., 10 mW/cm² for 10 mins).

- Monitor the decay of DPBF absorbance at 410 nm every 2 minutes.

- Calculate the rate constant of DPBF decay. Compare to a dark control (no light) and a no-sensitizer control.

- Descriptor Link: Positive hits should correlate with low calculated ΔE_ST.
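One way to obtain the DPBF decay rate constant is a log-linear least-squares fit. The sketch below assumes pseudo-first-order decay, A(t) = A0 · exp(-k·t), which the protocol does not state explicitly; the function name and the synthetic absorbance series are hypothetical.

```python
import math
from typing import Sequence


def first_order_rate_constant(times_min: Sequence[float],
                              absorbances: Sequence[float]) -> float:
    """Estimate k (per minute) from the slope of ln(A) versus time."""
    if len(times_min) != len(absorbances) or len(times_min) < 2:
        raise ValueError("need at least two matching time/absorbance points")
    log_abs = [math.log(a) for a in absorbances]
    n = len(times_min)
    t_mean = sum(times_min) / n
    y_mean = sum(log_abs) / n
    sxx = sum((t - t_mean) ** 2 for t in times_min)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times_min, log_abs))
    return -sxy / sxx  # decay gives a negative slope, so k is positive


# Synthetic readings every 2 minutes over 10 minutes (absorbance at 410 nm).
times = [0, 2, 4, 6, 8, 10]
irradiated = [1.0 * math.exp(-0.1 * t) for t in times]  # simulated sensitizer
dark_control = [1.0] * len(times)                        # no decay expected

k_light = first_order_rate_constant(times, irradiated)
k_dark = first_order_rate_constant(times, dark_control)
print(f"k_light = {k_light:.3f} per min, k_dark = {k_dark:.3f} per min")
```

A compound would be reported as a sensitizer only if its rate constant clearly exceeds both the dark control and the no-sensitizer control.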

Visualizing the Descriptor Selection & Validation Workflow

Diagram Title: Workflow for Mechanism-Driven Descriptor Selection

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Interference Mechanism Studies

| Item / Reagent | Supplier Examples | Function in Protocol |

|---|---|---|

| Nitroblue Tetrazolium (NBT) | Sigma-Aldrich, Thermo Fisher | Substrate for detecting superoxide/reduction in redox cycling assays (Protocol 3.2). |

| 1,3-Diphenylisobenzofuran (DPBF) | TCI Chemicals, Sigma-Aldrich | Chemical trap for singlet oxygen; its decay is monitored spectrophotometrically (Protocol 3.3). |

| Dynamic Light Scattering (DLS) Instrument | Malvern Panalytical (Zetasizer), Wyatt Technology | Measures hydrodynamic particle size to confirm nano-aggregate formation (Protocol 3.1). |

| NADH (Disodium Salt) | Roche, Sigma-Aldrich | Electron donor used in redox cycling assays to initiate the reduction process. |

| 384-Well, Clear Bottom, Assay Plates | Corning, Greiner Bio-One | Platform for high-throughput spectrophotometric interference assays. |

| RDKit or PaDEL-Descriptor Software | Open Source | Calculates 2D/3D molecular descriptors from chemical structures for initial filtering. |

| Suspector or PAINS Filtering Tools | Open Source (e.g., RDKit implementation) | Identifies substructures associated with reactive or promiscuous compounds. |

Predicting chemical-assay interference (e.g., aggregation, reactivity, fluorescence, light scattering) is a critical step in early drug discovery to eliminate false positives in high-throughput screening. Quantitative Structure-Activity Relationship (QSAR) models built using various machine learning (ML) algorithms can identify such interfering compounds based on their structural and physicochemical features. This document provides Application Notes and Protocols for implementing key ML methods—Random Forest (RF), Support Vector Machine (SVM), XGBoost, and Deep Learning (DL)—within this research context.

The following table summarizes the core characteristics and recent benchmark performance of each algorithm on public chemical interference datasets (e.g., PAINS, ALARM NMR).

Table 1: Algorithm Comparison for QSAR-Based Interference Prediction

| Algorithm | Key Mechanism | Typical Data Scale | Avg. Accuracy (Recent Benchmarks) | Avg. AUC-ROC | Key Pros for Interference Prediction | Key Cons for Interference Prediction |

|---|---|---|---|---|---|---|

| Random Forest (RF) | Ensemble of decorrelated decision trees using bagging | 1K - 100K compounds, 100-5K features | 0.85 - 0.89 | 0.88 - 0.92 | Robust to noise, provides feature importance, less prone to overfitting. | Can overfit on very noisy datasets; limited extrapolation. |

| Support Vector Machine (SVM) | Finds optimal hyperplane maximizing margin between classes | 100 - 10K compounds, 100-1K features | 0.83 - 0.87 | 0.85 - 0.90 | Effective in high-dimensional spaces; strong theoretical foundations. | Computationally heavy for large datasets; sensitive to kernel choice. |

| XGBoost | Gradient boosting ensemble with sequential tree building & regularization | 1K - 500K compounds, 100-10K features | 0.87 - 0.91 | 0.90 - 0.94 | High predictive performance; built-in handling of missing data. | Can overfit without careful tuning; less interpretable than RF. |

| Deep Learning (DL) | Multi-layer neural networks learning hierarchical feature representations | 10K - 1M+ compounds, 100-10K features (or SMILES strings) | 0.88 - 0.93 | 0.91 - 0.95 | Can learn from raw data (e.g., SMILES); models complex non-linear relationships. | Requires very large data; computationally intensive; "black box." |

Detailed Experimental Protocols

Protocol 3.1: Standardized Workflow for QSAR Model Development

This protocol outlines the common pipeline for building a QSAR classification model for assay interference prediction.

Materials & Software: Python/R, RDKit, Scikit-learn, XGBoost, TensorFlow/PyTorch, Jupyter Notebook. Dataset: Curated chemical library with labeled interference compounds (e.g., from PubChem BioAssay).

Procedure:

- Data Curation: Compound structures (SMILES/SDF) are standardized using RDKit (neutralization, salt stripping, tautomer normalization). Known interfering compounds are labeled as "1" and clean compounds as "0".

- Descriptor Calculation: Compute molecular descriptors (e.g., MOE, RDKit descriptors) and/or fingerprints (ECFP4, MACCS keys) for each compound.

- Dataset Splitting: Split data into Training (70%), Validation (15%), and Hold-out Test (15%) sets using Stratified Splitting to maintain class balance. Apply Scaffold Splitting for a more realistic assessment of model generalizability to novel chemotypes.

- Feature Preprocessing: On the training set only, apply feature scaling (StandardScaler for SVM/DL; not required for tree-based methods) and remove low-variance features.

- Model Training & Hyperparameter Tuning: For each algorithm, use the Validation set and Bayesian Optimization (or Grid Search) with 5-fold cross-validation to identify optimal hyperparameters (see Protocol 3.2).

- Model Evaluation: Retrain the best model on the combined Training+Validation set. Evaluate final performance on the Hold-out Test set using Accuracy, AUC-ROC, Precision, Recall, and F1-score. Generate confusion matrices.

- Interpretation: For RF/XGBoost, analyze feature importance plots. For DL, use attention mechanisms or SHAP values. Identify key structural alerts contributing to interference prediction.

Protocol 3.2: Algorithm-Specific Hyperparameter Optimization

This protocol details the key hyperparameters to tune for each algorithm within the QSAR pipeline.

Table 2: Core Hyperparameters for Tuning

| Algorithm | Critical Hyperparameters | Recommended Search Range | Optimization Objective |

|---|---|---|---|

| Random Forest | n_estimators, max_depth, min_samples_split, max_features | n_estimators: [100, 500]; max_depth: [5, 30]; min_samples_split: [2, 10] | Maximize Validation AUC-ROC |

| SVM (RBF Kernel) | C (regularization), gamma (kernel coefficient) | C: [1e-3, 1e3] (log scale); gamma: [1e-4, 1e1] (log scale) | Maximize Validation AUC-ROC |

| XGBoost | learning_rate, max_depth, n_estimators, subsample, colsample_bytree | learning_rate: [0.01, 0.3]; max_depth: [3, 10]; n_estimators: [100, 500] | Maximize Validation AUC-ROC |

| Deep Learning (MLP) | Number of layers & units, dropout_rate, learning_rate, batch_size | Layers: [2, 5]; Units: [64, 512]; dropout_rate: [0.1, 0.5] | Minimize Validation Loss |

Procedure:

- Define the hyperparameter space as in Table 2.

- Using the training set, initiate a Bayesian Optimization process (e.g., using scikit-optimize) for 30-50 iterations.

- For each hyperparameter set, perform 5-fold cross-validation. The average validation fold AUC-ROC is the objective score.

- Select the hyperparameter set yielding the highest average validation score.

Visualizations

Diagram 1: QSAR Model Development Workflow

Diagram 2: Algorithm Selection Logic

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Resources for QSAR Model Development in Interference Prediction

| Resource Name | Type | Primary Function in Research | Key Provider/Reference |

|---|---|---|---|

| RDKit | Open-source Cheminformatics Library | Standardizing structures, calculating molecular descriptors/fingerprints. | http://www.rdkit.org |

| PubChem BioAssay | Public Database | Source of labeled chemical screening data for identifying interfering compounds. | NIH / PubChem |

| PAINS & ALARM NMR Filters | Curated Substructure Libraries | Provide rule-based baselines and training data for interference compounds. | Baell & Holloway, 2010; Journal of Medicinal Chemistry |

| Scikit-learn | ML Library in Python | Provides implementations for RF, SVM, and essential data processing tools. | https://scikit-learn.org |

| XGBoost | Optimized Gradient Boosting Library | State-of-the-art tree boosting algorithm for high-performance QSAR. | https://xgboost.ai |

| TensorFlow / PyTorch | Deep Learning Frameworks | Building and training neural network models (e.g., from SMILES strings). | Google / Facebook AI |

| SHAP (SHapley Additive exPlanations) | Model Interpretation Library | Explains output of any ML model, critical for interpreting "black box" models. | https://shap.readthedocs.io |

| Bayesian Optimization (scikit-optimize) | Hyperparameter Tuning Tool | Efficiently searches hyperparameter space to maximize model performance. | https://scikit-optimize.github.io |

Within the broader thesis on developing robust Quantitative Structure-Activity Relationship (QSAR) models for predicting chemical-assay interference, the model training workflow is a critical pillar. Assay interference, where compounds generate false-positive or false-negative signals through non-target mechanisms (e.g., aggregation, fluorescence, reactivity), poses a significant challenge in early drug discovery. A rigorously designed training workflow ensures the developed predictive models are generalizable, reliable, and can effectively flag problematic chemotypes before costly experimental follow-up. This document outlines the detailed protocols and application notes for constructing such a workflow.

Data Collection and Curation Protocol

Objective: Assemble a high-confidence, chemically diverse dataset of compounds labeled for assay interference potential. Source: Data is typically aggregated from public sources (e.g., PubChem BioAssay, ChEMBL) and proprietary high-throughput screening (HTS) campaigns, specifically annotated for interference mechanisms. Curation Steps:

- Compound Standardization: Using RDKit or KNIME, standardize structures: neutralize charges, remove salts, generate canonical tautomers, and check for valency errors.

- Descriptor Calculation: Compute molecular descriptors (e.g., RDKit, MOE descriptors) and fingerprints (ECFP4, MACCS keys).

- Duplicate Removal: Remove exact duplicates based on canonical SMILES. For duplicate structures with conflicting labels, retain the label from the most reliable source.

- Label Definition: Define a binary label (e.g., 1 for confirmed interferent, 0 for non-interferent). For multi-class models (predicting interference type), define categorical labels.

- Chemical Space Analysis: Perform PCA or t-SNE on descriptors to visualize data coverage and identify potential outliers.

Data Splitting Strategy

Data splitting is a critical step for avoiding data leakage and over-optimistic performance estimates. It is especially important for QSAR models, where structurally similar compounds in the training and test sets can artificially inflate apparent predictive ability.

Protocol:

- Rationale: Standard random splitting is inappropriate for chemical data due to structural correlations. Temporal splits (if data is time-stamped) or more robust structure-based splits are required.

- Methodology – Scaffold Split:

- Implement using GroupShuffleSplit in scikit-learn or the Butina clustering method in RDKit.

- Identify molecular scaffolds (Murcko frameworks) for all compounds.

- Split the data such that compounds sharing a core scaffold are contained within the same partition (train/validation/test). This tests the model's ability to generalize to novel chemotypes.

- Split Ratios: A common ratio is 70:15:15 for Train:Validation:Test sets. The validation set is used for hyperparameter tuning, and the test set is held out for a single, final evaluation.
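The grouping logic behind a scaffold split can be illustrated without any cheminformatics dependency. In the sketch below, scaffold strings are assumed to have been computed beforehand (for example as Murcko frameworks with RDKit); the greedy largest-group-first assignment is one common heuristic, not necessarily the procedure used elsewhere in this workflow, and the function name and example data are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def scaffold_split(scaffolds: Sequence[str],
                   frac_train: float = 0.70,
                   frac_valid: float = 0.15) -> Tuple[List[int], List[int], List[int]]:
    """Assign whole scaffold groups to train/validation/test index lists."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Largest groups first; ties broken by scaffold string for determinism.
    ordered = sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))

    n = len(scaffolds)
    train_cap = int(round(frac_train * n))
    valid_cap = int(round(frac_valid * n))

    train: List[int] = []
    valid: List[int] = []
    test: List[int] = []
    for _, members in ordered:
        if len(train) + len(members) <= train_cap:
            train.extend(members)
        elif len(valid) + len(members) <= valid_cap:
            valid.extend(members)
        else:
            test.extend(members)
    return train, valid, test


# Hypothetical scaffold labels for 20 compounds.
scaffolds = ["A"] * 8 + ["B"] * 4 + ["C"] * 3 + ["D"] * 2 + ["E"] * 2 + ["F"]
train_idx, valid_idx, test_idx = scaffold_split(scaffolds)

train_scaffolds = {scaffolds[i] for i in train_idx}
valid_scaffolds = {scaffolds[i] for i in valid_idx}
test_scaffolds = {scaffolds[i] for i in test_idx}
print(len(train_idx), len(valid_idx), len(test_idx))
```

Because groups are never divided, the realized ratios only approximate 70:15:15, and very large scaffold families can skew them noticeably.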

Feature Engineering and Selection

Objective: Create and select the most informative molecular representations to predict interference.

Protocol:

- Feature Generation:

- 2D Descriptors: Calculate a comprehensive set (~200-500 descriptors) using software like RDKit, PaDEL, or MOE (e.g., topological, electronic, hydrophobic descriptors).

- Fingerprints: Generate binary bit vectors (e.g., ECFP4, FP2).

- Feature Preprocessing:

- Remove near-constant features (variance < 0.01).

- Handle missing values (impute with median or drop features).

- Standardize numerical features (StandardScaler) for distance-based algorithms.

- Feature Selection:

- Apply univariate methods (e.g., SelectKBest using mutual information) for initial filtering.

- Use model-based importance (Random Forest or Gradient Boosting feature importance) or recursive feature elimination (RFE).

- Caution: Perform feature selection only on the training fold during cross-validation to prevent data leakage.
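The leakage caution can be made concrete with a dependency-free sketch of the variance filter: the set of retained columns is learned from the training rows only and then applied unchanged to any other partition. The function names and the toy feature matrix are hypothetical; in practice a library transformer fitted inside each cross-validation fold plays the same role.

```python
from statistics import pvariance
from typing import List, Sequence


def fit_variance_filter(train_rows: Sequence[Sequence[float]],
                        threshold: float = 0.01) -> List[int]:
    """Return indices of columns whose training-set variance is at least the threshold."""
    n_features = len(train_rows[0])
    return [
        j for j in range(n_features)
        if pvariance([row[j] for row in train_rows]) >= threshold
    ]


def apply_filter(rows: Sequence[Sequence[float]], keep: List[int]) -> List[List[float]]:
    """Select the previously learned columns; never re-fit on validation or test data."""
    return [[row[j] for j in keep] for row in rows]


# Toy descriptors: column 0 is constant, column 2 is nearly constant in training.
X_train = [
    [1.0, 0.0, 5.000],
    [1.0, 1.0, 5.001],
    [1.0, 0.0, 5.000],
    [1.0, 1.0, 5.001],
]
X_test = [[1.0, 0.5, 9.0]]

kept_columns = fit_variance_filter(X_train)
X_train_sel = apply_filter(X_train, kept_columns)
X_test_sel = apply_filter(X_test, kept_columns)
print(kept_columns, X_test_sel)
```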

Model Training & Algorithm Selection

Objective: Train a suite of candidate algorithms suitable for binary/multi-class classification.

Protocol:

- Candidate Algorithms: Based on current literature, the following are effective for QSAR tasks:

- Tree-Based Ensembles: Random Forest, Gradient Boosting Machines (XGBoost, LightGBM, CatBoost).

- Support Vector Machines (SVM): Effective with kernel tricks for non-linear relationships.

- Neural Networks: Multilayer Perceptrons (MLPs) or Graph Neural Networks (GNNs) for direct structure learning.

- Baseline Model: Always train a simple baseline (e.g., a DummyClassifier that predicts the majority class or guesses at random) to contextualize performance.

- Training: Fit each algorithm on the preprocessed training set. Implement early stopping for iterative algorithms (GBMs, NNs) using the validation set.

Hyperparameter Tuning

Objective: Systematically identify the optimal hyperparameter combination for each algorithm to maximize validation performance.

Protocol:

- Define Search Space: Create a dictionary of hyperparameters and their ranges to explore.

- Example for Random Forest: {'n_estimators': [100, 300, 500], 'max_depth': [10, 30, None], 'min_samples_split': [2, 5]}

- Example for XGBoost: {'learning_rate': [0.01, 0.1], 'max_depth': [3, 6, 9], 'subsample': [0.7, 0.9]}

- Select Tuning Method:

- GridSearchCV: Exhaustive search over all combinations. Computationally expensive but thorough for small spaces.

- RandomizedSearchCV: Samples a fixed number of parameter settings from specified distributions. More efficient for large search spaces.

- Bayesian Optimization: Uses probabilistic models to direct the search to promising hyperparameters (e.g., scikit-optimize, Optuna).

- Implementation:

- Use scikit-learn's GridSearchCV or RandomizedSearchCV.

- Critical: The cross-validation within the tuning process must respect the initial data splitting strategy (e.g., scaffold-based GroupKFold). The validation set can serve as a hold-out for final selection.

Table 1: Example Hyperparameter Search Space & Optimal Results for an Assay Interference Model

| Algorithm | Key Hyperparameters Tested | Optimal Configuration (Found) | Validation Metric (BA) |

|---|---|---|---|

| Random Forest | n_estimators: [100,500]; max_depth: [5,15,None]; min_samples_leaf: [1,3] | n_estimators=500, max_depth=15, min_samples_leaf=3 | 0.82 |

| XGBoost | learning_rate: [0.01,0.1]; max_depth: [3,6]; colsample_bytree: [0.7,1.0] | learning_rate=0.05, max_depth=6, colsample_bytree=0.8 | 0.85 |

| SVM (RBF) | C: [0.1, 1, 10]; gamma: ['scale', 'auto', 0.01] | C=10, gamma=0.01 | 0.79 |

BA = Balanced Accuracy

Model Evaluation and Validation

Objective: Assess the final tuned model's performance on the completely held-out test set.

Protocol:

- Final Training: Retrain the model with the optimal hyperparameters on the combined training and validation data.

- Test Set Evaluation: Generate predictions on the untouched test set.

- Metrics: Report a suite of metrics suitable for potentially imbalanced datasets:

- Primary: Balanced Accuracy, Matthews Correlation Coefficient (MCC), Area Under the ROC Curve (AUC-ROC).

- Supporting: Precision, Recall, F1-score (for the interferent class), Confusion Matrix.

- External Validation: If available, test on an external dataset from a different source or assay technology to stress-test generalizability.
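The primary and supporting metrics listed above all derive from the four confusion-matrix counts, as the following sketch shows. The function name and the example counts are hypothetical; returning 0.0 when a denominator is zero is a convention chosen here for illustration.

```python
import math
from typing import Dict


def classification_metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Compute imbalance-aware metrics for the interferent (positive) class."""
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    mcc_denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / mcc_denominator if mcc_denominator else 0.0
    return {
        "balanced_accuracy": (recall + specificity) / 2,
        "mcc": mcc,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Hypothetical test set: 100 interferents, 400 clean compounds.
model = classification_metrics(tp=80, fp=25, tn=375, fn=20)

# A classifier that always predicts "clean" reaches 80% plain accuracy here,
# yet its balanced accuracy and MCC expose that it has no skill.
always_clean = classification_metrics(tp=0, fp=0, tn=400, fn=100)

print({name: round(value, 3) for name, value in model.items()})
print({name: round(value, 3) for name, value in always_clean.items()})
```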

Table 2: Model Performance Comparison on Assay Interference Test Set

| Model | Balanced Accuracy | MCC | AUC-ROC | Precision (Class 1) | Recall (Class 1) |

|---|---|---|---|---|---|

| Baseline (Dummy) | 0.50 | 0.00 | 0.50 | 0.19 | 0.50 |

| Random Forest (Tuned) | 0.80 | 0.55 | 0.87 | 0.75 | 0.76 |

| XGBoost (Tuned) | 0.83 | 0.60 | 0.90 | 0.78 | 0.80 |

| SVM (RBF, Tuned) | 0.78 | 0.52 | 0.85 | 0.72 | 0.75 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for QSAR Model Development Workflow

| Item | Function in Workflow |

|---|---|

| RDKit | Open-source cheminformatics toolkit for molecule standardization, descriptor calculation, fingerprint generation, and scaffold analysis. |

| scikit-learn | Primary Python library for data splitting, preprocessing, model training, hyperparameter tuning, and evaluation. |

| XGBoost/LightGBM | Optimized gradient boosting libraries providing state-of-the-art tree ensemble models with high performance. |

| Optuna | Hyperparameter optimization framework enabling efficient Bayesian search for optimal model configurations. |

| KNIME or Pipeline Pilot | Visual workflow platforms for designing, documenting, and executing reproducible data preprocessing and model training pipelines. |

| Molport or Enamine REAL Database | Commercial sources for purchasing physical compounds predicted to be non-interfering for downstream experimental validation. |

| Cytoscape | Network visualization tool for analyzing model interpretations, such as feature importance networks or compound cluster relationships. |

Workflow Diagrams

Within the broader thesis research on Quantitative Structure-Activity Relationship (QSAR) models for predicting chemical-assay interference, this document details their practical application in early-stage drug discovery. Assay interference, where compounds generate false-positive signals via mechanisms like aggregation, reactivity, or fluorescence, remains a major source of attrition and wasted resources. The integration of predictive QSAR models into the virtual screening (VS) and compound prioritization pipeline is a critical strategy to de-risk biological screening campaigns, enhance hit quality, and accelerate the identification of true bioactive leads.

Application Notes: Model-Guided Triage Strategy

The core application involves a multi-filter triage system applied to virtual compound libraries prior to experimental screening. This sequential workflow prioritizes compounds with a high likelihood of being genuine modulators of the biological target while deprioritizing those predicted to be frequent interferers.

Table 1: Key Predictive Models for Compound Triage

| Model Type | Primary Prediction | Typical Descriptors/Features | Application Point in Pipeline | Goal |
|---|---|---|---|---|
| Target-Specific QSAR/Docking | Bioactivity against primary target | 2D/3D molecular fingerprints, pharmacophores, docking scores | Primary Virtual Screening | Enrich library with putative actives. |
| Aggregation Propensity | Likelihood to form colloidal aggregates | LogP, topological polar surface area, number of rotatable bonds | Post-Docking Prioritization | Filter out promiscuous inhibitors. |
| PAINS (Pan-Assay INterference compounds) Filter | Presence of substructures known to react or interfere | SMARTS patterns for >400 problematic substructures | Initial Library Curation & Post-Docking | Remove compounds with known reactive/flagged motifs. |
| Assay Interference QSAR (Thesis Focus) | Probability of interference in specific assay formats (e.g., fluorescence quenching, luciferase inhibition) | Electrotopological state, charge descriptors, calculated spectral properties | Assay-Specific Prioritization | Rank-order compounds for testing in a given assay to minimize false positives. |
| ADMET Profiling | Predicted permeability, metabolic stability, toxicity | Molecular weight, H-bond donors/acceptors, similarity to toxicophores | Final Lead Selection | Prioritize compounds with favorable drug-like properties. |

Detailed Experimental Protocols

Protocol 3.1: Integrated Virtual Screening and Interference-Aware Prioritization

Objective: To computationally screen a multi-million compound library and generate a prioritized list of 500 compounds for experimental testing, enriched for target actives and depleted in assay interferers.

Materials & Software:

- Compound library (e.g., ZINC20, Enamine REAL, in-house collection) in SDF or SMILES format.

- High-performance computing cluster or cloud instance.

- Docking software (e.g., AutoDock Vina, Glide, GOLD).

- KNIME Analytics Platform or Python/R scripting environment.

- Validated QSAR models for target activity and assay interference.

Procedure:

Library Preparation:

- Standardize all structures: neutralize charges, remove duplicates, generate tautomers.

- Apply Rule-based Filters: Remove compounds violating Lipinski's Rule of Five or containing PAINS substructures (using publicly available SMARTS lists).

- Output: A cleaned library of ~1.5 million compounds.

Target-Focused Virtual Screening:

- Prepare the protein target structure (e.g., crystal structure PDB ID).

- Define the binding site grid.

- Perform high-throughput molecular docking for the entire cleaned library.

- Prioritization 1: Rank compounds by docking score. Retain the top 50,000.

QSAR-Based Interference Prediction & Triage:

- For the top 50,000 compounds, calculate molecular descriptors (e.g., using RDKit, Mordred).

- Apply the thesis-developed assay interference QSAR model. Each compound receives a probability score (P(interfere)) for the specific assay technology planned (e.g., fluorescence polarization).

- Apply the aggregation propensity model.

- Prioritization 2: Generate a composite score:

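Composite Score = (Docking Score Norm) - w1*(P(interfere)) - w2*(Aggregation Score), where Docking Score Norm is the docking score normalized so that higher values indicate better predicted binding, and w1 and w2 are weighting factors determined by model validation.

- Re-rank the 50,000 compounds by this composite score.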
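
The composite score can be sketched in Python as follows. This is a minimal illustration, not the thesis implementation: it assumes docking scores follow the "more negative is better" convention and are min-max scaled to the 0 to 1 range after negation, and that the aggregation score is also on a 0 to 1 scale. The function names, the example values, and the weights w1 = w2 = 0.5 are hypothetical placeholders.

```python
def min_max(values):
    """Scale a list of numbers to [0, 1]; a constant list maps to 0.5."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.5] * len(values)
    return [(v - lo) / (hi - lo) for v in values]


def composite_scores(docking, p_interfere, aggregation, w1=0.5, w2=0.5):
    """Composite = normalized docking - w1 * P(interfere) - w2 * aggregation."""
    # Assumption: more negative docking score = better pose, so negate first
    # so that the best-docked compound gets a normalized score of 1.
    dock_norm = min_max([-d for d in docking])
    return [
        dn - w1 * p - w2 * a
        for dn, p, a in zip(dock_norm, p_interfere, aggregation)
    ]


# Hypothetical values for three compounds (not real data).
docking = [-10.0, -8.0, -6.0]
p_interfere = [0.9, 0.1, 0.2]
aggregation = [0.8, 0.1, 0.0]

scores = composite_scores(docking, p_interfere, aggregation)
# Indices of compounds sorted from best to worst composite score.
ranking = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
print(ranking)
```

In this toy case the best-docked compound falls to second place because of its high interference and aggregation scores, which is the behavior the re-ranking step is meant to produce.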

Final Selection & Diversity Analysis:

- Select the top 2,000 compounds from the re-ranked list.

- Perform maximum diversity selection (e.g., using Tanimoto similarity on Morgan fingerprints) to choose the final 500 compounds for procurement and testing.

- Output: A final list of 500 prioritized compounds with associated scores for docking, interference probability, and aggregation.
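
The protocol does not name a specific diversity algorithm; one common choice is greedy MaxMin picking. The sketch below assumes each fingerprint is available as a set of on-bit indices (for example, exported from Morgan fingerprints) and uses 1 - Tanimoto similarity as the distance. The function names and toy fingerprints are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of on-bit indices."""
    shared = len(a & b)
    union = len(a) + len(b) - shared
    # Two empty fingerprints are treated as dissimilar here (a convention).
    return shared / union if union else 0.0


def maxmin_select(fingerprints, n_pick, first=0):
    """Greedy MaxMin: repeatedly add the compound whose nearest already
    picked neighbour is farthest away (distance = 1 - Tanimoto)."""
    picked = [first]
    # Distance from every compound to its closest picked compound.
    min_dist = [1.0 - tanimoto(fp, fingerprints[first]) for fp in fingerprints]
    n_pick = min(n_pick, len(fingerprints))
    while len(picked) < n_pick:
        candidates = [i for i in range(len(fingerprints)) if i not in picked]
        nxt = max(candidates, key=lambda i: min_dist[i])
        picked.append(nxt)
        for i, fp in enumerate(fingerprints):
            min_dist[i] = min(min_dist[i], 1.0 - tanimoto(fp, fingerprints[nxt]))
    return picked


# Toy fingerprints (hypothetical): compounds 0, 1 and 3 are close analogues.
fps = [{1, 2, 3}, {1, 2, 3, 4}, {10, 11, 12}, {1, 2, 4}, {20, 21}]
selection = maxmin_select(fps, n_pick=3)
print(selection)
```

Starting from the top-ranked compound (index 0), the selection skips the two close analogues and picks the structurally unrelated compounds instead. For 2,000 candidates and 500 picks this quadratic loop is still cheap.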

Protocol 3.2: Experimental Validation of Model Predictions

Objective: To experimentally confirm that compounds flagged as high-interference probability by the QSAR model indeed generate false-positive signals in the target assay.

Materials:

- Test Compounds: 20 compounds predicted as high-interference (P(interfere) > 0.8), 20 compounds predicted as low-interference (P(interfere) < 0.2).

- Assay Reagents: (See Scientist's Toolkit below).

- Control Compounds: Known agonist/antagonist for the target, known interferer (e.g., aggregator like curcumin).

Procedure:

- Perform the primary high-throughput screening (HTS) assay at a single concentration (e.g., 10 µM) for all 40 test compounds.

- Identify "hits" showing >50% inhibition/activation.

- For all hits, perform a counter-screen in the absence of the critical assay component (e.g., no enzyme, no substrate). A compound active in the counter-screen is a confirmed interferer.

- For hits passing the counter-screen, perform a concentration-response curve (CRC) in the primary assay. Compounds with non-sigmoidal or unstable CRCs are suggestive of interference.

- Correlate Results: Calculate the positive predictive value (PPV) of the interference model:

PPV = (True Positives) / (All Predicted Positives).
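
As a worked example of the PPV calculation, the sketch below treats the 20 compounds with P(interfere) > 0.8 as predicted positives and counts how many were confirmed as interferers by the counter-screen. The outcome counts are hypothetical and serve only to illustrate the arithmetic.

```python
def positive_predictive_value(predicted, confirmed):
    """PPV = true positives / all predicted positives."""
    predicted_pos = sum(predicted)
    if predicted_pos == 0:
        return float("nan")
    true_pos = sum(1 for p, c in zip(predicted, confirmed) if p and c)
    return true_pos / predicted_pos


# 20 compounds predicted high-interference, 20 predicted low-interference.
predicted = [True] * 20 + [False] * 20
# Hypothetical experimental outcome (illustrative, not measured data):
# 15 of the flagged and 2 of the unflagged compounds are confirmed interferers.
confirmed = [True] * 15 + [False] * 5 + [True] * 2 + [False] * 18

ppv = positive_predictive_value(predicted, confirmed)
print(f"PPV = {ppv:.2f}")
```

Here 15 of the 20 flagged compounds are confirmed, giving a PPV of 0.75.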

Visualization of Workflows and Relationships

Figure: Virtual Screening & Model-Based Triage Pipeline

Figure: QSAR Model Role in Discovery Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Validation Assays

| Item | Function/Explanation | Example Vendor/Product |
|---|---|---|
| Recombinant Target Protein | The purified biological target for primary screening. Essential for biochemical assays. | Sino Biological, R&D Systems, in-house expression. |
| Fluorescent/Luminescent Probe | Generates the detectable signal in HTS assays. Interference models often target these technologies. | ATP-Glo (Luciferase), Fluorogenic peptide substrate. |
| Detergent (e.g., Triton X-100) | Used at low concentration (e.g., 0.01%) in assay buffers to mitigate compound aggregation. | Sigma-Aldrich. |
| Reference Aggregator | Positive control for aggregation interference (e.g., Curcumin, Congo Red). | Tocris, Sigma-Aldrich. |
| AlphaScreen/ALPHA beads | For bead-based assays; compounds interfering with bead proximity cause false signals. | PerkinElmer. |
| Chelating Agents (EDTA) | Controls for interference from metal ion contamination in compounds or buffers. | Sigma-Aldrich. |
| High-Quality DMSO | Universal compound solvent for screening. Lot-to-lot consistency is critical for reproducibility. | Hybri-Max (Sigma-Aldrich). |
| 384-Well Assay Plates | Standard format for HTS. Low background fluorescence/adsorption is key. | Corning, Greiner Bio-One. |
| Plate Reader | Detects optical signals (fluorescence, luminescence, absorbance). Requires precision at low volumes. | PHERAstar (BMG Labtech), EnVision (PerkinElmer). |

Navigating Pitfalls: Troubleshooting and Enhancing QSAR Model Performance

The development of robust Quantitative Structure-Activity Relationship (QSAR) models for predicting chemical-assay interference is critical in early drug discovery. Poor model performance often stems from three interconnected issues: severe class imbalance, inherent dataset bias, and applicability domain violations. This document provides detailed application notes and experimental protocols to diagnose and remediate these challenges.

Core Concepts & Data Landscape

Prevalence and Impact of Class Imbalance

Class imbalance is pervasive in interference datasets, as most compounds are not promiscuous interferents.

Table 1: Reported Class Distribution in Public Assay Interference Datasets

| Dataset (Source) | Total Compounds | Interferent Class (%) | Non-Interferent Class (%) | Imbalance Ratio (Interferent:Non-Interferent) |
|---|---|---|---|---|
| PubChem Bioassay (Aggregated) | 456,782 | 1.8% | 98.2% | 1:55 |
| Pan-Assay Interference (PAINS) Alerts | 12,340 | 4.1% | 95.9% | 1:23 |
| Merck Aggregator Database | 8,911 | 2.5% | 97.5% | 1:39 |
| HTS Interference Library (MLSMR) | 32,144 | 3.7% | 96.3% | 1:26 |

Quantifying Dataset Bias

Bias arises from non-representative chemical space sampling. Common metrics include:

Table 2: Metrics for Quantifying Structural and Assay-Type Bias

| Bias Type | Measurement Metric | Typical Problematic Threshold | Remediation Target |
|---|---|---|---|
| Structural (Scaffold) | Murcko Scaffold Diversity (Unique Scaffolds/Total Compounds) | < 0.15 | > 0.30 |
| Assay-Type Over-representation | Max % of Compounds from Single Assay Type (e.g., fluorescence) | > 40% | < 20% |
| Property Clustering | Normalized Mean Pairwise Tanimoto Similarity (within class) | > 0.65 | < 0.45 |
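
The first two metrics in the table reduce to simple counting once each record carries a scaffold string and an assay-type label. The sketch below assumes the Murcko scaffolds have already been computed elsewhere (for example, with a cheminformatics toolkit) and are supplied as SMILES strings. The function names and the ten toy records are hypothetical, and the pairwise-similarity metric is left out.

```python
from collections import Counter


def scaffold_diversity(scaffolds):
    """Unique scaffolds divided by total compounds."""
    return len(set(scaffolds)) / len(scaffolds)


def max_assay_type_share(assay_types):
    """Fraction of records contributed by the most common assay type."""
    counts = Counter(assay_types)
    return max(counts.values()) / len(assay_types)


# Ten hypothetical records: precomputed scaffold SMILES and assay labels.
scaffolds = [
    "c1ccccc1", "c1ccccc1", "c1ccncc1", "c1ccccc1", "C1CCCCC1",
    "c1ccccc1", "c1ccncc1", "c1ccccc1", "c1ccccc1", "c1ccccc1",
]
assay_types = ["fluorescence"] * 6 + ["luminescence"] * 3 + ["absorbance"]

diversity = scaffold_diversity(scaffolds)
share = max_assay_type_share(assay_types)

# Thresholds taken from the table: < 0.15 and > 40% are problematic.
print(f"scaffold diversity = {diversity:.2f}, problematic: {diversity < 0.15}")
print(f"max assay-type share = {share:.0%}, problematic: {share > 0.40}")
```

With three unique scaffolds in ten records the scaffold diversity is 0.30, while the 60% share of fluorescence records exceeds the 40% threshold and would call for re-sampling.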

Experimental Protocols for Diagnosis and Remediation

Protocol 3.1: Comprehensive Performance Diagnosis Workflow

Title: Holistic QSAR Model Interference Prediction Diagnosis

Objective: Systematically identify the sources of model performance degradation.

Materials: Validated interference dataset, cheminformatics toolkit (e.g., RDKit, KNIME), model evaluation suite.

Procedure:

- Baseline Performance: Train a standard model (e.g., Random Forest) using 5-fold cross-validation. Record Accuracy, Precision, Recall, F1-score, and MCC.

- Class Imbalance Impact: a. Calculate class distribution. b. Plot Precision-Recall curve and compute Area Under the PR Curve (AUPRC). Compare to AUC-ROC. c. Apply balanced class weighting during model training. Re-evaluate metrics.

- Dataset Bias Audit: a. Perform Bias-Weighted Validation: Split data by assay type or source lab. Train on one subset, test on the others. b. Compare the distributions of key properties (e.g., molecular weight, logP) between assay subsets using the two-sample Kolmogorov-Smirnov test.

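- Applicability Domain (AD) Analysis: a. Use leverage (hat matrix diagonal) and distance-based methods (e.g., Euclidean distance in PCA space) to define the AD. b. Flag predictions for compounds that exceed the leverage or distance threshold; for compounds with known outcomes, also flag response outliers (standardized residual > 3). c. Quantify the percentage of the test set outside the AD and the error rate within that subset.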

- Integrated Report: Generate a diagnostic table linking performance drops to specific issues.

Expected Output: A ranked list of performance issues with quantitative evidence (e.g., "Recall drop of 40% attributable to class imbalance; bias contributes 15% error in fluorescence assays").
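
To see why accuracy alone is uninformative at the class ratios reported above, the sketch below computes the metrics from step 1 directly from confusion-matrix counts. The two confusion matrices are hypothetical: a trivial classifier that labels every compound as non-interferent, and a modest model, both on 10,000 compounds with 1.8% interferents.

```python
import math


def classification_metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall, F1 and MCC from confusion-matrix counts."""
    total = tp + fp + fn + tn
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # MCC is set to 0 when a row or column of the matrix is empty.
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "mcc": mcc,
    }


# 10,000 compounds at 1.8% interferents gives 180 positives (hypothetical set).
all_negative = classification_metrics(tp=0, fp=0, fn=180, tn=9820)
modest_model = classification_metrics(tp=90, fp=60, fn=90, tn=9760)

for name, m in [("all-negative", all_negative), ("modest model", modest_model)]:
    print(name, {k: round(v, 3) for k, v in m.items()})
```

The all-negative classifier reaches 98.2% accuracy while finding no interferents at all (recall and MCC of 0), which is why the protocol records MCC and the precision-recall curve alongside accuracy.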

Protocol 3.2: Strategic Data Rebalancing and Augmentation

Title: SMOTE-ENN Hybrid Rebalancing for Interference Datasets

Objective: Mitigate class imbalance while cleaning overlapping data regions.

Materials: Imbalanced dataset, imbalanced-learn Python library, molecular descriptor set.

Procedure:

- Descriptor Calculation: Compute a relevant molecular descriptor set (e.g., ECFP6 fingerprints, RDKit 2D descriptors).

- Hybrid Re-sampling: a. Apply Synthetic Minority Over-sampling Technique (SMOTE) with k-neighbors=5 to generate synthetic interferent compounds. b. Follow with Edited Nearest Neighbors (ENN) to remove any synthetic or real samples from either class that are misclassified by their three nearest neighbors.

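- Validation: SMOTE interpolates in descriptor space, so synthetic samples are feature vectors rather than chemical structures. Check that they remain plausible, for example that every descriptor value stays within the range observed for real interferents. Note that interpolating binary fingerprints yields non-binary values.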

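- Model Retraining: Train the model on the rebalanced dataset using stratified cross-validation. Apply re-sampling only within each training fold so that validation folds keep the original class distribution.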
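
The two re-sampling steps can be illustrated with a from-scratch NumPy sketch. This is not the imbalanced-learn implementation named in the materials; it is a simplified version that assumes continuous descriptors, 0/1 labels, and a majority vote among the three nearest neighbours for the ENN step. The function names and the toy data are hypothetical, and in practice the re-sampling would be applied to training folds only.

```python
import numpy as np


def smote(X_min, n_new, k=5, seed=0):
    """Create n_new synthetic minority samples by interpolating between
    each chosen sample and one of its k nearest minority neighbours."""
    rng = np.random.default_rng(seed)
    dist = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=2)
    np.fill_diagonal(dist, np.inf)           # a sample is not its own neighbour
    k = min(k, len(X_min) - 1)
    neighbours = np.argsort(dist, axis=1)[:, :k]
    base = rng.integers(0, len(X_min), size=n_new)
    partner = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))             # position along the segment
    return X_min[base] + gap * (X_min[partner] - X_min[base])


def enn(X, y, k=3):
    """Drop every sample whose label disagrees with the majority vote of
    its k nearest neighbours (labels must be 0/1)."""
    dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    np.fill_diagonal(dist, np.inf)
    neighbours = np.argsort(dist, axis=1)[:, :k]
    vote = (y[neighbours].mean(axis=1) > 0.5).astype(int)
    keep = vote == y
    return X[keep], y[keep]


# Toy training fold: 100 non-interferents (0) and 10 interferents (1).
rng = np.random.default_rng(42)
X_major = rng.normal(0.0, 0.5, size=(100, 2))
X_minor = rng.normal(5.0, 0.5, size=(10, 2))

synthetic = smote(X_minor, n_new=90, k=5, seed=1)
X_bal = np.vstack([X_major, X_minor, synthetic])
y_bal = np.array([0] * 100 + [1] * 100)
X_res, y_res = enn(X_bal, y_bal, k=3)
print(np.bincount(y_res))
```

Because each synthetic point lies on a segment between two real interferents, it cannot leave the descriptor range of the minority class, which gives a simple plausibility check on the augmented data.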

Protocol 3.3: Bias-Reduced Dataset Curation

Title: Assay-Type Stratified Sampling for Bias Mitigation

Objective: Create a chemically diverse and assay-representative training set.

Materials: Raw aggregated data from multiple assay types (e.g., fluorescence, absorbance, luminescence, NMR).

Procedure:

- Categorization: Label each compound record by its primary assay interference detection method.

- Stratified Sampling: a. For the majority (non-interferent) class, perform max-min sampling: Select compounds to maximize minimum Tanimoto distance to already selected compounds, within each assay type. b. For the minority (interferent) class, include all available compounds. c. Cap contribution from any single assay type to 20% of the total training set.

- External Validation Set: Hold out complete assay types not seen during training (e.g., use all AlphaScreen assay data for final testing only).
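
The external validation step amounts to a group-wise split on the assay-type label. A minimal sketch follows, assuming each record is a dictionary with an `assay_type` key. The record layout, compound identifiers, and function name are hypothetical.

```python
def hold_out_assay_type(records, held_out_type):
    """Split records so that one assay type is reserved for final testing."""
    train = [r for r in records if r["assay_type"] != held_out_type]
    external = [r for r in records if r["assay_type"] == held_out_type]
    return train, external


# Hypothetical records; only the assay_type field drives the split.
records = [
    {"id": "cpd1", "assay_type": "fluorescence", "interferent": 1},
    {"id": "cpd2", "assay_type": "luminescence", "interferent": 0},
    {"id": "cpd3", "assay_type": "AlphaScreen", "interferent": 1},
    {"id": "cpd4", "assay_type": "absorbance", "interferent": 0},
    {"id": "cpd5", "assay_type": "AlphaScreen", "interferent": 0},
]

train, external = hold_out_assay_type(records, "AlphaScreen")
print(len(train), len(external))
```

Splitting on the assay-type label rather than at random keeps every AlphaScreen record out of training, so the external set measures how well the model transfers to an unseen detection technology.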

Protocol 3.4: Applicability Domain Definition and Model Guarding

Title: Consensus Applicability Domain for Interference Prediction

Objective: Define a reliable AD to flag low-confidence predictions.

Materials: Training set descriptors, PCA software, domain definition criteria.

Procedure:

- Descriptor Space Reduction: Perform PCA on the training set descriptors. Retain the smallest number of PCs whose cumulative explained variance exceeds 95%.

- Multi-Method AD Definition: a. Range Method: For the first 3 PCs, define the AD as mean ± 3σ of the training set scores. b. Leverage Method: Calculate the leverage threshold, h* = 3p/n, where p is the number of model parameters and n is the training set size. c. Distance Method: Calculate each training compound's Euclidean distance to the training set centroid in PC space; threshold = mean distance + 3*std.

- Consensus Rule: A compound is inside AD only if it satisfies at least 2 of the 3 criteria above.

- Implementation: Integrate the AD check as a pre-prediction filter in the deployment pipeline.
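
The consensus rule can be sketched with NumPy as shown below. Several details are assumptions rather than part of the protocol: PCA is done via SVD of the centred descriptor matrix, p is taken as the number of retained PCs plus one for an intercept, and the distance is measured to the training set centroid. The class name and the random training data are hypothetical.

```python
import numpy as np


class ConsensusAD:
    """Applicability domain: inside if at least 2 of 3 criteria are met."""

    def __init__(self, X_train, var_target=0.95):
        X_train = np.asarray(X_train, dtype=float)
        self.n = X_train.shape[0]
        self.mean = X_train.mean(axis=0)
        Xc = X_train - self.mean
        # PCA via SVD; keep the fewest PCs whose cumulative variance
        # exceeds var_target.
        _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
        cum = np.cumsum(S**2) / np.sum(S**2)
        k = min(int(np.searchsorted(cum, var_target, side="right")) + 1, len(S))
        self.components = Vt[:k]
        T = Xc @ self.components.T            # training scores (mean zero)
        # Range method: +/- 3 sigma on (up to) the first three PCs.
        self.m = min(3, k)
        self.range_limit = 3.0 * T[:, :self.m].std(axis=0)
        # Leverage method: h* = 3p/n with p = k + 1 (assumed intercept).
        self.gram_inv = np.linalg.inv(T.T @ T)
        self.h_star = 3.0 * (k + 1) / self.n
        # Distance method: distance to centroid, mean + 3 * std.
        d = np.linalg.norm(T, axis=1)
        self.d_max = float(d.mean() + 3.0 * d.std())

    def inside(self, x):
        t = (np.asarray(x, dtype=float) - self.mean) @ self.components.T
        in_range = bool(np.all(np.abs(t[:self.m]) <= self.range_limit))
        leverage = 1.0 / self.n + float(t @ self.gram_inv @ t)
        in_leverage = leverage <= self.h_star
        in_distance = float(np.linalg.norm(t)) <= self.d_max
        return bool(int(in_range) + int(in_leverage) + int(in_distance) >= 2)


# Hypothetical training descriptors: 200 compounds, 5 descriptors.
rng = np.random.default_rng(0)
X_train = rng.normal(size=(200, 5))
ad = ConsensusAD(X_train)

centroid = X_train.mean(axis=0)
print(ad.inside(centroid))          # a compound at the training centroid
print(ad.inside(centroid + 10.0))   # a compound far from all training data
```

Used as a pre-prediction filter, `inside` would be called on each new compound's descriptor vector, and predictions for compounds that fail the check would be reported as low-confidence rather than silently returned.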

Visualization of Key Concepts and Workflows

Figure: Integrated Remediation Workflow for Reliable QSAR

Figure: Applicability Domain Decision Filter

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Resources for Interference QSAR Research

| Item / Resource | Function / Purpose | Example / Source |
|---|---|---|
| Curated Benchmark Datasets | Provides standardized, annotated data for model training and comparison. | PubChem Bioassay, ChEMBL Aggregator Dataset, MLSMR HTS Interference Library. |
| Cheminformatics Suites | Calculates molecular descriptors, fingerprints, and performs essential preprocessing. | RDKit (Open Source), KNIME with Cheminformatics Extensions, Schrödinger Canvas. |
| Imbalance Correction Libraries | Implements algorithmic re-sampling techniques (SMOTE, ADASYN, etc.). | Python: imbalanced-learn; R: SMOTE in DMwR package. |