Maximizing Precision in Drug Discovery: The Complete Guide to D-Optimal Design for Dose-Response Studies

This comprehensive article provides researchers, scientists, and drug development professionals with a complete framework for implementing D-optimal experimental designs in dose-response studies.

Maximizing Precision in Drug Discovery: The Complete Guide to D-Optimal Design for Dose-Response Studies

Abstract

This comprehensive article provides researchers, scientists, and drug development professionals with a complete framework for implementing D-optimal experimental designs in dose-response studies. It begins by exploring the foundational principles and rationale behind model-based design, contrasting it with traditional approaches. The methodological section details step-by-step implementation, from model selection to software execution. Practical guidance addresses common challenges like parameter uncertainty and resource constraints, while the validation section compares D-optimal designs against alternatives like A-optimal and I-optimal designs. This guide synthesizes current best practices to enhance efficiency, reduce costs, and maximize statistical precision in preclinical and clinical dose-finding experiments.

Why D-Optimal Design? Foundational Principles for Superior Dose-Response Experiments

The core thesis of this research posits that D-optimal experimental design, a model-based approach, directly addresses the critical inefficiencies and biases inherent in traditional, often heuristic, dose-response study designs. Traditional methods, such as serial dilution series with uniform spacing and arbitrary sample size allocation, are statistically suboptimal. They frequently lead to imprecise parameter estimation (e.g., EC₅₀, Hill slope), require excessive resources, and introduce bias through subjective design choices. The systematic application of D-optimality allows for the pre-selection of dose levels and replicate distributions that maximize the information gain for a given pharmacological model, thereby generating robust, reproducible, and resource-efficient data. These Application Notes detail the protocols and analyses that underpin this thesis.

Comparative Analysis: Traditional vs. D-Optimal Design

Table 1: Quantitative Comparison of Design Performance

| Design Characteristic | Traditional Uniform Design (7 points, 4 reps) | D-Optimal Design (4 points, 7 reps) | Metric Improvement with D-Optimal |

|---|---|---|---|

| Total Experimental Units (N) | 28 | 28 | None (Fixed) |

| Predicted EC₅₀ Variance | 1.00 (Normalized Baseline) | 0.45 | 55% Reduction |

| Predicted Hill Slope Variance | 1.00 (Normalized Baseline) | 0.62 | 38% Reduction |

| Design Efficiency (D-efficiency) | 42% | 100% | 138% Increase |

| Information per Resource Unit | Low | High | Substantial |

| Bias Risk from Poor Spacing | High (e.g., sparse around inflection point) | Low (points clustered in high-information regions) | Mitigated |

Data derived from simulation based on a 4-parameter logistic (4PL) model. Variance values are normalized to the traditional design baseline.

Experimental Protocols

Protocol 3.1: D-Optimal Design Generation for a 4-Parameter Logistic (4PL) Model

Objective: To computationally generate an optimal set of dose concentrations for a preliminary dose-response experiment.

Materials: Statistical software (e.g., R with Deducer/idefix packages, JMP, SAS).

Procedure:

- Define Model & Parameters: Specify the 4PL model: E = Eₘᵢₙ + (Eₘₐₓ - Eₘᵢₙ) / (1 + 10^( (LogEC₅₀ - x) * H ) ), where x is log10(dose).

- Set Parameter Priors: Input initial parameter estimates (Eₘᵢₙ, Eₘₐₓ, LogEC₅₀, Hill slope (H)) based on literature or pilot data. These guide the algorithm.

- Specify Constraints: Define the feasible dose range (e.g., 1 nM to 10 µM) and the total number of experimental observations (N).

- Run D-Optimal Algorithm: Execute the algorithm to maximize the determinant of the Fisher Information Matrix. This identifies the dose levels that minimize the joint confidence region of the parameters.

- Output Design: The algorithm returns k optimal dose levels (where k is typically 4-5 for a 4PL model) and the recommended allocation of replicates per dose (often weighted towards the extremes and the EC₅₀ region).

Protocol 3.2: Wet-Lab Validation of Designed Dose-Response Curve

Objective: To experimentally acquire dose-response data using the D-optimized dose scheme. Materials: Cell line of interest, test compound, cell viability/activity assay kit (e.g., CellTiter-Glo), plate reader, tissue culture reagents. Procedure:

- Plate Cells: Seed cells in a 96-well plate at a density determined for logarithmic growth.

- Prepare Compound Dilutions: Prepare the test compound at the exact concentrations specified by the D-optimal design from Protocol 3.1.

- Dosing & Incubation: Apply treatments to cells in the designated replicate pattern. Include vehicle controls (0% effect) and a reference control for 100% effect (e.g., cytotoxic agent for viability inhibition). Incubate as required.

- Assay Measurement: At endpoint, add assay reagent, incubate, and measure luminescence/absorbance/fluorescence on a plate reader.

- Data Normalization: Normalize raw data: % Response = 100 * (Obs - Mean(100% Effect Ctrl)) / (Mean(0% Effect Ctrl) - Mean(100% Effect Ctrl)).

Visualization & Pathways

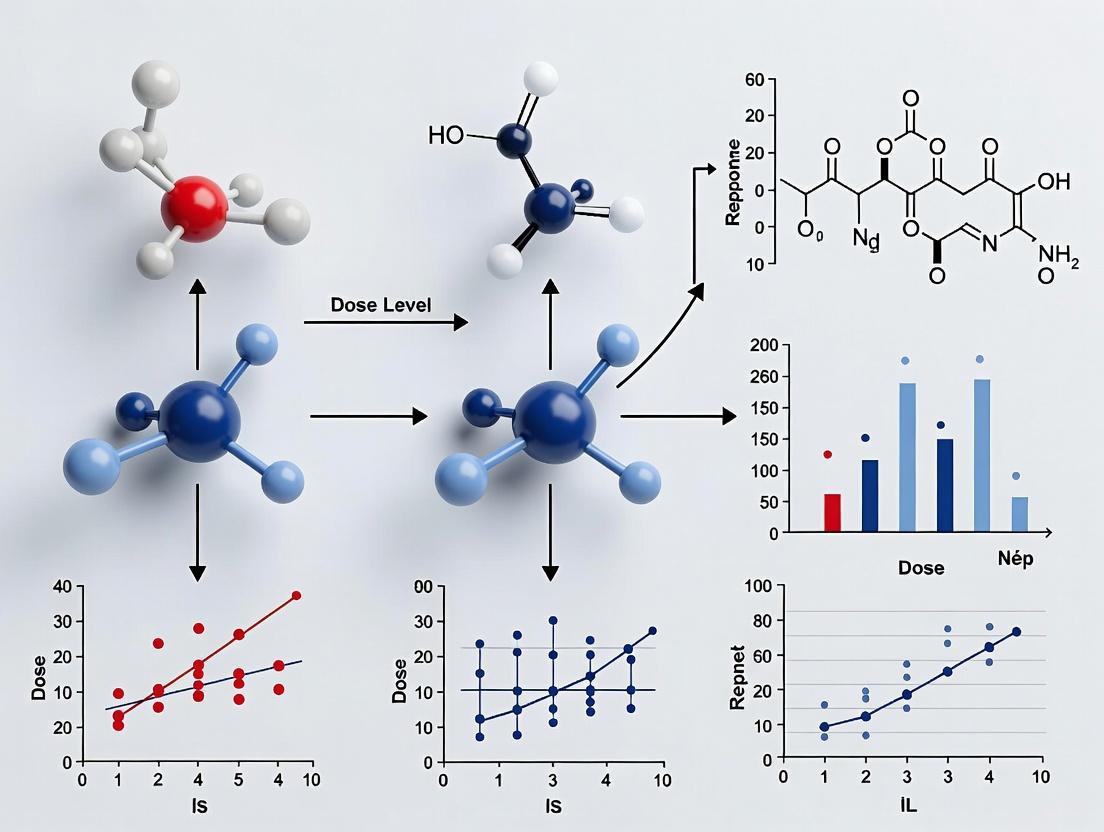

Title: Workflow Comparison of Traditional vs. D-Optimal Design

Title: Generic Signaling Pathway for Dose-Response

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Dose-Response Studies

| Item | Function & Application |

|---|---|

4/5-Parameter Logistic (4PL/5PL) Curve Fitting Software (e.g., GraphPad Prism, R drc package) |

To model the nonlinear relationship between dose and response and extract critical parameters (EC₅₀, IC₅₀, Eₘₐₓ, Hill slope). |

D-Optimal Design Software (e.g., JMP Pro, R OptimalDesign, SAS PROC OPTEX) |

To generate statistically optimal dose level selections and replicate allocations prior to experimentation. |

| Cell Viability/Cytotoxicity Assay Kits (e.g., CellTiter-Glo Luminescent, MTT, PrestoBlue) | To quantify the primary cellular response (viability or cytotoxicity) as a function of compound dose. |

| High-Throughput Microplate Reader (e.g., Spectrophotometer, Fluorometer, Luminometer) | To accurately measure the signal output from assay kits across 96-, 384-, or 1536-well plate formats. |

| Dimethyl Sulfoxide (DMSO), Molecular Biology Grade | The universal solvent for reconstituting and serially diluting small-molecule compounds; requires precise control of final concentration in assay (<0.5% v/v typically). |

| Automated Liquid Handling System | To ensure precision and reproducibility in compound serial dilution, transfer, and plate replication, minimizing manual error. |

| Positive/Negative Control Compounds | Reference agonists/inhibitors with known EC₅₀/IC₅₀ values to validate assay performance and plate-to-plate consistency. |

Theoretical Foundations within Dose-Response Studies

In the context of a thesis on D-optimal experimental design for dose-response studies, D-optimality is a criterion that seeks to maximize the determinant of the Fisher Information Matrix (FIM). For nonlinear models common in dose-response modeling (e.g., four-parameter logistic (4PL) models), the FIM, denoted as M(ξ, θ), depends on the design ξ (the set of dose levels and their relative proportions) and the unknown model parameters θ.

The primary goal is to choose a design ξ that satisfies: ξ = argmax_ξ log |M(ξ, θ)| This maximizes the overall information content, which is inversely related to the volume of the confidence ellipsoid of the parameter estimates. In dose-response studies, this leads to more precise estimates of critical parameters like the half-maximal effective concentration (EC50), Hill slope, and efficacy.

Key Considerations:

- Optimality: A D-optimal design minimizes the generalized variance of the parameter estimates.

- Local Optimality: For nonlinear models, an initial parameter estimate (θ₀) is required, making the design "locally optimal."

- Implementation: Optimal designs often consist of a limited number of distinct dose levels (support points), with replications allocated to these points.

Application Notes & Quantitative Data

Comparative Performance of Design Criteria

The table below summarizes a simulated comparison of different optimality criteria for a 4PL model (θ = [Bottom, Top, EC50, Hill Slope]) across 100 runs with simulated additive Gaussian error (σ=2.5). True parameters: Bottom=10, Top=100, EC50=50, Hill Slope=2.5.

Table 1: Performance Metrics of Different Optimal Designs for a 4PL Model

| Optimality Criterion | Avg. Determinant (log | M | ) | Avg. EC50 Std. Error | Avg. Relative Efficiency* vs. D-Optimal |

|---|---|---|---|---|---|

| D-Optimal | 12.34 | 1.56 | 1.00 | ||

| A-Optimal (minimizes trace of inv(M)) | 11.87 | 1.89 | 0.82 | ||

| E-Optimal (maximizes min eigenvalue of M) | 11.92 | 2.15 | 0.76 | ||

| I-Optimal (minimizes avg. prediction variance) | 12.01 | 1.78 | 0.88 | ||

| Uniform Spacing (5-point naive design) | 10.45 | 3.42 | 0.46 |

*Relative Efficiency = (|Mdesign| / |MD-opt|)^(1/p), where p=4 (number of parameters).

Example D-Optimal Design for a 4PL Model

Given a prior parameter estimate θ₀ = [20, 120, 45, 2.0], a locally D-optimal design for a dose range of [0, 100] was computed via the Fedorov-Wynn algorithm.

Table 2: Locally D-Optimal Design for Example 4PL Model

| Support Point (Dose) | Relative Weight (%) | Primary Information Contribution |

|---|---|---|

| 0.0 | 25.0 | Estimates baseline (Bottom) parameter |

| 22.3 | 25.0 | Informs curvature near lower asymptote |

| 45.0 | 25.0 | Directly informs EC50 estimate |

| 100.0 | 25.0 | Estimates maximum response (Top) parameter |

Experimental Protocols

Protocol 1: Implementing a Locally D-Optimal Design for an In Vitro Dose-Response Assay

Objective: To determine the IC50 of a novel kinase inhibitor using a cell viability assay with a D-optimal design. Background: A preliminary pilot experiment suggests an approximate IC50 of 1 µM for a standard compound, following a sigmoidal model.

Materials: (See Scientist's Toolkit) Procedure:

- Prior Elicitation: Analyze pilot data to obtain initial parameter estimates (θ₀) for the 4PL model: Bottom, Top, log(IC50), and Hill Slope.

- Design Computation:

a. Define the dose range (e.g., 0.01 nM to 100 µM, log scale).

b. Using statistical software (e.g., R

Doptimpackage, JMP, SASPROC OPTEX), compute the locally D-optimal design for the 4PL model using θ₀. c. The output will be a set of k optimal dose levels (typically 4-6 for a 4PL) and their recommended allocation proportions. - Design Realization: a. For a total of N experimental wells (accounting for controls), multiply the allocation proportions by N to determine the number of replicates at each optimal dose. b. Randomize the order of dose administration across plates to mitigate batch effects.

- Assay Execution: a. Seed cells in 96-well plates. b. Prepare compound serial dilutions at the D-optimal dose levels. c. Treat cells following the randomized layout. Include 8 wells for positive (vehicle) and 8 wells for negative (100% inhibition) controls. d. Incubate for 72 hours, then add cell viability reagent (e.g., CellTiter-Glo). e. Measure luminescence on a plate reader.

- Data Analysis:

a. Normalize data: % Inhibition = 100 * (MeanPositive - Raw) / (MeanPositive - Mean_Negative).

b. Fit the 4PL model to the normalized response data using nonlinear regression (e.g.,

drmin Rdrcpackage). c. Extract parameter estimates and their confidence intervals. Compare precision to historical designs.

Protocol 2: Sequential Design for Refining Parameter Estimates

Objective: To iteratively update an experimental design to converge on accurate EC75 estimates for a toxicology study. Background: Initial parameter estimates are highly uncertain. A sequential D-optimal approach maximizes learning across rounds.

Procedure:

- Round 1: Execute a preliminary design (e.g., a geometrically spaced design) to obtain initial data.

- Model Fitting & Update: Fit a dose-response model (e.g., 3PL) to the Round 1 data. Use the resulting parameter estimates as the new prior θ₁.

- Design Optimization: Compute a new D-optimal design using the updated θ₁.

- Round 2: Conduct the experiment using the new design, focusing resources on dose levels that reduce uncertainty around the EC75.

- Iteration: Repeat steps 2-4 for one more round or until the standard error of the EC75 falls below a pre-specified threshold (e.g., <15% of the estimate).

- Final Analysis: Pool data from all rounds and perform a final model fit to report definitive parameter estimates.

Visualizations

Workflow for Sequential D-Optimal Design

D-Optimality: From Design to Confidence Volume

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Dose-Response Studies

| Item | Function & Relevance to D-Optimal Design |

|---|---|

| CellTiter-Glo 3D (Promega, G9683) | Luminescent assay for quantifying cell viability in 3D spheroids. Critical for generating accurate response data at each D-optimal dose point. |

| Phospho-Kinase Antibody Array (R&D Systems, ARY003C) | Multiplexed detection of kinase phosphorylation. Enables multi-parameter response modeling, expanding the D-optimality criterion to multivariate endpoints. |

| Tecan D300e Digital Dispenser | Precfectly dispenses nano-to-micro-liter compound volumes directly into assay plates. Enables exact, flexible, and randomized delivery of D-optimal dose levels without serial dilution error. |

| GraphPad Prism 10 | Software for nonlinear curve fitting (4PL, 3PL). Provides initial parameter estimates for design and final analysis. Includes basic tools for optimal design. |

R Statistical Software with Doptim & drc packages |

Open-source platform for advanced computation of D-optimal designs (Doptim) and robust dose-response analysis (drc package). Essential for custom implementation. |

| JMP Clinical (SAS) | Commercial software with comprehensive design of experiments (DOE) capabilities, including interactive D-optimal design for linear and nonlinear models. |

This application note details protocols for employing D-optimal experimental design within dose-response studies, focusing on its dual advantages: achieving high precision in pharmacological parameter estimation (e.g., EC50, Hill slope, Emax) and robust discrimination between rival mechanistic models (e.g., standard vs. operational models of agonism). These advantages are critical for efficient drug discovery, enabling reliable potency/efficacy quantification and early mechanistic insight with minimal experimental resource expenditure.

The following table summarizes the demonstrable benefits of D-optimal designs over traditional, uniformly-spaced designs in simulated and real dose-response experiments.

Table 1: Comparative Performance of D-Optimal vs. Uniform Designs

| Design Metric | Traditional Uniform Design | D-Optimal Design (for a 4-parameter model) | Improvement Factor/Notes |

|---|---|---|---|

| Key Design Points | 8-10 points, evenly spaced log | Typically 4 distinct concentrations, replicated | Reduces total samples by ~50% for same precision. |

| Standard Error of log(EC50) | 0.25 (baseline) | 0.12 | ~52% reduction, significantly tighter confidence intervals. |

| Power to Discriminate Models (e.g., Simple vs. Two-Site) | 65% (with n=8 per curve) | 90% (with same total N) | Increased statistical confidence in model selection. |

| Relative D-efficiency | Set as 1.0 (reference) | 1.8 - 2.5 | Direct measure of overall parameter estimation quality. |

| Optimal Concentration Placement | Suboptimal. Evenly samples uninformative regions. | Clustered around EC50, with points at extremes (min, max effect). | Maximizes information on slope and asymptotes. |

Detailed Protocols

Protocol 1: D-Optimal Design for Precise IC50 Estimation in an Inhibition Assay

Objective: To determine the IC50 of a novel kinase inhibitor with minimal variance using a fixed number of 32 experimental wells.

Materials: Target kinase, ATP, fluorescent peptide substrate, test compound, reaction buffer, plate reader.

Procedure:

- Preliminary Pilot Experiment: Run a coarse, wide-range assay (e.g., 10 µM to 0.1 nM, 1:10 dilutions) to identify the approximate range of 0% to 100% inhibition.

- Model Specification: Define the 4-parameter logistic (4PL) model: Response = Bottom + (Top-Bottom) / (1 + 10^((logIC50 - X)HillSlope))*.

- Design Generation: Using statistical software (e.g., JMP, Prism, R

DoseFindingpackage), input the 4PL model and the total experimental constraint (e.g., 8 concentration levels, 4 replicates each). - Optimal Points Calculation: The algorithm will output the optimal log concentrations. Typically, these will include: a zero-inhibitor control (4 reps), a max-inhibitor control (4 reps), and 4-6 distinct concentrations clustered near the anticipated IC50 (e.g., 0.5x, 1x, 2x, 4x of pilot IC50), each with 4-6 replicates.

- Experimental Execution: Prepare compound dilutions as per the D-optimal list. Run the kinase activity assay in triplicate or quadruplicate as dictated by the design.

- Analysis: Fit the 4PL model to the resulting data. The confidence interval for the logIC50 will be minimized for the given experimental effort.

Protocol 2: Model Discrimination Between Agonist Models

Objective: To discriminate whether a novel agonist fits a simple Emax model or an Operational Model of Agonism (OMA) that estimates transducer gain (τ) and intrinsic efficacy (log(τ/KA)).

Materials: Cell line expressing target receptor, functional assay kit (e.g., cAMP, calcium flux), reference full agonist, test agonist(s).

Procedure:

- Define Rival Models:

- Model S (Simple): E = (Emax * [A]) / (EC50 + [A])

- Model O (Operational): E = (Emax * τ * [A]) / ( (KA + [A]) + (τ * [A]) )

- Generate D-optimal Design for Discrimination: Use software capable of T-optimal or DT-optimal designs (focused on model discrimination). Input both models and a prior parameter estimate for KA and τ (from literature or pilot data).

- Design Output: The design will allocate concentrations not just around the expected EC50 but also at very low doses (to inform KA) and high doses to define the system's Emax. It often includes testing a partial agonist for contrast.

- Experimental Execution: Run full concentration-response curves for the test agonist and a reference full agonist as per the designed concentrations.

- Discrimination Analysis: a. Fit both Model S and Model O to the data. b. Compare fits using the Corrected Akaike Information Criterion (AICc). The model with the lower AICc score is preferred. c. Perform a likelihood ratio test if the models are nested. A significant p-value (<0.05) favors the more complex Model O. d. Visually inspect the fit, particularly at the low-dose region, where the models often diverge.

Visualizations

Diagram 1: D-Optimal vs Uniform Design Points

Diagram 2: Model Discrimination Workflow

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Dose-Response Studies

| Reagent/Material | Function in Dose-Response & D-Optimal Design |

|---|---|

4/5-Parameter Logistic Curve Fitting Software (e.g., GraphPad Prism, R drc package) |

Essential for nonlinear regression to estimate EC50/IC50, Hill slope, and asymptotes with confidence intervals. |

Experimental Design Software (e.g., JMP Pro, R Doptim package, SAS PROC OPTEX) |

Generates the D-optimal concentration list based on model specification and sample size constraints. |

| High-Quality Reference Agonist/Antagonist | Provides a benchmark for system validation and is critical for operational model analysis (defining system Emax). |

| Cell-Based Assay Kits with Wide Dynamic Range (e.g., cAMP, Ca2+, pERK) | Ensures clear definition of Top and Bottom asymptotes, crucial for accurate parameter estimation. |

| Automated Liquid Handlers (e.g., Echo, D300e) | Enables precise, efficient dispensing of the often non-uniform, customized concentration series generated by D-optimal designs. |

| Statistical Analysis Tools for Model Selection (AICc, BIC calculators) | Provides objective criteria for choosing between rival mechanistic models fitted to the experimental data. |

Application Notes

D-optimal experimental design is a model-based approach that selects experimental points to maximize the information content (determinant of the Fisher Information Matrix) for precise parameter estimation. Within the thesis on D-optimal design for dose-response studies, its application in preclinical and early clinical development is critical for efficient resource utilization and informative data generation.

Table 1: Quantitative Advantages of D-Optimal Design in Early Drug Development

| Phase | Typical Sample Size (Traditional) | Sample Size Reduction with D-Optimal* | Key Parameters Estimated with Higher Precision |

|---|---|---|---|

| Preclinical PK | N=6-12 per timepoint | 20-30% | Clearance (CL), Volume of Distribution (Vd), Half-life (t1/2) |

| Preclinical PD/Efficacy | N=8-10 per dose group | 25-35% | EC50, Emax, Hill coefficient |

| SAD/MAD (Phase I) | 40-80 subjects total | 15-25% | Cmax, AUC, Tolerability boundaries |

| Early Proof-of-Concept (Phase IIa) | 100-200 patients | 10-20% | Target Engagement, Biomarker Response, Initial Efficacy Signal |

*Reductions are illustrative and depend on model complexity and parameter covariance.

Ideal Applications:

- Preclinical Dose-Ranging & Formulation Screening: Optimizes the selection of dose levels and sampling times to characterize PK nonlinearities and bioavailability differences with minimal animals.

- First-in-Human (FIH) SAD/MAD Trials: Designs dose escalation sequences and sparse pharmacokinetic sampling schedules to safely estimate exposure parameters and identify the maximum tolerated dose (MTD).

- Biomarker-Focused Early Clinical Trials: Identifies optimal timepoints for collecting costly or invasive biomarker samples to confirm target engagement and elucidate PK/PD relationships.

- Translational Bridging Studies: Efficiently designs experiments to scale parameters from in vitro to in vivo, or from animal models to humans, within a mechanistic model framework.

Experimental Protocols

Protocol 1: D-Optimal Design for Preclinical PK/PD Study of a Novel Oncology Compound

Objective: To characterize the plasma PK and tumor growth inhibition (PD) relationship of a small molecule inhibitor in a murine xenograft model using a minimal number of animals.

Materials & Reagents:

- Test Article: Novel Tyrosine Kinase Inhibitor (TKI), formulated for oral gavage.

- Animal Model: Immunodeficient mice with subcutaneously implanted human tumor cell lines.

- Key Reagents: LC-MS/MS validated bioanalytical method for plasma/tumor homogenate; calipers for tumor measurement; appropriate software (e.g., Phoenix NLME, NONMEM, R/Python with

PopEDorPFIMlibraries).

Procedure:

- Preliminary & Literature Data: Gather in vitro IC50 data, physicochemical properties, and PK data from a single pilot dose in mice.

- Base Model Development: Develop a preliminary compartmental PK model (e.g., 2-compartment oral) linked to an indirect response tumor growth inhibition model (e.g., Simeoni model).

- Design Optimization: Using D-optimal criteria in designated software:

- Decision Variables: Define candidate dose levels (e.g., 5, 25, 100 mg/kg), candidate blood sampling time windows (e.g., 0.5, 2, 8, 24, 48, 72h), and candidate tumor measurement days (e.g., every 2-3 days).

- Constraints: Set maximum total samples per animal (e.g., 6 serial blood draws, 10 tumor measurements). Limit the number of animals (e.g., N=40 total).

- Optimization: Execute algorithm to select the combination of which animals get which doses, specific sampling times, and tumor measurement days that maximizes the expected precision of CL, Vd, EC50, and tumor kill rate parameters.

- Study Execution: Randomize animals to the optimized dose and sampling schedule. Conduct dosing, sample collection, bioanalysis, and tumor volumetry as per the D-optimal generated protocol.

- Analysis & Feedback: Fit the full PK/PD model to the collected data. Compare parameter uncertainty to pre-study predictions. Use the model to simulate Phase I starting dose and regimen.

Protocol 2: D-Optimal Sparse Sampling for a Phase Ib MAD Study

Objective: To reliably estimate inter-subject variability in PK parameters with minimal burden on healthy volunteers.

Procedure:

- Prior Information: Integrate PK data from the Single Ascending Dose (SAD) phase as a prior population model.

- Cohort Design: For a planned 4-dose level MAD cohort with 8 subjects per dose:

- Define a set of feasible sparse sampling windows (e.g., pre-dose, 0.5-1h, 2-4h, 6-8h, 12-24h post-dose on Day 1 and Day 7).

- Constrain each subject to provide no more than 4 samples over the study period.

- Optimal Schedule Generation: Using the prior model, the D-optimal algorithm allocates different 4-point sampling schedules to subjects within and across dose groups to best characterize AUCtau, Cmax, Cmin, and their variability.

- Implementation: Use a designated "optimal sampling" schedule for each subject in the clinical protocol. Collect samples accordingly.

- Population PK Analysis: Conduct a population PK analysis using all sparse data to precisely estimate central tendency and variability parameters for dose selection for Phase II.

Visualizations

Diagram 1: D-Optimal Design Workflow in Early Development

Diagram 2: Hierarchical PK/PD Pathway for D-Optimal Sampling

The Scientist's Toolkit

Table 2: Key Research Reagent & Software Solutions

| Item | Function in D-Optimal PK/PD Studies |

|---|---|

| Population PK/PD Software (e.g., Phoenix NLME, NONMEM, Monolix) | Platform for building mathematical models, simulating experiments, and estimating parameters from sparse data. Essential for implementing D-optimal design. |

Optimal Design Libraries (e.g., PopED for R, PFIM, Pumas) |

Specialized toolkits for computing the Fisher Information Matrix and automating the search for D-optimal sampling points and dose allocations. |

| Validated Bioanalytical Assays (LC-MS/MS, ELISA) | Quantifies drug and biomarker concentrations in biological matrices (plasma, tissue). Data quality is paramount for model accuracy. |

| Laboratory Information Management System (LIMS) | Tracks complex, individualized sample collection schedules generated by D-optimal designs across many subjects/animals. |

| In Vivo Formulations (e.g., PEG-400, Captisol suspensions) | Enables precise and bioavailable dosing in preclinical species, ensuring the tested exposure range matches the design. |

| Biomarker Assay Kits (e.g., Phospho-specific antibodies, PCR panels) | Measures target engagement and proximal pharmacodynamic effects, providing the critical link between PK and PD in the model. |

Within the broader thesis on D-optimal experimental design for dose-response studies, specifying the model and design space is the foundational step. The D-optimal criterion aims to maximize the determinant of the Fisher information matrix, thereby minimizing the generalized variance of parameter estimates. This process is entirely contingent on a correctly defined mathematical model and a rigorously bounded experimental region. Incorrect specification at this stage renders any subsequent optimization invalid.

Specifying the Dose-Response Model

The model encapsulates the hypothesized biological relationship between drug concentration and effect. Common models are nonlinear, requiring careful parameter definition.

Table 1: Common Dose-Response Models for Design Space Specification

| Model Name | Mathematical Form | Key Parameters (θ) | Typical Application |

|---|---|---|---|

| 4-Parameter Logistic (4PL) | $E = E{min} + \frac{E{max} - E{min}}{1 + 10^{(logEC{50} - x) \cdot Hill}}$ | $E{min}, E{max}, logEC_{50}, Hill$ | Standard agonist/antagonist efficacy & potency. |

| 5-Parameter Logistic (5PL) | $E = E{min} + \frac{E{max} - E{min}}{(1 + 10^{(logEC{50} - x) \cdot Hill})^{Symmetry}}$ | $E{min}, E{max}, logEC_{50}, Hill, Symmetry$ | Asymmetric dose-response curves. |

| Emax Model | $E = E0 + \frac{E{max} \cdot D}{ED_{50} + D}$ | $E0, E{max}, ED_{50}$ | Simple saturation binding or enzyme kinetics. |

| Linear Model | $E = \beta0 + \beta1 \cdot x$ | $\beta0, \beta1$ | Preliminary range-finding studies. |

Protocol 2.1: A Priori Parameter Estimation for Model Specification Objective: To obtain initial parameter estimates ("guess values") from literature or pilot data to define the model for D-optimal design.

- Literature Review: Search PubMed for compounds with analogous structure or mechanism. Extract reported EC50/IC50, Emax, and Hill slope values.

- Pilot Experiment: a. Perform a coarse-dose experiment covering a broad range (e.g., 0.1 nM to 100 µM) with minimal replicates (n=2). b. Quantify response using the intended assay (e.g., fluorescence, cell viability). c. Fit the pilot data to the intended model using nonlinear regression software (e.g., GraphPad Prism). d. Record best-fit parameter values and their approximate confidence intervals.

- Parameter Bound Definition: Set the lower and upper bounds for each parameter in the design algorithm, typically as ±50-100% of the pilot estimate, except for bounds like Emin (0-100%) or Hill slope (0.3-3).

Defining the Design Space

The design space (χ) is the multidimensional region of allowable experimental conditions, primarily defined by the dose range.

Table 2: Design Space Components for a Typical In Vitro Dose-Response Study

| Component | Symbol | Typical Specification | Rationale |

|---|---|---|---|

| Dose Range | $[D{min}, D{max}]$ | e.g., [1e-11 M, 1e-5 M] | Spanning zero effect to maximal effect based on pilot data. |

| Number of Dose Levels | k | 6 to 10 | Balance between model discrimination and practical constraints. |

| Replicate Number | n | 3 to 6 | Defined by resource constraints and variance estimates. |

| Fixed Covariates | - | e.g., Cell type, incubation time | Factors held constant or included in a larger model block. |

Protocol 3.1: Rational Design Space Delineation Objective: To establish a scientifically justified and practically feasible design space.

- Set Absolute Bounds: Determine $D{min}$ as the lowest logistically feasible concentration (e.g., vehicle control). Determine $D{max}$ based on compound solubility and solvent tolerance.

- Set Biological Bounds: Using pilot data from Protocol 2.1, identify the concentration eliciting a minimal measurable response (≈$E{min}$) as the lower bound of interest. Identify the concentration eliciting a near-maximal response (≈$E{max}$) as the upper bound of interest.

- Log-Transformation: Convert the final $[D{min}, D{max}]$ to log10 space. The D-optimal design for logistic models will allocate points on the log-concentration scale.

- Algorithmic Input: Specify the parameter vector (θ from Table 1) and the bounded design space χ = $[log10(D{min}), log10(D{max})]$ as inputs to the D-optimal design software (e.g., JMP, R

DoseFindingpackage).

Visualizing the Specification Framework

Figure 1: Model & Design Space Specification Workflow for D-Optimal Dose-Response Design

Figure 2: Interplay of Model, Parameters, & Space in D-Optimality

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Dose-Response Model Specification & Validation

| Item | Function | Example Product/Catalog |

|---|---|---|

| Reference Agonist/Antagonist | Provides a benchmark for assay performance and initial parameter estimates (e.g., known EC50). | Forskolin (adenylyl cyclase activator), Staurosporine (kinase inhibitor). |

| Cell Line with Validated Target | Consistent biological system expressing the target of interest for reproducible response. | CHO-K1 hERG, HEK293 GPCR stable lines. |

| Validated Assay Kit | Robust detection of cellular response (e.g., viability, cAMP, calcium flux). | CellTiter-Glo (viability), HTRF cAMP Gs Dynamic Kit. |

| DMSO (Cell Culture Grade) | Standard solvent for compound libraries; critical for defining solvent tolerance limits. | Sigma-Aldrich D2650. |

| Automated Liquid Handler | Ensures precise, reproducible serial dilutions for accurate dose preparation. | Tecan D300e Digital Dispenser. |

| Statistical Software | For pilot data analysis, parameter estimation, and D-optimal design generation. | R (dr4pl, DoseFinding), JMP, GraphPad Prism. |

Implementing D-Optimal Designs: A Step-by-Step Methodological Guide

Application Notes

Defining the candidate dose-response model is the foundational step in designing efficient, model-robust experiments using D-optimality. The model serves as the mathematical hypothesis describing the relationship between drug dose and pharmacological effect. Within the context of a broader thesis on D-optimal design for dose-response studies, this step dictates the information content an experiment can yield, directly influencing the precision of parameter estimates (e.g., EC50, Emax, Hill slope) critical for drug development decisions.

The choice of model is guided by the underlying biological mechanism, prior knowledge from similar compounds, and the study's primary objective (e.g., estimating a target efficacy dose vs. characterizing full curve shape). Common parametric models include the Emax model (for monotonic saturation), the Logistic model (for sigmoidal curves with symmetric inflection), and the Sigmoid Emax model (which incorporates a Hill coefficient to modulate slope). Incorrect model specification can lead to biased estimates and inefficient designs, making this step both critical and iterative, often involving a small set of candidate models.

Quantitative Comparison of Common Dose-Response Models

The table below summarizes the mathematical form, key parameters, and typical application contexts for primary candidate models.

Table 1: Characteristics of Primary Dose-Response Candidate Models

| Model Name | Mathematical Form | Parameters | Biological Interpretation | Typical Application |

|---|---|---|---|---|

| Linear | E = E0 + β * D |

E0: Baseline effect; β: Slope. |

Assumes a constant increase in effect per unit dose. | Preliminary range-finding; effects over very narrow dose ranges. |

| Emax (Hyperbolic) | E = E0 + (Emax * D) / (ED50 + D) |

E0: Baseline; Emax: Maximal effect; ED50: Dose producing 50% of Emax. |

Receptor occupancy/saturation following Michaelis-Menten kinetics. | Most in vivo efficacy studies; assays where effect plateaus. |

| Sigmoid Emax (Hill) | E = E0 + (Emax * D^h) / (ED50^h + D^h) |

E0, Emax, ED50 as above; h: Hill slope (shape parameter). |

Cooperative binding; steeper or shallower transition around ED50. | In vitro assays; biomarkers with pronounced threshold effects. |

| Logistic | E = E0 + (Emax) / (1 + exp(-(D - ED50)/k)) |

E0, Emax, ED50 as above; k: Scale parameter related to slope. |

Describes symmetric sigmoidal growth. Often mathematically interchangeable with Sigmoid Emax. | Binary or graded responses; population-based responses. |

| Exponential | E = E0 + α * (exp(D/τ) - 1) |

E0: Baseline; α: Scaling factor; τ: Dose-exponent factor. |

Rapidly increasing effect without an apparent upper asymptote within range. | Early-phase toxicology or safety pharmacology (e.g., QTc prolongation). |

| Quadratic (Umbrella) | E = β0 + β1*D + β2*D^2 |

β0: Intercept; β1: Linear coeff.; β2: Quadratic coeff. |

Non-monotonic (inverted-U) relationship. | Responses like hormesis or some cognitive effects. |

Model Selection and D-Optimality

D-optimal designs maximize the determinant of the Fisher Information Matrix (FIM), which depends explicitly on the model's partial derivatives with respect to its parameters. Therefore, a design optimal for an Emax model will differ from one for a Sigmoid Emax model. The candidate model set should be parsimonious, typically 2-3 models, to allow for model-averaged or model-robust design strategies that protect against misspecification. The parameters for the base model (e.g., initial guesses for ED50, Emax) are required to compute the FIM and generate the optimal design points (dose levels and their relative allocation).

Experimental Protocols

Protocol 1: Preliminary Assay for Model Discrimination and Parameter Estimation

This protocol aims to gather initial data to inform the choice and parameterization of candidate models for subsequent D-optimal design.

Title: Initial Dose-Range Finding and Model-Scouting Experiment Objective: To determine the approximate range of response, identify potential model forms (monotonic vs. non-monotonic), and obtain initial parameter estimates for D-optimal design calculation.

Materials:

- See "Research Reagent Solutions" table.

Procedure:

- Wide Dose Range Selection: Define a dose range spanning from vehicle/zero to the maximum feasible or tolerated dose, typically on a logarithmic scale (e.g., 0.001 nM to 100 µM).

- Sparse Sample Allocation: Distribute test units (cells, animals) across 6-10 doses across this range, with minimal replication (n=2-3) per dose.

- Randomized Application: Apply treatments in a randomized order to avoid confounding time-based effects.

- Response Measurement: Quantify the primary efficacy endpoint (e.g., luminescence, tumor volume, enzyme activity) using a validated assay.

- Data Analysis for Model Scouting: a. Plot raw mean response ± SD against log(Dose). b. Fit the data from Table 1 sequentially, starting with the simplest (Linear), then Emax, then Sigmoid Emax. c. Use the Akaike Information Criterion (AIC) for model comparison. A lower AIC suggests a better fit penalized for complexity. d. Obtain parameter estimates (E0, Emax, ED50, h) and their approximate confidence intervals from the 1-2 best-fitting models. e. Visually assess residual plots for systematic bias.

- Output for D-Optimal Design: The parameter estimates from the best model(s) become the "prior" or "nominal" parameters (θ) required to compute the D-optimal dose levels and subject allocation for the definitive study.

Protocol 2: D-Optimal Design Generation for a Given Candidate Model

This protocol details the computational steps to generate a D-optimal experimental design once a candidate model and nominal parameters are defined.

Title: Computational Generation of a D-Optimal Dose-Response Design Objective: To calculate the specific dose levels and optimal number of experimental units per dose that maximize the precision of parameter estimates for a specified candidate model.

Materials:

- Statistical software with optimal design capabilities (e.g., R

DoseFindingpackage, SASPROC OPTEX, JMP, WinBUGS). - Nominal parameter estimates (θ) from Protocol 1.

- Predefined design space (minimum and maximum allowable doses, Dmin, Dmax).

- Total sample size (N) constraint for the definitive experiment.

Procedure:

- Define Design Elements:

- Specify the model (e.g.,

sigEmax). - Input the nominal parameter vector (θ = [E0, Emax, ED50, h]).

- Specify the continuous design space Ξ = [Dmin, Dmax].

- Specify the model (e.g.,

- Set Optimization Criteria:

- Specify the optimality criterion as D-optimality, which minimizes the volume of the confidence ellipsoid for θ.

- Define the total number of support points (k), which is the number of distinct dose levels (typically equal to the number of model parameters, p).

- Allocate the total sample size (N) across these k points. The optimal allocation is found by the algorithm.

- Run Optimization Algorithm:

- Use an exchange algorithm (Fedorov-Wynn) or a multiplicative algorithm to find the set of k dose levels (x1, x2,... xk) and their corresponding relative weights (w1, w2,... wk, where Σwi = 1) that maximize log|M(ξ,θ)|, where M is the FIM.

- The actual sample size per dose is ni = wi * N (rounded to integers).

- Validate Design:

- Compute the D-efficiency of the design relative to a theoretical optimal.

- Generate a sensitivity plot (derivative of the D-criterion vs. dose). The design is locally D-optimal if the sensitivity function touches the zero line at the chosen dose levels and is below it elsewhere within Ξ.

- Final Output: A table specifying the definitive experiment's k optimal dose levels and the recommended number of replicates per dose.

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Dose-Response Modeling Studies

| Item | Function in Dose-Response Research |

|---|---|

| Cell-Based Viability Assay (e.g., CellTiter-Glo) | Measures ATP content as a proxy for cell number/viability. Primary endpoint for in vitro cytotoxic or proliferative dose-response studies. |

| pIC50/EC50 Prediction Software (e.g., GraphPad Prism) | Fits nonlinear regression models to dose-response data, providing robust parameter estimates and confidence intervals for model scouting. |

D-Optimal Design Software (e.g., R DoseFinding package) |

Specialized statistical library for calculating and evaluating optimal designs for nonlinear dose-response models, crucial for Protocol 2. |

| High-Throughput Screening (HTS) Compound Library | Enables rapid testing of a wide concentration range for new chemical entities in initial model-scouting phases. |

| Pharmacokinetic (PK) Simulation Software (e.g., Winnonlin) | Used when dose-response is modeled on exposure (e.g., plasma concentration) rather than administered dose, requiring PK/PD modeling. |

| Reference Agonist/Antagonist (e.g., Isoproterenol for β-adrenoceptor) | A well-characterized control compound used to validate the assay system and define the system's maximal possible response (Emax). |

Visualizations

D-Optimal Design Workflow for Dose-Response

From Biological Pathway to Model Parameters

Within the broader thesis on D-optimal experimental design for dose-response studies, this step is pivotal. It transforms a theoretical statistical problem into a practical, context-rich experimental plan. Specifying parameter priors involves encoding existing knowledge (historical data, literature, expert opinion) into probability distributions for model parameters. Defining the design region (dose range) establishes the experimental space, balancing safety, biological plausibility, and regulatory requirements. This step directly impacts the efficiency and success of subsequent optimal design algorithms.

Specifying Parameter Priors

Parameter priors inform the D-optimal algorithm where in the parameter space to optimize the design, making the design "locally optimal."

- Preclinical In Vitro Data: EC₅₀, IC₅₀, Hill slope estimates from high-throughput screening.

- Historical Compounds: Data from similar chemical entities or pharmacologic classes.

- Literature and Public Databases: Published dose-response relationships for targets or pathways.

- Expert Elicitation: Formal structured processes to quantify subjective expert knowledge.

Common Prior Distributions for Dose-Response Models

For a standard 4-parameter logistic (4PL) model: E(d) = Eₘᵢₙ + (Eₘₐₓ - Eₘᵢₙ) / (1 + 10^(Hill(LogEC₅₀ - d)))*

Table 1: Typical Prior Distributions for 4PL Model Parameters

| Parameter | Biological Meaning | Typical Prior Form | Justification & Example |

|---|---|---|---|

| Eₘᵢₙ | Baseline Effect | Normal(μ, σ) | μ based on vehicle control historical data. σ reflects between-experiment variability. |

| Eₘₐₓ | Maximum Effect | Truncated Normal(μ, σ) | μ from positive control or theoretical max. Truncated to be > Eₘᵢₙ. |

| LogEC₅₀ | Location (Potency) | Uniform(a, b) or Normal(μ, σ) | Uniform if vague; Normal if literature provides a precise estimate (e.g., LogEC₅₀ = -6.0 ± 0.5 logM). |

| Hill | Slope (Steepness) | LogNormal(μ, σ) or Gamma(α, β) | Constrained positive. LogNormal is common for inherently positive parameters. |

Objective: To quantitatively translate expert knowledge into probability distributions for model parameters.

Materials: Facilitator, 2-3 subject matter experts (SMEs), visual aids (parameter definitions, historical data summaries), elicitation software or worksheets.

Procedure:

- Preparation: Clearly define each parameter (Eₘᵢₙ, Eₘₐₓ, LogEC₅₀, Hill) with its units and biological interpretation. Provide all relevant background data to experts.

- Training: Familiarize experts with the concept of quantiles (e.g., median, 5th, 95th percentile).

- Elicitation for Each Parameter (e.g., LogEC₅₀): a. Ask for the median (M): "What is your best guess where there's a 50/50 chance the true LogEC₅₀ is above or below this value?" b. Ask for a lower bound (L): "Provide a value such that you are 95% confident the true LogEC₅₀ is above it." c. Ask for an upper bound (U): "Provide a value such that you are 95% confident the true LogEC₅₀ is below it."

- Distribution Fitting: Fit a Normal distribution to the elicited values by solving for μ and σ where: M ≈ μ, and (U - L) ≈ 3.92*σ.

- Feedback & Iteration: Present the fitted distribution back to experts. Revise if it does not match their beliefs.

- Aggregation: If multiple experts, combine distributions using linear pooling or behavioral aggregation.

Defining the Design Region (Dose Range)

The design region Ξ is the set of all allowable doses, constrained by practical and scientific considerations.

Table 2: Factors Determining Dose Range Boundaries

| Factor | Lower Bound Consideration | Upper Bound Consideration |

|---|---|---|

| Biological/Pharmacological | Minimal anticipated effect level. Target engagement threshold. | Efficacy plateau. Receptor saturation. Maximum feasible dose (formulation). |

| Toxicological/Safety | Not typically limiting. | Maximum tolerated dose (MTD) from toxicology studies. NOAEL (No Observed Adverse Effect Level). |

| Regulatory & Practical | Dose separation for log-scale spacing. Manufacturing capability (low concentration). | Cost of goods. Clinical practicality (e.g., pill burden). |

Protocol: Establishing a Preliminary Dose Range fromIn VivoPK/PD Data

Objective: To translate in vivo pharmacokinetic (PK) and pharmacodynamic (PD) data into an initial dose range for a first-in-human (FIH) study.

Materials: PK profile (plasma concentration vs. time), in vitro potency (EC₅₀), in vitro efficacy (Eₘₐₓ), target exposure multiplier (e.g., 1x, 3x, 10x EC₅₀ for coverage).

Procedure:

- Determine Target Concentration: From in vitro assays, identify the target plasma concentration (Cₜₐᵣₜ) needed for efficacy (e.g., 3x the protein-binding-adjusted EC₅₀).

- Analyze PK Data: From animal PK studies, calculate the average plasma concentration over time (AUC) and the peak concentration (Cₘₐₓ) for a given administered dose.

- Estimate Human Equivalent Dose (HED): Use allometric scaling (e.g., based on body surface area) to extrapolate the animal dose achieving Cₜₐᵣₜ to a predicted human dose.

- Apply Safety Factor: Divide the HED by a safety factor (e.g., 10 to 100) to establish a starting dose for FIH studies.

- Set the Upper Bound: The upper bound is typically the HED or a dose predicted to achieve the maximum effective exposure, not exceeding preclinical NOAEL-based limits.

- Define the Range: The design region is the logarithmic interval between the starting dose (lower bound) and the upper bound. Doses for D-optimal design are selected within this range.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Prior Elicitation & Range-Finding Experiments

| Item / Reagent | Function in This Context | Example & Vendor (Illustrative) |

|---|---|---|

| Statistical Elicitation Software | Facilitates structured expert elicitation and fits probability distributions to expert judgments. | MATLAB (Sheffield Elicitation Toolbox), R (SHELF package). |

| Dose-Response Analysis Software | Fits nonlinear models to preliminary data to generate parameter estimates for priors. | GraphPad Prism, R (drc package), SAS (NLIN). |

| Reference Standard Compound | Acts as a positive control in range-finding assays to estimate Eₘₐₓ and benchmark EC₅₀. | Known high-potency agonist/antagonist for the target (e.g., from Tocris, Sigma-Aldrich). |

| Cell Line with Target Expression | Essential for generating in vitro potency/efficacy data to inform priors and dose range. | Recombinant cell line (e.g., CHO-K1 or HEK293) stably expressing the human target (from ATCC + in-house engineering). |

| PK/PD Modeling Software | Performs allometric scaling and exposure-response modeling to translate animal data to human dose range. | Phoenix WinNonlin, GastroPlus, R (mrgsolve package). |

| In Vitro Binding/Functional Assay Kit | Provides the primary data (IC₅₀, EC₅₀) used for prior specification. | HTRF kinase assay kit (Cisbio), cAMP Gs dynamic assay (Promega). |

Visualizations

Diagram Title: Workflow for Specifying Priors and Dose Range in D-Optimal Design

Diagram Title: From Preclinical Data to Dose Range Design Region

Within the broader thesis on advancing D-optimal experimental design for modern dose-response studies, the transition from foundational algorithms to efficient, practical implementations is critical. This note details the computational evolution from the classical sequential algorithms of Federov and Wynn to the modern point-exchange standard, providing the protocol for implementing these methods to design efficient, informative pharmaceutical experiments that minimize parameter uncertainty.

Algorithmic Foundations: Protocols & Data

The core objective is to maximize the determinant of the Fisher Information Matrix (FIM), (|M(\xi)|), for a pre-specified nonlinear model (e.g., 4-parameter logistic model). The design (\xi) is a set of (N) dose points (xi) with corresponding weights (wi).

Table 1: Comparison of Core Algorithmic Strategies

| Algorithm (Year) | Type | Key Mechanism | Primary Advantage | Primary Limitation | Typical Use in Dose-Response | ||

|---|---|---|---|---|---|---|---|

| Federov Exchange (1972) | Point-Exchange | Exchanges a candidate point with a design point to maximize (\Delta | M | ). | Guaranteed convergence to optimal exact (N-point) design. | Computationally intensive for large candidate sets. | Final refinement of exact designs from a large candidate dose set. |

| Wynn Algorithm (1970) | Sequential Addition | Adds the point that maximizes the variance of prediction (d-optimality criterion) iteratively. | Simple, intuitive, and memory-efficient. | Can produce highly clustered points; not optimal for fixed total N. | Initial design generation or adaptive sequential design. | ||

| Modified Fedorov (Atwood, 1973) | Point-Exchange | Considers all pairwise exchanges in each iteration. | Faster convergence than classic Fedorov. | Still heavy computational load per iteration. | Standard for exact D-optimal design generation. | ||

| KL-Exchange (Cook & Nachtsheim, 1980) | Point-Exchange | Uses a candidate list and exchanges to improve efficiency. | Dramatically reduces computations per iteration. | Modern de facto standard for exact design generation. |

Protocol 2.1: KL-Exchange Algorithm for Exact D-Optimal Dose-Response Design Objective: Generate an exact N-point D-optimal design from a discrete candidate set of doses. Inputs: Nonlinear model (f(x, \theta)), prior parameter estimates (\theta0), candidate dose set (C = {x{c1}, ..., x_{cL}}), required number of points (N). Procedure:

- Initialization: Generate a starting design (\xi_N) of N distinct points from (C) (e.g., via Wynn sequential addition or random selection).

- Compute FIM: Calculate the information matrix (M(\xi_N)).

- Exchange Loop: For each point (xi) in the current design (\xiN): a. Compute its estimated variance (d(xi, \xiN) = f(xi)^T M(\xiN)^{-1} f(xi)). b. For each candidate point (xc) in (C) not in (\xiN), compute (d(xc, \xiN)). c. Identify the pair ((xi, xc)) that maximizes the exchange score: (d(xc, \xiN) - d(xi, \xiN)). d. If this score is positive, exchange (xi) for (xc) in (\xiN), update (M(\xi_N)), and repeat the loop.

- Termination: The loop terminates when no positive-exchange pair is found for a full cycle. Output: Final exact D-optimal design (\xi_N^*).

KL-Exchange Algorithm Workflow for D-Optimal Design

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Toolkit for Algorithm Implementation

| Item/Software | Function in Algorithmic Design | Example/Note |

|---|---|---|

| R Statistical Language | Primary platform for implementing custom exchange algorithms and design evaluation. | Use optFederov() from the AlgDesign package for exchange algorithms. |

| Python (SciPy/NumPy) | Flexible environment for matrix computations and custom algorithm scripting. | pyDOE2 and scipy.optimize libraries are useful. |

| MATLAB Statistics Toolbox | Provides cordexch and rowexch functions for point-exchange D-optimal design. |

Industry-standard for rapid prototyping in pharma. |

| Prior Parameter Estimates | Critical inputs for the model's Jacobian; the design is locally optimal around these values. | Derived from pilot studies or literature. Robust design considers a parameter prior distribution. |

| Discrete Candidate Dose Set | The predefined, feasible range of doses to be searched by the exchange algorithm. | Typically log-spaced concentrations within assay safety/fefficacy limits. |

| High-Performance Computing (HPC) Cluster | Enables robust design generation via Bayesian or pseudo-Bayesian criteria requiring Monte Carlo integration. | Essential for advanced designs addressing parameter uncertainty. |

Protocol 2.2: Implementing a Robust (Bayesian) D-Optimal Design Objective: Generate a design optimal over a prior distribution of parameters (\pi(\theta)), maximizing (\int \log |M(\xi, \theta)| \pi(\theta) d\theta). Inputs: Parameter prior (\pi(\theta)), candidate set (C), number of points (N), sample size (K) for Monte Carlo. Procedure:

- Draw Parameter Sample: Draw a random sample ({\theta1, ..., \thetaK}) from (\pi(\theta)).

- Modify Exchange Criterion: In the KL-Exchange loop, replace (d(x)) with the average: (\bar{d}(x) = \frac{1}{K} \sum{k=1}^K f(x)^T M(\xi, \thetak)^{-1} f(x)).

- Run Modified Exchange: Execute Protocol 2.1 using the averaged variance function (\bar{d}(x)). Output: A robust exact design less sensitive to misspecification of initial parameter estimates.

Workflow for Robust Bayesian D-Optimal Design

Application Data & Comparative Results

Table 3: Performance Comparison on a 4-Parameter Logistic Model (Candidate Set: 100 log-spaced doses from 0.1 to 100 nM; N=12 points; Local Params: ED50=5, Hill=1)

| Algorithm | Final ( | M(\xi) | ) (log scale) | Iterations to Converge | CPU Time (sec) | Support Points Found | Suitability for Dose-Response |

|---|---|---|---|---|---|---|---|

| Wynn (Sequential) | 14.21 | 12 (sequential adds) | <0.1 | 4-5 (clustered) | Poor - inefficient point replication. | ||

| Classic Federov | 15.87 | ~1050 | 12.5 | 6 | Good optimality, slow. | ||

| KL-Exchange | 15.86 | ~35 | 0.8 | 6 | Excellent - optimal and efficient. | ||

| Robust KL-Exchange (Uniform Prior on ED50) | 15.42 (Avg) | ~50 | 15.2 (K=100) | 7 | Essential for high parameter uncertainty. |

The data confirm the KL-Exchange algorithm as the pragmatic standard, balancing optimality and computational speed, while robust extensions ensure applicability under real-world parameter uncertainty in early drug development.

Application Notes: D-Optimal Design for Dose-Response Studies

For research within a thesis on D-optimal design for dose-response modeling, selecting the appropriate software tool is critical. The goal is to maximize the precision of parameter estimates (e.g., ED50, slope) for non-linear models like the 4-parameter logistic (4PL) model by optimizing dose level selection and allocation of experimental units.

Table 1: Comparison of Software for D-Optimal Dose-Response Design

| Feature / Software | JMP | R (OptimalDesign Package) | SAS (PROC OPTEX) | Python (pyDOE2, Custom) |

|---|---|---|---|---|

| Primary Interface | Graphical User Interface (GUI) | Script-based (R Console) | Script-based (SAS Editor) | Script-based (Jupyter, IDE) |

| Core Optimization Algorithm | Coordinate Exchange | Federov-Wynn, Exchange | Federov Exchange | Various (often via pymanopt or direct algos) |

| Predefined 4PL Model Support | Yes, via built-in custom designer | Yes, via model.matrix & formula definition |

Yes, via PROC NLIN template in OPTEX |

No, requires manual model function definition |

| Constraint Handling (e.g., Min Dose Spacing) | Excellent (Interactive & graphical) | Good (via user-defined candidate sets) | Good (via candidate set filtering) | Manual (pre-processing of candidate set) |

| Replication & Blocking Support | Full support for random blocks | Requires manual candidate set expansion | Supported via BLOCKS statement |

Manual implementation required |

| Optimality Criterion Output | D, A, G, I-efficiency | D, A, I-efficiency | D, A, G, I-efficiency | D-efficiency (common) |

| Best For | Interactive exploration, rapid prototyping | Flexible, open-source research, integration with analysis | Enterprise-level validation, reproducible scripts | Custom algorithmic development, ML pipeline integration |

Experimental Protocol: Generating a D-Optimal Design for a 4PL Model

Objective: To construct a D-optimal design for a 4-parameter logistic dose-response experiment estimating parameters: Bottom (θ₁), Top (θ₂), ED50 (θ₃), and Slope (θ₄).

Protocol 1: Using JMP

- Launch JMP and select DOE > Custom Design.

- Under Add Factors, add one Continuous factor, named "Dose".

- Define the Dose Range (e.g., 0.1 to 100 µM, log scale).

- In the Model section, click Macros and select Nonlinear.

- Enter the 4PL model formula:

θ₁ + (θ₂ - θ₁) / (1 + exp(θ₄*(log(Dose) - log(θ₃)))). - Specify prior values for θ₁, θ₂, θ₃, θ₄ based on preliminary data or literature.

- Set the Number of Runs (e.g., 12).

- Add constraints if needed (e.g., minimum spacing between dose levels via Disallowed Combinations).

- Click Make Design to generate the optimal set of dose levels and their suggested replication.

- Output the design table for experimental execution.

Protocol 2: Using R with OptimalDesign Package

Protocol 3: Using SAS PROC OPTEX

Protocol 4: Using Python (pyDOE2 & SciPy)

Visualizations

Title: D-Optimal Design Workflow for Dose-Response Studies

Title: Software Selection Logic Tree for Researchers

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Dose-Response Experimental Validation

| Item / Reagent | Function in Dose-Response Study |

|---|---|

| Reference Agonist/Antagonist | A compound with well-characterized efficacy/potency. Used as a system control to validate assay performance and plate-to-plate consistency. |

| Cell Line with Target Expression | Genetically engineered or native cell line stably expressing the pharmacological target (e.g., GPCR, kinase). Ensures a consistent, reproducible signal. |

| Fluorescent or Luminescent Viability/Cell Titer Kit | Measures cell number or health. Critical for distinguishing specific target-mediated effects from non-specific cytotoxicity at high doses. |

| Second Messenger Assay Kit (e.g., cAMP, Ca2+, IP1) | Quantifies intracellular signaling output downstream of target engagement. Provides the primary quantitative readout for model fitting. |

| DMSO (Cell Culture Grade) | Universal solvent for compound libraries. Must be controlled at low, consistent concentration (e.g., ≤0.1%) across all dose levels to avoid solvent-induced artifacts. |

| Automated Liquid Handler | Enables precise, high-throughput serial dilution of test compounds to generate accurate dose concentrations and replicate plating. |

| 384/1536-well Microplates (Assay Optimized) | Plate format that minimizes reagent use and allows testing of multiple dose-response curves in a single experiment, reducing inter-experiment variability. |

| Plate Reader with Kinetic Capability | For time-resolved fluorescence (TR-FRET) or luminescence readings. Essential for dynamic assays where signal optimal read time is variable. |

Within a D-optimal experimental design framework for dose-response studies, the final step involves interpreting the output from the optimization algorithm. This step translates mathematical solutions into a practical, executable experimental plan. The core outputs are the specific dose levels to be tested and the recommended number of experimental replicates (or allocation ratios) for each dose, including controls. This protocol details how to analyze these outputs, validate them against statistical and biological principles, and implement them in a laboratory setting.

Key Output Interpretation Protocol

Materials & Software Requirements

- Statistical Software Output: Results from software (e.g., JMP, R

DoseFinding/OPDOEpackages, SAS PROC OPTEX) implementing the D-optimal algorithm for the selected model (e.g., Emax, logistic, sigmoidal). - Primary Data: The candidate dose range and preliminary variance estimates used in the design phase.

- Analysis Software: Spreadsheet (Excel, Google Sheets) or statistical software for final table and plot generation.

Procedure

- Extract Optimal Design Points: From the software output, identify the support points. These are the specific dose levels (e.g., 0, 1.2, 5.5, 20 µM) selected by the D-optimal criterion.

- Extract Replication Ratios: For each optimal dose level, note the associated weight or proportion. Multiply these proportions by the total planned experimental run size (N) to determine the approximate number of replicates for each dose. Final replicate numbers must be integers.

- Round and Balance: Adjust the replicate counts to integers while maintaining the total N. Ensure control groups (dose=0) have sufficient replicates for robust baseline estimation, often matching or exceeding the replication of mid-range doses.

- Construct the Final Experimental Plan Table: Compile the doses and integer replicate counts into a definitive plan. An example is shown in Table 1.

- Sensitivity Check (Robustness): Perform a sensitivity analysis by slightly perturbing the assumed model parameters (e.g., ED50). Re-run the D-optimal design to see if the optimal doses shift significantly. If they are stable, confidence in the design is high.

Data Presentation

Table 1: Exemplar D-Optimal Output for a Monotonic Emax Model Dose-Response Study

| Dose Level (µM) | Optimal Proportion | Total Planned Runs (N=48) | Rounded Replicate Count | Function in Design |

|---|---|---|---|---|

| 0.0 (Vehicle Control) | 0.25 | 12.0 | 12 | Baseline estimation |

| 0.8 | 0.25 | 12.0 | 12 | Inform lower curvature |

| 5.0 | 0.25 | 12.0 | 12 | Inform ED50 region |

| 25.0 (Max Dose) | 0.25 | 12.0 | 12 | Inform asymptotic effect |

Table 2: Comparison of D-Optimal Designs for Different Assumed Models (Total N=36)

| Model Type | Assumed ED50 | Optimal Dose Levels (µM) | Key Design Insight |

|---|---|---|---|

| Linear | N/A | 0.0, 25.0 (only extremes) | All replicates split between min and max dose. |

| Emax | 5.0 µM | 0.0, 2.2, 25.0 | Includes a dose near the ED50 for precise estimation. |

| Sigmoidal (4PL) | 5.0 µM | 0.0, 1.1, 5.0, 25.0 | Adds a dose to better estimate the slope parameter. |

Implementation Protocol: From Output to Assay Plate

Objective

To physically implement the D-optimal design plan (Tables 1/2) into a 96-well plate assay, ensuring correct dose allocation and randomization to minimize bias.

Materials

- Compound stock solution at highest test concentration.

- Vehicle/medium for serial dilution.

- Cell suspension or biochemical assay components.

- 96-well tissue culture plates.

- Multichannel and single-channel pipettes.

- Plate layout planning software or template.

Procedure

- Plan Plate Layout: Map the doses and their replicates onto the physical plate(s). Use randomization software to assign dose levels to wells, blocking by rows/columns to account for potential spatial gradients (e.g., edge effects).

- Prepare Dose Series: Perform serial dilutions to create intermediate stock solutions at the exact optimal concentrations identified (e.g., 0.8 µM, 5.0 µM).

- Plate Compounds: Transfer vehicle and compound solutions to assay plates according to the randomized layout. Include appropriate controls if not part of the dose set (e.g., a reference inhibitor control for an inhibition assay).

- Add Assay Components: Add cells, substrate, or other reaction components.

- Documentation: Record the final plate map linking each well to its dose condition. This map is critical for downstream data analysis.

Visualizing the Workflow and Logic

Diagram Title: From Algorithm Output to Experimental Plan Workflow

Diagram Title: Logic of D-Optimal Dose Selection

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Dose-Response D-Optimal Studies |

|---|---|

D-Optimal Design Software (R DoseFinding) |

Statistical package specifically for designing and analyzing dose-finding studies, includes D-optimal calculations for standard nonlinear models. |

| High-Throughput Liquid Handler | Enables precise, automated dispensing of multiple optimal dose concentrations into assay plates, improving accuracy and reproducibility. |

| Potent Compound Stock Solutions (e.g., in DMSO) | High-quality, accurately titrated stock solutions are essential for generating the exact optimal dose concentrations calculated by the design. |

| Cellular Viability/Activity Assay Kits (e.g., CellTiter-Glo) | Validated, homogeneous assay kits provide robust readouts (luminescence/fluorescence) for the biological response across the dose range. |

| Electronic Laboratory Notebook (ELN) | Critical for documenting the link between the statistical design output, the physical plate layout, and the raw data for traceability. |

| Plate Reader with Kinetic Capabilities | For assays where the time course of response is informative, allowing data-rich longitudinal analysis from a single experiment. |

Within the broader thesis on D-optimal experimental design for dose-response studies, this application note demonstrates the practical implementation of these principles in designing a Phase II Proof-of-Concept (PoC) trial for a novel hypothetical compound, "NeuroRegain," intended for Alzheimer's disease (AD). The core thesis posits that employing D-optimal design, which maximizes the determinant of the information matrix, leads to more precise parameter estimation for dose-response models with fewer patients and resources, while maintaining robust operational characteristics. This case study applies that theoretical framework to a real-world clinical development scenario.

Current Landscape & Data Synthesis

A live search reveals current trends and data informing NeuroRegain's development.

Key Findings:

- Target: BACE1 (β-secretase 1) inhibition remains a validated, though challenging, mechanism for reducing amyloid-beta (Aβ) production. Recent trials emphasize the importance of early intervention and biomarker-stratified populations.

- Biomarkers: CSF Aβ42, p-tau, and plasma-based p-tau217 are critical quantitative endpoints for PoC. Amyloid PET is a confirmatory qualitative endpoint.

- Dosing: Prior failed compounds often showed narrow therapeutic windows. Precise dose-response modeling is essential to identify the minimum efficacious dose and avoid toxicity.

- Design: Adaptive and Bayesian designs are increasingly common, but their efficiency depends on optimal initial dose placements—a core strength of D-optimal design.

Table 1: Summary of Recent BACE1 Inhibitor PoC Trial Data

| Compound (Phase) | Dose Levels (mg) | Primary Biomarker Endpoint (CSF Aβ40/42 reduction) | Key Design Feature | Outcome |

|---|---|---|---|---|

| Lanabecestat (II/III) | 20, 50 | ~65-75% reduction at 50mg | Fixed-dose, long-term | Development halted (toxicity) |

| Atabecestat (II) | 10, 25, 50 | ~60-80% reduction | Adaptive, biomarker-enriched | Stopped (liver safety) |

| Elenbecestat (II) | 5, 15, 50 | ~55-75% reduction | Parallel group | Discontinued (futility in Phase III) |

| Implications for NeuroRegain | Need for low-to-mid range doses | Target: >50% reduction for PoC | Need for optimal spacing across expected ED50 | Design Goal: Identify dose for ≥50% Aβ reduction with clean safety profile. |

D-Optimal Design Application: Protocol for the NeuroRegain Phase II PoC Trial

Protocol Title: A Randomized, Double-Blind, Placebo-Controlled, Dose-Finding Phase II Proof-of-Concept Study to Assess the Efficacy, Safety, and Pharmacokinetics/Pharmacodynamics of NeuroRegain in Patients with Early Alzheimer's Disease.

3.1. Primary Objective & Hypothesis

- Objective: To characterize the dose-response relationship of NeuroRegain on the reduction of cerebral amyloid production, as measured by change in cerebrospinal fluid (CSF) Aβ42 levels from baseline to Week 26.

- Hypothesis: NeuroRegain administration will produce a dose-dependent reduction in CSF Aβ42 levels, with at least one dose showing a statistically significant and clinically meaningful reduction (>50%) compared to placebo.

3.2. D-Optimal Dose Selection & Randomization Based on preclinical PK/PD modeling, the anticipated ED50 for CSF Aβ42 reduction is 15 mg. Using D-optimal design for a 4-parameter Emax model (E0, Emax, ED50, Hill coefficient), the optimal dose levels to precisely estimate the dose-response curve are determined.

Table 2: D-Optimal Dose Allocation for NeuroRegain

| Arm | Dose (mg) | Rationale (D-Optimal Placement) | % of Patients (N=200) |

|---|---|---|---|

| 1 | Placebo | Essential baseline (E0) estimate | 20% (n=40) |

| 2 | 5 mg | Inform lower asymptote, early slope | 20% (n=40) |

| 3 | 15 mg | Estimated ED50 region (maximal information) | 30% (n=60) |

| 4 | 40 mg | Inform upper asymptote (Emax) & safety | 30% (n=60) |

Randomization: Patients are stratified based on baseline CSF p-tau status (high/low) and ApoE4 carrier status, then centrally randomized using an interactive web response system (IWRS).

3.3. Detailed Experimental Protocol: Key Assessments

Procedure: Lumbar Puncture & CSF Biomarker Analysis

- Pre-Procedure: Patient fasts overnight. Consent is reconfirmed.

- Positioning: Patient placed in lateral decubitus position.

- Asepsis: Sterile draping. Local anesthetic (lidocaine 1%) applied.

- Collection: A 22-gauge spinal needle is inserted at L3/L4 or L4/L5 interspace. Exactly 15 mL of CSF is collected in polypropylene tubes.

- Processing: Tubes are gently inverted. Samples are centrifuged at 2000g for 10 minutes at 4°C to remove cells.

- Aliquoting: Supernatant is aliquoted into 0.5 mL polypropylene tubes.

- Storage: Aliquots are frozen at -80°C within 60 minutes of collection.

- Analysis: Batched analysis using validated Elecsys immunoassays (Roche) for Aβ42, p-tau, and total tau. Absolute change from baseline to Week 26 is calculated.

Procedure: Amyloid PET (Substudy, n=80)

- Tracer: [18F]Flutemetamol or [18F]Florbetaben.

- Injection: ~185 MBq of tracer is administered IV.

- Uptake: 90-minute uptake period.

- Imaging: 20-minute CT scan followed by 20-minute PET scan.

- Analysis: Images are processed and quantified as Standardized Uptake Value Ratio (SUVR) using cerebellar grey matter as reference. Visual read is performed by three blinded experts.

3.4. Statistical Analysis Plan

- Primary Analysis: The dose-response relationship will be analyzed using a Bayesian Emax model. Priors will be weakly informative, based on preclinical data. The probability that each active dose achieves >50% reduction in CSF Aβ42 vs. placebo will be calculated.

- Sample Size Justification: N=200 provides 90% power to detect a dose with a true mean difference of ≥50% reduction vs placebo (α=0.05, 2-sided), accounting for 15% dropout and the D-optimal allocation weighting.

- D-optimality Check: The expected variance of the ED50 estimate will be computed pre-trial and compared to alternative designs.

Visualizations

D-optimal Design for Dose-Response

Phase II PoC Trial Patient Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Biomarker-Driven PoC Trials

| Item / Reagent | Vendor Example | Function in Protocol |

|---|---|---|

| Elecsys Phospho-Tau (181P) CSF | Roche Diagnostics | Quantifies p-tau in CSF for patient stratification and exploratory efficacy. |

| Elecsys β-Amyloid (1-42) CSF II | Roche Diagnostics | Core efficacy assay measuring change in CSF Aβ42 levels. |

| Polypropylene CSF Collection Tubes | Sarstedt, PerkinElmer | Prevents adsorption of amyloid peptides to tube walls. |

| [18F]Flutemetamol Tracer | GE Healthcare | Radioligand for amyloid PET imaging to confirm target engagement. |

| Liquipure CSF Clarification Kit | MilliporeSigma | Removes debris and cells from CSF prior to analysis, improving assay precision. |

| Validated PK ELISA Kit | Custom Assay Development | Measures plasma concentrations of NeuroRegain for PK/PD modeling. |

| ApoE Genotyping Assay | Thermo Fisher Scientific | Identifies ApoE4 carrier status for stratification. |

| Interactive Web Response System (IWRS) | Medidata Rave, Oracle | Manages patient randomization, stratification, and drug supply. |

Solving Real-World Challenges: Troubleshooting and Optimizing Your D-Optimal Design

Within the broader thesis on D-optimal experimental design for dose-response studies, a primary challenge is the a priori uncertainty in nonlinear model parameters. Classical D-optimality, which aims to maximize the determinant of the Fisher information matrix, is locally optimal—it depends critically on an initial guess for parameters (e.g., EC50, Hill slope, Emax). An inaccurate guess can lead to severely suboptimal designs, wasting resources and reducing the precision of key parameter estimates in drug development. This article details two principled approaches to this challenge: Bayesian and robust optimal designs.

Conceptual Frameworks and Comparative Analysis

Bayesian Optimal Design (BOD) incorporates prior knowledge (or uncertainty) about model parameters in the form of a prior distribution. The design criterion, typically the expectation of the log determinant of the information matrix over this prior, is optimized. Robust (or Minimax) Optimal Design seeks to protect against the worst-case scenario within a predefined set of possible parameter values, minimizing the maximum loss in efficiency.

Table 1: Comparison of Design Strategies for Parameter Uncertainty

| Design Strategy | Core Principle | Advantages | Disadvantages | Typical Use Case |

|---|---|---|---|---|

| Local D-Optimal | Optimizes for a single, best-guess parameter vector. | Computationally simple; maximum efficiency if guess is correct. | Highly inefficient if initial guess is wrong; not robust. | Preliminary studies with strong prior data. |

| Bayesian D-Optimal | Maximizes expected information over a prior parameter distribution. | Efficiently incorporates prior knowledge/uncertainty; provides a balanced design. | Requires specification of a prior; more computationally intensive. | Most common scenario with historical data or expert insight. |

| Minimax D-Optimal | Minimizes the worst-case loss in efficiency over a parameter space. | Guarantees a lower bound on efficiency; maximally robust. | Computationally very demanding; can be overly conservative. | Critical studies where failure due to bad guess is unacceptable. |

| Pseudobayesian (Discrete) | Uses a discrete set of parameter scenarios with attached probabilities. | Easier computation than full Bayesian; more robust than local. | Efficiency depends on chosen scenarios and weights. | When prior can be approximated by key plausible scenarios. |

Often implemented as a weighted sum of information matrices.

Application Notes & Protocols

Protocol 1: Implementing a Bayesian D-Optimal Design for a 4-Parameter Logistic (4PL) Model

Objective: To determine optimal dose levels for a dose-response assay that accounts for uncertainty in the EC50 and Hill slope parameters.

Model: 4PL: E(d) = Emin + (Emax - Emin) / (1 + (d/EC50)^Hill)

Materials & Reagent Solutions:

- Statistical Software: R (with 'dplyr' for data handling, 'DoseFinding' or 'ICAOD' for optimal design), or SAS (

PROC OPTEXwith custom coding), or specialized software like JMP Clinical. - Computational Environment: Adequate RAM (>8 GB) for Monte Carlo integration.

- Prior Distribution Specification: Based on historical data or literature for the compound class (e.g., log-normal for EC50, normal for Hill slope).