Predicting ADME in Drug Discovery: A Comprehensive Guide to Modern QSAR Models, Applications, and Best Practices



Abstract

This article provides a comprehensive overview of Quantitative Structure-Activity Relationship (QSAR) models for predicting Absorption, Distribution, Metabolism, and Excretion (ADME) properties in drug discovery. Tailored for researchers, scientists, and drug development professionals, it covers the foundational principles of ADME and QSAR, details state-of-the-art methodological approaches and practical applications, addresses common challenges and optimization strategies, and concludes with rigorous validation techniques and comparative analyses of leading tools. The guide synthesizes current trends, including the integration of AI/ML and big data, to empower more efficient and predictive preclinical development.

ADME & QSAR Fundamentals: Building the Bedrock for Predictive Pharmacology

Why ADME Prediction is a Critical Bottleneck in Modern Drug Discovery

The high attrition rate in clinical development, predominantly due to unfavorable pharmacokinetics and toxicity, underscores ADME (Absorption, Distribution, Metabolism, Excretion) prediction as a pivotal bottleneck. Within Quantitative Structure-Activity Relationship (QSAR) research for ADME, the challenge lies in developing models that are both interpretable and generalizable across diverse chemical space. This application note details protocols and current perspectives central to advancing this field.

1. Application Note: High-Throughput In Vitro-to-In Vivo Extrapolation (IVIVE) for Clearance Prediction

A core application of ADME QSAR models is to prioritize compounds for experimental validation. This protocol integrates computational predictions with high-throughput in vitro assays to estimate human hepatic clearance (CLh).

- Objective: To predict human in vivo hepatic clearance from in vitro microsomal stability data using QSAR-informed compound selection and mechanistic scaling.

- Key Data & Rationale: Late-stage attrition due to poor pharmacokinetics remains significant. Recent analyses indicate that approximately 40% of drug failures in Phase II/III are linked to ADME/Tox issues, with poor metabolic stability and unanticipated drug-drug interactions being major contributors.

Table 1: Key In Vitro ADME Assays for IVIVE Pipeline

| Assay | Throughput | Primary Measurement | QSAR Model Input |

|---|---|---|---|

| Microsomal Stability | High (96/384-well) | Intrinsic Clearance (CLint) | Metabolic soft-spot identification |

| Caco-2/ MDCK-MDR1 | Medium | Apparent Permeability (Papp), Efflux Ratio | Absorption/ P-gp substrate classification |

| Plasma Protein Binding | High | Fraction Unbound (fu) | Estimation of free drug concentration |

| CYP Inhibition | High | IC50/ Ki | Prediction of drug-drug interaction risk |

Protocol 1.1: Parallel Microsomal Incubation & Data Generation

- Reagent Preparation: Prepare 1 mg/mL pooled human liver microsomes (HLM) in 100 mM potassium phosphate buffer (pH 7.4). Pre-warm NADPH regeneration system (Solution A: NADP+, glucose-6-phosphate; Solution B: glucose-6-phosphate dehydrogenase).

- Incubation: In a 96-well plate, mix 5 µL of 10 µM test compound (in DMSO, final [DMSO] ≤0.1%), 335 µL HLM suspension, and 10 µL of NADPH regeneration system. Initiate reaction by adding Solution B.

- Time-point Sampling: Aliquot 50 µL from each well at t = 0, 5, 15, 30, and 45 minutes into a quench plate containing 100 µL of cold acetonitrile with internal standard.

- Analysis: Centrifuge, dilute supernatant, and analyze via LC-MS/MS. Quantify parent compound depletion.

- Calculation: Determine in vitro half-life (t1/2) and intrinsic clearance: CLint, in vitro = (0.693 / t1/2) * (Volume of incubation / Microsomal protein).
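The calculation step can be sketched in a few lines of Python. This is a minimal illustration, not a validated analysis script: the function name `clint_in_vitro` and the depletion data are hypothetical, and the 350 µL volume and 0.335 mg protein simply follow from the incubation described above (335 µL of 1 mg/mL HLM). The rate constant k is taken from a least-squares fit of ln(% remaining) against time, so that t1/2 = 0.693 / k.

```python
import math

def clint_in_vitro(times_min, pct_remaining, incubation_volume_ul, protein_mg):
    """Return (half-life in min, CLint in µL/min/mg) from parent-depletion data."""
    # Fit ln(% remaining) = intercept - k * t by ordinary least squares.
    y = [math.log(p) for p in pct_remaining]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(y) / n
    sxy = sum((t - mean_t) * (v - mean_y) for t, v in zip(times_min, y))
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    k = -sxy / sxx                      # first-order depletion rate constant (1/min)
    t_half = math.log(2) / k            # equivalent to 0.693 / k
    clint = k * incubation_volume_ul / protein_mg
    return t_half, clint

# Synthetic, noise-free depletion curve generated with k = 0.03 /min (illustrative only).
times = [0, 5, 15, 30, 45]
remaining = [100 * math.exp(-0.03 * t) for t in times]
t_half, clint = clint_in_vitro(times, remaining, incubation_volume_ul=350.0, protein_mg=0.335)
print(f"t1/2 = {t_half:.1f} min, CLint = {clint:.1f} µL/min/mg")
```

With real data, compounds showing little or no depletion give a slope near zero, so a lower limit of quantifiable clearance is usually worth defining before applying the formula.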

Protocol 1.2: IVIVE Using the Well-Stirred Model

- Scale-up: Apply scaling factors. CLint, in vivo = CLint, in vitro * Microsomal protein per gram liver (MPPGL, ~40 mg/g) * Human liver weight (~20 g/kg body weight).

- Model Application: Predict human hepatic clearance using the well-stirred model: CLh = (Qh * fu * CLint, in vivo) / (Qh + fu * CLint, in vivo), where Qh is human hepatic blood flow (~20 mL/min/kg).

- QSAR Integration: Input predicted CLh and measured fu into a consensus QSAR model (e.g., random forest or graph neural network) trained on known in vivo clearance data to refine the prediction and flag structural outliers.
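As a worked illustration of the scale-up and well-stirred steps, the sketch below uses the approximate physiological values quoted above (MPPGL ~40 mg/g, liver weight ~20 g/kg, Qh ~20 mL/min/kg). The function names, the input CLint of 31.3 µL/min/mg, and fu = 0.1 are assumptions chosen only for the example.

```python
def scale_clint(clint_ul_min_mg, mppgl_mg_per_g=40.0, liver_g_per_kg=20.0):
    """Scale in vitro CLint (µL/min/mg protein) to in vivo CLint (mL/min/kg)."""
    return clint_ul_min_mg * mppgl_mg_per_g * liver_g_per_kg / 1000.0  # µL -> mL

def well_stirred_clh(clint_in_vivo, fu, qh=20.0):
    """Well-stirred model; clint_in_vivo and qh must share units (mL/min/kg)."""
    return qh * fu * clint_in_vivo / (qh + fu * clint_in_vivo)

clint_vivo = scale_clint(31.3)              # hypothetical in vitro CLint
clh = well_stirred_clh(clint_vivo, fu=0.1)  # hypothetical fraction unbound
extraction_ratio = clh / 20.0               # CLh relative to hepatic blood flow
print(f"CLint,in vivo = {clint_vivo:.2f} mL/min/kg, CLh = {clh:.2f} mL/min/kg, E = {extraction_ratio:.2f}")
```

Because CLh can never exceed Qh in this model, the extraction ratio is a convenient sanity check: values close to 1 indicate flow-limited clearance, where errors in CLint matter little, while low values are dominated by fu and CLint.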

2. Protocol: Developing a Consensus QSAR Model for P-glycoprotein (P-gp) Substrate Classification

Predicting P-gp-mediated efflux is critical for anticipating bioavailability and CNS penetration. This protocol outlines the development of a robust classification model.

- Objective: To build a consensus QSAR model classifying compounds as P-gp substrates or non-substrates.

- Data Curation: Compile a dataset from public sources (e.g., ChEMBL) and proprietary assays. A recent benchmark study highlights the challenge: models trained on single datasets show >25% accuracy drop on external validation sets, emphasizing the need for diverse training data.

Table 2: Representative Dataset for P-gp Substrate Modeling

| Data Source | Number of Compounds | Substrate:Non-Substrate Ratio | Assay Type (Efflux Ratio Cut-off) |

|---|---|---|---|

| Literature (Broccatelli, 2012) | 1,149 | ~1:1.3 | In vitro (MDR1-MDCK II, ER ≥ 2) |

| FDA Drug Labels | 200+ | Varies | Clinical (Digoxin DDI, CNS warning) |

| In-house Caco-2 | 500 (example) | ~1:1 | In vitro (B>A/A>B, ER ≥ 2) |

Protocol 2.1: Model Building Workflow

- Descriptor Calculation & Selection: Generate 2D and 3D molecular descriptors (e.g., with MOE or RDKit). Remove redundant descriptors (pairwise Pearson |r| > 0.95), then use univariate analysis (ANOVA) to select the ~200 top-ranked descriptors.

- Model Training: Split data (70/15/15 for Train/Validation/Test). Train multiple algorithms:

- Algorithm A (Random Forest): 500 trees, Gini impurity.

- Algorithm B (Support Vector Machine): RBF kernel, optimize C and gamma via grid search on validation set.

- Algorithm C (Neural Network): 3 dense layers (200, 100, 50 nodes), ReLU activation, dropout (0.2).

- Consensus Prediction: For a new compound, obtain predictions from all three models. The final classification is based on a majority vote. Assign a "confidence score" based on the agreement level (e.g., 3/3 models agree = high confidence).

- Validation: Assess using the hold-out test set. Report accuracy, precision, recall, MCC, and AUC-ROC. Validate externally against a newly acquired assay dataset.
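The training and consensus steps might look like the following scikit-learn sketch. Several things here are assumptions rather than part of the protocol: the data are synthetic (`make_classification` stands in for a real descriptor matrix), the SVM hyperparameters are fixed instead of grid-searched, and dropout is omitted because scikit-learn's `MLPClassifier` does not support it.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in: rows = compounds, columns = descriptors, y = substrate (1) or not (0).
X, y = make_classification(n_samples=300, n_features=20, n_informative=8, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.15, stratify=y, random_state=0
)

models = {
    "rf": RandomForestClassifier(n_estimators=500, criterion="gini", random_state=0),
    "svm": make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0, gamma="scale")),
    "nn": make_pipeline(
        StandardScaler(),
        MLPClassifier(hidden_layer_sizes=(200, 100, 50), activation="relu",
                      max_iter=500, random_state=0),
    ),
}
for model in models.values():
    model.fit(X_train, y_train)

# One column of 0/1 votes per model; majority vote needs at least 2 of 3.
votes = np.column_stack([m.predict(X_test) for m in models.values()])
n_positive = votes.sum(axis=1)
consensus = (n_positive >= 2).astype(int)
agreement = np.maximum(n_positive, 3 - n_positive)  # 3 = unanimous, 2 = split decision
```

Compounds with `agreement == 3` would be the "high confidence" calls described above; split votes are natural candidates for experimental follow-up.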

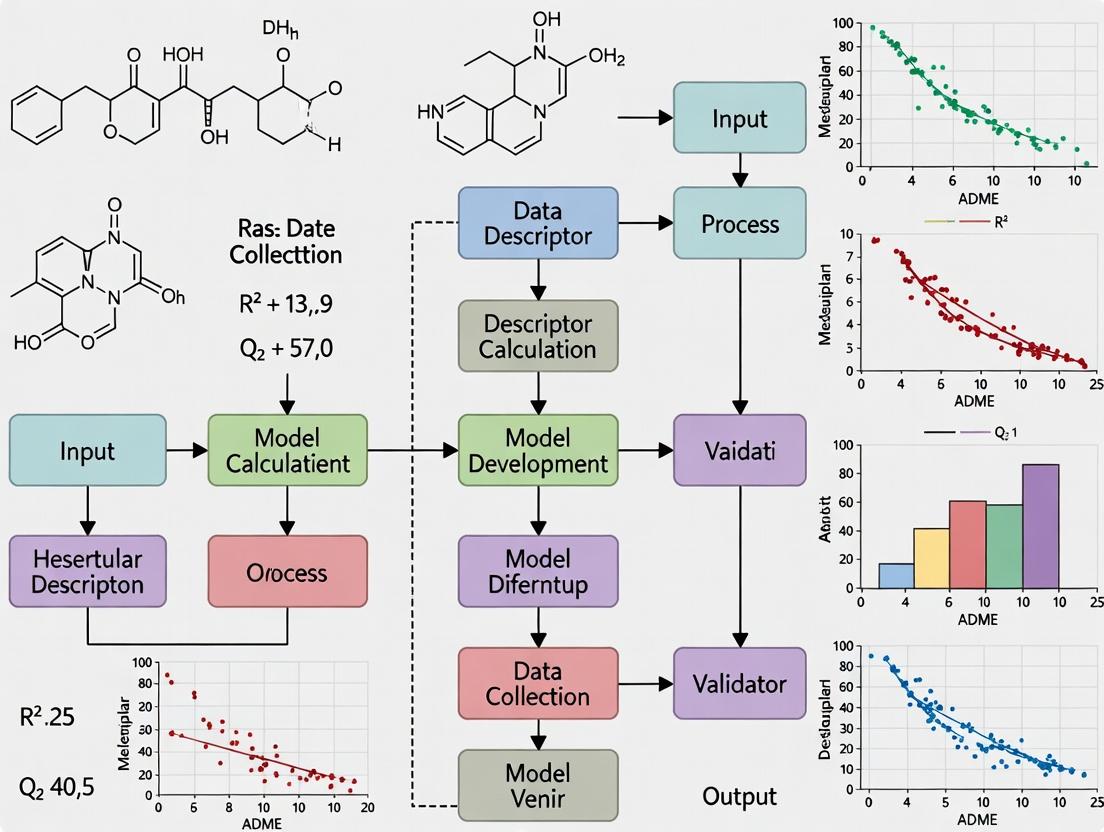

Visualization 1: ADME QSAR Model Development & Validation Workflow

Visualization 2: Key ADME Properties & Their Interplay in Drug Disposition

The Scientist's Toolkit: Key Research Reagent Solutions for ADME Studies

| Reagent / Material | Function in ADME Prediction Research |

|---|---|

| Pooled Human Liver Microsomes (HLM) | Contains the full complement of human Phase I metabolizing enzymes (CYPs) for in vitro metabolic stability and reaction phenotyping studies. |

| Recombinant CYP Isozymes | Individual CYP enzymes (e.g., CYP3A4, 2D6) used to identify specific enzymes responsible for compound metabolism and to assess inhibition potency. |

| Caco-2 / MDR1-MDCK II Cell Lines | Cell-based monolayers used to measure apparent permeability (Papp) and assess transporter-mediated efflux (e.g., P-gp) critical for predicting absorption. |

| Human Hepatocytes (Cryopreserved) | Gold-standard in vitro system containing both Phase I/II enzymes and physiological transporter expression for comprehensive clearance and metabolite ID studies. |

| LC-MS/MS System | High-sensitivity analytical platform for quantifying parent drug depletion, metabolite formation, and measuring compound concentrations in complex biological matrices. |

| QSAR Modeling Software (e.g., Schrödinger, MOE, RDKit) | Computational tools for molecular descriptor calculation, model building, validation, and virtual screening of compound libraries for ADME properties. |

| High-Quality, Curated ADME Databases (e.g., ChEMBL, PubChem) | Essential sources of public domain experimental ADME data for training, benchmarking, and expanding the chemical space coverage of predictive models. |

Historical Foundations and Modern Definition

Quantitative Structure-Activity Relationship (QSAR) modeling is a computational methodology that quantitatively correlates molecular descriptors (numerical representations of chemical structure) with a biological, physical, or ADME (Absorption, Distribution, Metabolism, Excretion) activity. Its evolution is marked by increasing complexity, from simple linear free-energy relationships to sophisticated machine learning models.

Table 1: Evolution of Key QSAR Paradigms

| Era | Paradigm | Key Equation/Concept | Primary Application |

|---|---|---|---|

| 1930s-1960s | Linear Free-Energy Relationships (LFER) | Hammett Equation: log(K/K₀) = ρσ | Substituent effects on reaction rates/equilibria in congeneric series. |

| 1960s-1970s | Hansch Analysis | log(1/C) = k₁π + k₂σ + k₃ | Incorporating hydrophobicity (π) and electronic (σ) effects for biological activity. |

| 1980s-1990s | 3D-QSAR | Comparative Molecular Field Analysis (CoMFA) | Steric and electrostatic fields correlated with activity for non-congeneric molecules. |

| 2000s-Present | Modern Computational QSAR | Machine Learning (RF, SVM, DNN), Multitask Learning, Deep Learning | Prediction of complex endpoints (e.g., toxicity, ADME properties) from large, diverse chemical datasets. |

Core QSAR Workflow for ADME Prediction

The standardized workflow for developing a QSAR model, particularly for ADME properties like human liver microsomal (HLM) stability or P-glycoprotein (P-gp) inhibition, involves sequential steps.

Diagram: QSAR Model Development and Validation Workflow

Protocol: Developing a QSAR Model for Human Intestinal Absorption (HIA) Prediction

This protocol details the steps for constructing a classification model (High vs. Low Absorption) using a public dataset.

Protocol 3.1: Data Acquisition and Curation

- Source: Obtain experimental %HIA data from a curated public repository (e.g., ChEMBL, ADME DB).

- Criteria: Filter compounds with:

- Directly measured human in vivo or reliable in situ permeability data.

- SMILES (Simplified Molecular Input Line Entry System) representation available.

- Remove salts and duplicates; keep the most reliable measurement.

- Binning: Classify compounds: High HIA (≥80% absorption) as positive class (1); Low HIA (≤30% absorption) as negative class (0). Exclude intermediates (30-80%).

Protocol 3.2: Descriptor Calculation and Dataset Preparation

- Software: Use PaDEL-Descriptor, RDKit, or Mordred.

- Input: Canonical SMILES for each compound.

- Calculation: Generate a comprehensive set of 1D, 2D, and 3D descriptors (e.g., molecular weight, logP, topological polar surface area (TPSA), number of rotatable bonds).

- Pre-processing:

- Remove descriptors with zero variance or >90% missing values.

- Impute remaining missing values (e.g., with column mean).

- Apply variance filtering and remove highly correlated descriptors (|r| > 0.95).

- Standardize (scale) the final descriptor matrix.
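The pre-processing steps can be expressed compactly with pandas. The sketch below is one possible arrangement; the function name `preprocess_descriptors` and the toy table are hypothetical. For simplicity it fits the imputation and scaling on the whole table, whereas in a real workflow those statistics should be derived from the training set only and then applied to the test set, to avoid information leakage.

```python
import numpy as np
import pandas as pd

def preprocess_descriptors(df, max_missing=0.90, corr_cutoff=0.95):
    """Filter, impute, de-correlate, and z-score a descriptor table (rows = compounds)."""
    df = df.loc[:, df.isna().mean() <= max_missing]   # drop columns with >90% missing
    df = df.fillna(df.mean())                          # column-mean imputation
    df = df.loc[:, df.std(ddof=0) > 0]                 # drop zero-variance columns
    corr = df.corr().abs()
    # Keep only the upper triangle so each pair is inspected once.
    upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))
    to_drop = [col for col in upper.columns if (upper[col] > corr_cutoff).any()]
    df = df.drop(columns=to_drop)
    return (df - df.mean()) / df.std(ddof=0)           # standardize to mean 0, SD 1

# Toy table with a duplicated column, a missing value, and a constant column.
toy = pd.DataFrame({
    "MolWt": [180.2, 151.2, 206.3, 194.2, 310.4],
    "MolWt_copy": [180.2, 151.2, 206.3, 194.2, 310.4],
    "TPSA": [63.6, 49.3, np.nan, 58.4, 40.5],
    "Constant": [1.0, 1.0, 1.0, 1.0, 1.0],
})
clean = preprocess_descriptors(toy)
print(list(clean.columns))
```

In each highly correlated pair the later column is dropped, which is an arbitrary but reproducible choice; ranking by relevance to the endpoint is a common alternative.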

Protocol 3.3: Model Training and Validation

- Split: Perform a stratified split (maintaining class ratio) into a training set (80%) and a completely held-out external test set (20%).

- Algorithm: Train on the training set using a suitable algorithm (e.g., Random Forest).

- Internal Validation: Perform 5-fold or 10-fold cross-validation on the training set to optimize hyperparameters (e.g., n_estimators and max_depth for RF) using metrics like accuracy or AUC-ROC.

- External Validation: Apply the final optimized model to the held-out test set. This is the primary performance assessment.

- Metrics: Report for the test set: Accuracy, Sensitivity, Specificity, Precision, AUC-ROC, and Confusion Matrix.
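A minimal scikit-learn sketch of this split, tune, and evaluate sequence is shown below. It runs on synthetic data (a stand-in for a curated HIA descriptor matrix), and the small hyperparameter grid is an arbitrary choice made to keep the example fast.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import (accuracy_score, confusion_matrix, precision_score,
                             recall_score, roc_auc_score)
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split

# Synthetic stand-in: 1 = High HIA, 0 = Low HIA.
X, y = make_classification(n_samples=400, n_features=30, n_informative=10,
                           weights=[0.4, 0.6], random_state=1)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.20, stratify=y, random_state=1
)

# Internal validation: 5-fold stratified CV on the training set only.
search = GridSearchCV(
    RandomForestClassifier(random_state=1),
    param_grid={"n_estimators": [100, 300], "max_depth": [None, 10]},
    scoring="roc_auc",
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=1),
)
search.fit(X_train, y_train)  # refits the best model on the full training set

# External validation: the held-out test set is used exactly once.
y_pred = search.predict(X_test)
y_prob = search.predict_proba(X_test)[:, 1]
tn, fp, fn, tp = confusion_matrix(y_test, y_pred).ravel()
metrics = {
    "accuracy": accuracy_score(y_test, y_pred),
    "sensitivity": recall_score(y_test, y_pred),
    "specificity": tn / (tn + fp),
    "precision": precision_score(y_test, y_pred),
    "auc_roc": roc_auc_score(y_test, y_prob),
}
print(search.best_params_, metrics)
```

Keeping the test set out of the grid search is what makes the external metrics an honest estimate; if the test set influences any modeling decision, it becomes a second validation set.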

Table 2: Performance Metrics for a Notional HIA Classification QSAR Model

| Metric | 5-Fold CV (Mean ± SD) | External Test Set | Interpretation |

|---|---|---|---|

| Accuracy | 0.85 ± 0.03 | 0.83 | Overall correctness of predictions. |

| AUC-ROC | 0.91 ± 0.02 | 0.89 | Model's ability to discriminate between classes. |

| Sensitivity | 0.87 ± 0.04 | 0.85 | Proportion of actual High-HIA compounds correctly identified. |

| Specificity | 0.82 ± 0.05 | 0.80 | Proportion of actual Low-HIA compounds correctly identified. |

| Precision | 0.88 ± 0.03 | 0.86 | Proportion of predicted High-HIA compounds that are correct. |

Table 3: Key Research Reagent Solutions for QSAR-Driven ADME Studies

| Item | Function in QSAR/ADME Research |

|---|---|

| In Silico Descriptor Software (RDKit, PaDEL) | Open-source libraries for calculating thousands of molecular descriptors and fingerprints from chemical structures (SMILES). |

| Machine Learning Platforms (scikit-learn, TensorFlow) | Python libraries providing algorithms (RF, SVM, DNN) for model building, training, and validation. |

| Curated ADME Databases (ChEMBL, PubChem) | Public repositories providing high-quality, experimental bioactivity and ADME data for model training and validation. |

| Molecular Dynamics Software (GROMACS, Desmond) | Used for advanced 3D-QSAR and to simulate molecular interactions (e.g., with lipid bilayers for permeability studies). |

| Commercial ADMET Predictor Suites (Schrödinger, BIOVIA) | Integrated platforms offering proprietary descriptors, automated QSAR model development, and high-throughput ADME prediction. |

Modern Framework: Integrative ADME Prediction

Current research focuses on multi-task, descriptor-fused models that predict multiple ADME endpoints simultaneously, improving efficiency and capturing shared underlying biology.

Diagram: Integrative Multi-Task QSAR Framework for ADME

Application Notes

Within modern Quantitative Structure-Activity Relationship (QSAR) model development for ADME property prediction, in vitro assays provide the essential high-quality data required for training and validation. This document details core assays and their integration into a predictive research thesis.

Caco-2 Permeability

The Caco-2 cell monolayer model is a cornerstone for predicting intestinal absorption and transcellular permeability in drug discovery. QSAR models trained on Caco-2 apparent permeability (Papp) data can effectively classify compounds as high (>1 x 10⁻⁶ cm/s) or low permeability. Recent model development emphasizes the differentiation between passive paracellular and transcellular routes, as well as active transport involvement.

P-glycoprotein (P-gp) Substrate Identification

P-gp efflux is a major determinant of drug disposition, affecting bioavailability and brain penetration. Assays determine if a compound is a substrate, inhibitor, or non-interactor. For QSAR, the efflux ratio (Papp(B-A)/Papp(A-B)) from bidirectional Caco-2 or MDCK-MDR1 assays is a critical quantitative endpoint. Models predicting efflux ratio help prioritize compounds with reduced risk of multidrug resistance and poor CNS exposure.

Cytochrome P450 (CYP450) Metabolism

CYP inhibition and reaction phenotyping are vital for predicting drug-drug interactions (DDIs). High-throughput fluorescence- and LC-MS/MS-based assays generate IC50 values for major CYP isoforms (1A2, 2C9, 2C19, 2D6, 3A4). QSAR models built on this data aim to identify structural alerts responsible for enzyme inhibition, thereby guiding the design of compounds with lower DDI potential.

hERG Channel Inhibition

Inhibition of the hERG potassium channel is a key surrogate for predicting cardiac QT interval prolongation (Torsades de Pointes risk). Patch-clamp electrophysiology and fluorescence-based binding assays yield IC50 data. The primary goal of hERG QSAR models is early-stage triaging of compounds with high-affinity binding motifs (e.g., basic amines, aromatic groups) to reduce cardiotoxicity liability.

Integrated ADME Profiling

The convergence of data from these core assays, alongside solubility, microsomal stability, and plasma protein binding, enables the construction of comprehensive, multi-parameter QSAR models. Such integrated models support lead optimization by forecasting a compound's overall pharmacokinetic profile.

Table 1: Benchmark Values for Core ADME Assays in QSAR Model Training

| Property | Assay System | Typical Output | Common QSAR Classification/Threshold |

|---|---|---|---|

| Caco-2 Permeability | Caco-2 cell monolayer, 21-day culture | Apparent Permeability (Papp in cm/s) | High: Papp (A-B) > 1 x 10⁻⁶ cm/s |

| P-gp Substrate | Bidirectional Caco-2/MDCK-MDR1 | Efflux Ratio (ER) | Substrate: ER ≥ 2; Inhibitor: IC50/EC50 |

| CYP450 Inhibition | Human liver microsomes/ recombinant CYP | IC50 (µM) | Potent Inhibitor: IC50 < 1 µM |

| hERG Inhibition | Patch-clamp / Fluorescence binding | IC50 (µM) | High Risk: IC50 < 10 µM |

| Microsomal Stability | Rat/Human liver microsomes | % Remaining, t₁/₂, CLint (µL/min/mg) | High Clearance: scaled hepatic clearance > 50% of liver blood flow |

Detailed Experimental Protocols

Protocol 1: Caco-2 Permeability Assay

Objective: To determine the apparent permeability (Papp) of a test compound across a differentiated Caco-2 cell monolayer.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Cell Culture & Seeding: Maintain Caco-2 cells in complete DMEM. Seed onto collagen-coated Transwell inserts (1-3 µm pore, 0.33 cm²) at high density (e.g., 1 x 10⁵ cells/insert). Culture for 21-23 days, changing medium every 2-3 days.

- Monolayer Integrity Check: Prior to experiment, measure Transepithelial Electrical Resistance (TEER) using an epithelial volt-ohm meter. Accept monolayers with TEER > 300 Ω·cm². Alternatively, perform a Lucifer Yellow permeability test (Papp < 1 x 10⁻⁶ cm/s indicates tight junctions).

- Compound Dosing: Prepare test compound (typically 10 µM) in pre-warmed HBSS-HEPES transport buffer (pH 7.4). Aspirate culture medium and wash monolayers twice with buffer.

- A→B (Apical to Basolateral): Add donor solution to apical chamber, buffer to basolateral chamber.

- B→A (Basolateral to Apical): Add donor solution to basolateral chamber, buffer to apical chamber.

- Incubation: Place plates in an orbital shaker (37°C, ~50 rpm). Sample (e.g., 100 µL) from the receiver compartment at 30, 60, 90, and 120 minutes, replacing with fresh buffer.

- Sample Analysis: Quantify compound concentration in samples using LC-MS/MS.

- Data Calculation:

- Calculate Papp (cm/s) = (dQ/dt) / (A * C0), where dQ/dt is the steady-state flux, A is the filter area, and C0 is the initial donor concentration.

- Calculate Efflux Ratio = Papp (B→A) / Papp (A→B).
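The two calculations can be sketched as follows. The function name and the receiver-amount data are hypothetical, and the cumulative amounts are assumed to be already corrected for the volume removed and replaced at each sampling time. The unit handling relies on 1 µM = 1 nmol/cm³, so amounts in nmol, area in cm², and time in seconds give Papp directly in cm/s.

```python
def papp_cm_per_s(times_s, cumulative_amount_nmol, area_cm2, c0_um):
    """Apparent permeability from the slope of cumulative receiver amount vs. time."""
    n = len(times_s)
    mean_t = sum(times_s) / n
    mean_q = sum(cumulative_amount_nmol) / n
    # Least-squares slope = steady-state flux dQ/dt in nmol/s.
    slope = (
        sum((t - mean_t) * (q - mean_q) for t, q in zip(times_s, cumulative_amount_nmol))
        / sum((t - mean_t) ** 2 for t in times_s)
    )
    c0_nmol_per_cm3 = c0_um  # 1 µM = 1 nmol/mL = 1 nmol/cm³
    return slope / (area_cm2 * c0_nmol_per_cm3)

# Synthetic, noise-free data for a 0.33 cm² insert dosed at 10 µM (illustrative only).
times = [1800, 3600, 5400, 7200]              # 30, 60, 90, 120 min
amount_ab = [0.0297, 0.0594, 0.0891, 0.1188]  # nmol in basolateral receiver
amount_ba = [0.1188, 0.2376, 0.3564, 0.4752]  # nmol in apical receiver

papp_ab = papp_cm_per_s(times, amount_ab, area_cm2=0.33, c0_um=10.0)
papp_ba = papp_cm_per_s(times, amount_ba, area_cm2=0.33, c0_um=10.0)
efflux_ratio = papp_ba / papp_ab
print(f"Papp A->B = {papp_ab:.2e} cm/s, Papp B->A = {papp_ba:.2e} cm/s, ER = {efflux_ratio:.1f}")
```

Fitting the slope across all time points, rather than using a single end point, makes it easier to spot a lag phase or loss of sink conditions.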

Protocol 2: P-gp Substrate Assay (Bidirectional)

Objective: To determine if a compound is a P-gp substrate by comparing bidirectional permeability with/without a P-gp inhibitor.

Procedure:

- Follow Protocol 1 steps 1-3 for seeding and integrity checks.

- Perform bidirectional transport (A→B and B→A) in parallel with and without a specific P-gp inhibitor (e.g., 10 µM Cyclosporin A or 1 µM Zosuquidar) added to both chambers.

- Sample and analyze as in Protocol 1.

- Data Interpretation: A compound is considered a P-gp substrate if its efflux ratio (without inhibitor) is ≥2 and this ratio decreases significantly (e.g., by >50%) in the presence of the inhibitor.

Protocol 3: CYP450 Inhibition (Fluorometric)

Objective: To determine the IC50 of a test compound for a specific recombinant human CYP enzyme.

Materials: Recombinant CYP enzyme, fluorogenic probe substrate (e.g., 7-methoxy-4-(trifluoromethyl)coumarin (MFC) for CYP2C9), NADPH regeneration system, stop reagent.

Procedure:

- Prepare test compound serial dilutions (typically 0.1-100 µM) in assay buffer.

- In a black 96-well plate, combine: 25 µL test compound (or buffer control), 25 µL CYP enzyme, and 25 µL probe substrate at Km concentration.

- Pre-incubate for 5 minutes at 37°C.

- Initiate reaction by adding 25 µL of NADPH regeneration system. Incubate for a linear time period (e.g., 30 min).

- Stop reaction with 100 µL stop reagent (e.g., an acetonitrile-based stop solution).

- Measure fluorescence (ex/em wavelengths specific to metabolite).

- Data Analysis: Calculate % activity relative to vehicle control. Plot % activity vs. log[inhibitor] and fit data to a sigmoidal dose-response model to determine IC50.
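The curve-fitting step is commonly done with a four-parameter logistic model. The sketch below uses SciPy's `curve_fit`; the concentrations and the noise-free synthetic activities (generated with an IC50 of 2.5 µM) are assumptions for illustration, and the starting values in `p0` are a simple guess rather than a recommended default.

```python
import numpy as np
from scipy.optimize import curve_fit

def four_pl(log_conc, bottom, top, log_ic50, hill):
    """% activity as a function of log10 inhibitor concentration."""
    return bottom + (top - bottom) / (1.0 + 10 ** ((log_conc - log_ic50) * hill))

conc_um = np.array([0.1, 0.3, 1.0, 3.0, 10.0, 30.0, 100.0])
log_conc = np.log10(conc_um)

# Synthetic % activity relative to vehicle control, generated with IC50 = 2.5 µM.
activity = four_pl(log_conc, 0.0, 100.0, np.log10(2.5), 1.0)

# Start from a full-range curve centered at 1 µM with unit Hill slope.
params, _ = curve_fit(four_pl, log_conc, activity, p0=[0.0, 100.0, 0.0, 1.0])
bottom, top, log_ic50, hill = params
ic50_um = 10 ** log_ic50
print(f"IC50 = {ic50_um:.2f} µM, Hill slope = {hill:.2f}")
```

Fitting on log10 concentration keeps the IC50 parameter well scaled. When the tested range does not bracket the IC50, the fit is poorly constrained and the result is better reported as "> highest concentration tested".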

Protocol 4: hERG Inhibition (Patch-Clamp)

Objective: To measure the concentration-dependent inhibition of hERG potassium current by a test compound.

Procedure:

- Cell Preparation: Use stable hERG-expressing CHO or HEK293 cells.

- Electrophysiology Setup: Use whole-cell patch-clamp configuration. Maintain cells at ~35°C. Voltage protocol: Hold at -80 mV, step to +20 mV for 4 sec, then repolarize to -50 mV for 6 sec to elicit tail current (IhERG). Repeat every 10-15 sec.

- Baseline Recording: Record stable IhERG tail current amplitude for ≥2 minutes.

- Compound Application: Apply vehicle control (e.g., 0.1% DMSO) via perfusion, then apply increasing concentrations of test compound (e.g., 0.1, 1, 3, 10 µM), perfusing each until steady-state block is achieved (≈3-5 min per concentration).

- Washout: Perfuse with compound-free solution to assess reversibility.

- Data Analysis: Normalize tail current amplitude to baseline. Plot % inhibition vs. [compound] and fit to Hill equation to derive IC50.
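For a quick estimate, the Hill equation can be linearized: if f is the fraction of current blocked, then log10(f / (1 - f)) = n·log10(c) - n·log10(IC50), so a straight-line fit yields the Hill coefficient n and the IC50. The sketch below applies this to synthetic tail currents; the baseline amplitude and the generating IC50 of 2 µM are assumptions made for the example.

```python
import numpy as np

baseline_pa = 800.0                              # hypothetical baseline tail current (pA)
conc_um = np.array([0.1, 1.0, 3.0, 10.0])

# Synthetic steady-state tail currents for IC50 = 2 µM and Hill coefficient 1.
tail_pa = baseline_pa * (1.0 - conc_um / (2.0 + conc_um))

# Normalize to baseline, then convert to fraction blocked.
fraction_blocked = 1.0 - tail_pa / baseline_pa

# Linearized Hill fit: slope = n, intercept = -n * log10(IC50).
logit = np.log10(fraction_blocked / (1.0 - fraction_blocked))
hill_n, intercept = np.polyfit(np.log10(conc_um), logit, 1)
ic50_um = 10 ** (-intercept / hill_n)
print(f"IC50 = {ic50_um:.2f} µM, Hill coefficient = {hill_n:.2f}")
```

The linearization amplifies noise for points near 0% or 100% block, so a direct nonlinear fit is generally preferable for real recordings; the linear form is mainly useful as a sanity check or to obtain starting values.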

Visualizations

Diagram 1: Integrated ADME Data Workflow for QSAR

Diagram 2: hERG Inhibition Leads to QT Prolongation

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent/Kit | Provider Examples | Primary Function in ADME Assays |

|---|---|---|

| Caco-2 Cell Line | ATCC, ECACC | Gold-standard intestinal barrier model for permeability/efflux studies. |

| Transwell Permeable Supports | Corning, Greiner Bio-One | Polycarbonate membrane inserts for forming cell monolayers for transport studies. |

| P-gp Inhibitors (e.g., Cyclosporin A, Zosuquidar) | Sigma-Aldrich, Tocris | Pharmacological tools to confirm P-gp-mediated efflux in bidirectional assays. |

| Recombinant Human CYP450 Enzymes | Corning, Sigma-Aldrich | Individual isoforms for clean CYP inhibition and reaction phenotyping studies. |

| CYP450 Fluorogenic Probe Substrates | Promega, Thermo Fisher | Enzyme-specific probes that yield fluorescent metabolites for high-throughput inhibition screening. |

| hERG-Expressing Cell Lines | ChanTest (Eurofins), Thermo Fisher | Stable cell lines expressing the hERG channel for reliable patch-clamp or fluorescence assays. |

| hERG Binding Assay Kit | Eurofins DiscoverX, PerkinElmer | Non-electrophysiology, high-throughput screening for hERG channel interaction. |

| NADPH Regeneration System | Promega, Thermo Fisher | Provides essential cofactor for CYP450 and other oxidative metabolism reactions. |

| Pooled Human Liver Microsomes (pHLM) | Corning, XenoTech | Essential for in vitro metabolism (stability, inhibition) studies. |

| Rapid Equilibrium Dialysis (RED) Device | Thermo Fisher | High-throughput tool for assessing plasma protein binding (PPB). |

Within a thesis focused on developing robust Quantitative Structure-Activity Relationship (QSAR) models for predicting Absorption, Distribution, Metabolism, and Excretion (ADME) properties, the selection and quality of input data are paramount. This document details the essential components—chemical descriptors, molecular fingerprints, and curated experimental datasets—and provides application notes and protocols for their effective use in computational ADME research.

Chemical Descriptors: Categories and Applications

Chemical descriptors are numerical representations of molecular properties. For ADME-QSAR, descriptors quantifying lipophilicity, polarity, size, and flexibility are critical.

Table 1: Key Descriptor Categories for ADME-QSAR

| Category | Example Descriptors | Relevance to ADME Property |

|---|---|---|

| Constitutional | Molecular Weight, Number of Rotatable Bonds, Heavy Atom Count | Solubility, Permeability, Metabolism |

| Topological | Wiener Index, Zagreb Index, Connectivity Indices | Membrane penetration, Bioavailability |

| Electrostatic | Partial Charges, Dipole Moment, Polar Surface Area (TPSA) | Solubility, CYP450 metabolism, BBB penetration |

| Quantum Chemical | HOMO/LUMO energies, Ionization Potential, Electronegativity | Reactivity, Metabolic transformation |

| Geometrical | Principal Moments of Inertia, Molecular Volume | Shape-based recognition by transporters |

Protocol: Calculating a Standard Descriptor Set with RDKit

Objective: Generate a comprehensive set of 2D and 3D molecular descriptors for a dataset of SMILES strings.

Materials: Python environment with RDKit, Pandas; dataset in .sdf or .csv format.

Procedure:

- Data Loading: Read the chemical structures (e.g., from a SMILES column in a CSV file) using pandas and convert them into RDKit molecule objects.

- Add Hydrogens & Generate 3D Conformations: For 3D descriptors, generate a low-energy conformation.

- Descriptor Calculation: Iterate over molecules and calculate descriptors using built-in functions.
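A minimal sketch of these three steps with RDKit is given below. The two example molecules, the helper name `describe`, and the particular descriptors chosen are illustrative assumptions; an in-memory DataFrame stands in for the CSV file so the example is self-contained.

```python
import pandas as pd
from rdkit import Chem
from rdkit.Chem import AllChem, Descriptors, Descriptors3D

# Stand-in for pd.read_csv(...) with a SMILES column.
data = pd.DataFrame({
    "name": ["aspirin", "caffeine"],
    "smiles": ["CC(=O)Oc1ccccc1C(=O)O", "Cn1cnc2c1c(=O)n(C)c(=O)n2C"],
})

def describe(smiles):
    """Return a dict of a few 2D and 3D descriptors for one SMILES string."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles}")
    row = {
        "MolWt": Descriptors.MolWt(mol),
        "LogP": Descriptors.MolLogP(mol),
        "TPSA": Descriptors.TPSA(mol),
        "RotB": Descriptors.NumRotatableBonds(mol),
    }
    # 3D descriptors need explicit hydrogens and an embedded conformer.
    mol_h = Chem.AddHs(mol)
    if AllChem.EmbedMolecule(mol_h, randomSeed=42) == 0:  # -1 signals embedding failure
        AllChem.MMFFOptimizeMolecule(mol_h)               # quick force-field relaxation
        row["RadiusOfGyration"] = Descriptors3D.RadiusOfGyration(mol_h)
        row["Asphericity"] = Descriptors3D.Asphericity(mol_h)
    return row

descriptors = pd.DataFrame([describe(s) for s in data["smiles"]], index=data["name"])
print(descriptors.round(2))
```

A single embedded conformer is a pragmatic shortcut. 3D descriptors depend on the conformation, so a fixed random seed (as here) or a conformer ensemble helps keep the values reproducible.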

Molecular Fingerprints: Types and Use Cases

Fingerprints are bit vectors representing the presence or absence of molecular features. They are essential for similarity searching and as input for machine learning models.

Table 2: Common Fingerprint Types in ADME Prediction

| Fingerprint Type | Generation Method (Example) | Length | Typical Application in QSAR |

|---|---|---|---|

| Extended Connectivity (ECFP) | RDKit: AllChem.GetMorganFingerprintAsBitVect(mol, radius=2) | 1024, 2048 | "Circular" fingerprints; core for many ML models. |

| MACCS Keys | RDKit: MACCSkeys.GenMACCSKeys(mol) | 167 | Substructure keys; fast similarity screening. |

| PubChem Fingerprint (PubChemFP) | PaDEL-Descriptor or CDK (not generated by RDKit) | 881 | Broad coverage of PubChem substructures. |

| Atom Pairs & Topological Torsions | RDKit: rdMolDescriptors.GetHashedAtomPairFingerprintAsBitVect(mol), rdMolDescriptors.GetHashedTopologicalTorsionFingerprintAsBitVect(mol) | Variable | Capture distance between atoms; useful for scaffold hopping. |

| RDKit Topological Fingerprint | RDKit: Chem.RDKFingerprint(mol) | 2048 | Default hashed path-based fingerprint. |

Protocol: Generating and Comparing Fingerprints for Similarity Analysis

Objective: Calculate Tanimoto similarity between a query molecule and a library using ECFP4 fingerprints.

Procedure:

- Generate Fingerprints: For the query molecule and all molecules in the library, compute ECFP4 bit vectors.

- Calculate Similarities: Compute pairwise Tanimoto coefficients.

- Identify Nearest Neighbors: Sort the library based on similarity scores.
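The procedure can be sketched with RDKit as shown below. The query and the four-compound library are illustrative assumptions. The example uses RDKit's fingerprint-generator interface (`rdFingerprintGenerator.GetMorganGenerator`), which with radius 2 produces Morgan bit vectors corresponding to ECFP4.

```python
from rdkit import Chem, DataStructs
from rdkit.Chem import rdFingerprintGenerator

# Morgan generator with radius 2 (ECFP4-like), hashed to 2048 bits.
generator = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048)

def ecfp4(smiles):
    """Return a 2048-bit Morgan (radius 2) fingerprint for a SMILES string."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles}")
    return generator.GetFingerprint(mol)

query_fp = ecfp4("CC(=O)Oc1ccccc1C(=O)O")  # aspirin as the query

library = {
    "aspirin": "CC(=O)Oc1ccccc1C(=O)O",
    "salicylic acid": "O=C(O)c1ccccc1O",
    "benzoic acid": "O=C(O)c1ccccc1",
    "caffeine": "Cn1cnc2c1c(=O)n(C)c(=O)n2C",
}
names = list(library)
library_fps = [ecfp4(library[name]) for name in names]

# Tanimoto = shared on-bits / total distinct on-bits, computed for the whole library at once.
scores = DataStructs.BulkTanimotoSimilarity(query_fp, library_fps)
ranked = sorted(zip(names, scores), key=lambda pair: pair[1], reverse=True)
for name, score in ranked:
    print(f"{name:15s} {score:.2f}")
```

The same nearest-neighbor similarity is often reused as a simple applicability-domain check: a new compound whose best match in the training set has a low Tanimoto score is being predicted by extrapolation.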

High-Quality Experimental Datasets: The ChEMBL Database

Public repositories like ChEMBL provide curated, high-throughput screening and ADME data, essential for training and validating predictive models.

Table 3: Key ADME/Tox Assay Data Available in ChEMBL (as of 2023)

| Assay Type | Typical Measurement | ChEMBL Assay Classification | Example Target/Process |

|---|---|---|---|

| Solubility | Kinetic/Intrinsic Solubility (µg/mL) | ADME | Thermodynamic solubility |

| Permeability | Papp (x10⁻⁶ cm/s) in Caco-2, MDCK | ADME | Intestinal absorption |

| Microsomal Stability | % Remaining after incubation | ADME | Hepatic Phase I metabolism |

| Cytochrome P450 Inhibition | IC50 (nM) for CYP1A2, 2C9, 2D6, 3A4 | Tox | Drug-drug interaction potential |

| hERG Inhibition | IC50 (nM) in patch-clamp assay | Tox | Cardiac liability (QT prolongation) |

| Plasma Protein Binding | % Bound | ADME | Volume of distribution, free fraction |

Protocol: Extracting and Preprocessing ADME Data from ChEMBL

Objective: Retrieve a clean, machine-learning-ready dataset for human liver microsomal stability.

Materials: chembl_webresource_client Python library, Pandas, NumPy.

Procedure:

- Connect and Search: Query ChEMBL for target-specific assays.

- Data Curation: Filter for relevant data, handle missing values, and standardize units.

- Fetch Structures: Retrieve canonical SMILES for the curated compound list.
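The curation step is where most of the effort goes, and it can be illustrated offline with pandas. In the sketch below the records are hypothetical: they only mimic the shape of ChEMBL activity rows (fields such as `standard_value`, `standard_units`, and `standard_relation`), and half-life is used as the stability endpoint purely for the example. Retrieval itself would go through `chembl_webresource_client` and is not shown because it requires network access.

```python
import pandas as pd

# Hypothetical records shaped like ChEMBL activity rows (IDs and values are made up).
records = pd.DataFrame({
    "molecule_chembl_id": ["CHEMBL_A", "CHEMBL_A", "CHEMBL_B", "CHEMBL_C", "CHEMBL_D"],
    "canonical_smiles": ["CCO", "CCO", "c1ccccc1", None, "CCN"],
    "standard_relation": ["=", "=", "=", "=", ">"],
    "standard_value": ["30", "0.7", "1.5", "12", "60"],
    "standard_units": ["min", "hr", "hr", "min", "min"],
})

to_minutes = {"min": 1.0, "hr": 60.0}  # unit conversion factors to minutes

df = records.copy()
df["standard_value"] = pd.to_numeric(df["standard_value"], errors="coerce")
df = df.dropna(subset=["canonical_smiles", "standard_value"])  # need structure and value
# Keep exact measurements in recognized units; censored values (">", "<") are dropped.
df = df[(df["standard_relation"] == "=") & df["standard_units"].isin(to_minutes)].copy()
df["t_half_min"] = df["standard_value"] * df["standard_units"].map(to_minutes)

# Collapse replicate measurements to one median value per compound.
curated = df.groupby(
    ["molecule_chembl_id", "canonical_smiles"], as_index=False
)["t_half_min"].median()
print(curated)
```

Dropping censored values is a simplification. For stability data, ">" results often mark the most stable compounds, so keeping them as a separate class can be more informative than discarding them.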

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Resources for ADME-QSAR Data Workflow

| Item/Category | Example/Source | Function in Research |

|---|---|---|

| Cheminformatics Toolkit | RDKit (Open Source), Schrödinger Suite, OpenBabel | Core library for molecule manipulation, descriptor/fingerprint calculation, and file format conversion. |

| Database Access Client | chembl_webresource_client (Python) | Programmatic access to retrieve curated bioactivity data from the ChEMBL database. |

| Descriptor Calculation Suite | PaDEL-Descriptor, Mordred | Standalone or library-based tools to calculate thousands of molecular descriptors in batch. |

| Toxicity/PK Prediction Service | pkCSM, ProTox-II (Web Servers) | Quick validation benchmarks for preliminary ADME/Tox predictions. |

| Data Standardization Tool | MolVS (Molecular Validation and Standardization) | Ensures chemical structure consistency (e.g., neutralization, tautomer canonicalization) before modeling. |

| Curated Public Dataset | Therapeutics Data Commons (TDC) ADME Benchmarks | Provides pre-split, curated datasets for fair benchmarking of ADME prediction models. |

Visualization of the Integrated ADME-QSAR Data Workflow

Diagram Title: Integrated Data Pipeline for ADME-QSAR Model Development

Diagram Title: Key Descriptor-ADME Property Relationships for Modeling

Within the development of robust QSAR models for ADME (Absorption, Distribution, Metabolism, and Excretion) property prediction, the regulatory context is paramount. The ICH (International Council for Harmonisation) M7 and M9 guidelines provide the critical framework governing the use of in silico approaches for assessing mutagenic impurities and biopharmaceutics, respectively. These guidelines formalize the role of (Q)SAR as a key component in the safety and efficacy assessment of pharmaceuticals, moving it from a research tool to a regulatory-accepted methodology.

ICH M7 & QSAR for Mutagenic Impurity Assessment

ICH M7 (R2) provides a framework for the assessment and control of DNA-reactive (mutagenic) impurities to limit potential carcinogenic risk. (Q)SAR methodologies are formally recognized under this guideline for predicting the outcome of bacterial mutagenicity (Ames test) studies.

2.1 Core Regulatory Principles & Data Requirements

- Predictive Models: Two (Q)SAR prediction methodologies that complement each other by using different rules and/or training sets must be employed.

- Expert Review: A knowledge-based expert review is required to resolve any conflicting predictions and provide a final, reasoned conclusion.

- Acceptable Predictions: A compound is considered of no concern only if both models provide a negative prediction for mutagenicity.

- Threshold of Toxicological Concern (TTC): A default TTC of 1.5 µg/day intake of a mutagenic impurity is considered an acceptable risk for most pharmaceuticals.

Table 1: ICH M7 (Q)SAR Prediction Outcomes and Regulatory Actions

| Prediction Outcome (Model 1 / Model 2) | Expert Review Conclusion | Required Regulatory Action (Control Strategy) |

|---|---|---|

| Negative / Negative | Non-mutagenic | Treat as a non-mutagenic impurity and control according to ICH Q3A/Q3B thresholds. |

| Positive / Negative | Inconclusive; requires structural assessment | Typically treated as positive. Control at or below the TTC (1.5 µg/day) or conduct a bacterial mutagenicity assay. |

| Positive / Positive | Mutagenic | Classify as a "mutagenic impurity." Strict control at or below the TTC is required. Purge or justify higher levels. |
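
The decision logic in Table 1 can be sketched as a small lookup. This is only an illustration: the names `Call`, `provisional_outcome`, and `CONTROL_STRATEGY` are hypothetical, the strategy strings paraphrase the table rather than quote the guideline, and the provisional outcome still has to be confirmed by expert review.

```python
from enum import Enum


class Call(Enum):
    NEGATIVE = "negative"
    POSITIVE = "positive"


TTC_UG_PER_DAY = 1.5  # default TTC quoted in the text above

# Paraphrased from Table 1; hypothetical wording, not regulatory text.
CONTROL_STRATEGY = {
    "non-mutagenic": "control as an ordinary (non-mutagenic) impurity",
    "inconclusive": "treat as positive: control at or below the TTC, or run an Ames test",
    "mutagenic": "control at or below the TTC",
}


def provisional_outcome(expert_rule_call: Call, statistical_call: Call) -> str:
    """Combine two complementary (Q)SAR calls into a provisional outcome."""
    calls = {expert_rule_call, statistical_call}
    if calls == {Call.NEGATIVE}:
        return "non-mutagenic"
    if calls == {Call.POSITIVE}:
        return "mutagenic"
    return "inconclusive"  # the two models disagree; expert review decides


outcome = provisional_outcome(Call.POSITIVE, Call.NEGATIVE)
print(outcome, "->", CONTROL_STRATEGY[outcome])
```

Keeping the provisional call separate from the control strategy makes it easy to record an expert override without touching the model outputs.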

2.2 Protocol: Standardized (Q)SAR Workflow for ICH M7 Compliance

- Step 1: Structure Preparation. Generate a canonical, unambiguous 2D chemical structure (e.g., SMILES notation) of the impurity. Check for tautomers, stereochemistry, and salt forms.

- Step 2: Dual Model Prediction. Submit the prepared structure to two complementary (Q)SAR prediction tools. Commonly used suites include:

- Lhasa Ltd.'s Derek Nexus (expert rule-based) and Sarah Nexus (statistical-based).

- U.S. EPA's TEST and MultiCASE Inc.'s MC4PC or CASE Ultra.

- Step 3: Expert Knowledge-Based Review. A trained toxicologist reviews all predictions, considering:

- Applicability domain of the models.

- Presence of structural alerts and their relevance.

- Conflicting predictions and underlying mechanistic rationale.

- Available experimental data on analogues (read-across).

- Step 4: Documentation and Reporting. Create a comprehensive report detailing the chemical structure, software/versions used, all predictions, the expert rationale, and the final conclusion for regulatory submission.

Title: ICH M7 QSAR Assessment Workflow

ICH M9 & QSAR for Biopharmaceutics Classification

ICH M9 provides guidance on the biopharmaceutics classification of APIs based on solubility and permeability, enabling biowaivers. While primarily focused on in vitro methods, the guideline acknowledges the potential use of in silico models, including QSAR, for permeability prediction as supporting evidence.

3.1 Key Data and Model Considerations for Permeability Prediction

For a QSAR model's prediction to hold regulatory weight under ICH M9, it must be scientifically justified.

- Model Validation: The model must be built and validated using high-quality experimental data (e.g., human intestinal permeability, Caco-2 assay).

- Applicability Domain: The chemical space of the drug candidate must fall within the model's applicability domain.

- Endpoint Correlation: The predicted endpoint must be scientifically linked to human intestinal permeability (e.g., predicting Papp in Caco-2 cells or log Peff).

Table 2: Comparison of ICH M7 and ICH M9 QSAR Applications

| Aspect | ICH M7 (Mutagenicity) | ICH M9 (Permeability) |

|---|---|---|

| Primary Role of QSAR | Primary, regulatory-accepted method for hazard identification. | Supportive evidence, not a standalone method for classification. |

| Regulatory Expectation | Mandatory use of two complementary models + expert review. | Use is optional and must be scientifically justified. |

| Key Endpoint Predicted | Bacterial mutagenicity (Ames test outcome). | Human intestinal permeability (e.g., high/low). |

| Typical Model Types | Expert rule-based (Derek) & statistical (Sarah, MCASE). | Statistical/ML models (e.g., PLS, Random Forest, ANN). |

3.2 Protocol: Developing a QSAR Model for Permeability Prediction (Research Context)

- Step 1: Data Curation. Compile a dataset of compounds with reliable experimental human intestinal permeability values or robust surrogate measures (e.g., Caco-2 Papp, % absorbed in humans). Critical: Apply rigorous data quality checks and normalization.

- Step 2: Descriptor Calculation & Selection. Calculate molecular descriptors (e.g., topological, electronic, thermodynamic) using software like PaDEL-Descriptor, RDKit, or Dragon. Use feature selection techniques (e.g., genetic algorithm, stepwise regression) to reduce dimensionality and avoid overfitting.

- Step 3: Model Building & Internal Validation. Split data into training and test sets (e.g., 80/20). Apply machine learning algorithms (e.g., Support Vector Machine, Random Forest, Partial Least Squares Regression). Validate using cross-validation (e.g., 5-fold) and report key metrics: R², Q², RMSE.

- Step 4: External Validation & AD Definition. Validate the model using a completely external compound set. Define the Applicability Domain using methods like leverage (Williams plot) or distance-based measures (e.g., Euclidean distance in descriptor space).

- Step 5: Regulatory Context Application. For ICH M9 context, use the model to predict permeability class for novel compounds within its AD. This prediction should be used in conjunction with, or to guide, experimental studies.
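
As an illustration of the distance-based applicability domain mentioned in Step 4, the sketch below flags a query compound when its mean Euclidean distance to the k nearest training compounds exceeds a cutoff. The cutoff rule (mean plus z times the standard deviation of the training-set distances), the values of `k` and `z`, and all function names are assumptions chosen for the example; descriptors are assumed to be scaled beforehand.

```python
import math
import statistics


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def mean_knn_distance(query, reference, k):
    """Mean distance from `query` to its k nearest rows in `reference`."""
    nearest = sorted(euclidean(query, row) for row in reference)[:k]
    return sum(nearest) / len(nearest)


def fit_ad_threshold(train, k=2, z=0.5):
    """Cutoff = mean + z * std of each training compound's kNN distance."""
    scores = []
    for i, row in enumerate(train):
        others = train[:i] + train[i + 1:]  # exclude the compound itself
        scores.append(mean_knn_distance(row, others, k))
    return statistics.mean(scores) + z * statistics.pstdev(scores)


def in_domain(query, train, threshold, k=2):
    return mean_knn_distance(query, train, k) <= threshold


# Toy, already-scaled descriptor vectors (made up for the example).
train = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
threshold = fit_ad_threshold(train)
print(in_domain([0.5, 0.5], train, threshold))
print(in_domain([5.0, 5.0], train, threshold))
```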

Title: QSAR Model Development for ADME Prediction

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item / Solution | Function in QSAR/ADME Research |

|---|---|

| Commercial (Q)SAR Software Suites (e.g., Derek Nexus, Sarah Nexus, MCASE, StarDrop) | Provide regulatory-accepted, pre-validated prediction platforms for endpoints like mutagenicity (ICH M7) and ADME properties. Essential for standardized screening. |

| Molecular Descriptor Calculation Tools (e.g., RDKit (Open Source), PaDEL-Descriptor, Dragon) | Generate numerical representations of chemical structures (descriptors) which are the input variables for building QSAR models. |

| Machine Learning Libraries (e.g., scikit-learn (Python), caret (R)) | Provide algorithms (Random Forest, SVM, PLS) and validation frameworks for building and testing predictive QSAR models in-house. |

| High-Quality Experimental ADME-Tox Databases (e.g., ChEMBL, PubChem BioAssay, Lhasa Ltd. Vitic) | Serve as critical sources of curated biological data for model training, validation, and read-across assessments. |

| Chemical Structure Drawing & Standardization Tools (e.g., ChemDraw, KNIME with RDKit nodes) | Ensure input chemical structures are accurate, canonicalized, and suitable for descriptor calculation and prediction. |

| Applicability Domain Assessment Scripts/Codes | Custom or published scripts to calculate the domain of a QSAR model (e.g., using leverage, distance measures), a mandatory step for reliable prediction. |

Building & Applying QSAR Models: Algorithms, Workflows, and Real-World Use Cases

Within the broader thesis on Quantitative Structure-Activity Relationship (QSAR) modeling for ADME (Absorption, Distribution, Metabolism, Excretion) property prediction, the selection and application of robust machine learning algorithms are paramount. This document provides detailed Application Notes and Protocols for four cornerstone algorithms: Partial Least Squares (PLS), Random Forest (RF), Support Vector Machines (SVM), and Deep Neural Networks (DNN). These tools form a complementary toolkit, ranging from interpretable linear models to high-capacity nonlinear predictors, enabling researchers to tackle diverse ADME endpoints with varying data characteristics.

Table 1 summarizes the core characteristics, typical applications in ADME, and benchmark performance metrics for the four algorithms based on recent literature (2022-2024). Performance is generalized across common ADME tasks like human liver microsomal (HLM) stability, Caco-2 permeability, and hERG inhibition.

Table 1: Algorithm Toolkit for ADME-QSAR Modeling

| Algorithm | Core Principle | Best Suited For ADME Endpoints | Key Advantages | Typical Reported Performance (Range)* | Key Limitations |

|---|---|---|---|---|---|

| Partial Least Squares (PLS) | Projects predictors and targets to a new, lower-dimensional space of latent variables to maximize covariance. | Solubility, logP, pKa (Continuous). Early-stage screening with few samples. | High interpretability, robust to multicollinearity, works well with limited data (n < 100). | R²: 0.65 - 0.80 RMSE: 0.50 - 0.80 (Log-scale endpoints) | Limited ability to capture complex nonlinearities. Performance plateaus with high-dimensional descriptors. |

| Random Forest (RF) | Ensemble of decision trees built on bootstrapped samples with random feature selection. | CYP inhibition, Bioavailability classification, Toxicity flags (Binary/Continuous). | Handles nonlinearity, provides feature importance, robust to outliers and irrelevant features. | AUC: 0.80 - 0.90 Accuracy: 75% - 85% (Classification) R²: 0.70 - 0.85 (Regression) | Can overfit on noisy datasets. Less interpretable than PLS. Extrapolation poor. |

| Support Vector Machine (SVM) | Finds a hyperplane that maximizes the margin between classes (classification) or fits data within a tube (regression). | Clear binary endpoints (e.g., P-gp substrate/non-substrate, BBB penetration). High-dimensional descriptor sets. | Effective in high-dimensional spaces, strong theoretical foundation, good generalization with right kernel. | AUC: 0.85 - 0.93 Accuracy: 78% - 88% (Classification) | Computationally intensive for large datasets (>10k). Kernel and parameter choice is critical. |

| Deep Neural Network (DNN) | Multiple layers of interconnected neurons (nodes) that learn hierarchical feature representations. | Complex, multifactorial endpoints (e.g., in vivo clearance, volume of distribution). Large, diverse chemical datasets (>10k compounds). | Highest capacity for learning complex patterns, can model raw structures (SMILES) via graph NNs. | R²: 0.75 - 0.90 AUC: 0.88 - 0.95 (State-of-the-art on large benchmarks) | "Black box" nature. Requires very large data, extensive hyperparameter tuning, and significant computational resources. |

*Performance metrics are highly dataset-dependent. R²: Coefficient of Determination; RMSE: Root Mean Square Error; AUC: Area Under the ROC Curve.

Detailed Experimental Protocols

Protocol 3.1: Standardized Model Development Workflow for ADME-QSAR

- Objective: To establish a reproducible pipeline for developing, validating, and comparing PLS, RF, SVM, and DNN models for a given ADME endpoint.

- Materials: See "Research Reagent Solutions" section.

- Procedure:

- Dataset Curation: Compile a chemically diverse dataset with experimentally measured ADME properties from reliable sources (e.g., ChEMBL, PubChem). Apply stringent curation: remove duplicates, correct units, flag experimental errors.

- Descriptor Calculation & Data Preprocessing: Calculate a consistent set of molecular descriptors (e.g., RDKit, Mordred) or generate molecular fingerprints for all compounds. For PLS, consider feature selection (e.g., VIP scores) to reduce dimensionality. For all models: scale features (StandardScaler for SVM/DNN; often not needed for RF).

- Data Splitting: Perform a stratified split (by activity or key structural clusters) into Training (70%), Validation (15%), and hold-out Test (15%) sets. The Test set must only be used for final evaluation.

- Model Training & Hyperparameter Optimization:

- Use the Training set and 5-fold cross-validation (CV) to optimize hyperparameters via grid or random search.

- PLS: Optimize number of latent components.

- RF: Optimize number of trees (n_estimators), max tree depth (max_depth), min_samples_split.

- SVM: Optimize regularization parameter (C), kernel coefficient (gamma for RBF kernel), kernel type.

- DNN: Optimize architecture (# layers, # nodes/layer), learning rate, dropout rate, batch size.

- Model Validation: Train final model with optimal hyperparameters on the full Training set. Evaluate on the Validation set to check for overfitting.

- Final Evaluation & Interpretation: Apply the finalized model to the held-out Test set. Report standard metrics (R², RMSE, MAE for regression; AUC, Accuracy, Precision, Recall for classification). For PLS/RF, analyze variable importance. For DNN, consider SHAP or LIME for interpretability.

- Applicability Domain (AD) Assessment: Define the AD using methods like leverage (for PLS) or distance-based metrics (for RF/SVM/DNN) to flag predictions for compounds far from the training space.
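
The 70/15/15 stratified split from the procedure above can be sketched in plain Python. The function name `stratified_split` and the toy labels are hypothetical. In practice the same effect is usually obtained with scikit-learn's `train_test_split` and its `stratify` argument, applied twice.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return index lists (train, valid, test) that preserve class ratios."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, valid, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_valid = round(fractions[1] * len(indices))
        train += indices[:n_train]
        valid += indices[n_train:n_train + n_valid]
        test += indices[n_train + n_valid:]  # remainder is the hold-out set
    return train, valid, test


# Toy labels: 40 actives and 60 inactives (made up for the example).
labels = [1] * 40 + [0] * 60
train_idx, valid_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(valid_idx), len(test_idx))
```

Fixing the seed keeps the hold-out set identical across algorithm comparisons, which is what allows PLS, RF, SVM, and DNN results to be compared on equal terms.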

Protocol 3.2: Consensus Modeling Protocol

- Objective: To improve prediction robustness by combining predictions from the four algorithms.

- Procedure:

- Develop optimized PLS, RF, SVM, and DNN models for the same endpoint using Protocol 3.1.

- Generate predictions for an external validation set using each model.

- Compute the consensus prediction. For regression: use the median of the four predictions. For classification: use majority voting or the average of class probabilities.

- Evaluate consensus model performance against individual models. The consensus often shows reduced variance and improved reliability for compounds within the collective AD of all models.
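
A minimal sketch of the consensus step, assuming each model's predictions are already available as equal-length lists. The prediction values are invented for the example, and the 0.5 probability cutoff is an assumption.

```python
from statistics import mean, median


def consensus_regression(predictions):
    """Median across models for each compound (dict: model name -> list)."""
    return [median(values) for values in zip(*predictions.values())]


def consensus_classification(probabilities, cutoff=0.5):
    """Average the class-1 probabilities, then apply the cutoff."""
    return [int(mean(values) >= cutoff) for values in zip(*probabilities.values())]


# Invented predictions for two compounds from the four models.
reg_preds = {
    "PLS": [1.0, 2.0],
    "RF": [1.2, 2.4],
    "SVM": [0.9, 2.1],
    "DNN": [1.5, 2.2],
}
clf_probs = {
    "PLS": [0.8, 0.4],
    "RF": [0.7, 0.3],
    "SVM": [0.6, 0.6],
    "DNN": [0.9, 0.2],
}
print(consensus_regression(reg_preds))
print(consensus_classification(clf_probs))
```

With an even number of models the median is the mean of the two middle predictions, so a single outlying model has limited influence on the consensus value.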

Visualization and Workflows

Title: ADME-QSAR Model Development and Validation Workflow

Title: Consensus Modeling Strategy for ADME Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for ADME-QSAR Modeling

| Item/Category | Example (Specific Tool/Library) | Function in ADME-QSAR Research |

|---|---|---|

| Chemical Database | ChEMBL, PubChem BioAssay | Primary source for curated, experimental ADME/Tox data for model training and validation. |

| Descriptor Calculation | RDKit, Mordred, PaDEL-Descriptor | Computes numerical representations (descriptors) of molecular structures (e.g., topological, electronic). |

| Fingerprint Generator | RDKit, DeepChem | Generates molecular fingerprints (e.g., ECFP, MACCS) for similarity searching and as model input. |

| Machine Learning Core | scikit-learn (Python) | Provides robust, standardized implementations of PLS, RF, SVM, and essential data preprocessing utilities. |

| Deep Learning Framework | TensorFlow/Keras, PyTorch, DeepChem | Enables the construction, training, and deployment of complex DNN and graph neural network architectures. |

| Hyperparameter Optimization | scikit-learn (GridSearchCV), Optuna, Hyperopt | Automates the search for optimal model parameters to maximize predictive performance. |

| Model Interpretation | SHAP, LIME, scikit-learn feature_importances_ | Provides post-hoc explanations for "black-box" models (especially DNN/RF), crucial for scientific insight. |

| Applicability Domain | scikit-learn PCA, BallTree/KDTree | Methods to define the chemical space of the training set and flag unreliable extrapolations. |

| Cheminformatics Platform | KNIME, Pipeline Pilot | Offers visual, workflow-based environments for integrating and automating the entire QSAR modeling pipeline. |

The development of robust Quantitative Structure-Activity Relationship (QSAR) models for predicting Absorption, Distribution, Metabolism, and Excretion (ADME) properties is a critical pillar in modern drug discovery. This protocol details an end-to-end computational workflow, framed within a broader thesis aiming to increase the reliability and regulatory acceptance of in silico ADME models. The focus is on creating reproducible, well-documented, and chemically meaningful models that can effectively prioritize compounds for synthesis and in vitro testing.

Application Notes & Detailed Protocols

Phase I: Dataset Curation & Preparation

The foundation of any predictive QSAR model is a high-quality, chemically diverse, and accurately labeled dataset.

Protocol 2.1.1: Data Collection and Standardization

- Source Identification: Gather experimental ADME endpoint data (e.g., intrinsic clearance, P-gp efflux ratio, solubility) from reliable public databases (e.g., ChEMBL, PubChem BioAssay) and proprietary in-house studies.

- Data Aggregation: Compile data into a single structured table. Essential columns include: Canonical SMILES, Compound ID, Experimental Endpoint Name, Experimental Value, Unit, and Data Source.

- Standardization:

- Structures: Using a toolkit like RDKit, standardize all molecular structures (SMILES). This includes:

- Neutralizing charges (where appropriate for the endpoint).

- Removing salts and solvents.

- Generating canonical tautomers.

- Aromatization.

- Activity Values: Convert all values to a consistent unit (e.g., log units for concentrations). For categorical endpoints (e.g., substrate/non-substrate), apply consistent labeling.

- Duplicate Handling: Identify records for the same compound/endpoint pair. Apply a predefined rule (e.g., retain the mean value, or the value from the most trusted source) to resolve conflicts.
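
One possible duplicate-handling rule is sketched below: values are converted to log10 units, replicate measurements of the same structure are averaged, and structures whose replicates disagree by more than a chosen spread are flagged for manual review rather than averaged. The 0.5 log-unit tolerance, the function name, and the toy records are assumptions. The SMILES keys are assumed to be canonical already.

```python
import math
from collections import defaultdict
from statistics import mean


def resolve_duplicates(records, max_log_spread=0.5):
    """records: iterable of (canonical_smiles, value in consistent linear units)."""
    grouped = defaultdict(list)
    for smiles, value in records:
        grouped[smiles].append(math.log10(value))

    curated, flagged = {}, []
    for smiles, logs in grouped.items():
        if max(logs) - min(logs) > max_log_spread:
            flagged.append(smiles)         # discordant replicates: review by hand
        else:
            curated[smiles] = mean(logs)   # mean of the log-transformed values
    return curated, flagged


# Toy records (values invented for the example).
records = [
    ("CCO", 10.0),
    ("CCO", 10.0),
    ("c1ccccc1", 5.0),
    ("c1ccccc1", 500.0),
    ("CCN", 100.0),
]
curated, flagged = resolve_duplicates(records)
print(curated, flagged)
```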

Protocol 2.1.2: Chemical Space Analysis and Splitting

- Descriptor Calculation: Calculate a set of simple 2D molecular descriptors (e.g., molecular weight, LogP, number of rotatable bonds) for all standardized compounds.

- Similarity Analysis: Generate a molecular fingerprint (e.g., Morgan fingerprint, radius=2) for each compound. Compute the pairwise Tanimoto similarity matrix.

- Dataset Splitting: Use a structure-based splitting method on the principal components derived from the fingerprints/descriptors. Kennard-Stone and Sphere Exclusion select a training set that spans the chemical space, so test compounds fall within the region the model has seen. To assess predictivity on novel chemotypes, use a cluster- or scaffold-based split instead, which keeps structurally similar compounds together in either the training or the test set.

- Standard Split: 70-80% Training Set, 20-30% External Test Set (locked away until final model evaluation).

- Training Set Sub-split: Use cross-validation (e.g., 5-fold) on the training set for hyperparameter tuning.
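
The similarity calculation and a Kennard-Stone-style selection can be sketched as follows. As a simplification, the sketch works directly on Tanimoto distances between fingerprints (represented as sets of on-bit indices) instead of on principal components, and the toy fingerprints are invented. In a real workflow the bit sets would come from a toolkit such as RDKit.

```python
from itertools import combinations


def tanimoto(a, b):
    """Tanimoto similarity between two sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def kennard_stone(fps, n_select):
    """Pick n_select diverse compounds; the rest form the held-out set."""
    def dist(i, j):
        return 1.0 - tanimoto(fps[i], fps[j])

    # Start from the two most distant compounds.
    first, second = max(combinations(range(len(fps)), 2), key=lambda p: dist(*p))
    selected = [first, second]
    remaining = [i for i in range(len(fps)) if i not in selected]

    # Repeatedly add the compound farthest from everything already selected.
    while len(selected) < n_select and remaining:
        nxt = max(remaining, key=lambda i: min(dist(i, j) for j in selected))
        selected.append(nxt)
        remaining.remove(nxt)
    return selected, remaining


# Toy fingerprints as sets of on bits (invented for the example).
fps = [{1, 2, 3}, {1, 2, 4}, {7, 8, 9}, {7, 8, 10}, {1, 2, 3, 4}]
train_idx, test_idx = kennard_stone(fps, n_select=3)
print(train_idx, test_idx)
```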

Table 1: Example Curated Dataset for Human Liver Microsomal (HLM) Stability

| Compound ID | Canonical SMILES | HLM Clint (µL/min/mg) | log(HLM Clint) | Source | Set Assignment |

|---|---|---|---|---|---|

| CID_1234 | CC(=O)Oc1ccccc1C(=O)O | 25.6 | 1.41 | ChEMBL | Training |

| CID_5678 | CN1C=NC2=C1C(=O)N(C(=O)N2C)C | 5.2 | 0.72 | In-house | Training |

| CID_9012 | C1=CC(=C(C=C1Cl)Cl)Br | 120.5 | 2.08 | PubChem | Test |

Phase II: Molecular Descriptor Calculation & Selection

Descriptors translate chemical structure into numerically quantifiable features.

Protocol 2.2.1: Comprehensive Descriptor Calculation

- Tool Selection: Utilize cheminformatics software (e.g., RDKit, PaDEL-Descriptor, Mordred) to calculate descriptors.

- Descriptor Types:

- 1D/2D Descriptors: Constitutional, topological, electronic, and molecular property descriptors (e.g., counts of atoms/bonds, topological polar surface area (TPSA), LogP).

- 3D Descriptors: Based on optimized 3D conformations (e.g., WHIM, GETAWAY). Note: Requires conformational generation and minimization, which is computationally intensive.

- Fingerprints: Binary or count-based representations of substructural features (e.g., MACCS keys, Extended Connectivity Fingerprints - ECFP).

- Pre-processing: Handle missing values and errors (e.g., remove descriptors with >15% missing values, impute or remove remaining). Standardize (scale) all continuous descriptors.

Protocol 2.2.2: Descriptor Filtering and Selection

- Low Variance Filter: Remove descriptors with near-zero variance across the dataset.

- Correlation Filter: For highly correlated descriptor pairs (|r| > 0.95), retain one to reduce redundancy.

- Feature Selection: Apply methods like Recursive Feature Elimination (RFE) or LASSO (L1 regularization) embedded in model training to identify the most predictive subset of descriptors. This step is performed only on the training set cross-validation folds to avoid data leakage.
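
The low-variance and correlation filters can be sketched in plain Python as below. The function names, the variance cutoff, and the toy descriptor columns are assumptions. The correlation filter keeps the first descriptor of each highly correlated pair, which is one of several reasonable conventions.

```python
import math
from statistics import mean, pvariance


def pearson(x, y):
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def filter_descriptors(columns, min_variance=1e-8, max_abs_r=0.95):
    """columns: dict of descriptor name -> list of values (training set only)."""
    kept = []
    for name, values in columns.items():
        if pvariance(values) <= min_variance:
            continue  # near-constant descriptor carries no information
        if any(abs(pearson(values, columns[k])) > max_abs_r for k in kept):
            continue  # redundant with a descriptor that was already kept
        kept.append(name)
    return kept


# Toy descriptor table (values invented for the example).
columns = {
    "MolWt": [1.0, 2.0, 3.0, 4.0],
    "HeavyAtoms": [2.0, 4.0, 6.0, 8.1],   # almost perfectly correlated with MolWt
    "Constant": [5.0, 5.0, 5.0, 5.0],     # zero variance
    "TPSA": [4.0, 1.0, 3.0, 2.0],
}
print(filter_descriptors(columns))
```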

Table 2: Key Descriptor Categories for ADME-QSAR

| Category | Example Descriptors | Relevance to ADME |

|---|---|---|

| Lipophilicity | LogP (octanol/water), LogD at pH 7.4 | Membrane permeability, distribution |

| Size & Shape | Molecular Weight, Rotatable Bond Count, PSA | Absorption, passive diffusion, transporter interaction |

| Electronics | pKa, HOMO/LUMO energies, Partial Charges | Metabolism (CYP interactions), solubility |

| Topology | Kier & Hall Indices, Wiener Index | Relates to complex molecular properties |

| Fingerprints | ECFP4, MACCS Keys | Captures substructural alerts for specific interactions |

Phase III: Model Training, Validation & Interpretation

This phase involves selecting algorithms, training models, rigorously validating them, and extracting chemical insights.

Protocol 2.3.1: Model Building and Hyperparameter Tuning

- Algorithm Selection: Choose based on dataset size and descriptor type. Common choices include:

- Random Forest (RF): Robust, handles non-linear relationships, provides feature importance.

- Gradient Boosting Machines (GBM/XGBoost): Often high performance, requires careful tuning.

- Support Vector Machines (SVM): Effective for smaller datasets with clear margins.

- Multilinear Regression (MLR): For simple, interpretable, and potentially more regulatory-friendly models.

- Hyperparameter Optimization: Use grid or random search within a cross-validation loop on the training set to find optimal model parameters (e.g., number of trees in RF, learning rate in GBM).

Protocol 2.3.2: Model Validation & Acceptance Criteria

Adhere to OECD Principle 4: "Appropriate measures of goodness-of-fit, robustness, and predictivity."

- Internal Validation: Report metrics from cross-validation (e.g., 5-fold CV): Q² and RMSE_CV.

- External Validation: Evaluate the final model, tuned on the full training set, on the locked external test set. Key metrics: R²ₑₓₜ, RMSEₑₓₜ, MAE.

- Y-Randomization: Shuffle the response variable and re-train. A significant drop in performance confirms the model is not based on chance correlation.

- Applicability Domain (AD): Define the chemical space where the model's predictions are reliable (e.g., using leverage/Williams plot or distance-based methods). Flag predictions for compounds outside the AD.
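
The regression metrics named above can be computed with a few lines of plain Python. In this sketch Q² is taken to be the same formula as R² applied to out-of-fold cross-validation predictions, and the external R² uses the mean of the evaluated set. Both are common conventions but other variants exist, so treat them as assumptions. The example values are invented.

```python
import math
from statistics import mean


def r2_score(y_true, y_pred):
    """1 - SS_res / SS_tot; gives Q2 when y_pred are out-of-fold predictions."""
    y_bar = mean(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - y_bar) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def rmse(y_true, y_pred):
    return math.sqrt(mean((t - p) ** 2 for t, p in zip(y_true, y_pred)))


def mae(y_true, y_pred):
    return mean(abs(t - p) for t, p in zip(y_true, y_pred))


# Invented observed and predicted values for four test compounds.
y_obs = [1.0, 2.0, 3.0, 4.0]
y_hat = [1.1, 1.9, 3.2, 3.8]
print(r2_score(y_obs, y_hat), rmse(y_obs, y_hat), mae(y_obs, y_hat))
```

For Y-randomization, the same functions can be applied after shuffling the response values and retraining; the metrics from the shuffled runs should be clearly worse than those of the real model.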

Protocol 2.3.3: Model Interpretation

- Feature Importance: Analyze descriptors ranked by importance (e.g., Gini importance in RF, coefficients in MLR).

- Partial Dependence Plots (PDPs): Visualize the relationship between a key descriptor and the predicted ADME outcome.

- Structural Alerts: Map important fingerprint bits or descriptor ranges back to specific chemical substructures to generate testable hypotheses.

Table 3: Example Model Performance for a Caco-2 Permeability Classifier

| Model | CV Accuracy | CV F1-Score | External Test Accuracy | External Test F1-Score | Key Descriptors (Top 3) |

|---|---|---|---|---|---|

| Random Forest | 0.85 ± 0.03 | 0.83 ± 0.04 | 0.82 | 0.80 | TPSA, LogP, Number of H-Bond Donors |

| XGBoost | 0.86 ± 0.02 | 0.84 ± 0.03 | 0.83 | 0.81 | LogP, Molar Refractivity, TPSA |

Visualizations

QSAR Model Development Workflow

Chemical Space-Based Data Splitting Strategy

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Software & Resources for ADME-QSAR Modeling

| Tool/Resource Name | Type/Category | Primary Function in Workflow |

|---|---|---|

| RDKit | Open-Source Cheminformatics Library | Core toolkit for molecular standardization, descriptor calculation, fingerprint generation, and basic modeling. |

| KNIME Analytics Platform | Visual Workflow Tool | Provides a graphical interface to build, document, and execute the entire workflow with integrated nodes for cheminformatics and machine learning. |

| PaDEL-Descriptor | Descriptor Calculation Software | Calculates a comprehensive suite of 1D, 2D, and fingerprint descriptors from chemical structures. |

| scikit-learn | Machine Learning Library (Python) | Provides a unified, well-documented API for feature selection, model training (RF, SVM, etc.), hyperparameter tuning, and validation. |

| ChEMBL Database | Public Bioactivity Database | A primary source for curated, target-focused ADME and toxicity data with standardized assay annotations. |

| OECD QSAR Toolbox | Regulatory Assessment Software | Used for profiling chemicals, identifying analogues, and filling data gaps, aligning research with regulatory frameworks. |

1. Introduction & Thesis Context

Within the broader thesis research on Quantitative Structure-Activity Relationship (QSAR) models for ADME (Absorption, Distribution, Metabolism, Excretion) property prediction, the practical integration of these models into drug discovery workflows is critical. This document provides detailed application notes and protocols for employing ADME-QSAR predictions to guide virtual screening (VS) and iterative lead optimization cycles, thereby reducing late-stage attrition due to poor pharmacokinetics.

2. Core Application Notes

2.1. Primary Workflow for ADME-Aware Virtual Screening

The contemporary virtual screening pipeline is augmented by early ADME filtration using QSAR models. This pre-filtering enriches the hit list with compounds that have a higher probability of acceptable pharmacokinetic profiles.

2.2. Key QSAR Models for Integration

The following ADME endpoints, prioritized within the thesis research, are essential for integration. Predictive models for these properties are typically built on curated in-house or commercial datasets with algorithms like Random Forest, Support Vector Machines, or Deep Neural Networks.

Table 1: Core ADME Properties for QSAR-Guided Screening & Optimization

| ADME Property | Target/Threshold for Hits | Common Descriptor Classes | Typical Model Performance (Q²/ R²ₑₓₜ) |

|---|---|---|---|

| Aqueous Solubility (logS) | > -5.0 log(mol/L) | Topological, Atom-centered fragments, LogP | 0.70 - 0.85 |

| Human Liver Microsome Stability (% remaining) | > 30% at 30 min | Molecular fingerprints, ECFP6, P450 site descriptors | 0.65 - 0.80 |

| Caco-2 Permeability (Papp, 10⁻⁶ cm/s) | > 5 (high permeability) | PSA, H-bond donors/acceptors, LogD | 0.75 - 0.82 |

| hERG Inhibition (pIC₅₀) | < 5.0 (low risk) | Positive ionizable features, Lipophilic descriptors | 0.70 - 0.78 |

| CYP3A4 Inhibition (pIC₅₀) | < 5.0 (low risk) | Molecular size, Nitrogen features, Substructure keys | 0.68 - 0.75 |

3. Detailed Experimental Protocols

3.1. Protocol: Integrated Structure- and ADME-Based Virtual Screening

Objective: To screen a large virtual compound library (e.g., 1-10 million molecules) against a target using molecular docking, followed by sequential filtration with ADME-QSAR predictions.

Materials:

- Compound library in SDF or SMILES format.

- Prepared protein target structure (PDB format).

- Docking software (e.g., AutoDock Vina, Glide, GOLD).

- Validated QSAR models for key ADME properties (see Table 1).

- Scripting environment (Python/R/KNIME).

Procedure:

- Library Preparation: Standardize the library using chemoinformatics tools (e.g., RDKit). Generate 3D conformers if required by the docking software.

- Primary Docking Screen: Execute docking against the target’s active site. Retain the top 100,000 compounds ranked by docking score.

- ADME-QSAR Prediction: For the 100,000 hits, generate molecular descriptors or fingerprints required by each QSAR model. Run predictions for: a. Solubility (logS) b. Microsomal Stability (% remaining) c. Permeability (Caco-2 Papp) d. hERG pIC₅₀

- Multi-Parameter Optimization (MPO) Scoring: Apply a desirability function or a weighted-sum score. Example MPO score = (DockScore weight * normalized DockScore) + (Solubility weight * desirability(logS)) + ...

- Hit Selection: Re-rank the library based on the MPO score. Select the top 1,000-5,000 compounds for visual inspection and purchase/testing.

- Output: A curated list of compounds with associated predicted ADME properties and MPO scores.
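
The weighted-sum MPO score from the procedure above can be sketched as follows. The linear desirability ramps, their end points, and the weights are illustrative assumptions (loosely centred on the thresholds in Table 1), the docking score is assumed to be pre-normalized to the 0-1 range, and the compound values are invented.

```python
def desirability(value, worst, best):
    """Linear ramp: 0 at `worst`, 1 at `best`, clipped outside that range."""
    score = (value - worst) / (best - worst)
    return max(0.0, min(1.0, score))


# (worst, best, weight) per property; all numbers are illustrative assumptions.
PROFILE = {
    "dock_norm": (0.0, 1.0, 2.0),    # docking score already scaled to 0..1
    "logS": (-6.0, -4.0, 1.0),
    "hlm_pct": (10.0, 50.0, 1.0),    # % remaining at 30 min
    "caco2": (1.0, 10.0, 1.0),       # Papp in 1e-6 cm/s
    "herg_pic50": (6.0, 5.0, 1.0),   # lower is better, so best < worst
}


def mpo_score(compound):
    """Weighted mean of the per-property desirabilities (0 = poor, 1 = ideal)."""
    total_weight = sum(weight for _, _, weight in PROFILE.values())
    weighted = sum(
        weight * desirability(compound[name], worst, best)
        for name, (worst, best, weight) in PROFILE.items()
    )
    return weighted / total_weight


# Invented predictions for two hypothetical hits.
hits = {
    "hit-A": {"dock_norm": 1.0, "logS": -3.5, "hlm_pct": 60.0, "caco2": 12.0, "herg_pic50": 4.5},
    "hit-B": {"dock_norm": 0.5, "logS": -5.0, "hlm_pct": 30.0, "caco2": 5.5, "herg_pic50": 5.5},
}
ranked = sorted(hits, key=lambda name: mpo_score(hits[name]), reverse=True)
print(ranked)
```

Dividing by the total weight keeps the score between 0 and 1, so scores stay comparable if properties are added or weights are changed between screening campaigns.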

3.2. Protocol: QSAR-Guided Lead Optimization Cycle

Objective: To iteratively design new analogs with improved potency and ADME properties using predictive models.

Materials:

- Chemical series of interest (core scaffold with 50-200 analogs).

- Experimental biological activity (e.g., IC₅₀) and ADME data for the series.

- QSAR model generation software (e.g., Schrödinger QikProp, MOE, in-house Python scripts).

- Medicinal chemistry design tools (e.g., for R-group enumeration).

Procedure:

- Data Curation: Assemble a dataset of tested compounds with measured in vitro potency and key ADME endpoints (e.g., metabolic stability, solubility).

- Local QSAR Model Building: For each property (Potency, Stability, etc.), build a focused QSAR model using the congeneric series data. Use leave-one-out or leave-cluster-out cross-validation.

- Virtual Analog Enumeration: Generate a virtual library of proposed analogs (e.g., 500-5,000) by systematically varying R-groups on the core scaffold.

- Prediction and Triaging: Predict the activity and ADME profile for all virtual analogs using the local models from Step 2.

- Design Selection: Apply a compound quality index (e.g., Ligand Efficiency, Lipophilic Efficiency, ADME MPO score) to rank the proposed analogs. Select 10-20 top-priority compounds for synthesis based on a balanced profile.

- Iteration: Synthesize, test, and add the new experimental data to the dataset. Rebuild/refine models and repeat the cycle.
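
The compound quality indices used in the Design Selection step can be sketched with the usual definitions: ligand efficiency as roughly 1.37 × pIC₅₀ per heavy atom (kcal/mol per heavy atom near room temperature) and lipophilic efficiency as pIC₅₀ minus logP. The LE cutoff of 0.3, the function names, and the analog values are assumptions made for the example.

```python
def ligand_efficiency(pic50, heavy_atoms):
    """Approximate LE in kcal/mol per heavy atom (1.37 ~ 2.303*R*T near 298 K)."""
    return 1.37 * pic50 / heavy_atoms


def lipophilic_efficiency(pic50, logp):
    """LipE (also called LLE): potency corrected for lipophilicity."""
    return pic50 - logp


def shortlist(analogs, n_top, min_le=0.3):
    """Drop analogs below the LE cutoff, then rank the rest by LipE."""
    efficient = [
        a for a in analogs
        if ligand_efficiency(a["pred_pic50"], a["heavy_atoms"]) >= min_le
    ]
    efficient.sort(
        key=lambda a: lipophilic_efficiency(a["pred_pic50"], a["pred_logp"]),
        reverse=True,
    )
    return efficient[:n_top]


# Invented predictions for three virtual analogs.
analogs = [
    {"id": "virtual-01", "pred_pic50": 7.8, "heavy_atoms": 28, "pred_logp": 3.9},
    {"id": "virtual-02", "pred_pic50": 8.2, "heavy_atoms": 40, "pred_logp": 5.1},
    {"id": "virtual-03", "pred_pic50": 7.1, "heavy_atoms": 24, "pred_logp": 2.2},
]
print([a["id"] for a in shortlist(analogs, n_top=2)])
```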

4. Visualization of Workflows

Diagram 1: ADME-Aware Virtual Screening Workflow

Diagram 2: Iterative QSAR-Guided Lead Optimization Cycle

5. The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Tools

| Item / Tool | Function / Purpose | Example Vendor/Software |

|---|---|---|

| Curated ADME-Tox Database | Provides high-quality experimental data for training & validating QSAR models. | ChEMBL, PubChem, in-house databases. |

| Descriptor Calculation Suite | Generates numerical representations (descriptors/fingerprints) of molecular structures for modeling. | RDKit, PaDEL-Descriptor, MOE. |

| QSAR Modeling Platform | Integrated environment for building, validating, and deploying predictive machine learning models. | KNIME, Orange Data Mining, Scikit-learn (Python). |

| Commercial ADME Prediction Suite | Provides pre-built, extensively validated models for key ADME endpoints for screening. | Schrödinger QikProp, Simulations Plus ADMET Predictor, ACD/Percepta. |

| Medicinal Chemistry Design Tool | Facilitates virtual analog enumeration and R-group analysis for lead optimization. | Cresset Flare, ChemAxon Reactor, OpenEye BROOD. |

| Multi-Parameter Optimization (MPO) Calculator | Computes composite scores balancing multiple predicted properties to rank compounds. | In-house scripts, Dotmatics, SeeSAR. |

This application note, framed within a broader thesis on QSAR models for ADME prediction, presents modern case studies where computational models successfully guided the optimization of key pharmacokinetic parameters. We detail the methodologies, data, and tools that enabled these successes for the research community.

Application Note 1: Optimization of Metabolic Stability in a Kinase Inhibitor Series

Background: A preclinical candidate for oncology exhibited poor metabolic stability in human liver microsomes (HLM), leading to high clearance and short half-life. A QSAR model was employed to guide synthesis toward improved stability.

Key Data & Results:

Table 1: QSAR-Guided Improvement of Metabolic Stability

| Compound | Generation | Microsomal Clint (µL/min/mg) | Predicted Stability Class | Half-life in vivo (rat, h) |

|---|---|---|---|---|

| Lead-0 | Initial | 120 | Low | 0.8 |

| Analog-5 | Iteration 1 | 65 | Medium | 1.9 |

| Analog-12 | Iteration 2 | 22 | High | 4.5 |

| Candidate | Final | 15 | High | 6.2 |

Detailed Protocol for Metabolic Stability Assay (HLM):

Reagent Preparation:

- Prepare 1 mg/mL HLM solution in 100 mM potassium phosphate buffer (pH 7.4).

- Prepare a 1 mM working solution of the test compound in DMSO (final DMSO ≤0.1%).

- Prepare 10 mM NADPH cofactor solution in buffer.

Incubation:

- In a 96-well plate, add 449.5 µL of HLM solution.

- Add 0.5 µL of test compound working solution (final concentration: 1 µM).

- Pre-incubate for 5 minutes at 37°C.

- Initiate reaction by adding 50 µL of NADPH solution (final volume: 500 µL; final NADPH: 1 mM). For negative controls, use buffer without NADPH.

Quenching and Analysis:

- At time points (0, 5, 15, 30, 45 min), withdraw 50 µL aliquot and quench with 100 µL of ice-cold acetonitrile containing internal standard.

- Centrifuge at 4000xg for 15 min to precipitate proteins.

- Analyze supernatant using LC-MS/MS to determine parent compound remaining.

- Calculate intrinsic clearance (Clint) from the first-order decay constant.
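
The Clint calculation can be sketched as follows, assuming first-order loss of parent compound: the elimination rate constant k is the negative slope of ln(% remaining) against time, the in vitro half-life is ln(2)/k, and Clint (µL/min/mg) is k multiplied by the incubation volume per mg of microsomal protein. The function names are hypothetical, the time course is synthetic, and the protein concentration must be set to the actual final value in the incubation.

```python
import math


def decay_constant(times_min, pct_remaining):
    """Least-squares slope of ln(% remaining) vs time; returns k in 1/min."""
    y = [math.log(p) for p in pct_remaining]
    n = len(times_min)
    t_bar = sum(times_min) / n
    y_bar = sum(y) / n
    sxy = sum((t - t_bar) * (v - y_bar) for t, v in zip(times_min, y))
    sxx = sum((t - t_bar) ** 2 for t in times_min)
    return -sxy / sxx


def half_life_min(k):
    return math.log(2) / k


def clint_ul_min_mg(k, protein_mg_per_ml):
    """k (1/min) * 1000 uL/mL / protein (mg/mL) -> uL/min/mg protein."""
    return k * 1000.0 / protein_mg_per_ml


# Synthetic time course generated with k = 0.05 1/min (not measured data).
times = [0, 5, 15, 30, 45]
remaining = [100.0 * math.exp(-0.05 * t) for t in times]

k = decay_constant(times, remaining)
print(k, half_life_min(k), clint_ul_min_mg(k, protein_mg_per_ml=1.0))
```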

Visualization: QSAR-Guided Optimization Workflow

QSAR-Driven ADME Optimization Cycle

Application Note 2: Enhancing Passive Permeability in a CNS Program

Background: A potent neuropeptide receptor antagonist suffered from low predicted blood-brain barrier (BBB) penetration due to poor passive permeability (PAMPA) and high P-glycoprotein (P-gp) efflux.

Key Data & Results: Table 2: Optimization of Permeability and Efflux Properties

| Compound | Modification | PAMPA Papp (×10⁻⁶ cm/s) | Predicted LogPS | Efflux Ratio (MDR1-MDCKII) | Brain/Plasma Ratio (Mouse) |
|---|---|---|---|---|---|
| Parent | - | 2.1 | -2.8 | 12.5 | 0.05 |
| Opt-3 | Reduce HBD | 8.5 | -2.1 | 8.2 | 0.18 |
| Opt-7 | Reduce PSA | 15.2 | -1.7 | 5.1 | 0.35 |
| Final | LogD adjust | 18.7 | -1.5 | 2.5 | 0.82 |

Detailed Protocol for Parallel Artificial Membrane Permeability Assay (PAMPA):

Plate Preparation:

- Coat the filter of a 96-well PAMPA plate with 4 µL of phospholipid solution (e.g., 2% Lecithin in dodecane).

- Allow the lipid to distribute for 1 hour at room temperature.

Compound Dosing:

- Prepare a 100 µM solution of test compound in PBS at pH 7.4 (Donor solution).

- Fill the donor wells with 200 µL of this solution.

- Fill the acceptor wells with 300 µL of PBS pH 7.4 buffer.

Assay Run:

- Carefully place the acceptor plate on the donor plate.

- Incubate the assembled plate for 4 hours at room temperature under gentle agitation.

Analysis:

- Disassemble the plate.

- Quantify compound concentration in both donor and acceptor compartments using UV spectrophotometry or LC-MS.

- Calculate apparent permeability (Papp) using the standard equation.
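The protocol does not spell out the equation. A widely used two-compartment form is Papp = -ln(1 - C_A/C_eq) × V_D·V_A / ((V_D + V_A)·A·t), with C_eq = (C_D·V_D + C_A·V_A) / (V_D + V_A). The sketch below implements that form; it assumes negligible membrane retention, and the 0.3 cm² filter area and the end-point concentrations are hypothetical values chosen only for illustration.

```python
import math

def pampa_papp(c_donor_end, c_acceptor_end, v_donor_ml, v_acceptor_ml,
               area_cm2, time_s):
    """Apparent permeability (cm/s) from end-point donor/acceptor concentrations.

    Concentrations may be in any common unit; volumes in mL (= cm^3).
    Assumes no compound is retained in the membrane.
    """
    total_volume = v_donor_ml + v_acceptor_ml
    # Concentration both compartments would reach at full equilibrium
    c_eq = (c_donor_end * v_donor_ml + c_acceptor_end * v_acceptor_ml) / total_volume
    if c_acceptor_end >= c_eq:
        raise ValueError("acceptor concentration at or above equilibrium")
    geometry = (v_donor_ml * v_acceptor_ml) / (total_volume * area_cm2 * time_s)
    return -math.log(1.0 - c_acceptor_end / c_eq) * geometry

# Volumes and incubation time from the protocol; concentrations (µM) and
# filter area are hypothetical.
papp = pampa_papp(c_donor_end=85.0, c_acceptor_end=10.0,
                  v_donor_ml=0.2, v_acceptor_ml=0.3,
                  area_cm2=0.3, time_s=4 * 3600)
print(f"Papp = {papp * 1e6:.2f} x 10^-6 cm/s")
```

If mass balance shows significant loss to the membrane or plastic, a retention-corrected variant of the equation is more appropriate than this simple form.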

The Scientist's Toolkit: Key Research Reagents

Table 3: Essential Materials for ADME Property Optimization Studies

| Item | Function/Benefit | Example Product/Type |
|---|---|---|
| Human Liver Microsomes (HLM) | Pooled in vitro system for Phase I metabolic stability studies. Essential for predicting hepatic clearance. | Xenotech HLM, Corning Gentest |
| MDR1-MDCKII Cells | Polarized canine kidney cells expressing human P-gp. Gold-standard for assessing transporter-mediated efflux. | ATCC CRL-3247 |
| PAMPA Plate | High-throughput tool for assessing passive transcellular permeability independent of active transport. | Corning Gentest, pION |
| Cryopreserved Hepatocytes | More complete in vitro system (Phase I & II metabolism) for advanced clearance and metabolite ID studies. | BioIVT, Lonza |
| Simulated Intestinal Fluid (FaSSIF/FeSSIF) | Biorelevant media for predicting solubility and dissolution in the GI tract. | Biorelevant.com media |
| LC-MS/MS System | Quantitative analysis of parent drug depletion or metabolite formation in biological matrices. | Sciex Triple Quad, Agilent 6495C |

Visualization: Key ADME Property Interplay for CNS Drugs

[Figure: Molecular Drivers of Key ADME Properties]

Within the broader thesis on Quantitative Structure-Activity Relationship (QSAR) models for Absorption, Distribution, Metabolism, and Excretion (ADME) property prediction, traditional descriptor-based methods are increasingly augmented by deep learning architectures that directly learn from molecular structure. Graph Neural Networks (GNNs) and Transformer models represent two dominant, complementary paradigms. GNNs natively operate on molecular graphs, where atoms are nodes and bonds are edges, to learn topological representations. Transformers, adapted from natural language processing, process linearized molecular representations (e.g., SMILES, SELFIES) to capture long-range dependencies and contextual patterns. This document provides application notes and detailed protocols for implementing these models in a molecular property prediction pipeline, specifically focused on ADME endpoints.
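To make the graph view concrete, the toy sketch below encodes ethanol (SMILES "CCO", heavy atoms only) as nodes and edges and runs one round of neighbor aggregation followed by a mean readout. It is deliberately simplified: real GNNs use learned weight matrices, richer atom and bond features, and nonlinearities, and the single atomic-number feature and the function names here are illustrative assumptions.

```python
# Heavy-atom graph of ethanol: atoms are nodes, bonds are undirected edges.
atoms = [6.0, 6.0, 8.0]        # one feature per atom: atomic number (C, C, O)
bonds = [(0, 1), (1, 2)]       # C-C and C-O

def build_adjacency(n_atoms, bonds):
    """Neighbor list for each atom index."""
    adj = {i: [] for i in range(n_atoms)}
    for a, b in bonds:
        adj[a].append(b)
        adj[b].append(a)
    return adj

def message_pass(features, adj):
    """One step: each atom adds the sum of its neighbors' features to its own."""
    return [features[v] + sum(features[u] for u in adj[v])
            for v in range(len(features))]

def mean_readout(features):
    """Collapse per-atom features into one molecule-level value."""
    return sum(features) / len(features)

adjacency = build_adjacency(len(atoms), bonds)
updated = message_pass(atoms, adjacency)
molecule_embedding = mean_readout(updated)
print(updated, molecule_embedding)
```

After one step the central carbon already carries information about both neighbors, which is how stacked message-passing layers let each atom "see" a progressively larger substructure.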

Current State: Performance Benchmarking

Recent benchmarks (2023-2024) on key ADME-relevant datasets compare the performance of GNNs, Transformers, and hybrid models. Key metrics are Root Mean Square Error (RMSE) and Mean Absolute Error (MAE) for regression tasks, and Area Under the Receiver Operating Characteristic Curve (ROC-AUC) for classification tasks.

Table 1: Benchmark Performance on ADME-Relevant Datasets

| Model Architecture | Dataset (Task) | Key Metric | Performance | Reference/Note |
|---|---|---|---|---|
| Attentive FP (GNN) | ClinTox (Classification) | ROC-AUC | 0.942 | Message-passing GNN with graph attention mechanism. |
| GROVER (Graph Transformer) | BBBP (Classification) | ROC-AUC | 0.931 | Pre-trained on about 10M unlabeled molecules with self-supervised node-, edge-, and graph-level objectives on molecular graphs. |
| MolFormer (Transformer) | ESOL (Regression) | RMSE | 0.58 log mol/L | Large-scale SMILES language model with rotary position embeddings. |
| D-MPNN (GNN) | FreeSolv (Regression) | RMSE | 0.90 kcal/mol | Directed message-passing neural network, robust on small data. |
| Hybrid (GNN+Transformer) | Lipophilicity (Regression) | RMSE | 0.49 log units | Combines graph features from GNN with sequential context from Transformer. |
| ChemBERTa-2 (Transformer) | HIV (Classification) | ROC-AUC | 0.816 | SMILES-based, pre-trained with masked language modeling. |

Detailed Experimental Protocols

Protocol A: Training a GNN for Aqueous Solubility Prediction (Regression)

Objective: Predict logS (ESOL dataset) using a Directed Message Passing Neural Network (D-MPNN).

Materials & Software: Python 3.9+, PyTorch 1.13+, DeepChem 2.7, RDKit 2022.09, CUDA 11.6 (optional for GPU), pandas, scikit-learn.

Procedure:

Data Preparation:

- Download the ESOL dataset (Delaney) from MoleculeNet.

- Standardize molecules using RDKit (neutralize charges, perceive aromaticity, remove salts).

- Split data into training/validation/test sets (80%/10%/10%) using scaffold splitting for realistic assessment.

- Featurize molecules into graph representations: nodes (atoms) are featurized with atomic number, degree, hybridization, etc.; edges (bonds) are featurized with bond type, conjugation, stereochemistry.
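The scaffold-splitting step can be sketched without any cheminformatics dependency once each molecule has a scaffold string. The function below is a minimal illustration: it assumes the scaffolds were computed beforehand (for example as Bemis-Murcko scaffold SMILES with RDKit), and the greedy largest-group-first assignment and the toy scaffold labels are assumptions, not the exact procedure of any specific library.

```python
from collections import defaultdict

def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Split molecule indices so that no scaffold spans two subsets.

    scaffolds: list of scaffold strings, index-aligned with the dataset.
    Returns (train_idx, valid_idx, test_idx).
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Largest scaffold families first; ties broken by first index for determinism
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n = len(scaffolds)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test

# Toy example: ten molecules from four hypothetical scaffold families
toy_scaffolds = ["A"] * 5 + ["B"] * 3 + ["C"] + ["D"]
train_idx, valid_idx, test_idx = scaffold_split(toy_scaffolds)
print(train_idx, valid_idx, test_idx)
```

Because rare scaffolds end up in the validation and test sets, this split typically yields higher (more realistic) test errors than a random split of the same data.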

Model Configuration:

- Implement a D-MPNN architecture with 3 message-passing steps (hidden size=300).

- Follow the message-passing phase with a global mean pooling readout function.

- Use a 3-layer feed-forward network (FFN: 300->100->50->1) as the prediction head.

- Apply ReLU activation and 20% dropout between FFN layers.

Training:

- Loss Function: Mean Squared Error (MSE).

- Optimizer: AdamW (learning rate=0.001, weight decay=0.01).

- Scheduler: ReduceLROnPlateau (factor=0.5, patience=10 epochs).

- Batch Size: 32.

- Epochs: 200, with early stopping based on validation loss (patience=30).

- Validate after each epoch.
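The interaction between the learning-rate schedule and early stopping can be illustrated framework-free. The class below is a simplified sketch that mimics the behavior described above (halve the learning rate once the validation loss has failed to improve for more than 10 epochs, stop after 30 epochs without improvement); it is not PyTorch's `ReduceLROnPlateau`, and the class name and the simulated loss curve are hypothetical.

```python
class PlateauTracker:
    """Tracks validation loss to decay the learning rate and stop early."""

    def __init__(self, lr=1e-3, factor=0.5, lr_patience=10, stop_patience=30):
        self.lr = lr
        self.factor = factor
        self.lr_patience = lr_patience
        self.stop_patience = stop_patience
        self.best = float("inf")
        self.bad_epochs = 0       # epochs since the best validation loss
        self.lr_bad_epochs = 0    # epochs since best loss or last LR decay

    def step(self, val_loss):
        """Update state with one epoch's validation loss; True means stop."""
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
            self.lr_bad_epochs = 0
        else:
            self.bad_epochs += 1
            self.lr_bad_epochs += 1
            if self.lr_bad_epochs > self.lr_patience:
                self.lr *= self.factor
                self.lr_bad_epochs = 0
        return self.bad_epochs >= self.stop_patience

# Simulated validation losses: five improving epochs, then a plateau
losses = [1.0, 0.8, 0.7, 0.65, 0.6] + [0.6] * 195

tracker = PlateauTracker()
stopped_epoch = None
for epoch, loss in enumerate(losses, start=1):
    if tracker.step(loss):
        stopped_epoch = epoch
        break
print(stopped_epoch, tracker.lr)
```

In a real training loop, the model weights from the best-validation epoch would also be checkpointed and restored before test-set evaluation.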

Evaluation:

- Predict on the held-out test set.

- Report RMSE, MAE, and R² values.

- Perform uncertainty estimation via deep ensembles (train 5 models with different random seeds).
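The evaluation metrics and the deep-ensemble summary can be sketched in plain Python. The example assumes each of the five ensemble members has already produced test-set predictions; all numeric values below are made up for illustration and are not results from the ESOL dataset.

```python
import math
from statistics import mean, stdev

def regression_metrics(y_true, y_pred):
    """Return (RMSE, MAE, R^2) for paired true and predicted values."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    y_bar = sum(y_true) / n
    ss_tot = sum((t - y_bar) ** 2 for t in y_true)
    rmse = math.sqrt(ss_res / n)
    mae = sum(abs(e) for e in errors) / n
    r2 = 1.0 - ss_res / ss_tot
    return rmse, mae, r2

def ensemble_summary(member_preds):
    """Per-molecule mean prediction and spread across ensemble members."""
    per_molecule = list(zip(*member_preds))
    return [mean(p) for p in per_molecule], [stdev(p) for p in per_molecule]

# Hypothetical logS values for three test molecules and five ensemble members
y_true = [-2.0, -3.5, -1.0]
member_preds = [
    [-2.6, -3.4, -1.0],
    [-1.9, -3.6, -1.2],
    [-2.0, -3.5, -0.8],
    [-2.2, -3.3, -1.1],
    [-1.8, -3.7, -0.9],
]

mean_pred, uncertainty = ensemble_summary(member_preds)
rmse, mae, r2 = regression_metrics(y_true, mean_pred)
print(f"RMSE={rmse:.3f} MAE={mae:.3f} R2={r2:.3f}")
print("per-molecule std:", [round(u, 3) for u in uncertainty])
```

The per-molecule standard deviation is only a relative indicator of confidence; whether it tracks actual error should be checked on held-out data before it is used to triage predictions.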

Protocol B: Fine-Tuning a Transformer for CYP450 Inhibition (Classification)

Objective: Predict binary inhibition of Cytochrome P450 3A4 (CYP3A4) using a pre-trained SMILES Transformer.

Materials & Software: Python 3.9+, PyTorch, HuggingFace Transformers 4.28+, ChemBERTa-2 pre-trained weights, RDKit, imbalanced-learn.

Procedure:

Data Curation:

- Curate data from public sources (e.g., ChEMBL, PubChem BioAssay). Filter for human CYP3A4 inhibition assays with clear inhibition thresholds (e.g., IC50 < 10 µM = positive).

- Apply stringent data cleaning: remove inorganic/organometallic compounds, standardize to canonical SMILES, and deduplicate.

- Address class imbalance on the training split only. SMOTE-ENN from the imbalanced-learn library operates on numeric feature vectors, so it applies to descriptor or fingerprint inputs; for raw SMILES input, use random over-sampling or a class-weighted loss instead.
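The labeling and deduplication steps can be sketched as below. This is one possible curation policy, not the only one: dropping compounds whose replicate measurements fall on both sides of the threshold is an assumption, as are the function names and the toy records. The positive-class weight at the end is a simple alternative way to handle imbalance that can be passed to a weighted binary cross-entropy loss.

```python
from collections import defaultdict

def label_inhibitors(records, threshold_um=10.0):
    """Collapse replicate IC50 values (µM) per canonical SMILES into one label.

    records: iterable of (canonical_smiles, ic50_um) pairs.
    Compounds with conflicting replicate calls are discarded (assumption).
    """
    by_smiles = defaultdict(list)
    for smiles, ic50 in records:
        by_smiles[smiles].append(ic50)

    labeled = {}
    for smiles, values in by_smiles.items():
        calls = {value < threshold_um for value in values}
        if len(calls) > 1:
            continue  # replicates disagree about inhibition: drop the compound
        labeled[smiles] = int(calls.pop())
    return labeled

def positive_class_weight(labels):
    """Ratio of negatives to positives, usable as a loss weight for positives."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    return n_neg / n_pos

# Toy records with hypothetical IC50 values
records = [
    ("CCO", 50.0), ("CCO", 45.0),      # consistent non-inhibitor
    ("c1ccccc1", 2.0),                 # inhibitor
    ("CCN", 8.0), ("CCN", 30.0),       # conflicting replicates
    ("CCC", 100.0),
    ("CCCl", 25.0),
]
labels = label_inhibitors(records)
weight = positive_class_weight(list(labels.values()))
print(labels, weight)
```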

Tokenization & Input Formatting:

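- Use the tokenizer that ships with the pre-trained checkpoint (e.g., loaded through AutoTokenizer.from_pretrained in HuggingFace Transformers).

- Tokenize SMILES strings, adding the model's special start and end tokens (<s> and </s> for RoBERTa-style tokenizers such as ChemBERTa's, the counterparts of BERT's [CLS] and [SEP]).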

- Set maximum sequence length to 512, applying truncation/padding as needed.

Model Setup & Fine-Tuning:

- Load the pre-trained ChemBERTa-2 model.

- Replace the top classification head with a new linear layer that maps the encoder's hidden size to 1 output for binary classification (768 for a base-size encoder; read the actual value from the model config).

- Employ gradual unfreezing: first train only the classification head and the last two Transformer layers, then unfreeze all layers after 5 epochs.

- Loss Function: Binary Cross-Entropy with logits loss.

- Optimizer: AdamW (lr=2e-5, epsilon=1e-8).

- Batch Size: 16 (accumulate gradients if necessary).

- Epochs: 15, with evaluation on a 15% validation set after each epoch.

Evaluation:

- Calculate ROC-AUC, Precision-Recall AUC, F1-score, and Matthews Correlation Coefficient (MCC) on the test set.

- Perform 5-fold cross-validation to assess robustness.

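- Use SHAP (SHapley Additive exPlanations) or an analysis of attention weights to interpret which substructures influence predictions. These are distinct techniques: SHAP estimates Shapley-value contributions of input tokens, whereas attention weights are read directly from the model.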
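Two of the listed metrics are easy to compute by hand, which helps when checking library output. The sketch below computes ROC-AUC as the probability that a randomly chosen positive is scored above a randomly chosen negative, and MCC from the confusion matrix; the labels, scores, and the 0.5 decision threshold are hypothetical.

```python
import math

def roc_auc(y_true, scores):
    """ROC-AUC via pairwise comparison of positive and negative scores."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def matthews_corrcoef(y_true, y_pred):
    """MCC from true/false positive/negative counts; 0.0 if undefined."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0

# Hypothetical test-set labels (1 = inhibitor) and predicted probabilities
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.3, 0.7, 0.2, 0.1, 0.4, 0.05]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

auc = roc_auc(y_true, scores)
mcc = matthews_corrcoef(y_true, y_pred)
print(f"ROC-AUC={auc:.3f} MCC={mcc:.3f}")
```

ROC-AUC is threshold-free, while MCC depends on the chosen cut-off, so the two can diverge noticeably on imbalanced CYP inhibition data.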

Visualization of Model Architectures and Workflows

[Figure: GNN-Based ADME Property Prediction Pipeline]

[Figure: Transformer Encoder for SMILES Sequence Processing]

[Figure: Hybrid GNN-Transformer Model Architecture]

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Computational Reagents for GNN/Transformer ADME Modeling

| Item/Category | Example/Product | Function & Brief Explanation |
|---|---|---|
| Deep Learning Framework | PyTorch (v1.13+), TensorFlow (v2.12+) | Core library for building, training, and deploying neural network models. PyTorch is preferred for dynamic graphs in research. |
| Molecular Machine Learning Library | DeepChem, DGL-LifeSci, PyTorch Geometric (PyG) | Provides pre-built layers for GNNs (e.g., MPNN, GAT), molecular datasets, and featurization utilities. |
| Transformer Library | HuggingFace Transformers | Loads and fine-tunes pre-trained chemical language models published on the HuggingFace Hub (e.g., ChemBERTa, MolFormer) for transfer learning. GROVER is distributed through its own code repository. |
| Chemistry Toolkit | RDKit (Open-source) | Fundamental for cheminformatics: SMILES parsing, molecular graph generation, descriptor calculation, and standardization. |
| Data Source | MoleculeNet, ChEMBL, PubChem BioAssay | Curated benchmarks (MoleculeNet) and large-scale experimental bioactivity databases for training and validation. |
| Hyperparameter Optimization | Optuna, Ray Tune | Automates the search for optimal model parameters (e.g., learning rate, layer depth) to maximize predictive performance. |
| Model Interpretation | Captum (for PyTorch), SHAP | Captum provides gradient- and perturbation-based attribution for PyTorch models; SHAP estimates Shapley-value feature contributions. Both help identify substructures that drive predictions. |
| High-Performance Compute | NVIDIA A100 GPU, Google Colab Pro | Accelerates model training, especially for large Transformers or ensemble methods. Cloud-based options provide accessibility. |

Overcoming QSAR Challenges: Model Pitfalls, Applicability Domain, and Performance Enhancement

In the development of Quantitative Structure-Activity Relationship (QSAR) models for predicting Absorption, Distribution, Metabolism, and Excretion (ADME) properties, three fundamental challenges consistently arise: overfitting, underfitting, and the curse of dimensionality. These pitfalls compromise model generalizability, predictive accuracy, and ultimately, the translational value of computational findings in drug development. This document provides detailed application notes and protocols to identify, diagnose, and mitigate these issues within the specific context of ADME-QSAR research.

Table 1: Impact of Model Complexity and Dimensionality on QSAR Model Performance

| Metric / Scenario | Low Complexity Model (e.g., Linear, few descriptors) | High Complexity Model (e.g., SVM/RF, many descriptors) | Very High Dimensional Space (p >> n) |
|---|---|---|---|
| Training Error | Often High (Bias) | Often Very Low (<0.1) | Can be Near Zero |
| Validation/Test Error | High (Underfitting) | High (Overfitting) | Extremely High & Unstable |
| Model Variance | Low | High | Very High |
| Typical Cause | Insufficient model capacity, overly aggressive feature pruning | Excessive parameters, noise fitting | Descriptors >> Compounds |
| Mitigation Strategy | Add relevant features, use a more flexible algorithm | Regularization, feature selection, more data | Dimensionality reduction (e.g., PCA, PLS), rigorous feature selection |

Table 2: Recommended Benchmark Values for ADME-QSAR Model Assessment

| Assessment Metric | Acceptable Range | Optimal Range | Warning Sign |
|---|---|---|---|
| Δ (Train - Test R²) | < 0.2 | < 0.1 | > 0.3 |
| Root Mean Square Error (RMSE) Test | Context-dependent (e.g., < 0.5 log units for logP) | As low as possible, aligned with experimental error | Test RMSE > 2*Train RMSE |
| Y-Randomization (q²) | Should be negative or near zero | Significantly negative | Positive q² |
| Applicability Domain Coverage | > 80% of intended prediction set | > 90% | < 70% |
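The warning-sign column of the table above translates directly into an automated check. The helper below is a minimal sketch that applies only those thresholds; the function name, argument names, and example inputs are hypothetical, and the thresholds should be tuned to the endpoint and its experimental error.

```python
def diagnose_fit(r2_train, r2_test, rmse_train, rmse_test,
                 q2_y_randomized, ad_coverage):
    """Return the list of warning signs triggered by a model's statistics.

    ad_coverage is the fraction (0-1) of the intended prediction set that
    falls inside the applicability domain.
    """
    flags = []
    if r2_train - r2_test > 0.3:
        flags.append("train-test R2 gap > 0.3 (likely overfitting)")
    if rmse_test > 2 * rmse_train:
        flags.append("test RMSE more than twice the training RMSE")
    if q2_y_randomized > 0:
        flags.append("positive q2 after Y-randomization (chance correlation)")
    if ad_coverage < 0.70:
        flags.append("applicability domain covers < 70% of prediction set")
    return flags

# Hypothetical statistics for an overfit model and a well-behaved one
overfit_flags = diagnose_fit(0.95, 0.55, 0.20, 0.60, 0.10, 0.65)
healthy_flags = diagnose_fit(0.85, 0.80, 0.40, 0.45, -0.10, 0.92)
print(overfit_flags)
print(healthy_flags)
```

An empty list means no warning sign was triggered; it does not by itself establish that the model falls in the acceptable or optimal ranges.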

Experimental Protocols

Protocol 3.1: Systematic Workflow for Diagnosing Overfitting & Underfitting in ADME-QSAR